AMD Zen Microarchitecture Part 2: Extracting Instruction-Level Parallelism

by Ian Cutress on August 23, 2016 8:45 PM EST - Posted in

- CPUs

- AMD

- x86

- Zen

- Microarchitecture

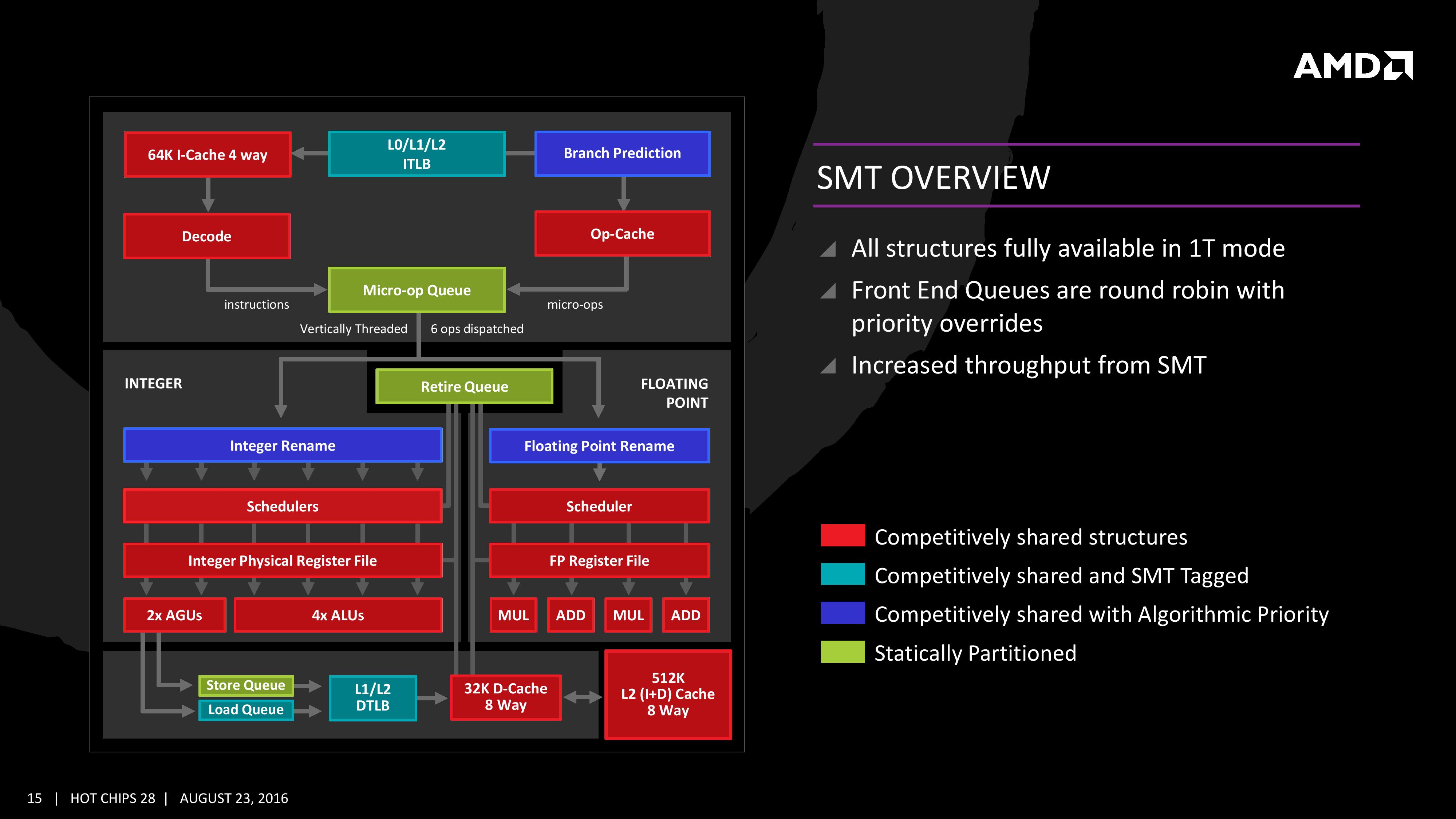

Simultaneous MultiThreading (SMT)

Zen will be AMD’s first foray into a true simultaneous multithreading design, and certain parts of the core behave differently depending on how they are implemented. There are many ways to manage threads, particularly to avoid stalls in which one thread blocks another and the system ends up hanging or crashing. The drivers that communicate with the OS also have to distinguish between placing a thread on a fresh core and placing it on a core that is already occupied. For maximum throughput, four threads should be spread across four cores; for efficiency, where speed isn’t a factor, packing them onto two cores and power gating or clock gating the other half of the cores in a CCX may be the better choice.
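
As a toy illustration of that placement trade-off, the sketch below assumes one CCX of four cores with two SMT threads each. The function names and the "throughput"/"efficiency" policy labels are hypothetical and do not correspond to any AMD driver or OS scheduler interface.

```python
# Hypothetical model: one CCX of four cores, each exposing two SMT threads.
CORES_PER_CCX = 4
THREADS_PER_CORE = 2

def place_threads(n_threads, policy):
    """Return per-core thread counts for one CCX under a placement policy."""
    capacity = CORES_PER_CCX * THREADS_PER_CORE
    if not 0 <= n_threads <= capacity:
        raise ValueError("thread count exceeds CCX capacity")
    load = [0] * CORES_PER_CCX
    if policy == "throughput":
        # Round-robin across cores: a core only receives a second (SMT)
        # thread after every core already has one.
        for i in range(n_threads):
            load[i % CORES_PER_CCX] += 1
    elif policy == "efficiency":
        # Fill both SMT slots of a core before touching the next core.
        for i in range(n_threads):
            load[i // THREADS_PER_CORE] += 1
    else:
        raise ValueError(f"unknown policy: {policy}")
    return load

def gateable_cores(load):
    """Idle cores are candidates for power or clock gating."""
    return sum(1 for n in load if n == 0)

print(place_threads(4, "throughput"))  # [1, 1, 1, 1]
print(place_threads(4, "efficiency"))  # [2, 2, 0, 0]
```

With four threads, the throughput policy leaves no core idle, while the efficiency policy frees two cores that could be gated.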

There are a number of ways that AMD handles thread management. The basic way is time slicing, giving each thread an equal share of the pie. This is not always the best policy, especially when one thread dominates performance, one thread creates a lot of stalls, or one thread is latency critical. In some methodologies the importance of a thread can be tagged or determined, and that is what Zen does here, although some structures in the core have to fall back to a basic model.
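
A minimal model of plain time slicing, assuming one shared slot per cycle handed out in strict rotation. The handling of stalled threads is an illustrative assumption: a slot that lands on a stalled thread is simply wasted, which is the weakness described above.

```python
from itertools import cycle

def time_slice(threads, cycles, stalled=frozenset()):
    """Hand out one slot per cycle in strict rotation.

    Returns (slots used per thread, slots wasted). A slot that lands on a
    stalled thread is not reassigned to anyone else.
    """
    used = {t: 0 for t in threads}
    wasted = 0
    order = cycle(threads)
    for _ in range(cycles):
        t = next(order)
        if t in stalled:
            wasted += 1
        else:
            used[t] += 1
    return used, wasted

print(time_slice(["T0", "T1"], 10))                  # ({'T0': 5, 'T1': 5}, 0)
print(time_slice(["T0", "T1"], 10, stalled={"T1"}))  # ({'T0': 5, 'T1': 0}, 5)
```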

For each thread, AMD performs internal analysis on the data stream to see which thread has algorithmic priority. This means that certain threads can be identified as needing more resources, or that a branch miss can be prioritized to avoid long stall delays. The elements in blue (Branch Prediction, INT/FP Rename) operate on this methodology.
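
One way to picture algorithmic priority is an arbiter that inspects per-thread state every cycle. AMD has not described its heuristic, so the `ThreadState` fields and the ordering below (branch-miss recovery first, then the deepest backlog) are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class ThreadState:
    name: str
    pending_mispredict: bool  # a branch miss is waiting to be resolved
    queued_uops: int          # micro-ops waiting on the shared stage

def pick_dynamic(threads):
    """Grant a shared stage (e.g. rename) to one thread this cycle.

    Hypothetical ranking: a thread recovering from a branch miss goes first,
    otherwise the thread with the larger backlog. max() keeps the earliest
    thread on a tie.
    """
    return max(threads, key=lambda t: (t.pending_mispredict, t.queued_uops)).name

t0 = ThreadState("T0", pending_mispredict=False, queued_uops=12)
t1 = ThreadState("T1", pending_mispredict=True, queued_uops=3)
print(pick_dynamic([t0, t1]))  # T1
```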

A thread can also be tagged with higher priority. This is important for latency-sensitive operations, such as touch-screen input or other elements that need an immediate response to the user. The Translation Lookaside Buffers work this way, prioritizing lookups of recent virtual memory address translations. The Load Queue is enabled the same way: latency-sensitive workloads typically need their data as soon as possible, which makes the load queue a natural place to apply the priority tag.
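
A tagged-priority arbiter differs in that the priority comes from outside rather than from observed behavior. In this sketch, assumed for illustration only, a thread in a hypothetical `high_priority` set always wins a load-queue slot, and untagged threads alternate so neither starves.

```python
def pick_tagged(requests, high_priority, last_winner=None):
    """Choose which thread gets the next load-queue slot.

    requests: threads with a pending load this cycle, in fixed order.
    high_priority: threads tagged latency sensitive (hypothetical tag set).
    """
    if not requests:
        return None
    for t in requests:
        if t in high_priority:
            return t  # a tagged thread wins outright
    for t in requests:
        if t != last_winner:
            return t  # no tags present: alternate so neither thread starves
    return requests[0]  # only the previous winner is asking

print(pick_tagged(["T0", "T1"], high_priority={"T1"}))                   # T1
print(pick_tagged(["T0", "T1"], high_priority=set(), last_winner="T0"))  # T1
```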

Certain parts of the core are statically partitioned, giving each thread an equal share. This applies mostly to structures that are typically processed in order, such as anything coming out of the micro-op queue, the retire queue and the store queue.
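
Static partitioning can be modeled as a fixed per-thread quota. The 8-entry size is an arbitrary, hypothetical figure chosen to keep the numbers small; the point is that a busy thread is capped at half even while the other half sits idle.

```python
class StaticPartition:
    """A structure split evenly between SMT threads (e.g. a retire queue).

    Each thread owns a fixed share; an idle thread's share cannot be borrowed.
    """
    def __init__(self, entries, threads=2):
        self.share = entries // threads
        self.used = [0] * threads

    def allocate(self, tid):
        """Try to take one entry for thread tid; False if its share is full."""
        if self.used[tid] < self.share:
            self.used[tid] += 1
            return True
        return False

q = StaticPartition(entries=8)  # hypothetical size
granted = [q.allocate(0) for _ in range(6)]
print(granted.count(True), q.used)  # 4 [4, 0]
```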

The rest of the core is competitively shared: each cycle, a thread that demands more resources can claim them, provided there is space available.
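
For contrast, here is a competitively shared pool with a hypothetical 8-entry size. Serving requests in thread order each cycle is a simplifying assumption standing in for "whoever gets there first".

```python
class CompetitivePool:
    """A structure shared by all threads with no per-thread quota."""
    def __init__(self, entries, threads=2):
        self.free = entries
        self.used = [0] * threads

    def step(self, demands):
        """One cycle: demands[tid] entries requested, served in tid order."""
        for tid, want in enumerate(demands):
            got = min(want, self.free)
            self.free -= got
            self.used[tid] += got
        return list(self.used)

pool = CompetitivePool(entries=8)  # hypothetical size
print(pool.step([6, 0]))  # [6, 0]
print(pool.step([0, 4]))  # [6, 2]
```

Here thread 0 takes six of the eight entries in the first cycle, leaving only two for thread 1; under an even static split it would have been capped at four.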

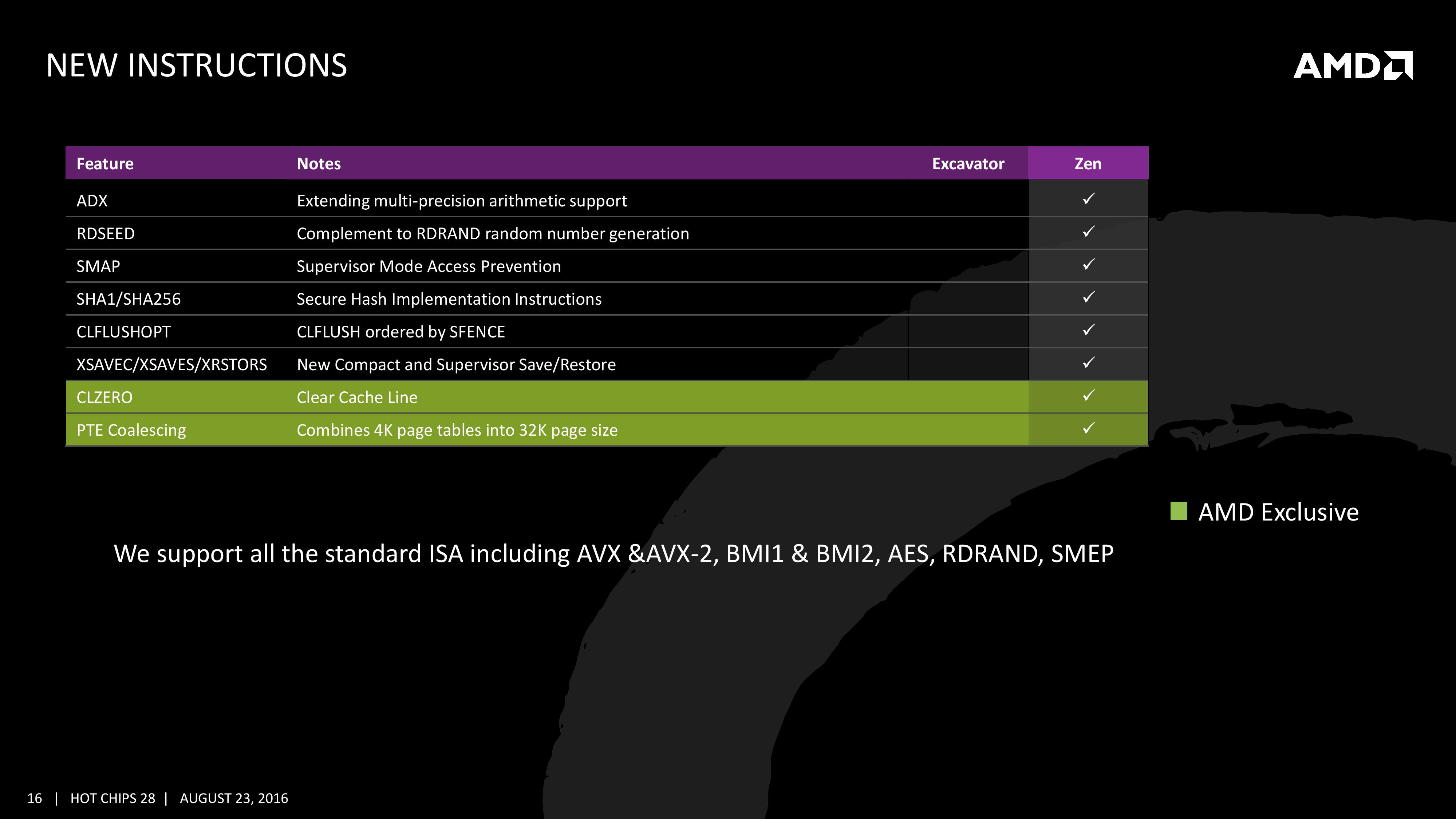

New Instructions

AMD has a couple of tricks up its sleeve for Zen. Along with the standard ISA, there are a few new custom instructions that are AMD-only.

Some of the new instructions correspond to ones that Intel already supports, such as RDSEED for random number generation, or SHA1/SHA256 for cryptography. The two AMD-specific additions are CLZERO and PTE Coalescing.

The first, CLZERO, clears a cache line and is aimed more at the data center and HPC crowds. It allows a thread to clear a poisoned cache line atomically (in one cycle) in preparation for zero-initialized data structures. It also provides a level of repeatability when the cache line is filled with expected data. CLZERO support will be indicated by a CPUID bit.
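
Software has to check for CLZERO before using it. On Linux the kernel exposes the relevant CPUID bit (Fn8000_0008 EBX bit 0) as the `clzero` flag in `/proc/cpuinfo`. The sketch below parses that text rather than executing CPUID directly, and assumes a 64-byte cache line when computing which line the instruction would clear. The helper names are hypothetical.

```python
CACHE_LINE = 64  # bytes; assumed line size

def has_clzero(cpuinfo_text):
    """True if a Linux /proc/cpuinfo dump lists the 'clzero' flag."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            return "clzero" in line.split(":", 1)[1].split()
    return False

def line_base(addr):
    """Start of the cache line containing addr; CLZERO clears the whole line."""
    return addr & ~(CACHE_LINE - 1)

sample = "processor\t: 0\nflags\t\t: fpu sse2 rdseed clzero\n"
print(has_clzero(sample))      # True
print(hex(line_base(0x1007)))  # 0x1000
```

On a Linux machine, `has_clzero(open("/proc/cpuinfo").read())` runs the same check against the live system.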

PTE (Page Table Entry) Coalescing is the ability to combine the entries for small 4K pages into a single 32K page entry, and it is software-transparent. This is useful for reducing the number of entries in the TLBs and the queues, but it requires the data to meet certain criteria for use within the branch predictor.
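
The coalescing condition can be sketched as a check over eight consecutive 4K mappings (32K / 4K = 8). AMD has not published the exact rules here, so the criteria in this sketch (virtual and physical contiguity, 32K alignment on both sides, identical attributes) are assumptions about what such a scheme typically requires.

```python
PAGE_4K = 4 * 1024
PAGE_32K = 32 * 1024
GROUP = PAGE_32K // PAGE_4K  # eight 4K pages per 32K entry

def can_coalesce(ptes):
    """ptes: (virtual_addr, physical_addr, attrs) for consecutive 4K pages.

    Assumed criteria, not AMD's published rule set.
    """
    if len(ptes) != GROUP:
        return False
    v0, p0, a0 = ptes[0]
    if v0 % PAGE_32K or p0 % PAGE_32K:
        return False  # both runs must start on a 32K boundary
    for i, (v, p, a) in enumerate(ptes):
        if v != v0 + i * PAGE_4K or p != p0 + i * PAGE_4K or a != a0:
            return False  # gap, reordering, or mismatched attributes
    return True

def tlb_entries(ptes):
    """TLB entries needed for the region: 1 if coalesced, else one per page."""
    return 1 if can_coalesce(ptes) else len(ptes)

run = [(0x10000 + i * PAGE_4K, 0x200000 + i * PAGE_4K, "rw") for i in range(8)]
print(tlb_entries(run))  # 1
```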

106 Comments


CrazyElf - Tuesday, August 23, 2016

If they can really get a 40% improvement over Excavator, and I mean 40% on average, not on a few select benchmarks, then AMD has a serious chance of being a compelling option once again.

I'm hoping to see more improvements on Floating Point, which was comically bad in Bulldozer.

A big part of the problem is that we don't know how well Zen will clock or the power consumption. Still, this should be a major leap in performance overall. We'll have to wait for the launch day benchmarks to see the true story.

Another big concern is the platform. CPU performance is only part of the story. We need a good platform that can rival the Z170 and Intel HEDT platforms for this to be compelling on the desktop. For mobile, there will have to be good dual channel Zen APUs (Carrizo, as AnandTech noted, was heavily gimped by poor-quality OEM designs obsessed with cost cutting).

jabber - Wednesday, August 24, 2016

Yeah I don't think OEMs and others are that worried about supporting AMD. AMD have withered away so much that making AMD-CPU-capable gear must have become a very minor part of, say, the ASUS/Gigabyte/MSI revenue stream. Making AMD-based graphics cards is okay, but motherboards? Not so much.

teuast - Wednesday, August 24, 2016

I wouldn't speak so soon. Just this year MSI and Gigabyte (at least) have introduced new AM3+ boards with USB 3.1 and PCIe 3.0. Why, I'm not sure, but if they're doing that for something as old and deprecated as the FX chips, it would defy logic for Zen to come out and for them to only release a few token efforts.

I will say, if the CPUs are good but you're right about OEMs not being concerned with support, then the first OEM to say "hey, why don't we make some actually good AM4 boards?" is going to make an absolute killing.

h4rm0ny - Thursday, August 25, 2016 - link

Are you sure about the PCI-E v3 on AM3+ motherboards? I can find recent releases that have USB 3.1 and M.2, but none that support PCI-E v3. Can you link me or provide a model number? I didn't think 3rd generation PCI-E was possible on the Bulldozer line.

SKD007 - Thursday, August 25, 2016

SABERTOOTH 990FX/GEN3 R2.0

SKD007 - Thursday, August 25, 2016

https://www.asus.com/Motherboards/SABERTOOTH_990FX...

Outlander_04 - Thursday, August 25, 2016

A little misleading. The graphics PCI-E controller is built into an FX processor, so adding a PCI-E 3 standard slot to a motherboard will make no difference to actual bandwidth.

Not an issue though, since x16 PCI-E 2 has the same bandwidth as x8 PCI-E 3, and Intel boards with SLI/CrossFire ability running at x8/x8 do not choke any current graphics card.

h4rm0ny - Thursday, August 25, 2016 - link

What about PCI-E SSDs? Can I get full bandwidth on those? I agree about the graphics cards, but that's not so important to me. If I can get full PCI-E v3 x4 performance for an SSD then I'll probably buy this as a hold-over until Zen. Thanks for the link!

fanofanand - Friday, August 26, 2016

PCI-E 3.0 x4 should be the same as 2.0 x8. So long as you have a vacant x8 slot, it should theoretically work the same.

extide - Wednesday, September 7, 2016

I think they use a PLX chip and turn the 32 2.0 lanes from the FX chip into 16 3.0 lanes.