The 2TB Samsung 850 Pro & EVO SSD Review

by Kristian Vättö on July 23, 2015 10:00 AM EST

Performance Consistency

We've been looking at performance consistency since the Intel SSD DC S3700 review in late 2012 and it has become one of the cornerstones of our SSD reviews. Back in the day, many SSD vendors focused only on high peak performance, which unfortunately came at the cost of sustained performance. In other words, the drives would push high IOPS in certain synthetic scenarios to provide nice marketing numbers, but as soon as you pushed the drive for more than a few minutes you could easily run into hiccups caused by poor performance consistency.

Once we started exploring IO consistency, nearly all SSD manufacturers made a move to improve it. For the 2015 suite, I haven't made any significant changes to the methodology we use to test IO consistency. The biggest change is the move from VDBench to Iometer 1.1.0 as the benchmarking software, and I've also extended the test from 2000 seconds to a full hour to ensure that all drives hit steady-state during the test.

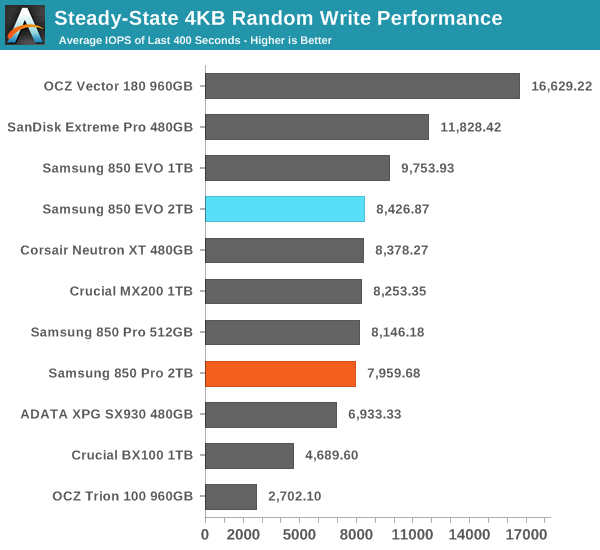

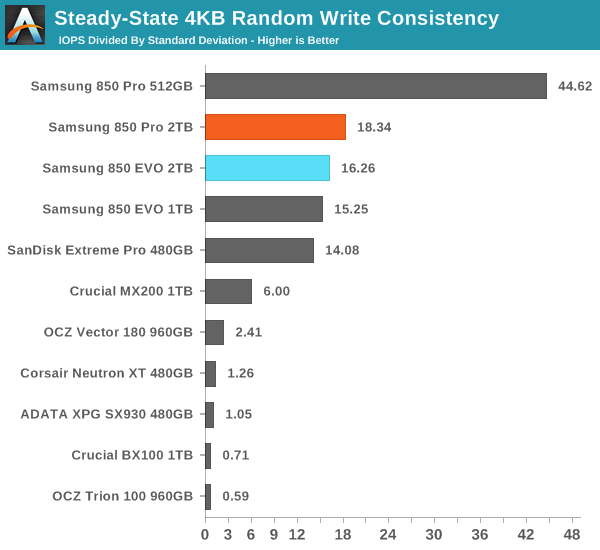

For better readability, I now provide bar graphs: the first shows the average IOPS over the last 400 seconds and the second shows the IOPS divided by standard deviation during the same period. Average IOPS provides a quick look at overall performance, but it can easily hide bad consistency, so looking at standard deviation is necessary for a complete picture.
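As a rough illustration of the two bar-graph metrics, here is a minimal Python sketch. It assumes the benchmark log has already been reduced to one IOPS sample per second, and that "IOPS divided by standard deviation" means the window average divided by the population standard deviation; the review does not spell out either detail. The function name `steady_state_metrics` and both traces are hypothetical and made up for the example, not taken from the test data.

```python
from statistics import mean, pstdev


def steady_state_metrics(iops_per_second, window=400):
    """Summarize the last `window` samples of a per-second IOPS trace.

    Returns (average IOPS, average IOPS / standard deviation).
    """
    if window <= 0 or len(iops_per_second) < window:
        raise ValueError("trace must contain at least `window` samples")
    tail = iops_per_second[-window:]
    avg = mean(tail)
    # Assumption: population standard deviation; the review does not say
    # whether the population or the sample form is used.
    sd = pstdev(tail)
    # A perfectly flat trace has zero deviation; report it as infinite consistency.
    consistency = avg / sd if sd > 0 else float("inf")
    return avg, consistency


# Made-up one-hour traces (3600 samples): a fast fresh-out-of-box phase
# followed by a steady state that averages 10,000 IOPS on both drives.
steady_drive = [80000] * 3200 + [9000, 11000] * 200
erratic_drive = [80000] * 3200 + [2000, 18000] * 200

for name, trace in (("steady", steady_drive), ("erratic", erratic_drive)):
    avg, consistency = steady_state_metrics(trace)
    print(f"{name}: avg={avg:.0f} IOPS, avg/stddev={consistency:.2f}")
```

Both made-up drives report the same 10,000 IOPS average over the final 400 seconds, but the ratio falls from 10 for the steady trace to 1.25 for the erratic one, which is the kind of difference the average alone would hide.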

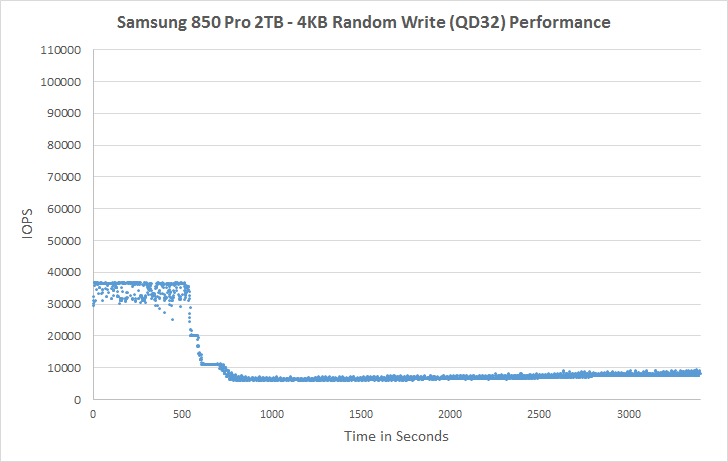

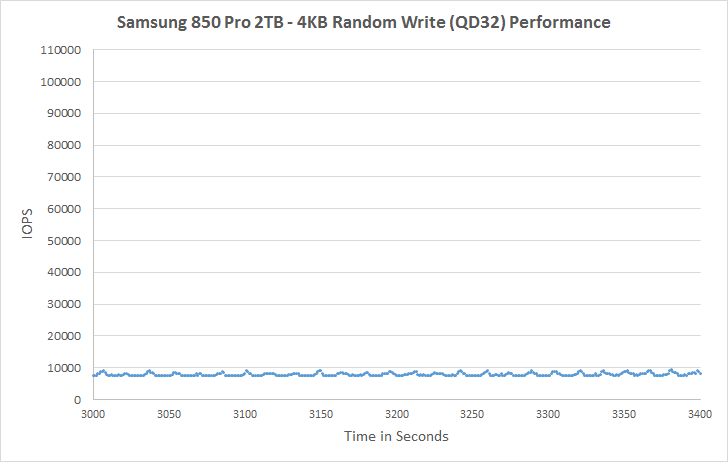

I'm still providing the same scatter graphs too, of course. However, I decided to dump the logarithmic graphs and go linear-only since logarithmic graphs aren't as accurate and can be hard to interpret for those who aren't familiar with them. I provide two graphs: one that covers the whole duration of the test and another that focuses on the last 400 seconds to give a closer look at steady-state performance. These results are for all drives 240GB and up.

In steady-state 4KB random write performance the EVO is actually slightly faster than the Pro, but given that the EVO employs more over-provisioning (12% vs 7%), that's not out of the ordinary. The 2TB EVO performs slightly lower than the 1TB model, so it seems that despite the upgraded DRAM controller, the controller itself may not be ideal for more than 1TB of NAND (internal SRAM caches and the like are the same as in the MEX controller). Nevertheless, the difference isn't substantial and in any case the Pro and EVO both boast excellent steady-state performance.

Both 2TB drives also have great consistency, although the 2TB Pro can't challenge the 512GB Pro that clearly leads the consistency graph. Given the same amount of raw processing power, managing less NAND is obviously easier because the more NAND there is, the more garbage collection calculations the controller has to process, which results in increased variation in performance.

[Scatter graphs: Default | 25% Over-Provisioning]

The behavior in steady-state is similar to other 850 Pro and EVO drives, which is hardly surprising given the underlying firmware similarities. One area to note, though, is the increased performance variation with additional over-provisioning (OP), whereas the 512GB Pro in particular delivers very consistent performance with 25% OP. In any case, performance with additional OP is class-leading in both Pro and EVO.

[Scatter graphs: Default | 25% Over-Provisioning]

66 Comments


leexgx - Thursday, July 23, 2015 - link

The bug is related to the incorrect queued TRIM support on the Samsung drives.

The Samsung drives say they support queued TRIM, but Samsung failed to implement it correctly when it added SATA 3.2 in the latest firmware updates. The old firmware did not have the queued TRIM bug because the SSD did not advertise support for it. Other SSDs that advertise queued TRIM support have been patched or didn't have the buggy support to start with (except the M500).

editorsorgtfo - Thursday, July 23, 2015 - link

Can you corroborate this? Nothing in the patch hints at a vendor issue.

leexgx - Thursday, July 23, 2015 - link

I guess this is relating to RAID. There is a failed implementation of advertised queued TRIM support in the Samsung 840 and 850 EVO/Pro drives (the drive says it supports it but does not support it correctly, so TRIM commands are issued incorrectly, which is why there is a blacklist for all 840* and 850* drives).

Your post seems to be related to RAID and a kernel issue (but the issue did not happen on the Intel drives that they changed to). They rebuilt their Intel SSD setups the same as the Samsung ones.

They did the same auto restore; only the drives changed. They had 0 problems once they changed to Intel/"other whatever it was" SSDs that also supported queued TRIM. Even though they were not using it, the RAID bit probably was (it was a while ago when I looked at it).

sustainednotburst - Friday, July 24, 2015 - link

Algolia stated Queued Trim is disabled on their systems, so it's not related to Queued Trim.

leexgx - Saturday, July 25, 2015 - link

The problem with Samsung drives and queued TRIM is still there (not just the fake queued TRIM they failed to implement; they also failed to implement the 3.2 spec and the advertised features that Samsung is exposing).

Gigaplex - Thursday, July 23, 2015 - link

Those two links show separate bugs. The algolia reported bug was a kernel issue. The second bug which vFunct was probably referring to is a firmware bug where the SSD advertises queued TRIM support but does not handle it correctly. The kernel works around this by blacklisting queued TRIM from known-bad drives. Windows doesn't support queued TRIM at all which is why you don't see the issue there yet.

jann5s - Thursday, July 23, 2015 - link

@AT: please do some data retention measurements with SSD drives! I'm so curious to see if the myth is true and to what extent!

Shadowmaster625 - Thursday, July 23, 2015 - link

With 2 whole gigabytes of DRAM, why are random writes not saturating the SATA bus?

Kristian Vättö - Thursday, July 23, 2015 - link

The extra DRAM is needed for the NAND mapping table; it's not used to cache any more host IOs.

KaarlisK - Thursday, July 23, 2015 - link

Where did TRIM validation go? (The initial approach, which checked whether TRIM restored performance on a filled drive.)

Considering that controllers have had problems with TRIM not restoring performance, even if this is a minor revision, it still seems an important aspect to test.