The AMD Radeon R9 Fury Review, Feat. Sapphire & ASUS

by Ryan Smith on July 10, 2015 9:00 AM EST

Meet The ASUS STRIX R9 Fury

Our second card of the day is ASUS’s STRIX R9 Fury, which arrived just in time for the article cutoff. Unlike Sapphire, ASUS is releasing just a single card, the STRIX-R9FURY-DC3-4G-GAMING.

Radeon R9 Fury Launch Cards

| | ASUS STRIX R9 Fury | Sapphire Tri-X R9 Fury | Sapphire Tri-X R9 Fury OC |
|---|---|---|---|
| Boost Clock | 1000MHz / 1020MHz (OC) | 1000MHz | 1040MHz |
| Memory Clock | 1Gbps HBM | 1Gbps HBM | 1Gbps HBM |
| VRAM | 4GB | 4GB | 4GB |
| Maximum ASIC Power | 216W | 300W | 300W |
| Length | 12" | 12" | 12" |
| Width | Double Slot | Double Slot | Double Slot |
| Cooler Type | Open Air | Open Air | Open Air |
| Launch Date | 07/14/15 | 07/14/15 | 07/14/15 |
| Price | $579 | $549 | $569 |

With only a single card, ASUS has decided to split the difference between reference and OC cards and offer one card with both features. Out of the box the STRIX is a reference-clocked card, with a GPU clockspeed of 1000MHz and a memory rate of 1Gbps. However ASUS also officially supports an OC mode, which, when enabled through their GPU Tweak II software, raises the clockspeed by 20MHz to 1020MHz. With a clockspeed increase of just 2%, OC mode delivers performance gains of under 2%; the gesture is appreciated, but the gains are trivial in practice. Otherwise, at stock the card should perform similarly to Sapphire’s reference-clocked R9 Fury card.
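To put OC mode in perspective, the clockspeed increase can be worked out directly from the launch table. This is a minimal Python sketch; the helper `percent_increase` is our own hypothetical name and has nothing to do with GPU Tweak II.

```python
def percent_increase(base_mhz: int, new_mhz: int) -> float:
    """Return the relative clockspeed increase in percent."""
    return 100 * (new_mhz - base_mhz) / base_mhz

# STRIX R9 Fury: 1000MHz stock, 1020MHz in GPU Tweak II's OC mode
strix_oc_gain = percent_increase(1000, 1020)
# Sapphire Tri-X R9 Fury OC: 1040MHz vs. the 1000MHz reference clock
sapphire_oc_gain = percent_increase(1000, 1040)

print(f"STRIX OC mode: +{strix_oc_gain:.1f}%")
print(f"Sapphire Tri-X OC: +{sapphire_oc_gain:.1f}%")
```

Since GPU performance typically scales no better than linearly with core clockspeed, a 2% clock bump bounds the expected gain at roughly 2%, which is why OC mode’s benefit is so small.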

Diving right into matters, for their R9 Fury card ASUS has opted for a fully custom design, pairing a custom PCB with one of the company’s well-known DirectCU III coolers. The PCB itself is quite large, measuring 10.6” long and extending a further 0.6” above the top of the I/O bracket. Unfortunately we’re not able to get a clear shot of the PCB since we need to keep the card in working order, but judging from the design ASUS has clearly overbuilt it for greater purposes: there are voltage monitoring points at the front of the card and unpopulated positions that look to be for switches. Consequently I wouldn’t be all that surprised if we saw this PCB used in a higher end card in the future.

Moving on, since this is a custom PCB, ASUS has outfitted the card with its own power delivery system. ASUS is using a 12 phase design here, backed by the company’s Super Alloy Power II discrete components. With these components and their “auto-extreme” build process, ASUS is making the argument that the STRIX is a higher quality card. We’re not discounting those claims, but they’re more or less impossible to verify, especially since AMD’s own reference design is already of significant quality.

Meanwhile it comes as a bit of a surprise that even with such a high phase count, ASUS’s default power limits are set relatively low. We’re told that the card’s default ASIC power limit is just 216W, and our testing largely concurs with this. The overall board TBP is still going to be close to AMD’s 275W value, but this means that ASUS has clamped down on the bulk of the card’s TDP headroom by default. The card has enough headroom to sustain 1000MHz in all of our games, which is what really matters, while FurMark runs at a significantly lower frequency than on any R9 Fury series card built on AMD’s PCB because of the lower power limit. For the same reason, ASUS also raises the power limit by 10% in OC mode to make sure there’s enough headroom for the higher clockspeed. Ultimately this doesn’t have a performance impact that we can find, and outside of FurMark it’s unlikely to save any power, but given what Fiji is capable of with respect to both performance and power consumption, this is an interesting design choice on ASUS’s part.
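The power limit figures lend themselves to a quick worked calculation. This is a minimal Python sketch, assuming the 10% OC-mode increase is applied as a straight percentage to the 216W default ASIC limit (we have not confirmed exactly how GPU Tweak II applies it); the function name `raised_limit` is hypothetical.

```python
DEFAULT_LIMIT_W = 216     # STRIX default maximum ASIC power (launch table)
OC_BUMP_PERCENT = 10      # OC mode raises the power limit by 10%
SAPPHIRE_LIMIT_W = 300    # Sapphire Tri-X cards' maximum ASIC power (launch table)

def raised_limit(limit_w: float, bump_percent: float) -> float:
    """Apply a percentage increase to a power limit (assumed linear scaling)."""
    return limit_w * (100 + bump_percent) / 100

oc_limit_w = raised_limit(DEFAULT_LIMIT_W, OC_BUMP_PERCENT)
print(f"STRIX OC-mode ASIC limit: {oc_limit_w:.1f}W")
print(f"Still below the Sapphire limit by {SAPPHIRE_LIMIT_W - oc_limit_w:.1f}W")
```

Under that assumption, even in OC mode the STRIX’s ASIC limit stays well short of the 300W available to the Sapphire cards, which fits with FurMark continuing to run at lower clockspeeds on the ASUS card.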

PCB aside, let’s cover the rest of the card. While the PCB is only 10.6” long, ASUS’s DirectCU III cooler is larger still, slightly overhanging the PCB and extending the total length of the card to 12”. ASUS uses a collection of stiffeners, screws, and a backplate to reinforce the card and support the bulky heatsink, giving the resulting card a very sturdy design. In a first for any design we’ve seen thus far, the backplate is actually larger than the PCB, running the full 12” to match up with the heatsink, and like the Sapphire backplate it includes a hole immediately behind the Fiji GPU to help cool the many capacitors located there. Meanwhile builders with large hands and/or tiny cases will want to take note of the card’s additional height; while the card will fit most cases fine, you may want a magnetic screwdriver to secure the I/O bracket screws, as the additional height doesn’t leave much room for fingers.

For the STRIX, ASUS is using one of the company’s triple-fan DirectCU III coolers. Starting at the top of the card with the fans, ASUS calls the fans on this design their “wing-blade” fans. The fans measure 90mm in diameter, and ASUS tells us that the design has been optimized to increase air pressure at the edge of the fans.

Meanwhile the STRIX also implements ASUS’s variation of zero fan speed idle technology, which the company calls 0dB Fan technology. ASUS was one of the first companies to implement zero fan speed idling, the STRIX series has become well known for this feature, and the STRIX R9 Fury is no exception. Thanks to the card’s large heatsink, ASUS is able to power down the fans entirely while the card is at or near idle, allowing the card to be virtually silent in those scenarios. In our testing this STRIX card has its fans kick in at 55C and shut off again at 46C.

ASUS STRIX R9 Fury Zero Fan Idle Points

| | GPU Temperature | Fan Speed |
|---|---|---|
| Turn On | 55C | 28% |
| Turn Off | 46C | 25% |
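The 9C gap between the turn-on and turn-off points is a form of hysteresis: it keeps the fans from rapidly cycling on and off when the GPU hovers around a single threshold. As a rough illustration, here is a minimal Python model using the 55C/46C points we measured. It is our own hypothetical sketch (the `ZeroFanIdle` class is not anything from ASUS), not ASUS’s firmware logic, and it models only whether the fans spin, not the fan speed curve.

```python
from dataclasses import dataclass

# Turn-on/turn-off points measured on our STRIX R9 Fury sample.
FAN_ON_TEMP_C = 55
FAN_OFF_TEMP_C = 46

@dataclass
class ZeroFanIdle:
    """Hypothetical hysteresis model of a zero fan speed idle mode."""
    spinning: bool = False

    def update(self, gpu_temp_c: float) -> bool:
        # Start the fans only once the GPU reaches the turn-on point...
        if not self.spinning and gpu_temp_c >= FAN_ON_TEMP_C:
            self.spinning = True
        # ...and stop them only after it cools to the lower turn-off point.
        elif self.spinning and gpu_temp_c <= FAN_OFF_TEMP_C:
            self.spinning = False
        return self.spinning

controller = ZeroFanIdle()
trace = [40, 50, 55, 52, 48, 46, 50]
states = [controller.update(t) for t in trace]
print(states)
```

Note that at 50C the fans stay off on the way up but stay on at 52C and 48C on the way down; which state the card is in depends on where the temperature came from, not just on its current value.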

As for the DirectCU III heatsink on the STRIX, as one would expect ASUS has gone with a large and very powerful heatsink to cool the Fiji GPU underneath. The aluminum heatsink runs just shy of the full length of the card and features 5 copper heatpipes, the largest measuring 10mm in diameter. The heatpipes in turn make almost direct contact with the GPU and HBM, with ASUS having installed a thin heatspreader of sorts to compensate for the uneven heights of the GPU and HBM stacks.

In terms of cooling performance, AMD’s Catalyst Control Center reports that ASUS has capped the card at 39% fan speed, though in our experience the card actually tops out at 44%. The card will typically reach 44% by the time it hits 70C, after which temperatures rise a bit more before leveling off. We’ve yet to see the card need to ramp past 44%, though if the temperature were to exceed the temperature target we expect that the fans would start to ramp up further. Without overclocking, the highest temperature measured was 78C in FurMark, while Crysis 3 topped out at a cooler 71C.

Moving on, ASUS has also adorned the STRIX with a few cosmetic touches of their own. The top of the card features a backlit STRIX logo, which pulsates when the card is turned on. And like some prior ASUS cards, there are LEDs next to each of the PCIe power sockets to indicate whether the power cable is fully connected. On that note, with the DirectCU III heatsink extending past the PCIe sockets, ASUS has once again flipped the sockets so that the tabs face the rear of the card, making it easier to plug and unplug the power cables even with the large heatsink.

Since this is an ASUS custom PCB, ASUS has also been able to work in their own display I/O configuration. Unlike the AMD reference PCB, ASUS’s custom PCB retains a DL-DVI-D port, giving the card a total of 3x DisplayPort, 1x HDMI, and 1x DL-DVI-D. So buyers with DL-DVI monitors who don’t want to purchase adapters will want to pay special attention to ASUS’s card.

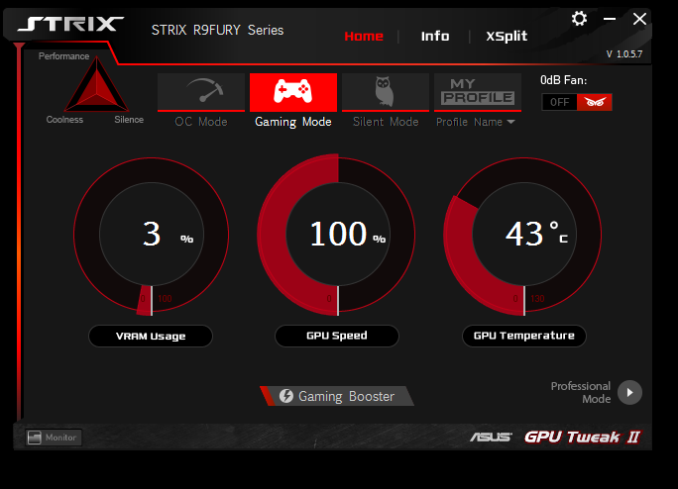

Finally, on the software front, the STRIX includes the latest iteration of ASUS’s GPU Tweak software, which is now called GPU Tweak II. Since the last time we took a look at GPU Tweak, the software has undergone a significant UI overhaul, with ASUS giving it more distinct basic and professional modes. It’s through GPU Tweak II that the card’s OC mode can be accessed, which bumps up the card’s clockspeed to 1020MHz. Meanwhile the other basic overclocking and monitoring functions one would expect from a good overclocking package are present; GPU Tweak II allows control over clockspeeds, fan speeds, and power targets, while also monitoring all of these and more.

GPU Tweak II also includes a built-in copy of the XSplit game broadcasting software, along with a 1-year premium license. Perhaps the oddest feature of GPU Tweak II is its Gaming Booster feature, which is ASUS’s system optimization utility. Gaming Booster can adjust the system’s visual effects and services, and can perform memory defragmentation. To be frank, it seems ASUS was struggling to come up with something to differentiate GPU Tweak II here; messing with system services is a bad idea, and defragmenting system memory is rarely necessary given the nature and abilities of Random Access Memory.

Wrapping things up, the ASUS STRIX R9 Fury will be the most expensive of the R9 Fury launch cards. ASUS is charging a $30 premium over the $549 reference-clocked Sapphire card, putting the MSRP at $579.

288 Comments


Socius - Saturday, July 11, 2015

Yes...comparing an overclocked 3rd party pimped model against a non-OC'd reference design card that is $100 less in price and then saying it's a tough choice between the 2 as the R9 Fury is faster than the GTX 980...lol...totally balanced perspective there bro.

Ryan Smith - Saturday, July 11, 2015

Whenever a pure reference card isn't available, like is the case for R9 Fury, we always test a card at reference clockspeeds in one form or another. For example in this article we have the ASUS STRIX, which ships at reference clockspeeds. No comparisons are made between the factory overclocked Sapphire card and the GTX 980 (at the most we'll say something along the lines of "both R9 Fury cards", where something that is true for the ASUS is true for the Sapphire as well).

And if you ever feel like we aren't being consistent or fair on that matter, please let us know.

Socius - Saturday, July 11, 2015

I just want to understand something clearly. Are you saying that when you are talking about performance/value, you ignore the fact that one card can OC 4%, and the other can OC 30% as you don't believe it's relevant? Let me pose the question another way. If you had a friend who was looking to spend $500~ on a card and was leaning between the R9 Fury and the GTX 980. Knowing that the GTX 980 will give him better performance once OC'd, at $450, would you even consider telling him to get the R9 Fury at $550?

My concern here is that you're not giving a good representation of real world performance gamers will get. As a result, people get misled into thinking spending the extra money on the R9 Fury is actually going to net them higher frame rate...not realizing they could get better performance, for even less money, if someone decided to actually look at overclocking potential...

Now if you weren't interested in overclocking results in general, I'd say fine. I disagree, but it's your choice. But then you do show overclocking results with the R9 Fury. I'm finding it really hard to understand what your intent is with these articles, if not to educate people and help them make an actual informed decision when making their next purchase.

As I mentioned in your previous article on the Fury X...you seem to have a soft spot for AMD. And I'm not exactly sure why. I will admit that I'm currently a big Nvidia fan. Only because of the features and performance I get. If the Fury X had come out, and could OC like the 980ti and had 8GB of HBM memory, I'd have become an AMD fan. I'm a fan of whoever has the best technology at any given moment. And if I were looking to make a decision on my next card purchase, your article here would give a false impression of what I would get if I spent $100 more on an R9 Fury than on a GTX 980...

jardows2 - Saturday, July 11, 2015

Apparently, OC is your thing. I get it. There are plenty of OC sites that are just for you. For some of us, we really don't want to see how much we can shorten the life of something we pay good money for, when the factory performance does what we need. I, for one, am more interested in how something will perform without me potentially damaging my computer, and I appreciate the way that AT does their benchmarks.

Socius - Saturday, July 11, 2015

I think the fact that you believe overclocking will "damage your computer" or in any meaningful way shorten the lifespan of the product is all the more reason to talk about overclocking. I'd be more than happy to share a little info.

Generally speaking, what kills the product is heat (minus high current degradation that was a bigger problem on older fab processes). So let's say you have a GPU that runs at 60 degrees Celsius under load, at 1000MHz with a 50% fan speed profile. Now let's imagine 2 scenarios:

1) You underclock the GPU to 900MHz and set a 30% fan profile to make your system more quiet. Under load, your GPU now hits 70 degrees Celsius.

2) You overclock the GPU to 1100MHz and set a 75% fan profile for more performance at the cost of extra sound. Under load, your GPU now hits 58 degrees Celsius due to increased fan speed.

Which one of these devices would you think is likely to last the longest? If you said the Overclocked one, you'd be correct. In fact...the overclocked one is likely to last even longer than the stock 1000MHz at the 50% fan speed profile, because despite using more power and giving more performance, the fan is working harder to keep it cooler, thus reducing the stress on the components.

Now. Let's talk about why that card was clocked at 1000MHz to start! When a chip is designed, the exact clock speed is an unknown. Not just between designs...but between individual wafers and dies cut out from those wafers. As an example, I had an i7 3770k that would use 1.55v to hit 4.7GHz. I now have one that uses 1.45v to hit 5.2GHz and 1.38v to hit 5GHz. Why am I telling you this? Well...because it's important! When designing a product like a CPU or GPU, and setting a base clock, you have to account for a few things:

1) How much power do I want to feed it?

2) How much of the heat generated by that power can I dissipate with my fan design?

3) How loud do I want my fan to be?

4) What's the highest clock rate my lowest end wafer can hit while remaining stable and at an acceptable voltage requirement?

So here's the fun part. While chips themselves can vary greatly, there are tons of precautions built in when dealing with stock speeds. Just for a point of reference...a single GTX Titan X is guaranteed to overclock to 1400MHz-1550MHz with proper settings, if you put the fan at 100% full blast. That's a 30%-44% overclock! So why wouldn't Nvidia do that? Well it's a few things.

1) Noise! Super important here. Your clockspeed is determined by your ability to cool it. And if you're cooling by means of a fan, the faster that fan, the more noise, the more complaints by consumers.

2) Power/Heat variability. Since each chip is different, as you go into the higher ranges, each will require a different amount of power in order to be stable at that frequency. If you're curious, you can see what's called an ASIC quality for your GPU using a program like GPU-Z. This number will tell you roughly how good of a chip you have, in terms of how much of a clock it can achieve with how much power. The higher the % of your ASIC quality, the better overclocking potential you have on air because it'll require less power, and therefore create less heat to do it!

3) Overclocking potential. This is actually important in marketing. And it's something AMD and Nvidia are both pretty bad at, actually. But AMD's a bit worse, to their own detriment. In their R9 Fury and Fury X press release performance numbers they set expectations for their cards to completely outperform the 980ti using best case scenarios and hand picked settings. And they also said it overclocks like a beast. Now...here's why that's bad. Customers like to feel they're getting more than what they pay for. That's why companies like BMW always list very modest 0-60 times for their cars. When I say modest, I mean they list 0-60 times that are slower than what the car is actually capable of. That's why every car review program you see will show 0-60 times 0.2 to 0.5 seconds faster than what BMW has actually listed. This works because you're sold on a great product, only to find it's even greater than that.

Got off track a bit there. I apologize. Back to AMD and the Fiji lineup and why this long post was necessary. When AMD announced the Fury X being an all in one cooler design, I instantly knew what was up. The chip wasn't able to hold up to the limitations we talked about above (power requirement/heat/fan noise/stability). But they needed to put out a stock clock that would allow the card to be competitive with Nvidia, but they also didn't want it to sound like a hair dryer. That's why they opted for the all in one cooler design. Otherwise, a chip that big on an air cooled design would likely have been clocked around the 850-900MHz range that the original GTX Titan had. But they wanted the extra performance, which created extra heat and required more power, and used a better cooler design to be able to accomplish that across the board with all their chips. That's great, right? Well...yes and no. I'll explain.

Essentially the Fiji lineup is "factory overclocked" by AMD. This is the same as putting a turbo on a car engine. And as any car enthusiast will tell you, a 2L engine with a turbo on it may be able to produce 330 horsepower, which could otherwise take a naturally aspirated 4L V8 to accomplish. But then you're limited in how much more horsepower you can add. Sure you could put a bigger turbo on that 2L engine. But it already had boosted performance for an engine that size. So your gains will be minimal. But with that naturally aspirated engine...you can drop a supercharger on it and realize massive gains. This is very much the same as what's happening with the Fury X, for example.

And this is why I believe it's incredibly important to point this out. I built a system for my friend recently with a GTX 970. I overclocked that to 1550MHz on first try, without even maxing out the voltage. That was a $300 model card. And even that would challenge the performance of the R9 Fury if you don't plan on overclocking (not that you could, anyway, with that 4% overclock limit).

So...yes, I do think Overclocking needs to be talked about more, as it's become far easier and safer to do than in the past. Even if you don't plan to do extreme overclocking, you can keep all your fan speed automated profiles the same, don't touch voltage, and just increase power limit and a slight increase in the gpu clock for simple free performance. It's something I could teach my mom to do. So I hope it's something that is done by more and more people, as there's really no reason not to do it.

And that's why I think informing people of these differences with regard to overclocking will help people save money and get more performance. And not doing so just keeps people in the dark, and does them a great disservice. Why keep your audience oblivious and allow them to remain ignorant of these things when you can take some time to help them? Overclocking today is far different from the overclocking of a few years ago. And everybody should give it a try.

mdriftmeyer - Sunday, July 12, 2015

Ryan is biased in these comparisons. That's a fact. Your knowledge silencing the person defending Ryan's unbalanced comparisons is a fact.

jardows2 - Monday, July 13, 2015

What a hoot! He didn't silence me. I just have better things to do on the weekend than check all the Internet forums I may have posted to!

jardows2 - Monday, July 13, 2015

@Socius That was a good post explaining your interest in OC. From my perspective, I come from the days when OC'ing would void your warranty and would shorten the life span of your system. I also come from a more business and channel oriented background, meaning that we stay with "officially supported" configurations. That background stays with you. Even today, when building my personal computer or a web browsing computer for a family member, I do everything I can to stay with the QVL of the motherboard.

I am more concerned with out of box experience, and almost always skip over the OC results of benchmarks whenever they are presented. If a device can not perform properly OOB, then it was not properly configured from the start, which does not give me the best impression about the part, regardless of the potential of individual tweaks.

In the end, you are looking for OC results, I am only concerned with OOB experience. Different target audience, both with valid concerns. I just don't think it is worth bashing a reviewer's method that focuses on one experience over the other.

Mugur - Saturday, July 11, 2015

Good review as always, Ryan. I wouldn't throw away Asus's approach. Nice power efficiency gains. Funny how overclocking for a few fps more heated the discussion... All in all, I think AMD is back in business with Fury non-X. Waiting for 3xx reviews, hopefully for the whole R9 line, with 2, 4 and 8 GB.

3ogdy - Saturday, July 11, 2015

What a disappointment again. Man...the Fury cards really aren't worth the hassle at all, are they? It's sad to see this, especially coming from an FX-8350 & 2xHD6950s owner. So the 980Ti beats the custom cards (we knew Fury X was quite at its limits from the beginning, but still: despite all the improvements, custom cards sometimes perform even worse than the stock one). The 980Ti really beats the Fury X most of the time. What is that, nVidia's blower is only 8dB louder than the highest end of the fans used by arguably the two best board partners AMD has? Wow! This is where I realize nVidia really must have done an amazing job with the 980Ti and Maxwell in general.

HBM this HBM that...this card is beaten at 4K by the GTX980Ti and gameplay seems to be smoother on the nVidia card too. What the hell? Where are the reasons to buy any of the Fury cards?