The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

Final Words

Bringing this review to a close, AMD has certainly thrown a great deal at us with the Radeon R9 Fury X. After the company’s stumble with their last single-GPU flagship, the Radeon R9 290X, they have reevaluated what they want to do, how they want to build their cards, and what kind of performance they want to aim for. As a result the R9 Fury X is an immensely interesting card.

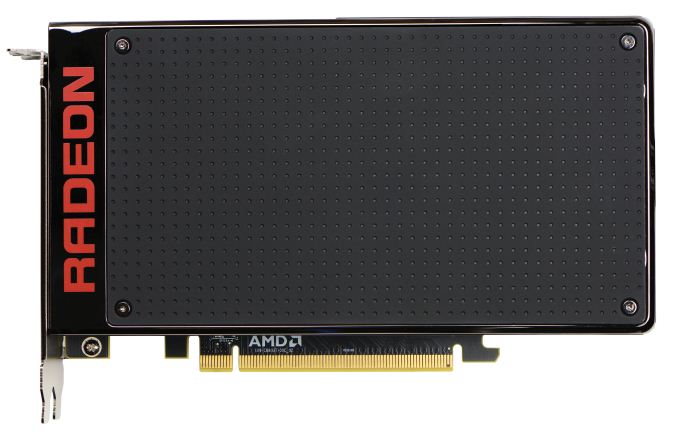

From a technical perspective AMD has done a ton right here. The build quality is excellent, the load acoustic performance is unrivaled, and the performance is great. Meanwhile, although the ultimate value of High Bandwidth Memory to the buyer is only as great as the card’s performance, from a hobbyist perspective I am excited for what it means for future cards. The massive bandwidth improvements, the power savings, and the space savings have all done wonderful things for the R9 Fury X, and will do so for other cards in the future as well.

Compared to the R9 290X then, AMD has gone and done virtually everything they have needed to do in order to right what was wrong, and to compete with an NVIDIA energized by GTX Titan and the Maxwell architecture. As self-admittedly one of the harshest critics of the R9 290X and R9 290 due to the 290 series’ poor reference acoustic performance, I believe AMD has built an amazing card with the R9 Fury X. I dare say AMD finally “gets it” on card quality and so much more.

Had this card launched against the GTX Titan X a couple of months ago, we would be talking today about how AMD doesn’t quite dethrone the NVIDIA flagship, but instead serves as a massive spoiler, delivering so much of GTX Titan X’s performance for a fraction of the cost. But, unfortunately for AMD, this is not what has happened. The competition for the R9 Fury X is not an overpriced GTX Titan X, but a well-priced GTX 980 Ti, which, to add insult to injury, launched first, even though it was in all likelihood NVIDIA’s reaction to the R9 Fury X.

The problem for AMD is that the R9 Fury X is only 90% of the way there, and without a price spoiler effect the R9 Fury X doesn’t go quite far enough. At 4K it trails the GTX 980 Ti by 4%, which is to say that AMD could manage neither a strict tie nor the lead. To be fair to AMD, a 4% difference in absolute terms is unlikely to matter in the long run, and for most practical purposes the R9 Fury X is a viable alternative to the GTX 980 Ti at 4K. Nonetheless, it does technically trail the GTX 980 Ti here, and that’s not the only issue that dogs such a capable card.

At 2560x1440 the card loses its status as a viable alternative. AMD’s performance deficit is over 10% at this point, and as we’ve seen in a couple of our games, AMD is hitting some very real CPU bottlenecking even on our high-end system. This only becomes an issue at lower resolutions, where framerates climb high enough to expose the bottleneck (so it is not a problem for 60Hz monitors); however, AMD is also promoting 2560x1440@144Hz FreeSync monitors, which these CPU bottlenecking issues greatly undercut.

The bigger issue, I suppose, is that while the R9 Fury X is very fast, I don’t feel we’ve reached the point where 4K gaming on a single GPU is the best way to go; too often we still need to cut back on image quality to reach playable framerates. 4K is arguably still the domain of multi-GPU setups, while cards like the R9 Fury X and GTX 980 Ti are excellent for 2560x1440 gaming, or even for 1080p gaming for owners who want to take advantage of the image quality improvements from Virtual Super Resolution.

The last issue that dogs AMD here is VRAM capacity. At the end of the day, first-generation HBM limits them to 4GB of VRAM, and while they’ve made a solid effort to work around the problem, there is only so much they can do. 4GB is enough right now, but I am concerned that R9 Fury X owners will run into VRAM capacity issues before the card is due for replacement, even under an accelerated two-year replacement schedule.

Once you get to a straight-up comparison, the problem AMD faces is that the GTX 980 Ti is the safer bet. On average it performs better at every resolution, it has more VRAM, it consumes a bit less power, and NVIDIA’s drivers are lean enough that we aren’t seeing the CPU bottlenecking that would impact owners of 144Hz displays. To that end the R9 Fury X is by no means a bad card – in fact it’s quite a good card – but NVIDIA struck first and struck with a slightly better card, and this is the situation AMD must face. At the end of the day one could do just fine with the R9 Fury X; it’s just not what I believe to be the best card at $649.

With that said, the R9 Fury X does have some advantages that, at least when comparing reference cards to reference cards, NVIDIA cannot touch, and these advantages give the R9 Fury X a great niche to reside in. The acoustic performance is absolutely amazing, and while it’s not enough to overcome some of the card’s other issues overall, if you absolutely must have the lowest load noise possible from a reference card, the R9 Fury X should easily impress you. I doubt that even the forthcoming R9 Nano can match what AMD has done with the R9 Fury X in this respect. Meanwhile, although the radiator does present its own challenges, the smaller size of the card should be a boon to small system builders who need something a bit different from standard 10.5” cards. Throw a couple of these into a Micro-ATX SFF PC, and it will be the PSU, not the video cards, that becomes your biggest concern.

Ultimately I believe AMD deserves every bit of credit they get for the R9 Fury X. They have put together a solid card that shows an impressive improvement over what they gave us 2 years ago with the R9 290X. With that said, as someone who would like to see AMD succeed and prosper, the fact that they get so close only to be outmaneuvered by NVIDIA once again makes the current situation all the more painful; it’s one thing to lose to NVIDIA by feet, but to lose by inches only reminds you of just how close they got, how they almost upset NVIDIA. At the end of the day I think AMD can at least take home credit for forcing the GTX 980 Ti into existence, which has benefitted the wider hobbyist community. Still, looking at AMD’s situation I can’t help but wonder what happens from here, as it seems like AMD badly needed a win they won’t quite get.

Finally, with the launch of the R9 Fury X behind us, it’s time to turn our gaze towards the future, the very near future. The R9 Fury X’s younger sibling, the R9 Fury, launches in 2 weeks. Though certainly slower by virtue of its cut-down Fiji GPU, it is also $100 cheaper, and is a more traditional air-cooled card design as well. With NVIDIA still selling the 4GB GTX 980 for $500, the playing field is going to be much different below the R9 Fury X, so I am curious to see just how things shape up on the 14th.

458 Comments


bennyg - Saturday, July 4, 2015

Marketing performance. Exactly. Except efficiency was not good enough across the generations of 28nm GCN in an era where efficiency plus thermal/power limits constrain performance, and look what Nvidia did over a similar era, from Fermi (which was on the market when GCN 1.0 was released) to Kepler to Maxwell. Plus, efficiency is kind of the ultimate marketing buzzword in all areas of tech, and not being able to mention it (plus having generally inferior products) hamstrung their marketing all along.

xenol - Monday, July 6, 2015

Efficiency is important because of three things:

1. If your TDP is through the roof, you'll have issues with your cooling setup. Any time you introduce a bigger cooling setup because your cards run that hot, you're going to be mocked for it and people are going to be wary of it. With 22nm or 20nm nowhere in sight for GPUs, efficiency had to be a priority; otherwise you're going to ship cards that take up three slots or ship with water coolers.

2. You also can't just play to the desktop market. Laptops are still the preferred computing platform, and even if people are going for a desktop, AIOs are looking much more appealing than a monitor/tower combo. So if you want to have any shot in either market, you have to build an efficient chip. And you have to convince people they "need" this chip, because Intel's iGPUs do what most people want just fine anyway.

3. Businesses and such with "always on" computers would like it if their computers ate less power. Even if you can save only a handful of watts per machine, multiply that by thousands and it adds up to an appreciable amount of savings.

xenol - Monday, July 6, 2015

(Also, by "computing platform" I mean the platform people choose when they want a computer.)

medi03 - Sunday, July 5, 2015

ATI is the reason both Microsoft and Sony use AMD's APUs to power their consoles. It might be the reason why APUs even exist.

tipoo - Thursday, July 2, 2015

That was then, this is now. Now AMD, together with the acquisition, has a lower market cap than Nvidia.

Murloc - Thursday, July 2, 2015

yeah, no.

ddriver - Thursday, July 2, 2015

ATI wasn't bigger; AMD just paid a preposterous and entirely unrealistic amount of money for it. Soon after the merger, AMD + ATI was worth less than what AMD had paid for the latter, ultimately leading to the loss of its foundries and putting it in an even worse position. Let's face it, AMD was, and historically has always been, betrayed; its sole purpose is to create the illusion of competition so that the big boys don't look bad for running unopposed, even if this is what happens in practice. Just when AMD got lucky with Athlon, a mole was sent to make sure AMD stays down.

testbug00 - Sunday, July 5, 2015

Foundries didn't go because AMD bought ATI. That might have accelerated it by a few years, however. The foundry issue and its cost to AMD date back to the 1990s and 2000-2001.

5150Joker - Thursday, July 2, 2015

True, AMD was in a much better position than NVIDIA in 2006; they just got owned.

3DVagabond - Friday, July 3, 2015

When was Intel the underdog? Because that's who's knocked them down. (They aren't out yet.)