The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

Battlefield 4

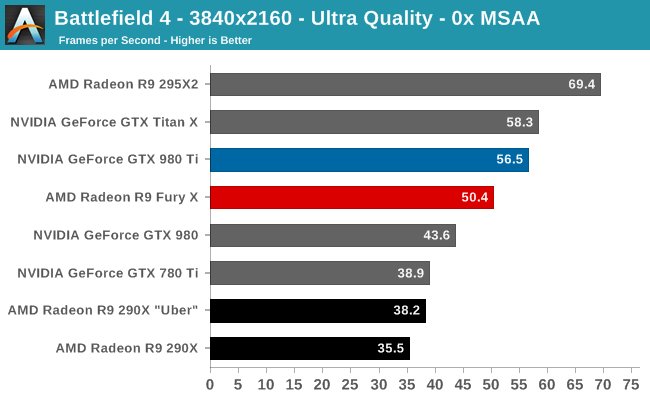

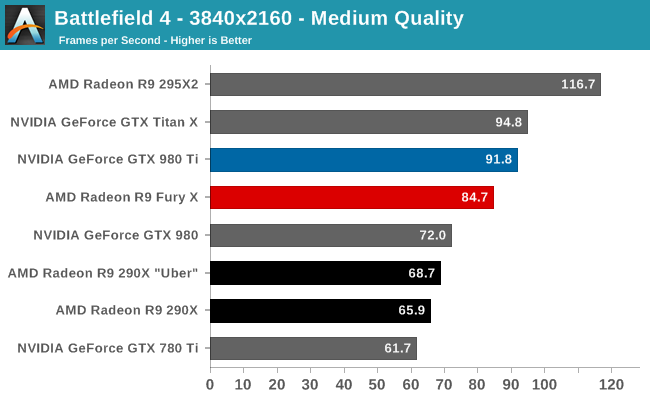

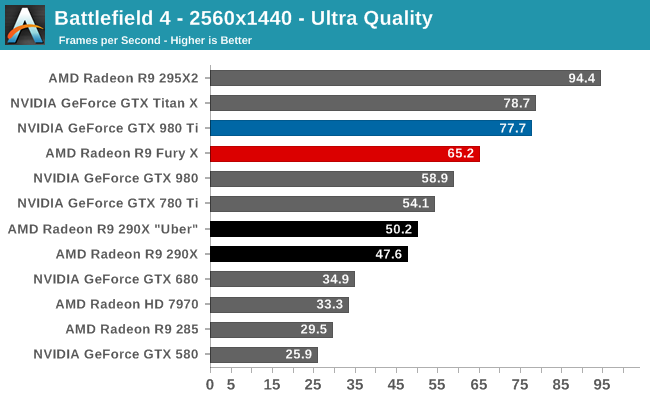

Kicking off our benchmark suite is Battlefield 4, DICE’s 2013 multiplayer military shooter. After a rocky start, Battlefield 4 has since become a challenging game in its own right and a showcase title for low-level graphics APIs. These benchmarks are from single player mode, and based on our experience our rule of thumb is that multiplayer framerates will dip to half our single player framerates. That means a card needs to average at least 60fps in our tests if it’s to hold up in multiplayer.
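The rule of thumb above is simple arithmetic, and can be sketched as follows. This is only an illustration: the function names are hypothetical, and the 30fps multiplayer floor is an assumption inferred from the 60fps single player requirement being halved, not a figure stated in the testing notes.

```python
def estimated_multiplayer_fps(single_player_fps: float) -> float:
    """Rule of thumb from the text: multiplayer dips to half the single player average."""
    return single_player_fps / 2.0


def holds_up_in_multiplayer(single_player_fps: float, floor_fps: float = 30.0) -> bool:
    # floor_fps is an assumption: 30fps is what a 60fps requirement implies after halving.
    return estimated_multiplayer_fps(single_player_fps) >= floor_fps


if __name__ == "__main__":
    # Illustrative single player averages, not benchmark results.
    for fps in (45.0, 60.0, 75.0):
        print(fps, estimated_multiplayer_fps(fps), holds_up_in_multiplayer(fps))
```

Under these assumptions, a card averaging exactly 60fps in single player sits right at the threshold.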

As we briefly mentioned in our testing notes, our Battlefield 4 testing has been slightly modified as of this review to accommodate the changes in how AMD is supporting Mantle. This benchmark still defaults to Mantle for GCN 1.0 and GCN 1.1 cards (7970, 290X), but we’re using Direct3D for GCN 1.2 cards like the R9 Fury X. This is due to the lack of Mantle driver optimizations on AMD’s part; as a result the R9 Fury X performs better under Direct3D than under Mantle, especially at 2560x1440 (65.2fps vs. 54.3fps).
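To put the two 2560x1440 figures quoted above in relative terms, the gap can be worked out as below. This is a minimal sketch with hypothetical helper names, and it assumes the higher figure (65.2fps) is the Direct3D result and the lower one (54.3fps) is the Mantle result.

```python
def percent_faster(fast_fps: float, slow_fps: float) -> float:
    """How much faster the higher result is, relative to the lower one."""
    return (fast_fps - slow_fps) / slow_fps * 100.0


def percent_slower(slow_fps: float, fast_fps: float) -> float:
    """How far the lower result trails, relative to the higher one."""
    return (fast_fps - slow_fps) / fast_fps * 100.0


if __name__ == "__main__":
    direct3d_fps = 65.2  # assumed to be the Direct3D figure
    mantle_fps = 54.3    # assumed to be the Mantle figure
    print(f"Direct3D is {percent_faster(direct3d_fps, mantle_fps):.1f}% faster")
    print(f"Mantle is {percent_slower(mantle_fps, direct3d_fps):.1f}% slower")
```

Note that the two percentages differ (roughly 20% versus roughly 17%) because each uses a different baseline, which matters when comparing "leads by" and "trails by" figures.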

In any case, regardless of the renderer you pick, our first test does not go especially well for AMD and the R9 Fury X. The R9 Fury X does not take the lead at any resolution, and in fact this is one of the worse games for the card. At 4K AMD trails by 8-10%, and at 1440p that’s 16%. In fact the latter is closer to the GTX 980 than it is to the GTX 980 Ti. Even with the significant performance improvement the R9 Fury X brings over its predecessor, it’s not enough to catch up to NVIDIA here.

Meanwhile the performance improvement over the R9 290X “Uber” stands at between 23% and 32% depending on the resolution. AMD not only scales better than NVIDIA with higher resolutions, but R9 Fury X is scaling better than R9 290X as well.

458 Comments

looncraz - Friday, July 3, 2015

75MHz on a factory low-volting GPU is actually to be expected. If the voltage scaled automatically, like nVidia's, there is no telling where it would go. Hopefully someone cracks the voltage lock and gets to cranking of the hertz.

chizow - Friday, July 3, 2015

North of 400W is probably where we'll go, but I look forward to AMD exposing these voltage controls, it makes you wonder why they didn't release them from the outset given they made the claims the card was an "Overclocker's Dream" despite the fact it is anything but this.

Refuge - Friday, July 3, 2015

It isn't unlocked yet, so nobody has overclocked it yet.

chizow - Monday, July 6, 2015

But but...AMD claimed it was an Overclocker's Dream??? Just another good example of what AMD says and reality being incongruent.

Thatguy97 - Thursday, July 2, 2015

would you say amd is now the "geforce fx 5800"

sabrewings - Thursday, July 2, 2015

That wasn't so much due to ATI's excellence. It had a lot to do with NVIDIA dropping the ball horribly, off a cliff, into a black hole.

They learned their lessons and turned it around. I don't think either company "lost" necessarily, but I will say NVIDIA won. They do more with less. More performance with less power, less transistors, less SPs, and less bandwidth. Both cards perform admirably, but we all know the Fury X would've been more expensive had the 980 Ti not launched where it did. So, to perform arguably on par, AMD is living with smaller margins on probably smaller volume while Nvidia has plenty of volume with the 980 Ti and their base cost is less as they're essentially using Titan X throw away chips.

looncraz - Thursday, July 2, 2015

They still had to pay for those "Titan X throw away chips" and they cost more per chip to produce than AMD's Fiji GPU. Also, nVidia apparently had to not cut down the GPU as much as they were planning as a response to AMD's suspected performance. Consumers win, of course, but it isn't like nVidia did something magical, they simply bit the bullet and undercut their own offerings by barely cutting down the Titan X to make the 980Ti.

That said, it is very telling that the AMD GCN architecture is less balanced in relation to modern games than the nVidia architecture, however the GCN architecture has far more features that are going unused. That is one long-standing habit ATi and, now, AMD engineers have had: plan for the future in their current chips. It's actually a bad habit as it uses silicon and transistors just sitting around sucking up power and wasting space for, usually, years before the features finally become useful... and then, by that time, the performance level delivered by those dormant bits is intentionally outdone by the competition to make AMD look inferior.

AMD had tessellation years before nVidia, but it went unused until DX11, by which time nVidia knew AMD's capabilities and intentionally designed a way to stay ahead in tessellation. AMD's own technology being used against it only because it released it so early. HBM, I fear, will be another example of this. AMD helped to develop HBM and interposer technologies and used them first, but I bet nVidia will benefit most from them.

AMD's only possible upcoming saving grace could be that they might be on Samsung's 14nm LPP FinFet tech at GloFo and nVidia will be on TSMC's 16nm FinFet tech. If AMD plays it right they can keep this advantage for a couple generations and maximize the benefits that could bring.

vladx - Thursday, July 2, 2015

Afaik, even though TSMC's FinFET will be 16nm it's a superior process overall to GloFo's 14nm FF so I doubt AMD will gain any advantage.

testbug00 - Sunday, July 5, 2015

TSMC's FinFET 16nm process might be better than GloFo's own canceled 14XM or whatever they called it.

Better than Samsung's 14nm? Dubious. Very unlikely.

chizow - Sunday, July 5, 2015

Why is it dubious? What's the biggest chip Samsung has fabbed? If they start producing chips bigger than the 100mm^2 chips for Apple, then we can talk, but as much flak as TSMC gets over delays/problems, they still produce what are arguably the world's most advanced semiconductors, right there next to Intel's biggest chips in size and complexity.