The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

GRID Autosport

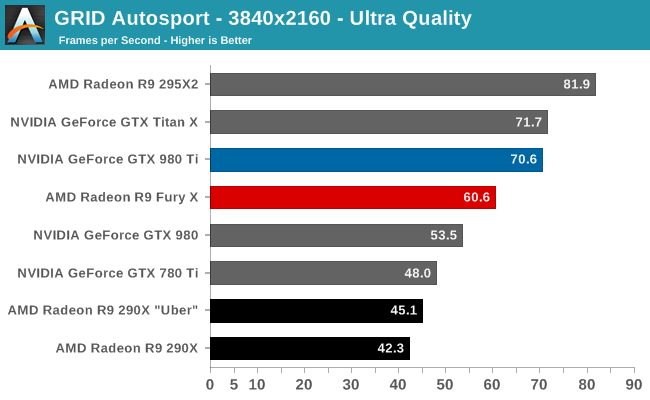

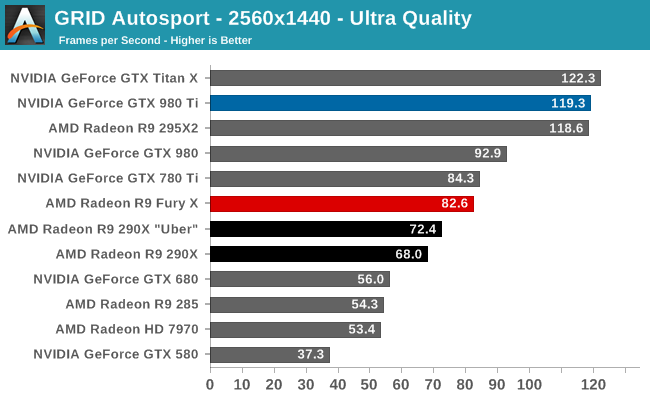

For the racing game in our benchmark suite we have Codemasters’ GRID Autosport. Codemasters continues to set the bar for graphical fidelity in racing games, delivering realistic looking environments layered with additional graphical effects. Based on their in-house EGO engine, GRID Autosport includes a DirectCompute based advanced lighting system in its highest quality settings, which incurs a significant performance penalty on lower-end cards but does a good job of emulating more realistic lighting within the game world.

Unfortunately for AMD, after a streak of wins and ties, things start going off the rails with GRID, very off the rails.

At 4K Ultra this is AMD’s single biggest 4K performance deficit; the card trails the GTX 980 Ti by 14%. The good news is that in the process the card cracks 60fps, so framerates are solid on an absolute basis, though there are still going to be some frames below 60fps for racing purists to contend with.

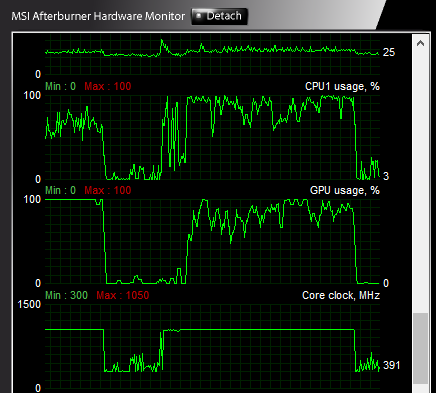

Where things get really bad is at 1440p, in a situation we have never seen before in a high-end AMD video card review. The R9 Fury X gets pummeled here, trailing the GTX 980 Ti by 30%, and even falling behind the GTX 980 and GTX 780 Ti. The reason is that the R9 Fury X is CPU bottlenecked; no matter what resolution we pick, it can’t spit out more than about 82fps at Ultra quality.
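One way to picture this CPU-bound behavior is to treat the delivered framerate as the lower of what the GPU and the CPU side can each sustain. The sketch below is a simplified model under that assumption, not a measurement: the 82fps ceiling is the approximate figure quoted above, while the function name and the GPU-limited values passed in are hypothetical placeholders chosen only to show the shape of the result.

```python
# Simplified bottleneck model: delivered fps is capped by the slower stage.
# CPU_CAP_FPS is the approximate Ultra-quality ceiling quoted in the text;
# everything else here is illustrative, not benchmark data.
CPU_CAP_FPS = 82.0


def delivered_fps(gpu_limited_fps: float, cpu_cap_fps: float = CPU_CAP_FPS) -> float:
    """Return the framerate delivered when either the GPU or the CPU is the limit."""
    return min(gpu_limited_fps, cpu_cap_fps)


# Hypothetical GPU-limited rates, e.g. as resolution is lowered (illustrative only).
for gpu_fps in (60.0, 100.0, 140.0):
    print(f"GPU could render {gpu_fps:.0f}fps -> delivered {delivered_fps(gpu_fps):.0f}fps")
```

Under this model, once the GPU-limited rate rises above the CPU ceiling, lowering the resolution further no longer raises the delivered framerate, which is the pattern described above.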

With GPU performance outgrowing CPU performance year after year, this is something that was due to happen sooner or later, and is a big reason that low-level APIs are about to come into the fold. And if it was going to happen anywhere, it would happen with a flagship level video card. Still, with an overclocked Core i7-4960X driving our testbed, this is also one of the most powerful systems available with respect to CPU performance, so AMD’s drivers are burning an incredible amount of CPU time here.

Ultimately GRID serves to cement our concerns about AMD’s performance at 1440p, as it’s very possible that this is the tip of the iceberg. DirectX 11 will go away eventually, but it will still take some time. In the meantime there are a number of 1440p gamers out there, especially with the R9 Fury X otherwise being such a good fit for high frame rate 1440p gaming. Perhaps the biggest issue here is that this makes it very hard to justify pairing 1440p 144Hz monitors with AMD’s GPUs, as although 82.6fps is fine for a 60Hz monitor, these CPU issues are making it hard for AMD to deliver framerates better suited to those high performance monitors.
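To put the refresh rate point in frame-time terms, the short sketch below converts the 82.6fps result and the 60Hz and 144Hz refresh rates mentioned above into milliseconds per frame. The helper names are hypothetical and the comparison is deliberately simple: it checks only whether the average framerate reaches the refresh rate and ignores frame-time variance and adaptive sync.

```python
def frame_time_ms(fps: float) -> float:
    """Average time spent on one frame, in milliseconds."""
    return 1000.0 / fps


def keeps_up_with(fps: float, refresh_hz: float) -> bool:
    """True if the average framerate is at least the monitor's refresh rate."""
    return fps >= refresh_hz


FURY_X_1440P_FPS = 82.6  # figure quoted in the text

for refresh_hz in (60.0, 144.0):
    budget = frame_time_ms(refresh_hz)  # time available per refresh
    actual = frame_time_ms(FURY_X_1440P_FPS)
    verdict = "keeps up" if keeps_up_with(FURY_X_1440P_FPS, refresh_hz) else "falls short"
    print(f"{refresh_hz:.0f}Hz: budget {budget:.2f}ms, actual {actual:.2f}ms, {verdict}")
```

At 82.6fps each frame takes roughly 12.1ms on average, comfortably inside the 16.7ms budget of a 60Hz display but well over the roughly 6.9ms budget of a 144Hz one.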

458 Comments

testbug00 - Sunday, July 5, 2015 - link

You don't need architecture improvements to use DX12/Vulkan/etc. The APIs merely allow you to implement them over DX11 if you choose to. You can write a DX12 game without optimizing for any GPUs (although, not doing so for GCN given consoles are GCN would be a tad silly).

If developers are aiming to put low level stuff in whenever they can then the issue becomes that due to AMD's "GCN everywhere" approach developers may just start coding for PS4, porting that code to Xbox DX12 and then porting that to PC with higher textures/better shadows/effects. In which case Nvidia could take massive performance deficits to AMD due to not getting the same amount of extra performance from DX12.

Don't see that happening in the next 5 years. At least, not with most games that are console+PC and need huge performance. You may see it in a lot of Indie/small studio cross platform games however.

RG1975 - Thursday, July 2, 2015 - link

AMD is getting there but, they still have a little bit to go to bring us a new "9700 Pro". That card devastated all Nvidia cards back then. That's what I'm waiting for to come from AMD before I switch back.

Thatguy97 - Thursday, July 2, 2015 - link

would you say amd is now the "geforce fx 5800"

piroroadkill - Thursday, July 2, 2015 - link

Everyone who bought a Geforce FX card should feel bad, because the AMD offerings were massively better. But now AMD is close to NVIDIA, it's still time to rag on AMD, huh?

That said, of course if I had $650 to spend, you bet your ass I'd buy a 980 Ti.

Thatguy97 - Thursday, July 2, 2015 - link

oh believe me i remember they felt bad lol but im not ragging on amd but nvidia stole their thunder with the 980 ti

KateH - Thursday, July 2, 2015 - link

C'mon, Fury isn't even close to the Geforce FX level of fail. It's really hard to overstate how bad the FX5800 was, compared to the Radeon 9700 and even the Geforce 4600Ti.

The Fury X wins some 4K benchmarks, the 980Ti wins some. The 980Ti uses a bit less power but the Fury X is cooler and quieter.

Geforce FX level of fail would be if the Fury X was released 3 months from now to go up against the 980Ti with 390X levels of performance and an air cooler.

Thatguy97 - Thursday, July 2, 2015 - link

To be fair the 5950 ultra was actually decent

Morawka - Thursday, July 2, 2015 - link

you're understating nvidia's scores.. they won 90% of all benchmarks, not just "some". a full 120W more power under furmark load and they are using HBM!!

looncraz - Thursday, July 2, 2015 - link

Furmark power load means nothing, it is just a good way to stress test and see how much power the GPU is capable of pulling in a worst-case scenario and how it behaves in that scenario.

While gaming, the difference is minuscule and no one will care one bit.

Also, they didn't win 90% of the benchmarks at 4K, though they certainly did at 1440. However, the real world isn't that simple. A 10% performance difference in GPUs may as well be zero difference, there are pretty much no game features which only require a 10% higher performance GPU to use... or even 15%.

As for the value argument, I'd say they are about even. The Fury X will run cooler and quieter, take up less space, and will undoubtedly improve to parity or beyond the 980Ti in performance with driver updates. For a number of reasons, the Fury X should actually age better, as well. But that really only matters for people who keep their cards for three years or more (which most people usually do). The 980Ti has a RAM capacity advantage and an excellent - and known - overclocking capacity and currently performs unnoticeably better.

I'd also expect two Fury X cards to outperform two 980Ti cards with XFire currently having better scaling than SLI.

chizow - Thursday, July 2, 2015 - link

The differences in minimums aren't minuscule at all, and you also seem to be discounting the fact 980Ti overclocks much better than Fury X. Sure XDMA CF scales better when it works, but AMD has shown time and again, they're completely unreliable for timely CF fixes for popular games to the point CF is clearly a negative for them right now.