GIGABYTE GB-BXi7H-5500 Broadwell BRIX Review

by Ganesh T S on January 29, 2015 7:00 AM EST

Power Consumption and Thermal Performance

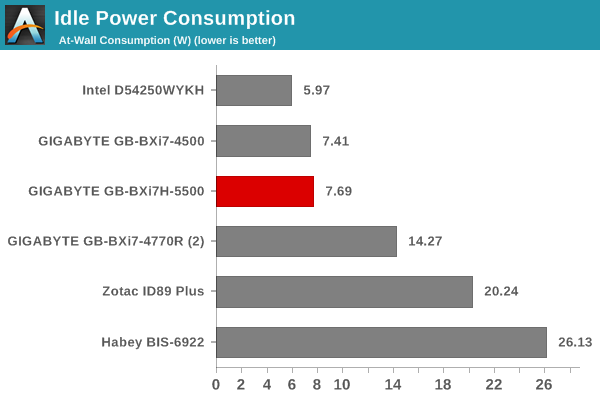

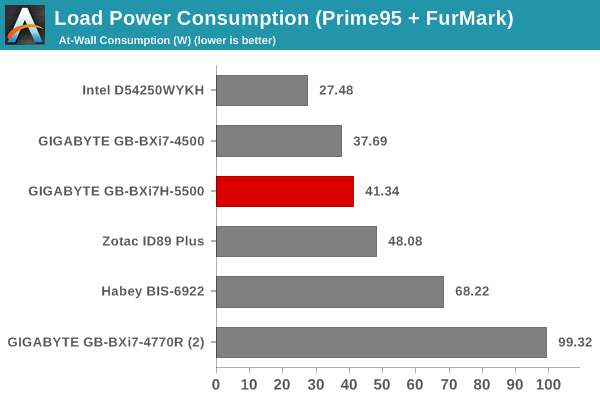

The power consumption at the wall was measured with a 1080p display driven through the HDMI port. The graphs in this section compare the idle and load power of the GIGABYTE GB-BXi7H-5500 with other low-power PCs we have evaluated before. For load power consumption, we ran Furmark 1.15.0 and Prime95 v28.5 together. The numbers are reasonable for the combination of hardware components in the machine.

The Core i7-5500U's slightly higher base clock (compared to the Core i7-4500U) is probably why the Haswell-based unit appears more power efficient than its Broadwell counterpart. Make no mistake, though: the Broadwell unit wins the performance-per-watt comparison quite easily.
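Performance per watt is a benchmark score divided by the average wall power drawn while producing it, so a unit that draws more power can still come out ahead if its score rises faster than its power draw. The sketch below uses a hypothetical helper, `perf_per_watt`, and placeholder numbers that are purely illustrative, not results from this review.

```python
def perf_per_watt(score: float, watts: float) -> float:
    """Benchmark score per watt of wall power (higher is better)."""
    if watts <= 0:
        raise ValueError("power must be positive")
    return score / watts

# Hypothetical placeholder values for illustration only, NOT measured results.
haswell = perf_per_watt(score=100.0, watts=25.0)    # 4.0 points/W
broadwell = perf_per_watt(score=130.0, watts=26.0)  # 5.0 points/W

print(f"Haswell:   {haswell:.2f} points/W")
print(f"Broadwell: {broadwell:.2f} points/W")
print("Broadwell more efficient:", broadwell > haswell)
```

In this made-up case the second unit draws 1 W more yet scores 30% higher, so it wins on efficiency despite the higher absolute power.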

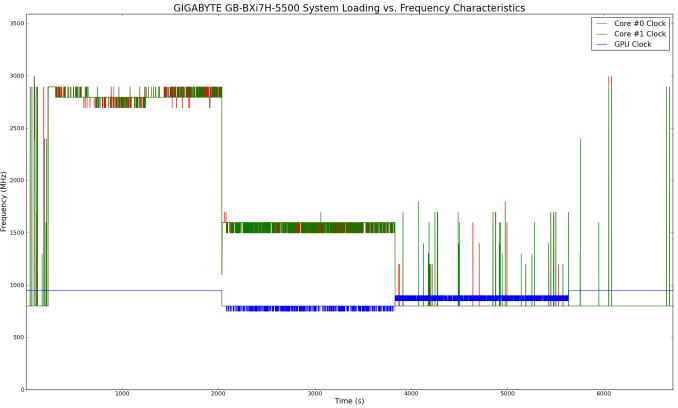

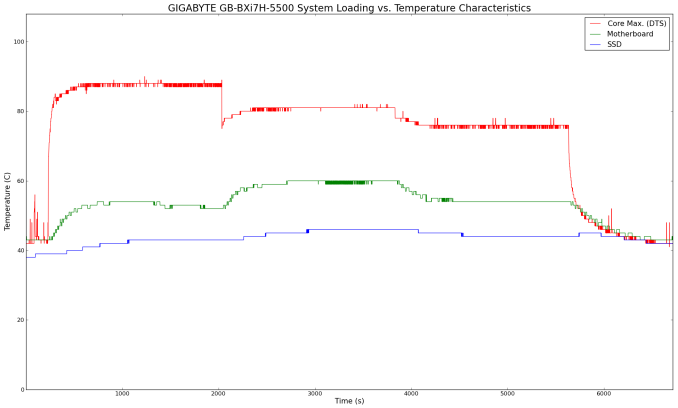

To evaluate thermal performance, we monitored the various clocks in the system, as well as its temperatures, while subjecting the unit to the following workload. We start with the system at idle, followed by 30 minutes of pure CPU loading. Next come 30 minutes with both the CPU and GPU loaded simultaneously. Finally, the CPU load is removed and the GPU alone is loaded for another 30 minutes.
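The workload schedule can be written down as a simple phase table. This is a minimal sketch with hypothetical names (`Phase`, `PHASES`, `phase_at`); it assumes a 30-minute idle period at the start, since the review does not state how long the idle phase lasts. It maps an elapsed time in minutes to the load that should be active.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Phase:
    name: str
    minutes: int
    cpu_load: bool
    gpu_load: bool

# Assumption: the idle duration is not given in the review; 30 minutes is a placeholder.
PHASES = [
    Phase("idle", 30, cpu_load=False, gpu_load=False),
    Phase("CPU only", 30, cpu_load=True, gpu_load=False),
    Phase("CPU + GPU", 30, cpu_load=True, gpu_load=True),
    Phase("GPU only", 30, cpu_load=False, gpu_load=True),
]

def phase_at(elapsed_min: float) -> Phase:
    """Return the phase active at a given elapsed time (minutes from start)."""
    if elapsed_min < 0:
        raise ValueError("elapsed time must be non-negative")
    boundary = 0
    for phase in PHASES:
        boundary += phase.minutes  # end of this phase, in minutes from start
        if elapsed_min < boundary:
            return phase
    raise ValueError("elapsed time is past the end of the test")

for t in (10, 45, 75, 105):
    print(t, phase_at(t).name)
```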

In the pure CPU loading scenario, the core frequencies stay well above the rated base clock of 2.4 GHz, indicating GIGABYTE's trust in its cooling solution. The core temperature doesn't cross 90 C during this time (the maximum junction temperature is 105 C). On the other hand, when the CPU and GPU are both loaded, the core frequencies drop to around 1.6 GHz. The GPU is advertised with a base clock of 300 MHz and a turbo clock of 950 MHz; its actual frequency stays comfortably above 800 MHz throughout our stress test. In the absence of any CPU load, the cores drop to 800 MHz. Temperatures also stay below 80 C throughout the period in which the GPU is loaded.
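Observations like these (minimum core clock versus the 2.4 GHz base, peak temperature versus the 105 C junction limit) can be pulled out of a monitoring log by summarizing samples per phase. A minimal sketch: the `Sample` record format and the `summarize` function are hypothetical, and the demo samples are synthetic, not data from this review.

```python
from collections import defaultdict
from typing import Iterable, NamedTuple

BASE_CLOCK_MHZ = 2400  # Core i7-5500U base clock, as stated in the review
TJ_MAX_C = 105         # junction temperature limit, as stated in the review

class Sample(NamedTuple):
    phase: str        # e.g. "CPU only", "CPU + GPU"
    core_mhz: float   # core clock at sampling time
    temp_c: float     # core temperature at sampling time

def summarize(samples: Iterable[Sample]) -> dict:
    """Per phase: min core clock, max temperature, and two threshold checks."""
    clocks = defaultdict(list)
    temps = defaultdict(list)
    for s in samples:
        clocks[s.phase].append(s.core_mhz)
        temps[s.phase].append(s.temp_c)
    report = {}
    for phase in clocks:
        min_clock = min(clocks[phase])
        max_temp = max(temps[phase])
        report[phase] = {
            "min_core_mhz": min_clock,
            "max_temp_c": max_temp,
            "holds_base_clock": min_clock >= BASE_CLOCK_MHZ,
            "headroom_c": TJ_MAX_C - max_temp,  # margin below the junction limit
        }
    return report

# Synthetic samples for illustration only (NOT measurements from this review).
demo = [
    Sample("CPU only", 2700, 86), Sample("CPU only", 2600, 89),
    Sample("CPU + GPU", 1600, 78), Sample("CPU + GPU", 1700, 76),
]
for phase, stats in summarize(demo).items():
    print(phase, stats)
```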

All in all, the thermal solution is very effective. Given that the fan noise was (subjectively) not irksome, we wonder whether GIGABYTE missed a trick by dialing down the clocks instead of allowing the full performance potential of the system to come through.

53 Comments

gonchuki - Thursday, January 29, 2015

Please define "decimates". In non-OpenCL and non-GPU-bound tests, it's a 5-7% win at most, which can easily be explained by the CPU cores' 33% higher base clock, plus the die shrink that allows for better thermals (more headroom for higher turbo boost bins).

All of the test results point to Broadwell having exactly the same IPC as Haswell in all situations. If anything improved, it can only be because of the new stepping, which might have fixed some errata.
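The reasoning in the comment above rests on a simple model: performance is roughly IPC multiplied by clock frequency. Under that simplification (which ignores memory, cache, and turbo residency effects), the implied IPC ratio is the performance ratio divided by the effective clock ratio. A sketch with a hypothetical helper name; the 6% figures are illustrative, not taken from the benchmarks.

```python
def implied_ipc_ratio(perf_ratio: float, clock_ratio: float) -> float:
    """IPC(new) / IPC(old), assuming performance = IPC * clock."""
    return perf_ratio / clock_ratio

# Base clocks: Core i7-5500U 2.4 GHz, Core i7-4500U 1.8 GHz.
base_ratio = 2.4 / 1.8
print(f"Base clock advantage: {base_ratio - 1:.0%}")  # 33%

# Both parts share a 3.0 GHz maximum turbo, so under turbo the effective
# clock ratio can be much closer to 1.0 than the base ratio suggests.
# Illustrative: a 6% gain at equal effective clocks implies ~6% more IPC;
# the same 6% gain at a 6% clock advantage implies no IPC change.
print(f"{implied_ipc_ratio(1.06, 1.00):.2f}")  # 1.06
print(f"{implied_ipc_ratio(1.06, 1.06):.2f}")  # 1.00
```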

nathanddrews - Thursday, January 29, 2015

Agreed, the word "decimates" is a bit extreme, but I consider anything in the 10-20% range to be significantly better.

Refuge - Thursday, January 29, 2015

These days I agree; long gone are the days of Sandy Bridge... 'Tis a shame, they were fun.

Laststop311 - Friday, January 30, 2015

I'm glad someone else noticed this. In the benchmarks that strictly use only the CPU, Broadwell is barely, and I mean barely, any faster than its Haswell equivalent. Sure, the GPU is a pretty decent improvement, but who cares about Intel's integrated GPUs? Anyone who relies heavily on an integrated GPU is going to get an APU from AMD. The only reason the GPU improved so much is that it was such a poor performer to begin with; it's a lot easier to improve a weak part than something already highly optimized, like the CPU.

This is bad news for people using desktops with discrete GPUs who were hoping Broadwell would be a decent boost. In those situations the iGPU means nothing, so it's no big deal that it got better. This also means Broadwell-E is going to really suck and be basically identical to Haswell-E. There's almost no reason to even bother designing Broadwell-E chips: since they don't even use an iGPU, there is no performance increase at all to talk about.

The silver lining, though, is that we get to save money for another year. With Intel under no pressure, we can save our money until there is a real performance boost. Basically, anyone with an i7-920 or higher doesn't have to spend money on a PC upgrade until maybe Skylake/Skylake-E, MAYBE; Intel has put out underwhelming tocks lately as well. My X58 i7-980X system still has no CPU bottleneck. This allowed me to buy a 55" LG OLED TV. I used to buy a new PC every 2-3 years before the Core i7 series; then, all of a sudden, performance upgrades became pathetic and my new-PC fund built up. When I found the OLED TV for 3000, I figured why not, I can easily go another couple of years with the same PC. So thanks, Intel, for making no progress: I got a new OLED TV.

BrokenCrayons - Friday, January 30, 2015

I care about iGPU benchmarks: the computer I use for gaming has an Intel HD 3000, and it probably will for at least another year before I even think about an upgrade. Having dedicated graphics in my laptop seems pointless when I can just wait 5-7 years or so to play a game, by which time it's fully patched, usually available with all of its DLC for very little cost, and runs well on something that doesn't need a higher-end graphics processor. So yes, for serious gaming, iGPUs are fine if you manage expectations and play things your computer can easily handle.

purerice - Saturday, January 31, 2015

BrokenCrayons, agreed 100%!! I recently upgraded from Merom to Ivy Bridge myself. There are tons of games now selling for $5-$10 that wouldn't run on Merom back when they cost $40-$60. In addition to being patched and DLC'd, they have guides and walkthroughs to get you through any of the "less awesome" parts. More money saved for real life, and less frustration interrupting the gaming. Patience pays indeed.

Seeing the ~20% boost over the 4500U in Ice Storm and Cinebench OpenGL was actually exciting, even if it's still below the performance that 90% of other AnandTech users currently have.

DrMrLordX - Tuesday, February 3, 2015

Decimate means to destroy a tenth of something. All things considered, I'd rather be decimated than . . . you know, devastated, or annihilated.

DanNeely - Thursday, January 29, 2015

While I agree that replacing 1280x1024 is past due, I disagree with picking 1280x720. Back when it was picked, 1280x1024 was the most common resolution on low-end monitors. Today the default low-end resolution is 1366x768 (26.65% on Steam); it's also the second most commonly used one (after 1080p).

Oxford Guy - Thursday, January 29, 2015

Agreed. It's pretty silly to "replace" a higher resolution with a lower one.

frozentundra123456 - Saturday, January 31, 2015

I would disagree. It is quite reasonable, because many laptops use 768p, as well as cheap TVs.
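The resolution debate in this thread comes down to pixel counts, which are easy to compare directly. A minimal sketch (the dictionary and the `pixels` helper are illustrative only) that expresses each candidate resolution as a fraction of the old 1280x1024 setting:

```python
RESOLUTIONS = {
    "1280x1024": (1280, 1024),
    "1366x768": (1366, 768),
    "1280x720": (1280, 720),
}

def pixels(width: int, height: int) -> int:
    """Total pixel count for a given resolution."""
    return width * height

baseline = pixels(*RESOLUTIONS["1280x1024"])
for name, (w, h) in RESOLUTIONS.items():
    n = pixels(w, h)
    print(f"{name}: {n:,} pixels ({n / baseline:.0%} of 1280x1024)")
# 1280x1024: 1,310,720 pixels (100% of 1280x1024)
# 1366x768: 1,049,088 pixels (80% of 1280x1024)
# 1280x720: 921,600 pixels (70% of 1280x1024)
```

Both widescreen candidates push fewer pixels than 1280x1024, with 1366x768 sitting about ten percentage points closer to the old setting than 1280x720.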