The AMD Radeon R9 290X Review

by Ryan Smith on October 24, 2013 12:01 AM EST - Posted in

- GPUs

- AMD

- Radeon

- Hawaii

- Radeon 200

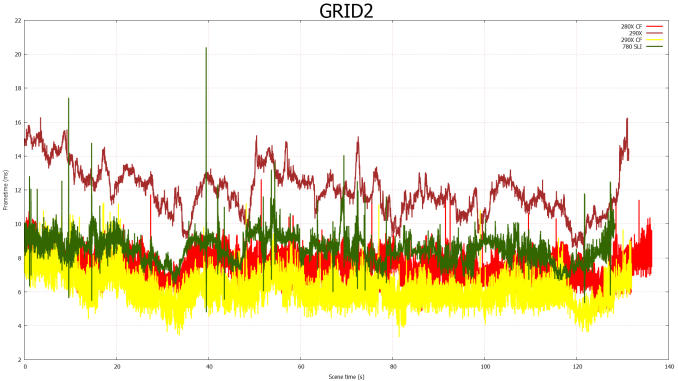

GRID 2

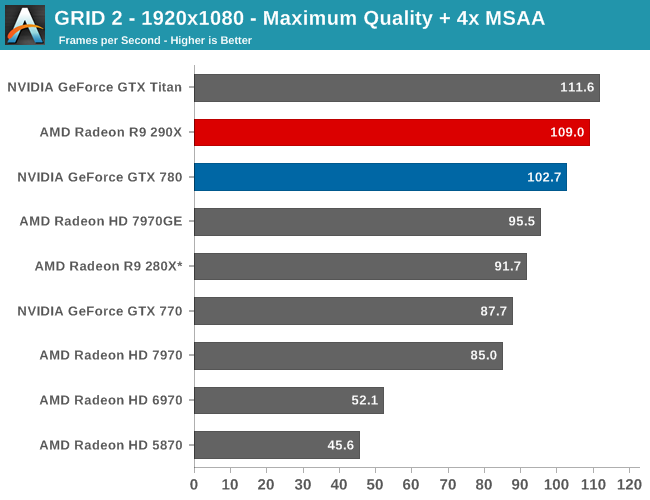

The final game in our benchmark suite is also our racing entry, Codemasters’ GRID 2. Codemasters continues to set the bar for graphical fidelity in racing games, and with GRID 2 they’ve gone back to racing on the pavement, bringing to life cities and highways alike. Based on their in-house EGO engine, GRID 2 includes a DirectCompute-based advanced lighting system in its highest quality settings, which incurs a significant performance penalty but does a good job of emulating more realistic lighting within the game world.

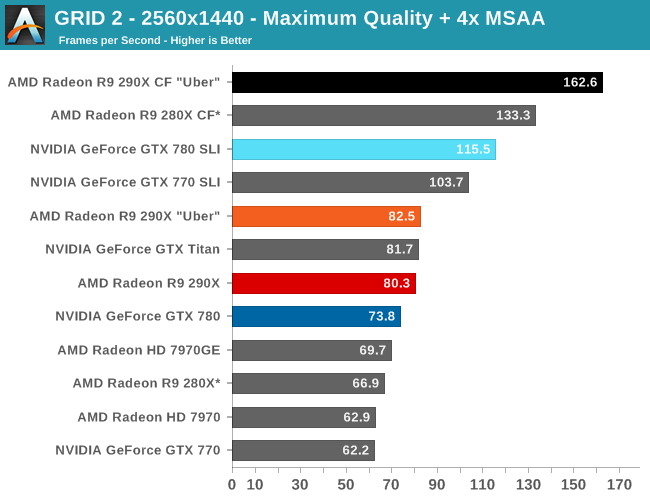

For as good looking as GRID 2 is, it continues to surprise us just how easy it is to run with everything cranked up, even the DirectCompute lighting system and MSAA (Forward Rendering for the win!). At 2560 the 290X holds a 9% performance advantage, but the difference is somewhat academic since it’s 80fps versus 74fps, placing both well above 60fps, though 120Hz gamers may still find the gap of interest.

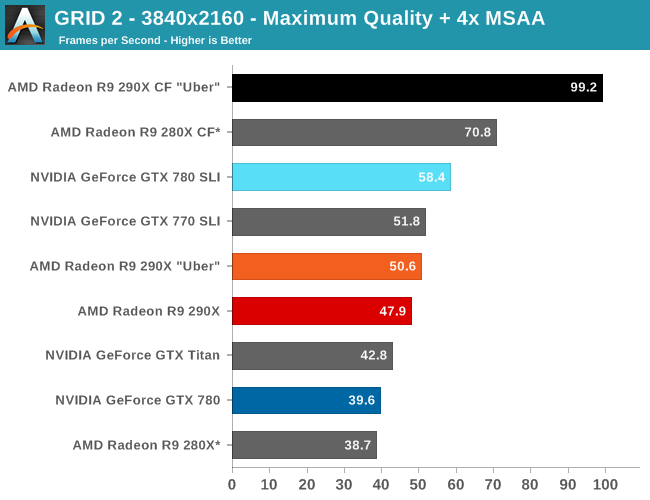

Moving up to 4K, we can still keep everything turned up, including MSAA, while pulling off respectable single-GPU framerates and great multi-GPU framerates. To no surprise at this point, the 290X further extends its lead at 4K to 21%, but as is usually the case, you really want two GPUs here to get the best framerates. In that case the 290X CF is the runaway winner, achieving a scaling factor of 96% at 4K versus NVIDIA’s 47%, and 97% versus 57% at 2560. This means the GTX 780 SLI is going to fall just short of 60fps once more at 4K, leaving the 290X CF alone at 99fps.
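The scaling factors above are easiest to read as the percentage gain of the dual-GPU framerate over a single GPU, so 100% would mean a perfect doubling. A minimal Python sketch of that arithmetic follows; the formula is an assumption about how such figures are typically derived, and the function names and framerates are hypothetical rather than results taken from these charts.

```python
def scaling_factor(single_gpu_fps: float, multi_gpu_fps: float) -> float:
    """Return the multi-GPU gain over a single GPU, as a percentage.

    Assumed definition: (multi / single - 1) * 100, so 100% means the
    second GPU exactly doubles the framerate and 0% means no gain.
    """
    if single_gpu_fps <= 0:
        raise ValueError("single-GPU framerate must be positive")
    return (multi_gpu_fps / single_gpu_fps - 1.0) * 100.0


def implied_multi_gpu_fps(single_gpu_fps: float, scaling_pct: float) -> float:
    """Invert the formula: the dual-GPU framerate a given scaling factor implies."""
    return single_gpu_fps * (1.0 + scaling_pct / 100.0)


if __name__ == "__main__":
    # Hypothetical framerates, chosen only to illustrate the arithmetic.
    print(f"{scaling_factor(50.0, 98.0):.0f}%")  # 96%
    print(f"{scaling_factor(40.0, 58.8):.0f}%")  # 47%
```

Under this definition a 96% scaling factor nearly doubles the single-GPU result, while 47% adds less than half again, which is why the two multi-GPU setups end up on opposite sides of 60fps at 4K.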

Unfortunately for AMD, the combination of their drivers and GRID 2 currently blows a gasket at 4K @ 60Hz: GRID 2 immediately crashes when trying to load with 4K/Eyefinity enabled. We can still test at 30Hz, but those stellar 4K framerates aren’t going to be usable for gaming until AMD and Codemasters get that bug sorted out.

Finally, it’s interesting to note that this is the game where the 290X gains the least on the 280X. The 290X’s performance advantage here is just 20%, 5 percentage points lower than in any other game and 10 points lower than the average. The framerates at 2560 are high enough that this isn’t quite as important as in other games, but it does show that the 290X isn’t always going to maintain its 30% average lead over its predecessor.

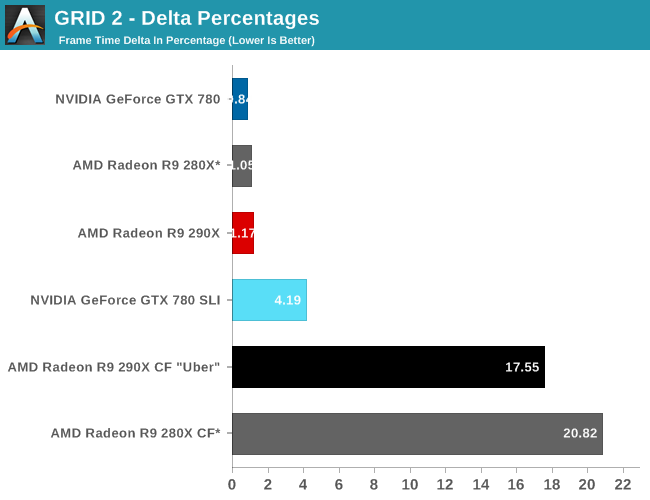

Without any capturable 4K FCAT frametimes, we’re left with the delta percentages at 2560, which, more so than in any other game, are simply not in AMD’s favor. The GTX 780 SLI is extremely consistent here, almost absurdly so for a multi-GPU setup: 4% is the kind of variance we expect to find with a single-GPU setup, not something incorporating multiple GPUs. AMD on the other hand, though improving over the 280X by a few percent, is merely adequate at 17%. The low frame times will further reduce the real-world impact of the difference between the GTX 780 SLI and 290X CF here, but this is another game where AMD could stand to make some improvements, even if it costs the 290X some of its very strong CF scaling factor.
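The text does not spell out how a delta percentage is computed. The sketch below assumes it is the mean absolute difference between consecutive frame times divided by the mean frame time, which captures why a steady setup scores low while one that alternates short and long frames (microstutter) scores high even at a similar average framerate. The function name and the frame-time samples are hypothetical.

```python
from statistics import mean


def delta_percentage(frame_times_ms: list[float]) -> float:
    """Frame-to-frame variance as a percentage of the mean frame time.

    Assumed definition, for illustration only: the mean absolute change
    between consecutive frame times, divided by the mean frame time.
    """
    if len(frame_times_ms) < 2:
        raise ValueError("need at least two frame times")
    # Absolute change from each frame to the next one.
    deltas = [abs(b - a) for a, b in zip(frame_times_ms, frame_times_ms[1:])]
    return mean(deltas) / mean(frame_times_ms) * 100.0


# Hypothetical samples with similar average framerates (~80fps).
steady = [12.5, 12.0, 12.5, 12.0, 12.5, 12.0]  # near-constant pacing
uneven = [10.0, 15.0, 10.0, 15.0, 10.0, 15.0]  # alternating short/long frames
print(f"steady: {delta_percentage(steady):.1f}%")  # steady: 4.1%
print(f"uneven: {delta_percentage(uneven):.1f}%")  # uneven: 40.0%
```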

396 Comments


Sandcat - Friday, October 25, 2013

That depends on what you define as 'acceptable frame rates'. Yeah, you do need a $500 card if you have a high refresh rate monitor and use it for 3D games, or just for improved smoothness in non-3D games. A single 780 is required with my brother's 144Hz Asus monitor to get ~90 fps (i7-930 @ 4.0GHz) in BF3 on Ultra with MSAA. The 290X almost requires liquid cooling... the noise is offensive. Kudos to those with the equipment, but really, AMD cheaped out on the cooler in order to hit the price point. Good move, IMHO, but too loud for me.

hoboville - Thursday, October 24, 2013

Yup, and it's hot. It will be worth buying once the manufacturers can add their own coolers and heat pipes. AMD has always been slower at lower res, but better in the 3x1080p to 6x1080p arena. They have always aimed for high-bandwidth memory, which always performs better at high res. This is good for you as a buyer because it means you'll get better scaling at high res. It's essentially forward-looking tech, which is good for those who will be upgrading monitors in the next few years when 1440p IPS starts to be more affordable. At low res the bottleneck isn't RAM, but compute power. Regardless, buying a Titan / 780 / 290X for anything less than 1440p is silly; you'll be way past the 60-70 fps human eye limit anyway.

eddieveenstra - Sunday, October 27, 2013

Maybe 60-70fps is the limit, but at 120Hz, 60FPS will give noticeable lag. 75 is about the minimum. That, or I have eagle eyes. The GTX 780 still dips to low framerates at 120Hz (1920x1080). So the whole debate about the Titan or 780 being overkill @1080p is just nonsense. (GTX 780 120Hz gamer here)

hoboville - Sunday, October 27, 2013

That really depends a lot on your monitor. When they talked about G-Sync, frame lag, and smoothness, they mentioned that when FPS doesn't exactly match the refresh rate you get latency and bad frame timing. That you have this problem with a 120 Hz monitor is no surprise, as at anything less than 120 FPS you'll see some form of stuttering. When FPS > refresh rate, you won't notice this. At home I use a 2048x1152 @ 60 Hz monitor, and beyond 60 FPS all the extra frames are dropped, whereas in your case some frames will "hang" when you are getting less than 120 FPS, because they have to "sit" on the screen for an extra interval until the next one is displayed. This appears as stuttering, and you need a higher FPS from the game for the frame delivery to appear smoother. This is because apparent delay decreases as the ratio of delivered frames (FPS) to monitor refresh rate increases. Once the ratio is high enough, you can no longer detect apparent delay. In essence, 120 Hz was a bad idea unless you get G-Sync (which means a new monitor). Get a good 1440p IPS at 60 Hz and you won't have that problem, and the image fidelity will make you wonder why you ever bought a monitor with 56% of 1440p's pixels in the first place...
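The "hanging" frames described above can be illustrated with a simplified model, assuming a perfectly steady render rate and a fixed-refresh display (no G-Sync) that shows the newest finished frame at each refresh. The function name and parameters are hypothetical; this is a sketch of the idea, not how any particular driver actually paces frames.

```python
def hold_times_ms(fps: int, refresh_hz: int, refreshes: int = 12) -> list[float]:
    """How long each newly shown frame stays on screen, in milliseconds.

    Simplified model (an assumption): frames finish at a perfectly steady
    rate, and at every refresh the display shows the newest finished frame.
    The last frame is left out because its hold time is still incomplete.
    """
    holds = []
    last_frame = -1  # index of the frame currently on screen
    shown_for = 0    # refresh intervals the current frame has been on screen
    for tick in range(refreshes):
        # Newest frame completed by this refresh: floor(tick * fps / refresh_hz).
        newest = tick * fps // refresh_hz
        if newest != last_frame:
            if last_frame >= 0:
                holds.append(shown_for)
            last_frame, shown_for = newest, 0
        shown_for += 1
    interval_ms = 1000 / refresh_hz
    return [round(n * interval_ms, 2) for n in holds]


print(hold_times_ms(90, 120))
# [16.67, 8.33, 8.33, 16.67, 8.33, 8.33, 16.67, 8.33]
```

At 90 fps on a 120Hz display, every third frame stays up twice as long as the others, which is the uneven delivery described above. At 120 fps on the same display, or at 90 fps on a 60Hz display, every hold time in this model is identical.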

eddieveenstra - Sunday, October 27, 2013

To be honest, I would never think about going back to 60Hz. I love 120Hz but don't know a thing about IPS monitors. Thanks for the response... Just checked it and that sounds good. When they become more affordable I will start thinking about that. It seems like IPS monitors are better with colors and have less blur @60Hz than TN. Link: http://en.wikipedia.org/wiki/IPS_panel

Spunjji - Friday, October 25, 2013

Step 1) Take data irrespective of different collection methods.

Step 2) Perform average of data.

Step 3) Completely useless results!

Congratulations, sir; you have broken Science.

nutingut - Saturday, October 26, 2013

But who cares if you can play at 90 vs 100 fps?

MousE007 - Thursday, October 24, 2013

Very true, but remember, the only reason NVIDIA prices their cards where they are is because they could (e.g. Intel CPUs vs. AMD). Having said that, I truly welcome the competition, as it makes things better for all of us, regardless of which side of the fence you sit on.

valkyrie743 - Thursday, October 24, 2013

The card runs at 95C and sucks power like there's no tomorrow. It only beats the 780 by a very little, and it does not overclock well.

http://www.youtube.com/watch?v=-lZ3Z6Niir4

and

http://www.youtube.com/watch?v=3OHKWMgBhvA

http://www.overclock3d.net/reviews/gpu_displays/am...

I like his review. It's pure, honest, and shows the facts. I'm not an NVIDIA fanboy, nor am I an AMD fanboy, but I'll take NVIDIA right now over AMD.

I do like how this card is priced and the performance for the price. It makes the Titan not worth 1000 bucks (or the 850 bucks it goes for used on forums). But as for the 780, if you get a non-reference 780, it will be faster than the 290X and put out LESS heat and LESS noise, as well as use less power.

Plus, the GTX 780 Ti is coming out in mid-November, which will probably cut the cost of the current 780 to $550, and the 780 Ti would probably be around $600 and beat this card even more.

jljaynes - Friday, October 25, 2013

You say the review sticks with the facts: he starts off talking about how ugly the card is, so it needs to beat a Titan, and then in the next sentence he says the R9 290X will cost $699. He sure seems to stick with the facts.