The Crucial/Micron M500 Review (960GB, 480GB, 240GB, 120GB)

by Anand Lal Shimpi on April 9, 2013 9:59 AM EST

Performance Consistency

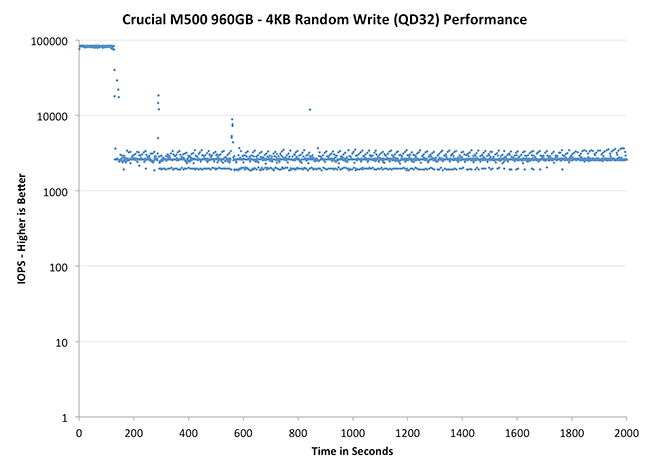

In our Intel SSD DC S3700 review I introduced a new method of characterizing performance: looking at the latency of individual operations over time. The S3700 promised a level of performance consistency that was unmatched in the industry, and as a result needed some additional testing to show that. SSDs don't deliver consistent IO latency because all controllers inevitably have to do some amount of defragmentation or garbage collection in order to continue operating at high speeds. When and how an SSD decides to run its defrag and cleanup routines directly impacts the user experience. Frequent (borderline aggressive) cleanup generally results in more stable performance, while delaying that cleanup can result in higher peak performance at the expense of much lower worst case performance. The graphs below tell us a lot about the architecture of these SSDs and how they handle internal defragmentation.

To generate the data below I took a freshly secure erased SSD and filled it with sequential data. This ensures that all user accessible LBAs have data associated with them. Next I kicked off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. I ran the test for just over half an hour, nowhere near what we run our steady state tests for, but enough to give me a good look at drive behavior once all spare area filled up.

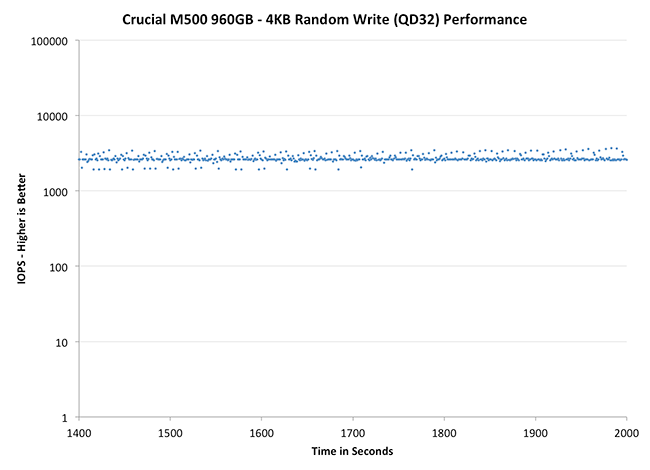

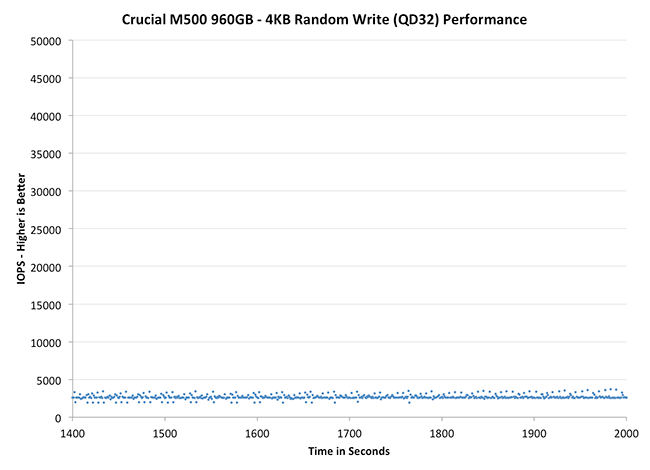

I recorded instantaneous IOPS every second for the duration of the test. I then plotted IOPS vs. time and generated the scatter plots below. Each set of graphs features the same scale. The first two sets use a log scale for easy comparison, while the last set of graphs uses a linear scale that tops out at 40K IOPS for better visualization of differences between drives.
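For readers who want to put numbers on this kind of per-second IOPS log, here is a minimal sketch. It assumes the samples are already available as a plain list with one value per second; the function name `summarize_iops` and the choice of coefficient of variation as a consistency measure are illustrative assumptions, not part of the original test setup.

```python
from statistics import mean, pstdev


def summarize_iops(samples, steady_start=1400):
    """Summarize a per-second IOPS log.

    samples: list of IOPS values, where index i is the sample taken
             i seconds after the test started (an assumed layout).
    steady_start: second at which steady state is assumed to begin.
    """
    steady = samples[steady_start:]
    if not steady:
        raise ValueError("no samples at or after steady_start")
    avg = mean(steady)
    return {
        "peak": max(samples),          # best second of the whole run
        "steady_min": min(steady),     # performance floor in steady state
        "steady_mean": avg,            # average steady-state performance
        # Lower coefficient of variation means more consistent performance.
        "steady_cv": pstdev(steady) / avg if avg else float("inf"),
    }
```

A drive with a high steady-state mean but a large coefficient of variation would show up as a wide band in the scatter plots, while a consistent drive would show a tight one.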

The high level testing methodology remains unchanged from our S3700 review. Unlike in previous reviews however, I did vary the percentage of the drive that I filled/tested depending on the amount of spare area I was trying to simulate. The buttons are labeled with the advertised user capacity had the SSD vendor decided to use that specific amount of spare area. If you want to replicate this on your own all you need to do is create a partition smaller than the total capacity of the drive and leave the remaining space unused to simulate a larger amount of spare area. The partitioning step isn't absolutely necessary in every case but it's an easy way to make sure you never exceed your allocated spare area. It's a good idea to do this from the start (e.g. secure erase, partition, then install Windows), but if you are working backwards you can always create the spare area partition, format it to TRIM it, then delete the partition. Finally, this method of creating spare area works on the drives we've tested here but not all controllers may behave the same way.
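The partition sizing is simple arithmetic, sketched below. This assumes that the spare area percentage is expressed as a fraction of the raw NAND on the drive, and that you know that raw figure; the helper name `partition_size_gib` is hypothetical.

```python
def partition_size_gib(raw_nand_gib: float, spare_fraction: float) -> float:
    """Return the partition size (GiB) that leaves `spare_fraction`
    of the raw NAND unused.

    Assumption: spare area is counted against raw NAND capacity,
    so any factory overprovisioning is part of the spare fraction.
    """
    if not 0.0 <= spare_fraction < 1.0:
        raise ValueError("spare_fraction must be in [0, 1)")
    return raw_nand_gib * (1.0 - spare_fraction)


if __name__ == "__main__":
    # A drive with 256 GiB of raw NAND and 25% spare area.
    print(partition_size_gib(256, 0.25))
```

Under that assumption, a drive with 256 GiB of NAND would get a 192 GiB partition for 25% spare area.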

The first set of graphs shows the performance data over the entire 2000 second test period. In these charts you'll notice an early period of very high performance followed by a sharp dropoff. What you're seeing in that case is the drive allocating new blocks from its spare area, then eventually using up all free blocks and having to perform a read-modify-write for all subsequent writes (write amplification goes up, performance goes down).
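To see why performance falls once free blocks run out, a deliberately simplified model helps. It is not taken from any vendor's firmware: it assumes every block the controller reclaims still holds the same fraction of valid pages, all of which must be copied elsewhere before the block can be erased. The function name `write_amplification` is illustrative.

```python
def write_amplification(valid_fraction: float) -> float:
    """Simplified steady-state write amplification.

    valid_fraction: share of pages in a reclaimed block that are still
                    valid and must be rewritten before the erase.

    Each reclaimed block frees only (1 - valid_fraction) of its pages
    for new host data, so the NAND absorbs 1 / (1 - valid_fraction)
    page writes per host page write.
    """
    if not 0.0 <= valid_fraction < 1.0:
        raise ValueError("valid_fraction must be in [0, 1)")
    return 1.0 / (1.0 - valid_fraction)
```

In this model a drive whose reclaimed blocks are half valid writes two pages to NAND for every page the host writes. More spare area tends to lower the valid fraction of the blocks being reclaimed, which is why the extra spare area configurations generally sustain higher IOPS.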

The second set of graphs zooms in to the beginning of steady state operation for the drive (t=1400s). The third set also looks at the beginning of steady state operation but on a linear performance scale.

[Graph set 1: IOPS vs. time over the full test period, log scale. Drives: Corsair Neutron 240GB, Crucial m4 256GB, Crucial M500 960GB, Plextor M5 Pro Xtreme 256GB, Samsung SSD 840 Pro 256GB. Configurations: Default, 25% Spare Area.]

Like most consumer drives, the M500 exhibits the same pattern of awesome performance for a short while before substantial degradation. The improvement over the m4 is just insane though. Whereas the M500 sees its floor at roughly 2600 IOPS, the m4 will drop down to as low as 28 IOPS. That's slower than mechanical hard drive performance and around the speed of random IO in a mainstream ARM-based tablet. To say that Crucial has significantly improved IO consistency from the m4 to the M500 would be an understatement.

Plextor's M5 Pro is an interesting comparison because it uses the same Marvell 9187 controller. While both drives attempt to be as consistent as possible, you can clearly see the differences in firmware and garbage collection routines in these charts. Plextor's performance is both more consistent and higher than the M500's.

The 840 Pro comparison is interesting because Samsung manages better average performance, but has considerably worse consistency compared to the M500. The 840 Pro does an amazing job with 25% additional spare area however, something that can't be said for the M500. Although performance definitely improves with 25% spare area, the gains aren't as dramatic as what happens with Samsung. Although I didn't have time to run through additional spare area points, I do wonder if we might see better improvements with even more spare area when you take into account that ~7% of the 25% spare area is reserved for RAIN.

[Graph set 2: IOPS vs. time from the beginning of steady state (t=1400s), log scale. Drives: Corsair Neutron 240GB, Crucial m4 256GB, Crucial M500 960GB, Plextor M5 Pro Xtreme 256GB, Samsung SSD 840 Pro 256GB. Configurations: Default, 25% Spare Area.]

I am relatively pleased by the M500's IO consistency without any additional overprovisioning. I suspect that anyone investing in a 960GB SSD would want to use as much of it as possible. At least in the out of box scenario, the M500 does better than the 840 Pro from a consistency standpoint. None of these drives holds a candle to Corsair's Neutron however. The Neutron's LAMD controller shows its enterprise roots and delivers remarkably high and consistent performance out of the box.

[Graph set 3: IOPS vs. time from the beginning of steady state (t=1400s), linear scale topping out at 40K IOPS. Drives: Corsair Neutron 240GB, Crucial m4 256GB, Crucial M500 960GB, Plextor M5 Pro Xtreme 256GB, Samsung SSD 840 Pro 256GB. Configurations: Default, 25% Spare Area.]

111 Comments


BHSPitMonkey - Tuesday, April 9, 2013

Correction: "securily" should read "securely" in the section about encryption.

iaco - Tuesday, April 9, 2013

Only 72 TB of writes? That must be a mistake. That's even worse than Samsung's TLC NAND with 1000 write cycles. At 500 GB, 1000 cycles is equal to 500 TB. 3000 cycles for MLC NAND is 1500 TB. Anand, please tell me the spec is wrong, otherwise this drive is not worth the price.

Anand Lal Shimpi - Tuesday, April 9, 2013

That's directly from the M500 datasheet. Note that Intel rates the 335 at 20GB of writes per day for 3 years or 21.9TB but explicitly calls that out as a minimum endurance. I suspect that's what this 72TB rating is as well. Samsung doesn't publish similar numbers for the 840 and everyone comes up with their endurance numbers in different ways so they wouldn't likely be comparable either.

The NAND is no less reliable than previous 20nm versions, so I have no reason to believe we won't see significantly longer lifespan out of the M500 than just 72TB of writes.

Take care,

Anand

microlithx - Tuesday, April 9, 2013

If you look at Micron's data sheets, particularly at the enterprise SATA SSDs, you'll see they report 7 PB. They won't guarantee it but they'll probably reach that if you overprovision accordingly.

NotablePerson - Tuesday, April 9, 2013

What I'm confused about is how the 72TB endurance rating is the same across the board for all four of the SSDs. Shouldn't there be at least SOME variance in their ratings on account of the additional NAND?

Kristian Vättö - Tuesday, April 9, 2013

I don't have the datasheet with me (I'm travelling this week) but that 72TB was not sequential writes. IIRC it was 90% random and 10% sequential (and a couple of different IO sizes too), hence the endurance rating. Anand should be able to confirm the exact methodology but 72TB sounds normal in my ears, some have ~30TB (but 100% 4KB random writes).

Solid State Brain - Wednesday, April 10, 2013

I believe this is their way of telling buyers that they do not officially support or endorse enterprise usage (ie more than 40 GiB/day) on these drives, although their NAND flash memory is specced for way more than just 72 TiB of writes especially on higher capacity models.

I would expect the 960 GB (1 TiB) drive to unofficially endure for at least 1.5 PiB of writes (at 2x write amplification).

Solid State Brain - Wednesday, April 10, 2013

I meant to say that the 960 GB model (894.07 GiB) has 1 TiB of flash memory installed on its PCB. The "missing" capacity is for overprovisioning purposes.

comomolo - Friday, May 3, 2013

I can't believe they sell a 1TB drive that will die after fully writing it just 72 times.

theduckofdeath - Tuesday, April 9, 2013

This was a bit disappointing, I think. Hopefully a FW update or two will improve the numbers a bit, otherwise it just feels like a step backwards if you're not going for the 1TB model.