The Crucial/Micron M500 Review (960GB, 480GB, 240GB, 120GB)

by Anand Lal Shimpi on April 9, 2013 9:59 AM EST

Encryption Done Right?

Arguably one of the most interesting features of the M500 is its hardware encryption engine. Like many modern drives, the M500 features a 256-bit AES encryption engine, and all data written to the drive is stored encrypted. By default you don't need to supply a password to access the data; the key is just stored in the controller and everything is encrypted/decrypted on the fly. As with most SSDs with hardware encryption, if you set an ATA password you'll force the generation of a new key, which ensures no one without the password gets access to your data.

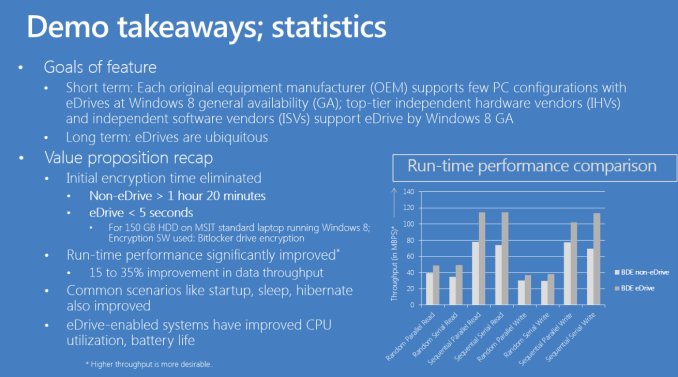

Unfortunately, most ATA passwords aren't very secure, so the AES-256 engine ends up being a bit overkill when used in this way. Here's where the M500 sets itself apart from the pack. The M500's firmware is TCG Opal 2.0 and IEEE-1667 compliant. The TCG Opal support alone lets you leverage third party encryption tools to more securely lock down your system. The combination of the two, however, makes the M500 compatible with Microsoft's eDrive standard.

In theory, Windows 8's BitLocker should leverage the M500's hardware encryption engine instead of using a software encryption layer on top of it. The result should be better performance and power consumption. Simply enabling BitLocker didn't seem to work for me (initial encryption time should take a few seconds not 1+ hours if it's truly leveraging the M500's hardware encryption), however according to Crucial it's a matter of making sure both my test platform and the drive support the eDrive spec. There's hardly any good info about this online so I'm still digging on how to make it work. Once I figure it out I'll update this post. Update: It works!

Assuming this does work however, the M500 is likely going to be one of the first drives that's a must have if you need to run with BitLocker enabled on Windows 8. The performance impact of software encryption isn't huge on non-SandForce drives, but minimizing it to effectively nothing would be awesome.

Crucial is also printing a physical security ID (PSID) on all M500 drives. The PSID is on the M500's information label and is used in the event that you have a password protected drive that you've lost the auth code for. In the past you'd have a brick on your hands. With the M500 and its PSID, you can do a PSID revert using 3rd party software and at least get your drive back. The data will obviously be lost forever but the drive will be in an unlocked and usable state. I'm also waiting to hear back from Crucial on what utilities can successfully do a PSID revert on the M500.

NAND Configurations, Spare Area & DRAM

I've got the full lineup of M500s here for review. All of the drives are 2.5" 7mm form factor designs, but they all ship with a spacer you can stick on the drive for use in trays that require a 9.5mm drive (mSATA and M.2/NGFF versions will ship in Q2). The M500 chassis is otherwise a pretty straightforward 8 screw design (4 hold the chassis together, 4 hold the PCB in place). There's a single large thermal pad that covers both the Marvell 9187 controller and DDR3-1600 DRAM, allowing them to use the metal chassis for heat dissipation. The M500 is thermally managed. Should the controller temperature exceed 70C, the firmware will instruct the drive to reduce performance until it returns to normal operating temperature. The drive reduces speed without changing SATA PHY rate, so it should be transparent to the host.

The M500 is Crucial's first SSD to use 20nm NAND, which means this is the first time it has had to deal with error and defect rates at 20nm. For the most part, really clever work at the fabs and on the firmware side keeps the move to 20nm from being a big problem. Performance goes down but endurance stays constant. According to Crucial however, defects are more prevalent at 20nm - especially today when the process, particularly for these new 128Gbit die parts, is still quite new. To deal with potentially higher defect rates, Crucial introduced RAIN (Redundant Array of Independent NAND) support to the M500. We've seen RAIN used on Micron's enterprise SSDs before, but this is the first time we're seeing it used on a consumer drive.

You'll notice that Crucial uses SandForce-like capacity points with the M500. While the m4/C400 had an industry standard ~7% of its NAND set aside as spare area, the M500 roughly doubles that amount. The extra spare area is used exclusively for RAIN and to curb failure due to NAND defects, not to reduce write amplification. Despite the larger amount of spare area, if you want more consistent performance you're going to have to overprovision the M500 as if it were a standard 7% OP drive.

The breakdown of capacities vs. NAND/DRAM on-board is below:

Crucial M500 NAND/DRAM Configuration

| Capacity | # of NAND Packages | # of Die per Package | Total NAND on-board | DRAM |
|---|---|---|---|---|
| 960GB | 16 | 4 | 1024GB | 1GB |
| 480GB | 16 | 2 | 512GB | 512MB |
| 240GB | 16 | 1 | 256GB | 256MB |
| 120GB | 8 | 1 | 128GB | 256MB |
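
As a rough check on the spare area claim, the sketch below compares each advertised capacity against the NAND on board. It assumes (the table doesn't say so explicitly) that the NAND totals are binary gigabytes (GiB) while the advertised capacities are decimal gigabytes, which is the usual convention. The function and variable names are purely illustrative.

```python
GB = 10**9    # decimal gigabyte, assumed for advertised capacity
GIB = 2**30   # binary gigabyte, assumed for raw NAND totals

# advertised capacity (GB) -> total NAND on board (GiB), taken from the table
M500_CONFIGS = {960: 1024, 480: 512, 240: 256, 120: 128}


def spare_fraction(user_gb, nand_gib):
    """Fraction of raw NAND that is not exposed to the user."""
    user_bytes = user_gb * GB
    nand_bytes = nand_gib * GIB
    return (nand_bytes - user_bytes) / nand_bytes


if __name__ == "__main__":
    for user_gb, nand_gib in M500_CONFIGS.items():
        print(f"M500 {user_gb}GB: {spare_fraction(user_gb, nand_gib):.1%} spare")
    # A 256GB drive on 256GiB of NAND, i.e. the ~7% layout used for comparison
    print(f"256GB on 256GiB: {spare_fraction(256, 256):.1%} spare")
```

Under these assumptions every M500 capacity works out to about 12.7% spare area, against about 6.9% for a 256GB drive built on the same 256GiB of NAND, which lines up with the "roughly doubles" description.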

As with any transition to higher density NAND, there's a reduction in the number of individual NAND die and packages in any given configuration. The 9187 controller has 8 NAND channels and can interleave requests on each channel. In general we've seen the best results when 16 or 32 devices are connected to an 8-channel controller. In other words, you can expect a substantial drop off in performance when going to the 120GB M500. Peak performance will come with the 480GB and 960GB drives.

You'll also note the lack of a 60GB offering. Given the density of this NAND, a 60GB drive would only populate four channels - cutting peak sequential performance in half. Crucial felt it would be best not to come out with a 60GB drive at this point, and simply release a version that uses 64Gbit die at some point in the future.
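
The die-count arithmetic behind this can be sketched as follows. The 128Gbit die density and the 8 channels come from the text above; the idea that die are spread one per channel before any channel gets a second die is my simplifying assumption, and the helper names are hypothetical.

```python
DIE_GBIT = 128   # die density of the M500's 20nm NAND, per the article
CHANNELS = 8     # NAND channels on the Marvell 9187, per the article


def die_count(nand_gb, die_gbit=DIE_GBIT):
    """Number of NAND die needed for a given amount of raw NAND."""
    die_gb = die_gbit // 8   # 128Gbit -> 16GB per die
    return nand_gb // die_gb


def populated_channels(dies, channels=CHANNELS):
    """Assumes die are spread one per channel before doubling up."""
    return min(dies, channels)


if __name__ == "__main__":
    configs = [("960GB", 1024), ("480GB", 512), ("240GB", 256),
               ("120GB", 128), ("60GB (hypothetical)", 64)]
    for label, nand_gb in configs:
        dies = die_count(nand_gb)
        used = populated_channels(dies)
        print(f"{label}: {dies} die, {used}/{CHANNELS} channels populated")
    # The same hypothetical 60GB drive built from 64Gbit die instead
    print("60GB with 64Gbit die:", die_count(64, die_gbit=64), "die")
```

With 128Gbit die the four shipping capacities come out to 64, 32, 16 and 8 die, while a 64GB NAND configuration would have only 4 die and leave half the channels empty. Built from 64Gbit die, the same drive would have 8 die and could populate every channel.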

The heavy DRAM requirements point to a flat indirection table, similar to what we saw Intel move to with the S3700. Less than 5MB of user data is ever stored in the M500's DRAM at any given time, the bulk of the DRAM is used to cache the drive's OS, firmware and logical to physical mapping (indirection) table. Relatively flat maps should be easy to defragment, but that's assuming the M500's garbage collection and internal defragmentation routines are optimal.

111 Comments

blackmagnum - Tuesday, April 9, 2013 - link

How times have changed. SSDs now have better bang-for-the-buck than hard disk drives. No noise, low power, shock resistance... the works.

Flunk - Tuesday, April 9, 2013 - link

That's not quantitatively true. 2TB hard drives are available for about $100, which is $0.05/GB; no SSD can match that.

SSDs have better power usage, performance, and shock resistance, but they lag in capacity.

ABR - Tuesday, April 9, 2013 - link

Actually they tend NOT to have better power usage, at least when compared against 2.5" laptop hard drives. But everyone thinks they do anyway since it just seems like a purely electronic device should use less energy than a mechanical one.

akedia - Tuesday, April 9, 2013 - link

I don't believe that's correct. Sure, sometimes, some SSDs in some usage scenarios might use more, but it's generally correct that SSDs use less power than even 2.5" HDDs. Below I've linked to recent (within the last year) StorageReview.com reviews for two current generation examples, the WD Scorpio Blue and the Samsung 840 Pro. The SSD beats the SSD on all measures other than writing, which over the course of time is unlikely to tip the scales, and even then they have to note that the system was tested in a desktop which didn't have DIPM enabled. So this is a worst-case for the SSD, without all of its power-saving features enabled, and it still comes out generally on top, while providing vastly superior performance on all measures.

It's not true that ALL SSDs beat ALL HDDs at ALL times for ALL usages in ALL circumstances, but it's also not true that 2.5" HDDs have better power usage in general. They don't. And that's without even considering how much less time such a higher performing device would take to read or write a given amount of data, spending much less time out of power-sipping idle. Cheers.

http://www.storagereview.com/western_digital_scorp...

http://www.storagereview.com/samsung_ssd_840_pro_r...

akedia - Tuesday, April 9, 2013 - link

*edit

The SSD beats the HDD on all measures other than writing, it doesn't beat itself. *facepalm*

ABR - Tuesday, April 9, 2013 - link

The SSD link you cite, together with another review on Tom's Hardware, report very different values for power usage than most places I've seen. For example, here on Anandtech:

http://www.anandtech.com/show/6328/samsung-ssd-840...

Generally averaging 3-5 watts, whereas good HDDs are in the 1.5-2.5 range. It would be good to know the reason for the discrepancies. It does seem that smaller processes are starting to help the SSDs catch up though.

tfranzese - Tuesday, April 9, 2013 - link

You do realize that SSD's can get their work done quicker and get back to idle much faster than any mechanical drive? Unless you're looking at a SSD that has horrible idle power characteristics there's little hope in hell for a HDD to compete as far as power efficiency goes.

ABR - Tuesday, April 9, 2013 - link

What I realize is that there is a lot of hand-waving and warm fuzzy thinking in this area, but few hard numbers. The ones that I *have* seen tend to suggest SSDs are still catching up in power efficiency.

MrSpadge - Tuesday, April 9, 2013 - link

+1

Consuming about as much power (give or take a few 10%) for one or two orders of magnitude less task completion time results in one to two orders of magnitude less energy consumed to complete the task. And that's what really counts, for the wallet and the battery.

mayankleoboy1 - Wednesday, April 10, 2013 - link

Instead of "Power usage", let's see the "Energy usage" of the whole system (that is, power used * time).

I strongly suspect that SSD's will easily beat any HDD here.