Khronos Announces OpenGL ES 3.0, OpenGL 4.3, ASTC Texture Compression, & CLU

by Ryan Smith on August 6, 2012 9:00 AM ESTAdaptive Scalable Texture Compression

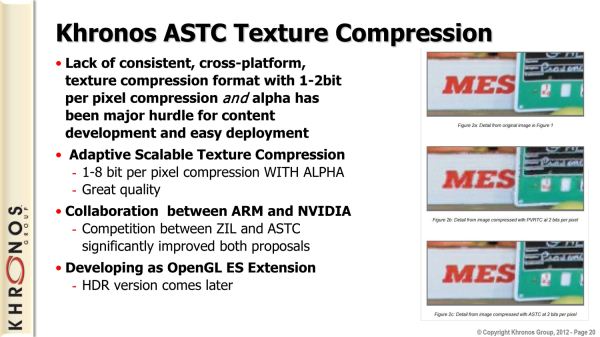

As we’ve noted in our rundowns of OpenGL and OpenGL ES, the inclusion of ETC texture compression support as part of the core OpenGL standards has finally given OpenGL a standard texture compression format after a number of years. At the same time however, the ETC format itself is approaching several years old, and not unlike S3TC it’s only designed for a limited number of cases. So while Khronos has ETC right now, in the future they want better texture compression and are now taking the first steps to make that happen.

The reward at the end of that quest is Adaptive Scalable Texture Compression (ASTC), a new texture compression format first introduced by ARM in late in 2011. ASTC was the winning proposal in Khronos's search for a next-generation texture compression format, with the ARM/AMD bloc beating out NVIDIA and their ZIL proposal.

As the winning proposal in that search, if all goes according to plan ASTC will eventually become OpenGL and OpenGL ES’s mandatory next generation texture compression algorithm. In the meantime Khronos is introducing it as an optional feature of OpenGL ES 3.0 and OpenGL 4.3 in order to solicit feedback from hardware and software developers. Only once all parties are satisfied with ASTC to the point that it’s ready to be implemented into hardware can it meaningfully be moved into the core OpenGL specifications.

So what makes ASTC next-generation anyhow? Since the introduction of S3TC in the 90s, various parties have been attempting to improve on texture compression with limited results. In the Direct3D world where S3TC is standard, we’ve seen Microsoft add specialized formats for normal maps and other texture types that are not color maps, but only relatively recently did they add another color map compression method with BC7. BC7 in turn is a high quality but lower compression ratio algorithm that solves the gradient issues S3TC faces, but for a 24bit RGB texture it’s only a 3:1 compression ratio versus 6:1 for S3TC (32bit RGBA fares better; both are 4:1).

ASTC Image Quality Comparison: Original, 4x4 (8bpp), 6x6 (3.56bpp), & 8x8 (2bpp) block size

Meanwhile in the mobile space we’ve seen the industry’s respective GPU manufacturers create their own texture compression formats to get around the fact that S3TC is not royalty free (and as such can’t be included in OpenGL). And while Imagination Technologies in particular has an interesting method in PVRTC that unlike the other formats is not block based – and thereby can offer a 2bpp (16:1) compression ratio – it has its own pros and cons. Then of course there’s the matter trying to convince holders of these compression methods to freely license them for inclusion in OpenGL, when S3/VIA has over the years made a tidy profit off of S3TC’s inclusion in Direct3D.

The end result is that the industry is ripe for a royalty free next generation texture compression format, and ARM + NVIDIA intend to deliver on that with the backing of Khronos.

While ASTC is another block based texture compression format, it does have some very interesting functionality that pushes it beyond S3TC or any other previous texture compression format. ASTC’s primary trick is that unlike other block based texture compression formats, it is not based around a fixed size 4x4 texel block. Rather ASTC has a fixed size of 128bits (16 bytes) with a variable size block ranging from 4x4 to 12x12, in effect offering RGBA compression ratios from 8bpp (4:1) all the way up to an incredible 0.89bpp (36:1). The larger block size not only allows for higher compression ratios, but it also offers developers a much finer grained range of compression ratios to work with compared to previous texture compression formats.

| Block Size | Bits Per Px | Comp. Ratio |

| 4x4 | 8.00 | 4:1 |

| 5x4 | 6.40 | 5:1 |

| 5x5 | 5.12 | 6.25:1 |

| 6x5 | 4.27 | 7.5:1 |

| 6x6 | 3.56 | 9:1 |

| 8x5 | 3.20 | 10:1 |

| 8x6 | 2.67 | 12:1 |

| 10x5 | 2.56 | 12.5:1 |

| 10x6 | 2.13 | 15:1 |

| 8x8 | 2.00 | 16:1 |

| 10x8 | 1.60 | 20:1 |

| 10x10 | 1.28 | 25:1 |

| 12x10 | 1.07 | 30:1 |

| 12x12 | 0.89 | 36:1 |

Alongside a wide range of compression ratios for traditional color maps, ASTC would also support additional types of textures. With support for normal maps ASTC would also replace other texture compression formats as the preferred format for normal maps, and it would also be the first texture compression format with support for 3D textures. Even HDR textures are on the table, though for the time being Khronos is starting with only support for regular (LDR) textures. With any luck, ASTC will become the all-uses texture compression format for OpenGL.

As you can imagine, Khronos is rather excited about the potential for ASTC. With their strong position in the mobile graphics space they need to provide paths to improving mobile graphics quality and performance amidst the reality of Moore’s Law and the other realities of SoC manufacturing. Specifically, mobile GPU bandwidth isn’t expected to grow by leaps and bounds like shading performance, meaning Khronos and its members need to do more with what amounts to less memory bandwidth. For Khronos texture compression is key, as ASTC will allow developers to pack in smaller textures and/or improve their texture quality without using larger textures, thereby making the most of the limited memory bandwidth available.

Of course the desktop world also stands to benefit. ARM’s objective PSNR data for ASTC has it performing far better than S3TC at the same compression ratio, which would bring higher quality texture compression to the desktop at the same texture size. And since ASTC is being developed by Khronos members and released royalty free, at this point there’s no reason to believe that Direct3D couldn’t adopt it in the future, especially since all major Direct3D GPUs also support OpenGL in the first place, so the requisite hardware would already be in place.

With all of that said, there’s still quite a bit of a distance to go between where ASTC is at today and where Khronos would like it to end up. For the time being ASTC needs to prove itself as an optional extension, so that GPU vendors are willing to implement it in hardware. It’s only after it becomes a hardware feature that ASTC can be widely adopted by developers.

46 Comments

View All Comments

whooleo - Monday, August 6, 2012 - link

Concerning the desktop, OpenGL is hardly being used in games due to DirectX being better and because many of the Linux and Mac OS X users aren't gamers. I mean there are games on Mac OS X but not many gamers on Macs. Plus it shows, Apple's graphics drivers aren't where they should be compared to Linux and Windows along with the graphics cards they include with their PCs. Enough about Mac OS X, Linux also has too many issues with graphics cards and drivers to be friendly enough to the average gamer. This is just a summary of the reasons why desktop OpenGL adoption is low. Now don't get me wrong, OpenGL can be a great open source alternative to DirectX but some things need to be addressed first.bobvodka - Tuesday, August 7, 2012 - link

Just a minor correction; OpenGL isn't 'open source' it is an 'open standard' - you get no source code for it :)whooleo - Tuesday, August 7, 2012 - link

Whoops! Thanks for the correction!beginner99 - Tuesday, August 7, 2012 - link

maybe I got it all wrong but aren't the normal gaming gpus rather lacking in OpenGL performance? I always thought that that was one of the factors the workstations cards differ in (due to driver). Wouldn't that impact game performance as well?Cogman - Tuesday, August 7, 2012 - link

> As OpenCL was designed to be a relatively low level language (ANSI C)OpenCL was BASED on C99. It is not, however, C99. They are two different languages (in other words, you can't take OpenCL code and throw it into a C compiler and vice versa).

Sorry for the nit pick, however, it is important to note that OpenCL is its own language (Just like Cuda is its own language).

UrQuan3 - Thursday, August 16, 2012 - link

Maybe someone can set me straight on this. Years ago, I had a PowerVR card for my desktop (Kyro II). While this was not a high end card by any means, I seem to remember a checkmark box "Force S3TC Compression". The card would then load the uncompressed textures from the game and compress them using S3TC before putting them in video RAM. The FPS performance increase was very noticeable although load times went up a little.Am I confused about that? If I'm not, why isn't that more common? Seems like that would solve the problem of supporting multiple compression schemes. Of course, if a compression scheme isn't general purpose, that could cause problems.