OCZ Vertex 4 Review (256GB, 512GB)

by Anand Lal Shimpi on April 4, 2012 9:00 AM EST

Sequential Read/Write Speed

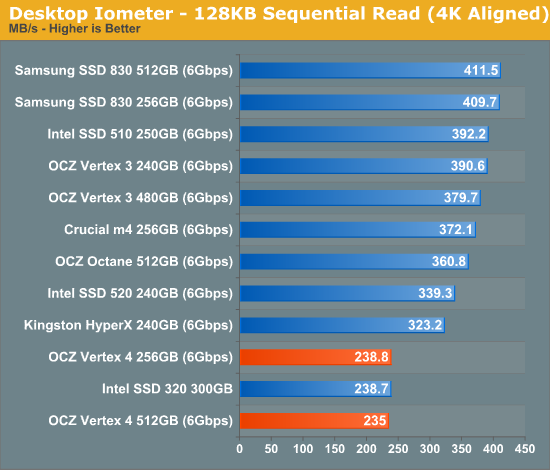

To measure sequential performance I ran a 1 minute long 128KB sequential test over the entire span of the drive at a queue depth of 1. The results reported are in average MB/s over the entire test length.
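For readers who want to approximate this kind of measurement themselves, here is a minimal Python sketch. It is not Iometer and not the tool used for the results in this review: it simply issues one blocking 128KB read at a time, so there is never more than one request outstanding, and reports the average MB/s over the run. The function name `sequential_read_mbps` and its parameters are made up for illustration. Because it reads through the operating system's file cache, its numbers will be inflated relative to a raw-device test unless the target is much larger than system memory.

```python
import os
import time


def sequential_read_mbps(path, block_size=128 * 1024, duration_s=60.0):
    """Read `path` front to back in fixed-size blocks, one request at a time,
    for roughly `duration_s` seconds, and return the average MB/s."""
    # O_BINARY only exists on Windows; fall back to 0 elsewhere.
    flags = os.O_RDONLY | getattr(os, "O_BINARY", 0)
    fd = os.open(path, flags)
    total_bytes = 0
    try:
        start = time.perf_counter()
        deadline = start + duration_s
        while time.perf_counter() < deadline:
            chunk = os.read(fd, block_size)
            if not chunk:
                # Reached the end of the target: wrap around and keep going.
                os.lseek(fd, 0, os.SEEK_SET)
                continue
            total_bytes += len(chunk)
        elapsed = time.perf_counter() - start
    finally:
        os.close(fd)
    # Decimal megabytes per second, averaged over the whole run.
    return total_bytes / elapsed / 1e6
```

Calling `sequential_read_mbps("testfile.bin")` (a hypothetical file on the drive under test) returns a single average figure for the run, which is the same kind of number reported in the charts here, though not directly comparable to them.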

As impressive as the random read/write speeds were, at low queue depths the Vertex 4's sequential read speed is problematic:

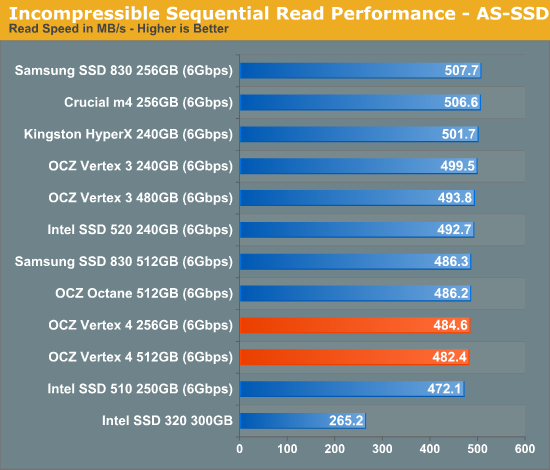

Curious as to what's going on, I ran AS-SSD and came away with much better results:

Finally I turned to ATTO, which gave me the answer I was looking for. The Vertex 4's sequential read speed is slow at low queue depths with certain workloads; move to larger transfer sizes or higher queue depths and the problem resolves itself:

The problem is that many sequential read operations for client workloads occur at 64 – 128KB transfer sizes, and at a queue depth of 1 - 3. Looking at the ATTO data above you'll see that this is exactly the weak point of the Vertex 4.

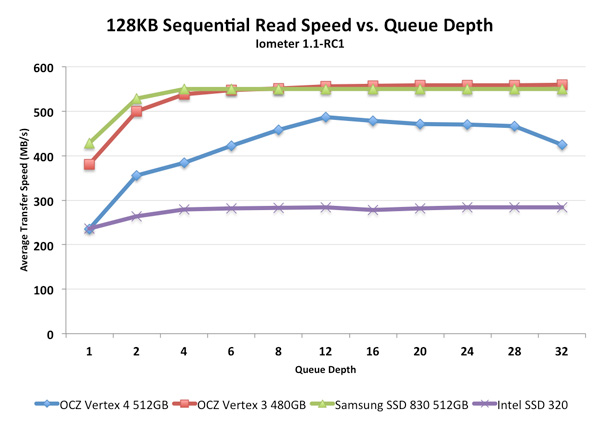

I went back to Iometer and varied queue depth with our 128KB sequential read test and got a good characterization of the Vertex 4's large block, sequential read performance:

The Vertex 4 performs better with heavier workloads. While other drives extract enough parallelism to deliver fairly high performance with only a single IO in the queue, the Vertex 4 needs 2 or more for large block sequential reads. Heavier read workloads do wonderfully on the drive; ironically enough, it's the lighter workloads that are a problem. It's the exact opposite of what we're used to seeing. As this seemed like a bit of an oversight, I presented OCZ with my data and got some clarification.

Everest 2 was optimized primarily for non-light workloads where higher queuing is to be expected. Extending performance gains to lower queue depths is indeed possible (the Everest 1 based Octane obviously does fine here) but it wasn't deemed a priority for the initial firmware release. OCZ instead felt it was far more important to have a high-end alternative to SandForce in its lineup. Given that we're still seeing some isolated issues on non-Intel SF-2281 drives, the sense of urgency does make sense.

There are two causes for the lower than expected, low queue depth sequential read performance. First, OCZ doesn't currently enable NCQ streaming for queue depths less than 3. This one is a simple fix. Second, the Everest 2 doesn't currently allow pipelined read access from more than 8 concurrent NAND die. For larger transfers and queue depths this isn't an issue, but smaller transfers and lower queue depths end up delivering much lower than expected performance.
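To see why queue depth matters so much when a drive doesn't extract parallelism from a single request, a first-order model helps. The sketch below applies Little's Law (throughput equals data in flight divided by per-request latency) with a cap for the interface. The function name is hypothetical, and the 0.5 ms latency and 550 MB/s cap used in the note that follows are round illustrative numbers, not measurements of the Vertex 4 or Everest 2. The model also ignores the striping of one request across many NAND die that other controllers use to do well at a queue depth of 1.

```python
def modeled_throughput_mbps(queue_depth, transfer_bytes, latency_s, cap_mbps):
    """First-order Little's Law estimate of sequential throughput.

    queue_depth    -- number of requests kept outstanding
    transfer_bytes -- size of each request in bytes
    latency_s      -- assumed time to service one request, in seconds
    cap_mbps       -- assumed interface/controller ceiling in MB/s
    """
    bytes_in_flight = queue_depth * transfer_bytes
    uncapped_mbps = bytes_in_flight / latency_s / 1e6
    # Throughput cannot exceed the assumed ceiling, however deep the queue.
    return min(uncapped_mbps, cap_mbps)
```

With the hypothetical values above, a single outstanding 128KB request (`modeled_throughput_mbps(1, 128 * 1024, 0.0005, 550.0)`) stays well under the cap, while four outstanding requests hit it. That is the general shape of the behavior described here, even though the real causes are firmware specific.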

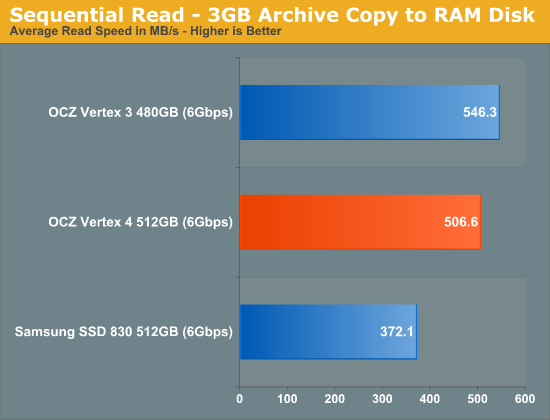

To confirm that I wasn't crazy and the Vertex 4 was capable of high, real-world sequential read speeds I created a simple test. I took a 3GB archive and copied it from the Vertex 4 to a RAM drive (to eliminate any write speed bottlenecks). The Vertex 4's performance was very good:

Clearly the Vertex 4 is capable of reading at very high rates, particularly when it matters; however, the current firmware doesn't seem tuned for any sort of low queue depth operation.
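The copy test is easy to approximate. This is a minimal sketch, not the exact procedure used above: it times a single file copy and divides the file size by the elapsed time. The function name and any paths passed to it are hypothetical. The destination should sit on a RAM drive (or something similarly fast) so writes don't become the bottleneck, and the source file should not already be in the operating system's file cache, or the result will reflect memory speed rather than the drive.

```python
import os
import shutil
import time


def timed_copy_mbps(src, dst):
    """Copy `src` to `dst` and return the average rate in MB/s."""
    size_bytes = os.path.getsize(src)
    start = time.perf_counter()
    shutil.copyfile(src, dst)
    elapsed = time.perf_counter() - start
    # Decimal megabytes per second for the whole copy.
    return size_bytes / elapsed / 1e6
```

Unlike a fixed queue depth benchmark, the request sizes and queue depths here are whatever the operating system's copy path chooses, which is why a test like this can look very different from a 128KB, queue depth 1 run.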

Both of these issues are apparently being worked on at the time of publication and should be rolled into the next firmware release for the drive (due out sometime in late April). Again, OCZ's aim was to deliver a high-end drive that could be offered as an alternative to the Vertex 3 as quickly as possible.

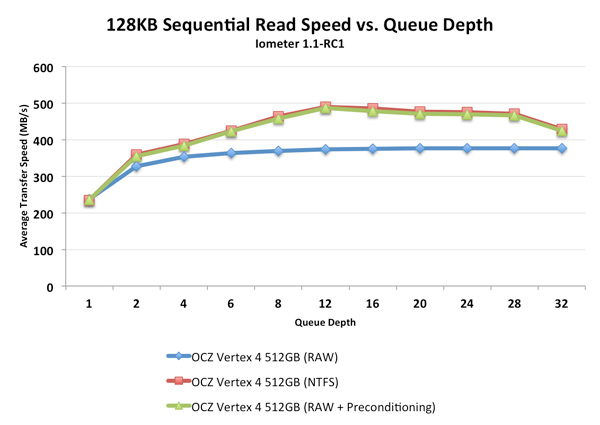

Update: Many have been reporting that the Vertex 4's performance is dependent on having an active partition on the drive due to its NCQ streaming support. While this is true, it's not the reason you'll see gains in synthetic tests like Iometer. If you don't fill the drive with valid data before conducting read tests, the Vertex 4 returns lower performance numbers. Running Iometer on a live partition requires that the drive is first filled with data before the benchmark runs, similar to what we do for our Iometer read tests anyway. The chart below shows the difference in performance between running an Iometer sequential read test on a physical disk (no partition), an NTFS partition on the same drive and finally the physical disk after all LBAs have been written to:

Notice how the NTFS and RAW+precondition lines are identical. That's because the reason for the performance gain here isn't NCQ streaming but rather the presence of valid data that you're reading back. Most SSDs tend to give unrealistically high performance numbers if you read from them immediately following a secure erase, so we always precondition our drives before running Iometer. The Vertex 4 just happens to do the opposite, but this has no bearing on real world performance as you'll always be reading actual files in actual use.

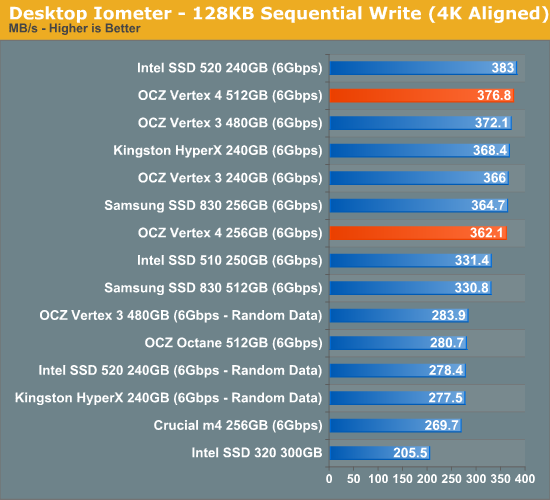

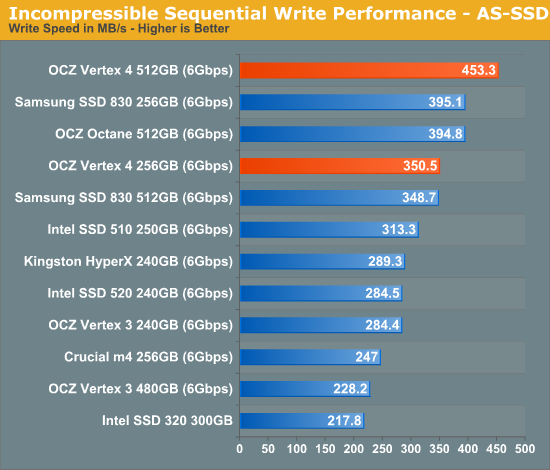

Despite the shortcomings with low queue depth sequential read performance, the Vertex 4 dominated our sequential write tests, even at low queue depths. Only the Samsung SSD 830 is able to compete:

Technically the SF-2281 drives equal the Vertex 4's performance, but that's only with highly compressible data. Large sequential writes are very often composed of already compressed data, which makes the real world performance advantage of the Vertex 4 tangible.

AS-SSD gives us another taste of the performance of incompressible data, which again is very good on the Vertex 4. As far as writes are concerned, there's really no beating the Vertex 4.

127 Comments

Kristian Vättö - Wednesday, April 4, 2012 - link

240GB Vertex 3 is actually faster than 480GB Vertex 3: http://www.anandtech.com/bench/Product/352?vs=561

http://www.ocztechnology.com/res/manuals/OCZ_Verte...

MarkLuvsCS - Wednesday, April 4, 2012 - link

256GB and 512GB should perform nearly identically because they have the same number of NAND packages: 16. The 512GB version just uses 32GB vs 16GB NAND in the 256GB version. The differences between the 256GB and 512GB drives are negligible.

Iketh - Wednesday, April 4, 2012 - link

that concept of yours depends entirely on how each line of SSD is architected... it goes without saying that each manufacturer implements different architectures....your comment is what is misleading

Glock24 - Wednesday, April 4, 2012 - link

"...a single TRIM pass is able to restore performance to new"

I've seen statements similar to this on previous reviews, but how do you force a TRIM pass? Do you use a third party application? Is there a console command?

Kristian Vättö - Wednesday, April 4, 2012 - link

Just format the drive using Windows' Disk Management :-)

Glock24 - Wednesday, April 4, 2012 - link

Well, I will ask this another way: Is there a way to force the TRIM command that will not destroy the data on the drive?

Kristian Vättö - Wednesday, April 4, 2012 - link

If you've had TRIM enabled throughout the life of the drive, then there is no need to TRIM it as the empty space should already be TRIM'ed.

One way of forcing it would be to make copies of a big file (e.g. an archive or movie file) until the drive runs out of space. Then delete the copies.

PartEleven - Wednesday, April 4, 2012 - link

I was also curious about this, and hope you can clarify some more. So my understanding is that Windows 7 has TRIM enabled by default if you have an SSD, right? So are you saying that if you have TRIM enabled throughout the life of the drive, Windows should automagically TRIM the empty space regularly?

adamantinepiggy - Wednesday, April 4, 2012 - link

http://ssd.windows98.co.uk/downloads/ssdtool.exe

This tool will initiate a trim manually. Problem is that unless you can monitor the SSD, you won't know it has actually done anything. I know it works with Crucial drives on Win7 as I can see the SSDs initiate a trim from the monitoring port of the SSD when I use this app. I can only "assume" it works on other SSDs too, but since I can't monitor them, I can't know for sure.

Glock24 - Wednesday, April 4, 2012 - link

I'll try that tool.

For those using Linux, I've used a tool bundled with hdparm called wiper.sh:

wiper.sh: Linux SATA SSD TRIM utility, version 3.4, by Mark Lord.

Linux tune-up (TRIM) utility for SATA SSDs

Usage: /usr/sbin/wiper.sh [--max-ranges <num>] [--verbose] [--commit] <mount_point|block_device>

Eg: /usr/sbin/wiper.sh /dev/sda1