Promise Pegasus R6 & Mac Thunderbolt Review

by Anand Lal Shimpi on July 8, 2011 2:01 AM EST

The Pegasus: Performance

A single 2TB Hitachi Deskstar 7K3000 is good for sequential transfer rates of up to ~150MB/s. With six in a RAID-5 configuration, we should be able to easily hit several Gbps in bandwidth to the Pegasus R6. The problem is, there's no single drive source that can come close to delivering that sort of bandwidth.
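As a rough sanity check on that expectation, here is a minimal sketch of the usual RAID-5 back-of-the-envelope math. It assumes one drive's worth of capacity goes to parity and that sequential throughput scales with the remaining five drives; real controllers rarely scale this cleanly, so treat the result as an optimistic ceiling. The function names are illustrative only.

```python
# Figures taken from the text: six 2TB drives at roughly 150MB/s each.
DRIVES = 6
DRIVE_CAPACITY_TB = 2
DRIVE_SEQ_MB_PER_S = 150


def raid5_usable_tb(drives, capacity_tb):
    """RAID-5 spends one drive's worth of capacity on parity."""
    return (drives - 1) * capacity_tb


def raid5_ideal_seq_mb_per_s(drives, per_drive_mb_per_s):
    """Assumed ceiling: data bandwidth of n-1 drives, ignoring controller overhead."""
    return (drives - 1) * per_drive_mb_per_s


usable_tb = raid5_usable_tb(DRIVES, DRIVE_CAPACITY_TB)
ideal_mb_per_s = raid5_ideal_seq_mb_per_s(DRIVES, DRIVE_SEQ_MB_PER_S)
ideal_gbps = ideal_mb_per_s * 8 / 1000  # decimal megabytes to gigabits

print(f"Usable capacity: {usable_tb} TB")
print(f"Ideal sequential rate: {ideal_mb_per_s} MB/s ({ideal_gbps:.1f} Gbps)")
```

Under these assumptions the array works out to 10TB usable and an ideal sequential rate of about 750MB/s, or 6Gbps.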

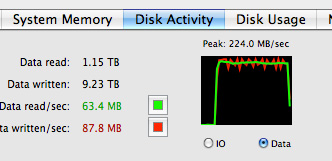

Apple sent over a 15-inch MacBook Pro with a 256GB Apple SSD. This was the first MacBook Pro I've ever tested with Apple's own SSD, so I was excited to give it a try. The model number implies a Toshiba controller and I'll get to its performance characteristics in a separate article. But as a relatively modern 3Gbps SSD, this drive should be good for roughly 200MB/s. Copying a large video file from the SSD to the Pegasus R6 over Thunderbolt proved this to be true:

Apple's SSD maxed out at 224MB/s to the Thunderbolt array, likely the peak sequential read speed from the SSD itself. Average performance was around 209MB/s.

That's a peak of nearly 1.8Gbps and we've still got 8.2Gbps left upstream on the PCIe channel. I needed another option.
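The arithmetic behind those two figures is a simple unit conversion. The sketch below assumes decimal units (1MB = 10^6 bytes) and a 10Gbps channel, which is what the 1.8Gbps plus 8.2Gbps figures imply; the helper name is made up for illustration.

```python
CHANNEL_GBPS = 10.0  # assumed per-direction channel rate implied by the text


def mb_per_s_to_gbps(mb_per_s):
    """Convert decimal megabytes per second to gigabits per second."""
    return mb_per_s * 8 / 1000


peak_gbps = mb_per_s_to_gbps(224)      # peak SSD-to-array copy rate
average_gbps = mb_per_s_to_gbps(209)   # average copy rate
headroom_gbps = CHANNEL_GBPS - peak_gbps

print(f"Peak: {peak_gbps:.2f} Gbps, average: {average_gbps:.2f} Gbps")
print(f"Unused channel bandwidth at peak: {headroom_gbps:.2f} Gbps")
```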

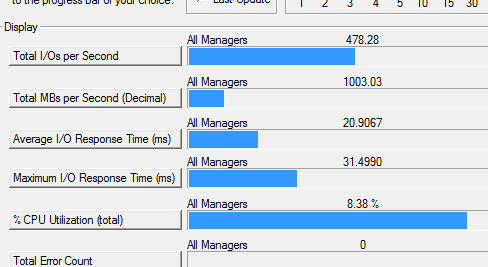

Without a second Thunderbolt source to copy to the array at closer to the interface's max speed, we had to generate data. I turned to Iometer to perform a 2MB sequential access across the first 1TB of the Pegasus R6's RAID-5 array. I ran the test for 5 minutes; the results are below:

Promise Pegasus R6 12TB (10TB RAID-5) Performance

| | Sequential Read | Sequential Write | 4KB Random Read (QD16) | 4KB Random Write (QD16) |
|---|---|---|---|---|
| Promise Pegasus R6 (RAID-5) | 673.7 MB/s | 683.9 MB/s | 1.24 MB/s | 0.98 MB/s |

The best performance I saw was 683.9MB/s from our sequential write test, or 5471Mbps. Note that I played with higher queue depths but couldn't get beyond these numbers on the stock configuration. Obviously these are hard drives so random performance is pretty disappointing.
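For readers who want to try something similar without Iometer, the sketch below times large sequential writes using 2MiB blocks. It is not Iometer and not the method used for the numbers above: it goes through the operating system's file cache (only the final `fsync` forces data out), writes a small file by default, and the function name and sizes are assumptions chosen for illustration.

```python
import os
import tempfile
import time

BLOCK_SIZE = 2 * 1024 * 1024  # 2MiB blocks, echoing the 2MB transfer size in the text


def sequential_write_mb_per_s(path, total_bytes, block_size=BLOCK_SIZE):
    """Write total_bytes sequentially to path and return decimal MB/s."""
    block = os.urandom(block_size)  # incompressible payload
    blocks = total_bytes // block_size
    start = time.perf_counter()
    with open(path, "wb", buffering=0) as f:
        for _ in range(blocks):
            f.write(block)
        os.fsync(f.fileno())  # make sure the data has left the OS cache
    elapsed = time.perf_counter() - start
    return (blocks * block_size) / elapsed / 1e6


if __name__ == "__main__":
    # Point this at a directory on the array under test; a temp dir is used here.
    with tempfile.TemporaryDirectory() as scratch:
        target = os.path.join(scratch, "seq_test.bin")
        rate = sequential_write_mb_per_s(target, 64 * 1024 * 1024)
        print(f"Sequential write: {rate:.1f} MB/s")
```

A 64MiB file is far too small to say anything about a 10TB array; a meaningful run would write many gigabytes to the volume being measured.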

That's best-case sequential performance, but what about worst case? To find out, I wrote a single 10TB file across the entire RAID-5 array, then had Iometer measure read/write performance to that file in the last 1TB of the array's capacity:

Promise Pegasus R6 12TB (10TB RAID-5) Performance

| | Sequential Read (Beginning) | Sequential Write (Beginning) | Sequential Read (End) | Sequential Write (End) |
|---|---|---|---|---|
| Promise Pegasus R6 (RAID-5) | 673.7 MB/s | 683.9 MB/s | 422.7 MB/s | 463.0 MB/s |

Minimum sequential read performance dropped to 422MB/s, or roughly 3.4Gbps. This is of course the downside to any platter-based storage array. Performance on the outer tracks is much better than on the inner tracks, so the more you have written to the array, the slower subsequent writes will be.
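To put the inner-track penalty in relative terms, here is a small sketch that computes the percentage drop from the beginning of the array to the end, using the four figures from the table. The helper name is hypothetical.

```python
def percent_drop(beginning_mb_per_s, end_mb_per_s):
    """Relative slowdown from the start of the array to the end, in percent."""
    return (beginning_mb_per_s - end_mb_per_s) / beginning_mb_per_s * 100


# Values in MB/s, copied from the RAID-5 beginning/end table.
read_drop = percent_drop(673.7, 422.7)
write_drop = percent_drop(683.9, 463.0)

print(f"Sequential read drop:  {read_drop:.1f}%")
print(f"Sequential write drop: {write_drop:.1f}%")
```

Both figures land at roughly a third, which gives a sense of how much a nearly full array costs in sequential throughput.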

At over 5Gbps we're getting decent performance but I still wanted to see how far I could push the interface. I deleted the RAID-5 array and created a 12TB RAID-0 array. I ran the same tests as above:

Promise Pegasus R6 12TB (10TB RAID-5) Performance

| | Sequential Read | Sequential Write | 4KB Random Read (QD16) | 4KB Random Write (QD16) |
|---|---|---|---|---|
| Promise Pegasus R6 (RAID-5) | 673.7 MB/s | 683.9 MB/s | 1.24 MB/s | 0.98 MB/s |
| Promise Pegasus R6 (RAID-0) | 782.2 MB/s | 757.8 MB/s | 1.27 MB/s | 5.86 MB/s |

Sequential read performance jumped up to 782MB/s or 6257Mbps. We're now operating at just over 60% of the peak theoretical performance of a single upstream Thunderbolt channel. For a HDD based drive array, this is likely the best we'll get.
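The comparison can be made explicit with a short sketch that computes how much RAID-0 gains over RAID-5 for each test, plus the share of the channel in use. The 10Gbps channel rate is an assumption consistent with the figures quoted earlier, and the dictionary layout and function names are illustrative only.

```python
CHANNEL_GBPS = 10.0  # assumed per-direction Thunderbolt channel rate

# Results in MB/s, copied from the RAID-5 and RAID-0 rows of the table.
results = {
    "RAID-5": {"seq_read": 673.7, "seq_write": 683.9, "rand_read": 1.24, "rand_write": 0.98},
    "RAID-0": {"seq_read": 782.2, "seq_write": 757.8, "rand_read": 1.27, "rand_write": 5.86},
}


def channel_utilization(mb_per_s, channel_gbps=CHANNEL_GBPS):
    """Fraction of the channel consumed by a given decimal MB/s rate."""
    return (mb_per_s * 8 / 1000) / channel_gbps


def raid0_gain(metric):
    """RAID-0 result divided by the RAID-5 result for the same test."""
    return results["RAID-0"][metric] / results["RAID-5"][metric]


for metric in results["RAID-0"]:
    print(f"{metric}: RAID-0 is {raid0_gain(metric):.2f}x RAID-5")

best_read = results["RAID-0"]["seq_read"]
print(f"RAID-0 sequential read uses {channel_utilization(best_read):.1%} of the channel")
```

The random write result stands out: without parity to update, RAID-0 is several times faster than RAID-5 on small writes, while the sequential gains are modest.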

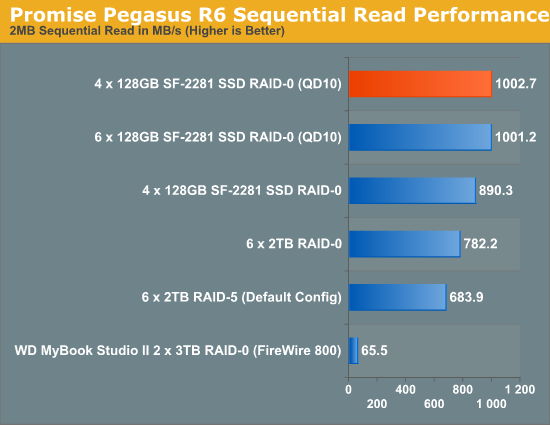

To see how far we could push things I pulled out all six drives and swapped in four SF-2281 based SSDs. To really test the limits of the interface I created a 4-drive RAID-0 array sized at only 25GB. This would keep drive performance as high as possible and reduce the time required to fill and test the drives.

Unlike with the hard drive based arrays, I had to take the queue depth up to 16 in order to get peak performance out of these SSDs. The chart below shows all of my performance attempts:

With highly compressible data, I managed to get just over 1000MB/s (8021Mbps to be exact) to the 4-drive SF-2281 Pegasus R6. Note that this isn't a shipping configuration, but it does show us the limits of the platform. I'm not entirely convinced that we're limited by Thunderbolt here either - it could very well be the Pegasus' internal controller that's limiting performance. Until we get some other Thunderbolt RAID devices in house it's impossible to tell but at around 8Gbps, this is clearly an interface that has legs.

88 Comments

Conner_36 - Friday, July 8, 2011

Or even in the office, to be able to take your entire project and move between the rooms carrying ALL of the data? That's unheard of!

From what I understand, with HD movie editing, I/O is the bottleneck.

All we need now is an SVN hardware device with Thunderbolt to sync across multiple Thunderbolt RAIDs. Once that's out you could have a production studio with some real mobile capabilities.

Exodite - Friday, July 8, 2011

I wager pretty much any usage scenario can come up with a high-performance 12TB storage solution for significantly less than 2000 USD.

You're right though, it's definitely not the solution for me.

Or anyone I know, or am likely to ever know. *shrug*

Zandros - Friday, July 8, 2011

What happens if you try the MacBook Pro -> Pegasus -> iMac in Target Display Mode -> Cinema Display connection chain?

Focher - Saturday, July 9, 2011

Pretty sure the DP monitor has to be the last device in the chain. Maybe that is just a current limitation because there are no Thunderbolt displays.

Zandros - Monday, July 11, 2011

AFAICT, the iMac is a Thunderbolt display, since it does not support Target Display Mode from Display Port sources with Display Port cables.

tipoo - Friday, July 8, 2011

Is there a way to make it shut off the drives after idling for a while?

piroroadkill - Friday, July 8, 2011

But when you saw the file creation maxed out at 9TB, on 10TB array..

Since.. uh, Snow Leopard, Apple changed file and drive sizes to display decimal bytes as used by the manufacturers, which is the same as the 10TB array.

However every other thing ever reports in binary bytes, such as Windows describing "gigabytes" even though it means gibibytes in reality.

Ugh, anyway, what I'm trying to get at is that maybe you did in fact fill the array. That said, the thing shouldn't have fucked up..

CharonPDX - Friday, July 8, 2011

If I had way too much money, my usage model for Target Display Mode would be to use the iMac as a Virtual Machine host/server, connected to either a second iMac or a MacBook Pro as a dual-screen workstation.

With the minimum 27" iMac, you're basically buying a 27" Cinema Display plus a $700 Mac mini-on-steroids. If you want a second Apple display for your iMac or MacBook Pro, and want a Mac Mini to use as a server, that is an excellent value to instead just get a second iMac. (That value may drop depending on the next Mac Mini update, of course.)

etamin - Friday, July 8, 2011

In the block diagram on the first page, why is the Thunderbolt Controller connected to the PCH thru PCIe rather than to the processor? I thought PCIe connections came off the processor/NB?

repoman27 - Sunday, July 10, 2011

The lanes that come off the processor/NB are usually used for dGPU. On the new MacBook Pros, Apple borrowed four of them for the Thunderbolt controller. Apparently on the new iMacs, however, they decided to give all 16 lanes from the CPU to the graphics card and pulled four from the PCH instead.