The Sandy Bridge Preview

by Anand Lal Shimpi on August 27, 2010 2:38 PM EST

Sandy Bridge Integrated Graphics Performance

With Clarkdale/Arrandale, Intel improved integrated graphics by a large enough margin that I can honestly say we were impressed with what Intel had done. That being said, the performance of Intel's HD Graphics still was not enough. For years integrated graphics have been fast enough to run games like the Sims but not quick enough to play anything more taxing, at least not at reasonable quality settings. The 'dales made Intel competitive in the integrated graphics market, but they didn't change what we thought of integrated graphics.

Sandy Bridge could be different.

Architecturally, Sandy Bridge's GPU is a significant revision of what's internally referred to as Intel Gen graphics. While the past two generations of Intel integrated graphics have been a part of the Gen 5 series, Sandy brings the first Gen 6 graphics die to market. With a tremendous increase in IPC and a large L3 cache to partake in, Sandy Bridge's graphics is another significant move forward.

Is it enough to kill all discrete graphics? No. But it's good enough to really threaten the entry level discrete market. Take a look:

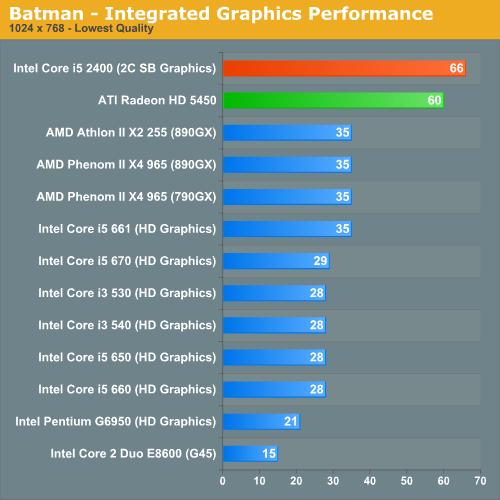

Batman: Arkham Asylum

It's unclear whether or not graphics turbo was working on the part I was testing. If it was, this is the best it'll be for the 6 EU parts. If it wasn't, things will be even faster. Comparisons to current integrated graphics solutions are almost worthless. Sandy Bridge's graphics perform like a low end discrete part, not an integrated GPU. In this case, we're about 10% faster than a Radeon HD 5450.

Assuming Sandy Bridge retains the same HTPC features that Clarkdale has, I'm not sure there's a reason for these low end discrete GPUs anymore. At least not unless they get significantly faster.

Note that despite the early nature of the drivers, I didn't notice any rendering artifacts or image quality issues while testing Sandy Bridge's integrated graphics.

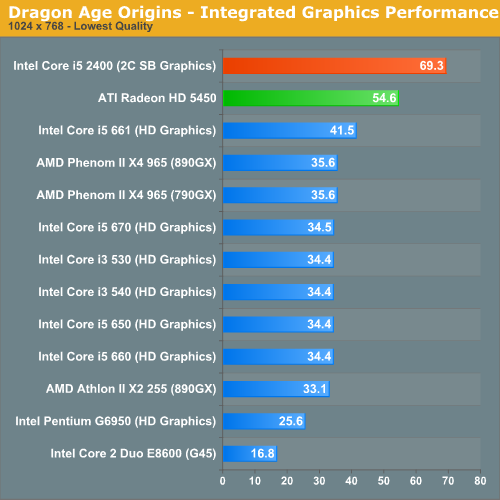

Dragon Age Origins

The Sandy Bridge advantage actually grows under Dragon Age. At these frame rates you can either enjoy smoother gameplay or actually up the resolution/quality settings to bring it back down to ~30 fps.

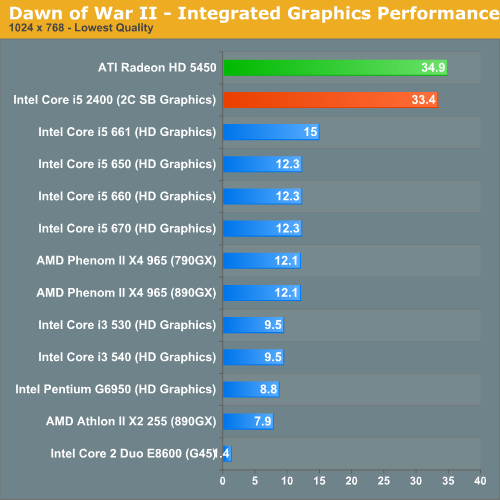

Dawn of War II

It's not always a clear victory for Sandy Bridge. In our Dawn of War II test the 5450 pulls ahead, although by only a small margin.

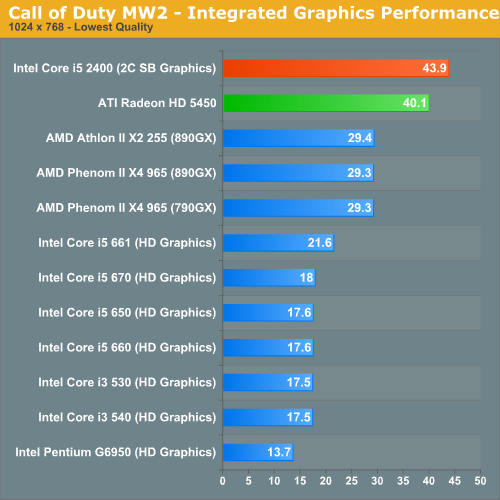

Call of Duty Modern Warfare 2

Sandy is once again on top of the 5450 in Modern Warfare 2. Although I'm not sure these frame rates are high enough to really up quality settings any more, they are at least smooth - which is more than I can say for the first gen HD Graphics.

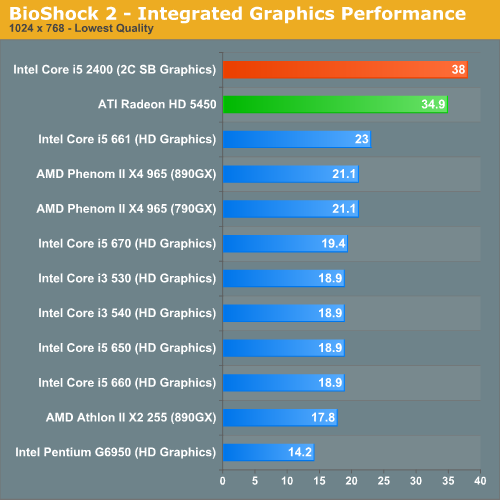

BioShock 2

Intel promised to deliver a 2x improvement in integrated graphics performance with Sandy Bridge. We're getting a bit more than that here in BioShock 2.

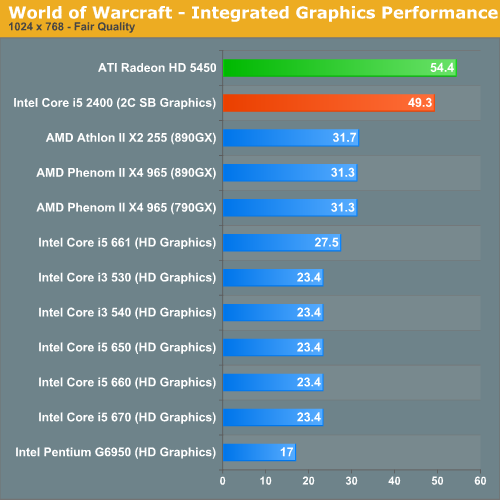

World of Warcraft

World of Warcraft is finally playable with Intel's Sandy Bridge graphics. The Radeon HD 5450 is 10% faster here.

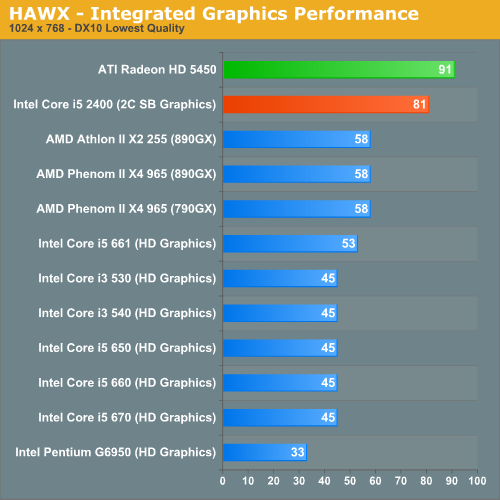

HAWX

Sandy Bridge Graphics Performance Summary

This is still a very early look. Neither the drivers nor the hardware are final, but the initial results are very promising. Sandy Bridge puts all current integrated graphics solutions to shame, and even looks to nip at the heels of low end discrete GPUs. For HTPC users, Clarkdale did a good enough job - but for light gaming there wasn't enough horsepower under the hood. With Sandy Bridge you can actually play modern titles, albeit at low quality settings.

If this is the low end of what to expect, I'm not sure we'll need more than integrated graphics for non-gaming specific notebooks. Update: It looks like all notebook Sandy Bridge parts, at least initially, will use the 12 EU IGPs. Our SB sample may also have been a 12 EU part; we're still awaiting confirmation.

200 Comments

iwodo - Sunday, August 29, 2010

The GPU is on the same die, so depending on what you mean by a true "Fusion" product, it is by AMD's definition (the creator of the term "Fusion") a fusion product.

iwodo - Sunday, August 29, 2010

You get about 10% more IPC on average. It varies widely, from 5% to ~30% clock for clock.

None of these tests used AVX code. I am not sure if you need to recompile to take advantage of the additional width for faster SSE code. (I am thinking such changes in the coding of instructions should require one.) AVX should offer some more improvement in many areas.

So much performance is here with even less peak power usage. If you factor in the Turbo Mode, Sandy Bridge actually gives you a huge boost in performance per watt!

So I don't understand why people are complaining.

yuhong - Sunday, August 29, 2010

Yes, AVX requires software changes, as well as OS support for using XSAVE to save AVX state.

BD2003 - Sunday, August 29, 2010

It sounds like Intel has a home run here. At least for my needs. Right now I'm running entirely on Core 2 chips, but I can definitely find a use for all these.

For my main/gaming desktop, the quad core i5s seem like they'll be the first upgrade that's big enough to move me away from my e6300 from 4 years ago.

For my HTPC, the integrated graphics seem like they're getting to a point where I can move past my e2180 + 9400 IGP. I need at least some 3D graphics, and the current i3/i5 don't cut it. Even lower power consumption + faster CPU, all in a presumably smaller package - win.

For my home server, I'd love to put the lowest end i3 in there for great idle power consumption but with the speed to make things happen when it needs to. I'd been contemplating throwing in a quad core, but if the on-die video transcoding engine is legitimate there will be no need for that.

That's still my main unanswered question: what's the deal with the video encoder/transcoder? Does it require explicit software support, or is it compatible with anything that's already out there? I'm mainly interested in real time streaming over LAN/internet to devices such as an iPad or even a laptop - if it can put out good quality 720-1080p H.264 at decent bitrates in real time, especially on a low end chip, I'll be absolutely blown away. Any more info on this?

_Q_ - Sunday, August 29, 2010

I do understand some of the complaints, but Intel is running a business and so they do what is in their best interest.

Yet, concerning USB 3, leaving it out seems too much of a disservice to customers. It should be in, without any third-party add-on chip!

I think it is shameful of them to delay this further just so that they can get their LightPeak thing into the market. I read nothing about that in this review, so I wonder, when will even that one come?!

I can only hope AMD does support it (I haven't read about it) and they start getting more of the market; maybe that will show these nearsighted Intel guys.

tatertot - Sunday, August 29, 2010

Lightpeak would be chipset functionality, at least at first.

Also, Lightpeak is not a protocol; it's protocol-agnostic, and can in fact carry USB 3.0.

But, rant away if you want...

Guimar - Sunday, August 29, 2010

Really need one

Triple Omega - Sunday, August 29, 2010

I'm really interested to see how Intel is going to price the higher-end of these new CPUs, as there are several hurdles:

1) The non-Ks are going up against highly overclockable 1366 and 1156 parts. So pricing the K-models too high could mean trouble.

2) The LGA-1356 platform housing the new consumer high-end (LGA-2011 will be server-only) will also arrive later in 2011. Since these are expected to have up to 8 cores, pricing the higher 1155 CPUs too high will force a massive price drop when 1356 arrives (or the P67 platform will collapse). And 1366 has shown that such a high-end platform needs the equivalent of an i7 920 to be successful. So pricing the 2600K at $500 seems impossible. Even $300 would not leave room for a $300 1356 part, as that will, with 6-8 cores, easily outperform the 2600K.

It will also be quite interesting to see how those overclocking limits develop when 1356 comes out, as imposing limits there too could make the entire platform fail (an overclocked 2600K beating a 6-core 1356 CPU, for example). And of course there is AMD's response to all this. Will they profit from Intel's overclocking limits? Will they grab back some of the high end? Will they force Intel to change their pricing on 1155/1356?

@Anand:

It would be nice to see another PCIe 2.0 x8 SLI/CF bottleneck test with the new HD 6xxx series when the time comes. I'm interested to see if the GPUs will catch up with Intel's limited platform choice.

thewhat - Sunday, August 29, 2010

I'm disappointed that you didn't test it against 1366 quads. The triple channel memory and a more powerful platform in general have a significant advantage over 1156, so a lot of us are looking at those CPUs. Especially since the i7 950 is about to have its price reduced.

A $1000 six-core 980X doesn't really fit in there, since it's at a totally different price point.

I was all for the 1366 as my next upgrade, but the low power consumption of Sandy Bridge looks very promising in terms of silent computing (less heat).

SteelCity1981 - Sunday, August 29, 2010

What do you think the Core i7 980X uses? An LGA 1366 socket with triple channel memory support. So what makes you think that the Core i7 950 is going to perform any differently?