Low Power Server CPUs: the energy saving choice?

by Johan De Gelas on July 15, 2010 4:54 AM EST. Posted in IT Computing

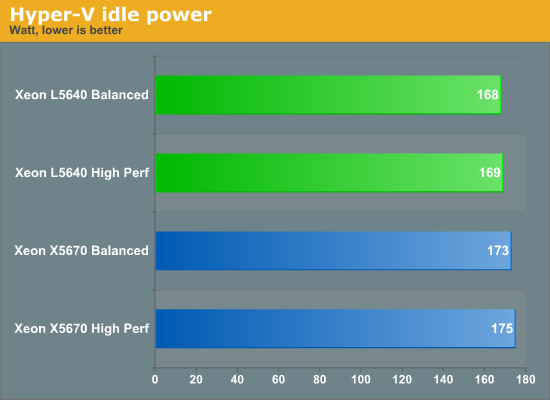

Idle power

We start by measuring idle power under the two most commonly used Power Plans of Windows 2008 R2 Enterprise (Hyper-V enabled): Balanced and High Performance. We described both Power Plans and their effect on the server earlier. This is the power consumption of the complete system, measured at the electrical outlet.

The Xeon family has made large steps forward in the power management department: fine-grained clock gating and core power gating reduce power significantly. However, this also results in a very small difference between the low power Xeon and the “Performance” Xeon. When idle, the power management hardware (PCU) shuts down five cores and clock gates all components of the remaining core that are not necessary. The result of all these hardware tricks is that it hardly matters whether you run those CPUs at 1.6 GHz or at 2.26/2.93 GHz. The “Balanced” power plan allows the CPU to scale back to 1.6 GHz, while the “High Performance” power plan never clocks lower than the advertised clock speed (2.26/2.93 GHz). The amazing thing is that even at the higher clock speed and voltage, the CPU only needs 2W more at the power outlet, so the real difference at the CPU level is even lower.

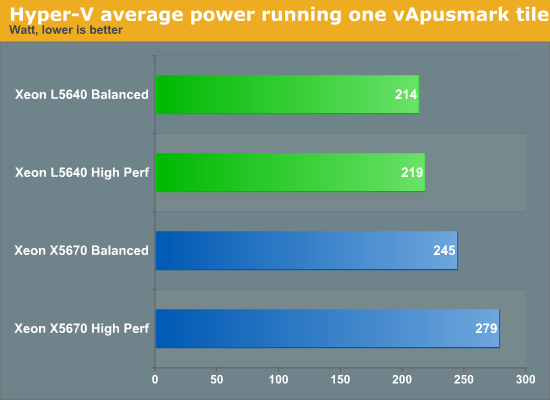

Let us put some load on those servers. One tile of vApus Mark I demands 12 virtual CPUs, and as we described before, it will demand about 25-45% of the dual CPU configuration.

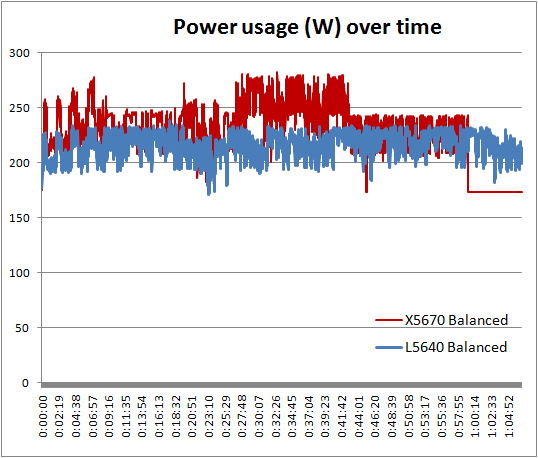

If we calculate the average power, everything seems to be “as expected”. However, the problem with this calculation is that some of the tests took longer than others. For example, the test on the L5640 took about 66 minutes, while the Xeon X5670 needed only 59 minutes.

And that was a real surprise to us: as we were not loading the CPU to 100%, we did not expect that one test would take so much longer than the other. But you can clearly see that the fastest Xeon went more quickly to an idle state.
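
Run time matters because what ends up on the bill is energy, which is average power multiplied by duration. A minimal sketch in Python: the two durations are the ones quoted above, while the average power figures are hypothetical placeholders (the measured values are not reproduced in the text), chosen only to show how a lower average power can still add up to more energy when the run takes longer.

```python
def energy_wh(avg_power_w: float, duration_min: float) -> float:
    """Energy in watt-hours: average power (W) times duration (h)."""
    return avg_power_w * duration_min / 60.0


# Durations come from the text; avg_power_w values are HYPOTHETICAL
# placeholders, not measurements from the article.
runs = {
    "Xeon L5640": {"avg_power_w": 200.0, "duration_min": 66},
    "Xeon X5670": {"avg_power_w": 215.0, "duration_min": 59},
}

for name, run in runs.items():
    print(f"{name}: {energy_wh(**run):.1f} Wh")
```

With these placeholder numbers the CPU with the lower average power still consumes more watt-hours over its run, which is why comparing averages alone can mislead.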

49 Comments


cserwin - Thursday, July 15, 2010 - link

Some props for Johan, too, maybe... nice article.

JohanAnandtech - Thursday, July 15, 2010 - link

Thanks! We have more data on "low power choices", but we decided to cut it up into several articles to keep it readable.

DavC - Thursday, July 15, 2010 - link

Not sure what's going on with the electricity cost calcs on your first page. Firstly, you're converting unnecessarily from watts to amps (meaning you're unnecessarily splitting into US and Europe figures).

Basically, here in the UK, 1kW, which is what the 4 PCs in your example consume, costs roughly 10p per hour. Working on an average of 720 hours in a month, that would give a grand total of £72 a month to run those 4 PCs 24/7.

£72 to you US guys is around $110. And I can't imagine your electricity is priced any dearer than ours.

giving a 4 year life cycle cost of $5280.

have I missed something obvious here or are you just out with the maths?
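
The arithmetic in this comment can be checked with a short sketch. All figures are the commenter's own (1 kW load, roughly 10p per kWh, 720 hours per month, £72 rounded to about $110); the function and constant names are made up for illustration.

```python
HOURS_PER_MONTH = 720      # commenter's figure (30 days x 24 h)
PRICE_GBP_PER_KWH = 0.10   # commenter's figure: roughly 10p per kWh
LOAD_KW = 1.0              # commenter's figure: four PCs drawing 1 kW in total
USD_PER_MONTH = 110        # commenter's rounded conversion of 72 GBP
MONTHS = 4 * 12            # four-year life cycle


def monthly_cost_gbp(load_kw: float, price_gbp_per_kwh: float,
                     hours: int = HOURS_PER_MONTH) -> float:
    """Monthly cost of a constant load: kW x hours x price per kWh."""
    return load_kw * hours * price_gbp_per_kwh


print(f"Per month: GBP {monthly_cost_gbp(LOAD_KW, PRICE_GBP_PER_KWH):.2f}")
print(f"Four years: USD {USD_PER_MONTH * MONTHS}")
```

This only reproduces the per-kWh view taken in the comment; it does not model a datacenter that bills per reserved amp.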

JohanAnandtech - Thursday, July 15, 2010 - link

You are calculating from the POV of a datacenter. I take the POV of a datacenter client, who has to pay per amp that he/she "reserves". AFAIK, datacenters almost always count in amps, not watts. (Also, 10p per kWh seems low.)

MrSpadge - Thursday, July 15, 2010 - link

With P=V*I at constant voltage, power and amps are really just different names for the same thing, i.e. equivalent. Personally I prefer W, because this is what matters in the end: it's what I pay for and what heats my room. Amps by themselves don't mean much (as long as you're not melting the wires), as voltages can easily be converted.

Maybe the datacenter guys just like to juggle around smaller numbers? Maybe they should switch over to hectowatts instead? ;)

MrS
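
The relation P=V*I used in this comment can be sketched as follows. The 250 W load is a hypothetical server, and 230 V and 120 V are assumed nominal mains voltages for Europe and the US; none of these values are taken from the article's measurements.

```python
def current_amps(power_w: float, voltage_v: float) -> float:
    """Current drawn by a load, from P = V * I rearranged to I = P / V."""
    return power_w / voltage_v


SERVER_POWER_W = 250.0  # HYPOTHETICAL load, for illustration only

for voltage_v in (230.0, 120.0):  # assumed nominal mains voltages
    amps = current_amps(SERVER_POWER_W, voltage_v)
    print(f"{SERVER_POWER_W:.0f} W at {voltage_v:.0f} V draws {amps:.2f} A")
```

The same wattage maps to a different current depending on the supply voltage, so an amp figure is only meaningful together with the voltage it refers to.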

JohanAnandtech - Thursday, July 15, 2010 - link

I am surprised the electrical engineers have not jumped in yet :-). As you indicate yourself, the circuits/wires are made for a certain amount of amps, not watts. That is probably the reason datacenters specify the amount of power you get in watt.

JohanAnandtech - Thursday, July 15, 2010 - link

I meant amps in that last sentence of course.

knedle - Thursday, July 15, 2010 - link

Watts are universal; it doesn't matter if you're in the UK or the US: 220W is still 220W. But with amps it's different. Since the voltage in Europe is higher than in the USA (EU=220V, US=110V), and P=U*I, you get twice as much power for 1A, which means that in the USA your server will use 2A, while the same server in the UK will use only 1A...

has407 - Friday, July 16, 2010 - link

No, not all Watts are the same.

Watts in a decent datacenter come with power distribution, cooling, UPS, etc. Those typically add 3-4x to the power your server actually consumes. Add to that the amortized cost of the infrastructure and you're looking at 6-10x the cost of the power your server consumes directly.

Such is the fallacy of simplistic power/cost comparisons (and Johan, you should know better). Can we now dispense with the idiotic cost/KWH calculations?

Penti - Saturday, July 17, 2010 - link

A high-performance server probably can't run on 1A at 230V, which is the cheapest option in some datacenters. However, something like half a rack or a quarter rack would probably come with 10A/230V, more than enough for a small collection of 4 moderate servers. The big cost is cooling: a normal rack might handle just 4kW of heat/power (up to 6kW; beyond that it's high density), and then you need more expensive stuff. A cheap rack won't handle 40 250W servers in other regards. 6kW of power/cooling and 2x16A/230V shouldn't be that expensive. Anyway, you also pay for cooling (and UPS). Even cheap solutions normally charge per used kW here, though. Four 2U servers take up about a quarter rack anyway, and something like 15 amps is needed in the States.