Dynamic Power Management: A Quantitative Approach

by Johan De Gelas on January 18, 2010 2:00 AM EST - Posted in IT Computing

AMD Power Management

Variable clock rates and CPU power management started with the Intel 386SL, but that would take us a bit too far back in history. Let's start with the introduction of the K6-2+ and the mobile PIII. From that moment on, both Intel and AMD have used Dynamic Frequency and Voltage Scaling (DFVS) per CPU, marketed as "PowerNow!", "SpeedStep" and many other names. In a multi-core CPU this means that all cores run at the clock speed required by the most heavily loaded core. Each clock speed requires a corresponding core voltage, so all cores also share the same voltage.

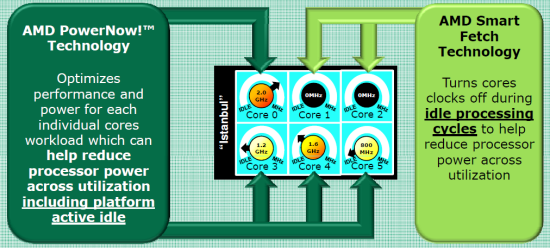

With the introduction of the K10 (Family 10h) generation, aka "Barcelona", in 2007, AMD reduced dynamic power with three different technologies:

1. Dynamic Frequency Scaling per Core: each core runs at its own clock.

2. Separate power planes for the core and "uncore" parts of the CPU.

3. Clock gating at the CPU block level.

The effect of (1) on performance/watt is not a complete success story. Because the cores still share one voltage, lowering the clock of a single core only reduces its power linearly with frequency, and some OS schedulers will always try to "load balance" across the cores to avoid having one core get hot (which increases static power). As a result the power savings due to (1) are relatively small, and the lag in transitioning from one P-state to another reduces performance, as our benchmarks will confirm. AMD Opterons typically support four to five P-states. The Opteron "Shanghai" 2389 in this test supports 2.9, 2.3, 1.7 and 0.8GHz. The six-core Opteron 2435 supports 2.6, 2.1, 1.7, 1.4 and 0.8GHz. [2]
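The "linear with frequency" point can be illustrated with the common CMOS approximation that dynamic power scales with C·V²·f. The sketch below is only an illustration, not AMD's model: the frequencies are the Opteron 2389 P-states quoted above, but the voltage table is a hypothetical placeholder chosen to show the trend.

```python
# Relative dynamic power using the common approximation P ~ C * V^2 * f.
# Frequencies are the Opteron 2389 P-states from the text; the voltages
# are HYPOTHETICAL values for illustration, not AMD specifications.

P_STATES_GHZ = [2.9, 2.3, 1.7, 0.8]
ASSUMED_VOLTS = {2.9: 1.30, 2.3: 1.20, 1.7: 1.10, 0.8: 1.00}  # assumption


def relative_dynamic_power(freq_ghz, volts, ref_freq_ghz, ref_volts):
    """Dynamic power relative to a reference operating point."""
    return (volts / ref_volts) ** 2 * (freq_ghz / ref_freq_ghz)


def frequency_only(freq_ghz, ref_freq_ghz=2.9):
    """Voltage stays fixed, so power scales linearly with frequency."""
    return relative_dynamic_power(freq_ghz, 1.0, ref_freq_ghz, 1.0)


def frequency_and_voltage(freq_ghz, ref_freq_ghz=2.9):
    """Voltage drops together with frequency (assumed voltage table)."""
    return relative_dynamic_power(
        freq_ghz, ASSUMED_VOLTS[freq_ghz],
        ref_freq_ghz, ASSUMED_VOLTS[ref_freq_ghz],
    )


if __name__ == "__main__":
    for f in P_STATES_GHZ:
        print(f"{f:.1f} GHz: frequency only {frequency_only(f):.2f}, "
              f"with voltage scaling {frequency_and_voltage(f):.2f}")
```

Whatever the real voltages are, the frequency-only column can never fall faster than the clock ratio itself, which is why per-core frequency scaling on a shared voltage yields modest savings.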

Separate power planes provide several benefits. First, the cores can go to a sleep state (C-state) while the memory controller keeps working for an external device (e.g. via DMA). Second, AMD is able to run the Northbridge and L3 cache out of sync with the cores. This lowers power significantly, while performance only decreases slightly. Overall, performance/watt clearly increases.

Clock gating reduces power by 20 to 40% according to some publications [3]. This is probably the most important technology for the server market: as server code executes few floating point instructions, gating the clock to the FPU saves quite a bit of power. As a matter of fact, the highest power numbers are measured with floating point intensive benchmarks like LINPACK; typical server benchmarks based on databases or web servers do not even come close. LINPACK needs 20-25% more power than our integer based benchmarks, despite the fact that in both situations the CPU reports 100% utilization.

AMD added "Smart Fetch" to the newer "Shanghai" Opteron, which is essentially clock gating at the core level (making it new technology number four). The main goal is to make idling cores go to a "clock disabled" sleep state (AMD's C1-state) instead of a low frequency state (P-state). The problem is that snoops from the active core(s) might wake up the sleeping core too quickly, and those snoops would get a very slow "just woke up" answer. To avoid this, the idle core will dump the contents of its L1 and L2 caches into the L3 cache before it goes to the clock gated C1 state. This could not be done on Barcelona, as the 2MB L3 cache would fill up quickly if three cores dumped their L1 and L2 data into the L3 cache. However, it is important to remark that even when three cores are clock gated, it is unlikely they will take 1.7MB away (512 KB * 3 + 64 KB * 3) as shared cachelines between the cores are always kept inside the otherwise exclusive L3 cache of all quad-core Opterons. Clock gating at the core level reduces dynamic power to zero, which allows the new Opteron to save up to 5W per core.
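The cache arithmetic in this paragraph can be checked with a few lines of Python. This is a rough sketch: the per-core sizes are the figures used in the text, while the 6 MB L3 size for "Shanghai" is not stated in this article and is included here as an assumption for comparison.

```python
# Worst-case cache footprint when idle cores flush L1 and L2 into L3.
# Per-core cache sizes are the figures used in the text; the 6 MB L3 size
# for "Shanghai" is NOT stated in the article and is an assumption here.

KB_PER_MB = 1024
L2_KB_PER_CORE = 512
L1_KB_PER_CORE = 64  # figure used in the text's calculation


def flushed_kb(idle_cores):
    """Upper bound on data dumped into L3 by the given number of idle cores."""
    return idle_cores * (L2_KB_PER_CORE + L1_KB_PER_CORE)


def l3_share(idle_cores, l3_mb):
    """Largest fraction of the L3 cache the flushed data could occupy."""
    return flushed_kb(idle_cores) / (l3_mb * KB_PER_MB)


if __name__ == "__main__":
    total = flushed_kb(3)
    print(f"3 idle cores flush at most {total} KB ({total / KB_PER_MB:.2f} MB)")
    print(f"Share of a 2 MB L3 (Barcelona): {l3_share(3, 2):.0%}")
    print(f"Share of a 6 MB L3 (assumed for Shanghai): {l3_share(3, 6):.0%}")
```

Three idle cores could flush at most 1728 KB, which is where the roughly 1.7MB figure comes from. That would take most of a 2MB L3 but well under a third of the assumed 6MB one.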

That is quite impressive: a quad-core Shanghai Opteron uses about 10W in idle, while a quad-core "Barcelona" Opteron uses around 25W. This is also confirmed by the measurements on desktop CPUs performed by LostCircuits. AMD still has some catching up to do: the six-core Opteron "Lisbon" (set to launch around March 2010) will go from C1 to the hardware controlled C1E state.

Intel Power Management

Intel moved to pretty aggressive clock gating at the CPU block level in its "Woodcrest" server CPU in 2006. Intel also introduced cache sizing: the necessary data in the L2 cache is reduced to a minimum and cache blocks are turned off. While Intel was an innovator when it came to block clock gating and cache power reductions, AMD was first with independent power planes and independent core frequencies. It shows that even in the power management race, AMD and Intel are leapfrogging each other. Intel caught up with AMD and leapfrogged AMD again when it introduced the Xeon "Nehalem" 5500 series, where core and uncore got independent power planes.

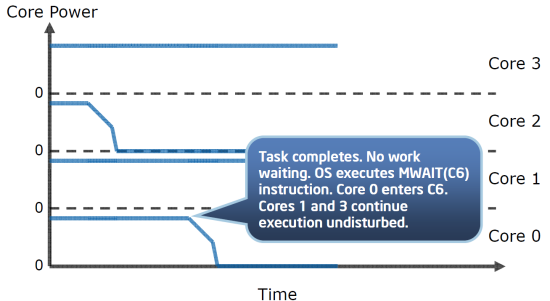

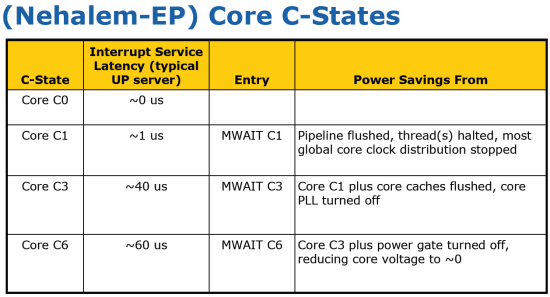

However, Intel went one step further. It not only enabled clock gating for each core, but also power gating. Clock gating only reduces the dynamic power, while power gating reduces both dynamic and static (mostly leakage) power. Thanks to the built-in Power Control Unit (PCU, hardware circuit), Intel promises us that cores can go to the lowest C6 sleep state while other cores continue to work "undisturbed".
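The difference between the two gating techniques can be captured in a toy model. The wattages below are hypothetical, and treating power-gated leakage as exactly zero is an idealization; only the relationships between the three states reflect the text.

```python
# Toy model of per-core power under clock gating and power gating.
# All wattages passed in are HYPOTHETICAL; only the relationships matter.


def core_power(dynamic_w, static_w, state):
    """Return core power in watts for a given gating state.

    dynamic_w: switching power, present only while the clock toggles
    static_w:  leakage power, present whenever the core has voltage
    """
    if state == "active":
        return dynamic_w + static_w
    if state == "clock_gated":
        # Clock stopped: dynamic power disappears, leakage remains.
        return static_w
    if state == "power_gated":
        # Supply cut off: dynamic and (ideally) static power both disappear.
        return 0.0
    raise ValueError(f"unknown state: {state}")


if __name__ == "__main__":
    for state in ("active", "clock_gated", "power_gated"):
        watts = core_power(dynamic_w=5.0, static_w=3.0, state=state)
        print(f"{state:12s} {watts:.1f} W")
```

The larger the leakage share of a core's power, the more power gating gains over clock gating alone.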

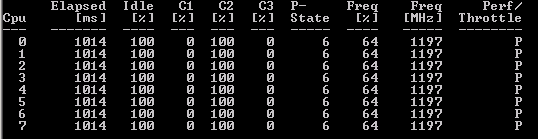

Below you can see how the operating system sees this. We asked the Windows 2008 kernel to tell us what ACPI state the cores use when the CPU is running completely idle. Notice that the clock speed of each logical core is reduced to 1.2GHz, another sign that the CPU is not processing anything significant.

So while the operating system asks the CPU to go to the ACPI C2 state, the PCU overrides the operating system's request and should force the idle cores to go relatively quickly to C6, achieving lower power consumption. In C6, the core is not only completely clock gated, it is power gated too, so the leakage of the idle core is reduced to almost zero. The older Xeon 5400 series was only capable of placing two cores into C6 at the same time (i.e. if only one core was idle, it couldn't enter C6). The deeper the sleep, the slower the core wakes up, so Intel severely lowered the time necessary to go to the C6 state and back in the Nehalem architecture.

The real magic of the "Nehalem" based architecture is that the integrated power switch makes this transition extremely quickly. Instead of 200µs [4] in the older Penryn processor (the Xeon 54xx is based on this architecture), the transition time is reduced to only 60µs. This should allow the Xeon 5500, 3500, and 3400 series to transition quickly to C6 with a small performance impact. We will check these claims.
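To get a feeling for why the shorter transition matters, the sketch below estimates how much of each second a core would spend in C6 transitions. It treats the quoted latencies as the cost of one full enter-and-exit cycle, which is an assumption, and the wake-up rate is a hypothetical input rather than a measured value.

```python
# Fraction of wall-clock time a core spends in C6 transitions.
# Latencies are the figures quoted in the text, treated here as the cost
# of one full enter-and-exit cycle (an assumption). The wake-up rate is
# a HYPOTHETICAL workload parameter.

C6_TRANSITION_US = {"Penryn": 200, "Nehalem": 60}


def transition_overhead(transition_us, wakeups_per_second):
    """Share of each second spent entering and leaving C6 (capped at 1.0)."""
    return min(1.0, transition_us * 1e-6 * wakeups_per_second)


if __name__ == "__main__":
    rate = 1000  # hypothetical: the core is woken 1000 times per second
    for name, latency in C6_TRANSITION_US.items():
        share = transition_overhead(latency, rate)
        print(f"{name}: {share:.1%} of the time spent in transitions")
```

Under these assumptions, a core woken a thousand times per second would lose about a fifth of its time to transitions with the older latency and well under a tenth with the newer one.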

The latest Intel Xeons have lots of P-states: one for each 133MHz speed bin from 1.2GHz to the maximum advertised clock speed. In other words, every 133MHz ratio between the lowest frequency P-state and the highest frequency P-state is a valid P-state.
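The P-state ladder described here is easy to enumerate. The following sketch assumes a base clock of exactly 400/3 MHz (the "133MHz" in the text) and uses a 2933 MHz maximum purely as an example value; it ignores Turbo Boost bins.

```python
# Enumerate P-state frequencies in 133 MHz bins starting at 1.2 GHz.
# The exact base clock (400/3 MHz) and the example maximum frequency
# are assumptions for illustration; Turbo Boost bins are not modeled.

BASE_CLOCK_MHZ = 400 / 3   # ~133.33 MHz
LOWEST_MULTIPLIER = 9      # 9 * 133.33 MHz = 1200 MHz


def p_state_frequencies(max_mhz):
    """All bin frequencies in MHz from max_mhz down to 1.2 GHz."""
    top = round(max_mhz / BASE_CLOCK_MHZ)
    return [round(m * BASE_CLOCK_MHZ)
            for m in range(top, LOWEST_MULTIPLIER - 1, -1)]


if __name__ == "__main__":
    freqs = p_state_frequencies(2933)  # example maximum clock
    print(f"{len(freqs)} P-states: {freqs}")
```

For a 2933 MHz part this yields 14 P-states, compared with the four or five quoted for the Opterons earlier.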

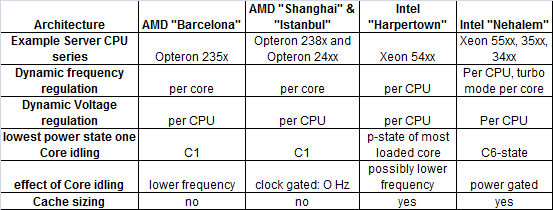

Below you will find an overview of AMD's and Intel's techniques to reduce power while processing.

Intel supports lots of P-states but makes much less use of them than AMD. Despite the fact that the infrastructure is there (each core has its own PLL) Intel doesn't generally run cores at different clock speeds. Sanjay Sharma of Intel:

In the steady state, all active cores run at the same frequency, which equals the highest requested frequency of any of the active cores. When there is a frequency change request from one core that results in a change in the resolved frequency, all cores will change to that new resolved frequency. However, not all cores will necessarily change frequency at the same time, since the instruction stream on each core needs to reach an end of macro instruction boundary before it can change frequency. If a core is running a very long instruction when the frequency change request arrives, that core will change frequency later than the other cores that reached the interruptible point sooner. As a result, for very short time periods, it is possible that cores could be running at different frequencies.
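The steady-state rule in this quote can be sketched in a few lines. This is a simplified illustration of the described behavior, not Intel's implementation; the function name and the example requests are hypothetical.

```python
# Resolve the shared core frequency as described in the quote: all active
# cores run at the highest frequency requested by any active core.
# Function name and example values are hypothetical.


def resolved_frequency(requests_mhz, active):
    """Highest requested frequency among active cores; None if all are idle."""
    wanted = [f for f, is_active in zip(requests_mhz, active) if is_active]
    return max(wanted) if wanted else None


if __name__ == "__main__":
    requests = [1200, 2933, 1600, 1200]  # per-core requests in MHz
    active = [True, False, True, True]   # the second core is idle
    print(resolved_frequency(requests, active))
```

In this example the idle core's high request is ignored, so the three active cores all resolve to 1600 MHz. The sketch does not model the brief window in which cores switch at different instruction boundaries.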

The most likely reason why Intel does not allow cores to run at different clock speeds for prolonged periods is that the voltage has to stay at the level needed for the highest clock speed anyway. AMD has some catching up to do, as the lowest C-state of an idle core is only C1. This situation will improve when the Magny-Cours and Lisbon Opterons arrive, as those CPUs will support a C1E state like their notebook siblings.

35 Comments

UrQuan3 - Thursday, January 21, 2010 - link

I'm trying to remember for 2008, but wasn't there a way to either force or suggest thread/core affinity? It looks like the scheduler was hopping all over the place on the Opterons.

JarredWalton - Thursday, January 21, 2010 - link

You guys better pay attention and answer this post, or his species will try to enslave and/or wipe out the entire galaxy! ;-)

mino - Wednesday, January 20, 2010 - link

I mean, not, why do you use them for this article. They are fine examples of low-power platforms, even if from vastly different markets.

But,

WHY ON EARTH DO YOU KEEP TALKING LIKE THEY WERE COMPARABLE THROUGHOUT THE ARTICLE ???

IntelUser2000 - Wednesday, January 20, 2010 - link

By the way, I don't know if you have the settings wrong or that's how it works, the Turbo Boost mode is not affected on the Home PC versions of Windows. Balanced uses Turbo Boost just as well on my Windows 7 Home Premium with Core i5 661.

JarredWalton - Wednesday, January 20, 2010 - link

I was wondering this as well, but I'm not familiar with Windows Server... what I do know is that Power Saver on consumer Windows OSes really limits the CPU frequency scaling features, and it sort of looks like Balanced on the Server OS has aspects of consumer "Power Saver" as well as some elements of "Balanced". Odd to see only two power settings available, where Win7 now has at least 3 and often 5.

mino - Wednesday, January 20, 2010 - link

It seems a classic example of KISS strategy of choosing the most-sensible options and so reducing decision complexity for IT people. Modes like "Max battery" have anyway no reason for existence on a server box.

RobinBee - Tuesday, January 19, 2010 - link

If you use your pc as a music server: Power saving methods ruin sound quality even if using a good sound card. The problem is »electronic« sound distortion. I do not know why this happens.

Also: The chosen number of IRQ pr. second in a net card can ruin sound quality too. Why, I do not know.

Anato - Tuesday, January 19, 2010 - link

I'm interested to see results from different operating systems which may be better at controlling processes in different CPU's. Namely no CPU hopping and is their power management as efficient as Windows is. Most interested at:

Linux and Solaris

JohanAnandtech - Tuesday, January 19, 2010 - link

Excellent suggestion :-). Problem is to keep the application the same. We currently tested SQL Server 2008 on Windows 2008 and of course this can not be done on Linux. However, I am not stranger to linux as a server. I am no fan of MySQL on Windows, but maybe this has improved. Would MySQL on Windows and Linux makes sense as a comparison?

maveric7911 - Tuesday, January 19, 2010 - link

Why not use oracle ;)