Better Image Quality: CSAA & TMAA

NVIDIA’s next big trick for image quality is that they’ve revised Coverage Sample Anti-Aliasing. CSAA, which was originally introduced with the G80, is a lightweight method of better determining how much of a polygon actually covers a pixel. By merely testing polygon coverage and storing the results, the ROP can get more information without the expense of fetching and storing additional color and Z data as done with a regular sample under MSAA. The quality improvement isn’t as pronounced as just using more multisamples, but coverage samples are much, much cheaper.

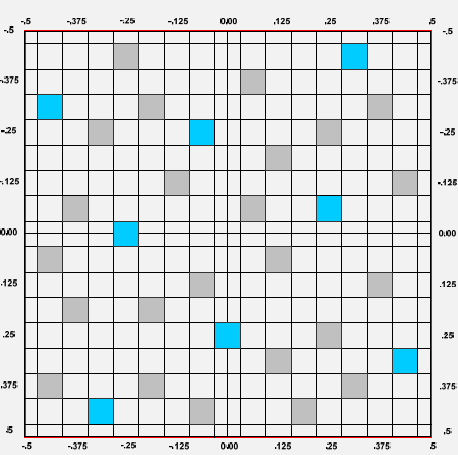

32x CSAA sampling pattern

For the G80 and GT200, CSAA could only test polygon edges. That’s great for resolving aliasing at polygon edges, but it doesn’t solve other kinds of aliasing. In particular, GF100 will be waging a war on billboards – flat geometry that uses textures with transparency to simulate what would otherwise require complex geometry. Fences, leaves, and patches of grass in fields are three very common uses of billboards, as they are “minor” visual effects that would be very expensive to do with real geometry, and would benefit little from the quality improvement.

Since billboards are faking geometry, regular MSAA techniques do not remove the aliasing within the billboard. To resolve that, DX10 introduced alpha to coverage functionality, which allows MSAA to anti-alias the fake geometry by using the alpha mask as a coverage mask for the MSAA process. The end result of this process is that the GPU creates varying levels of transparency around the fake geometry, so that it blends better with its surroundings.
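To make the idea concrete, here is a minimal sketch of alpha to coverage for a single pixel. It assumes the simplest possible mapping, where the alpha value is rounded to a whole number of covered samples. Real hardware chooses which samples to set using its own patterns, so the function names and the mask ordering here are purely illustrative.

```python
from typing import List


def alpha_to_coverage_mask(alpha: float, num_samples: int) -> List[bool]:
    """Turn a texture alpha value into a per-sample coverage mask.

    Illustrative assumption: the first `covered` samples are marked as covered.
    Actual GPUs pick the samples with hardware-specific patterns.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    covered = round(alpha * num_samples)
    return [i < covered for i in range(num_samples)]


def resolved_opacity(mask: List[bool]) -> float:
    """Opacity seen after the samples are averaged at resolve time."""
    return sum(mask) / len(mask)


if __name__ == "__main__":
    mask = alpha_to_coverage_mask(0.5, 4)
    print(mask, resolved_opacity(mask))
```

The key point is that the resolved opacity can only take as many distinct values as there are possible counts of covered samples.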

It’s a great technique, but it wasn’t done all that well by the G80 and GT200. In order to determine the level of transparency to use on an alpha to coverage sampled pixel, the anti-aliasing hardware on those GPUs used MSAA samples to test the coverage. With up to 8 samples (8xQ MSAA mode), the hardware could only compute 9 levels of transparency, which isn’t nearly enough to establish a smooth gradient. So while alpha to coverage testing allowed for some anti-aliasing of billboards, the outcome wasn’t great. The only way to achieve really good results was to use super-sampling on billboards through Transparency Super-Sample Anti-Aliasing, which was ridiculously expensive given that when billboards are used, they usually cover most of the screen.

For GF100, NVIDIA has made two tweaks to CSAA. First, additional CSAA modes have been unlocked – GF100 can do up to 24 coverage samples per pixel as opposed to 16. The second change is that the CSAA hardware can now participate in alpha to coverage testing, a natural extension of CSAA’s coverage testing capabilities. With this ability CSAA can test the coverage of the fake geometry in a billboard along with MSAA samples, allowing the anti-aliasing hardware to fetch up to 32 samples per pixel. This gives the hardware the ability to compute 33 levels of transparency, which while not perfect allows for much smoother gradients.
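The sample-count arithmetic can be sketched in a few lines: with N samples, anywhere from 0 to N of them can be covered, which gives N + 1 transparency levels. The snippet below is a simplified model under that assumption, not a description of the actual hardware, and its function names are made up for illustration. It also shows how coarsely an alpha value gets quantized at 8 versus 32 samples.

```python
def transparency_levels(num_samples: int) -> int:
    """0..num_samples covered samples gives num_samples + 1 distinct levels."""
    return num_samples + 1


def quantize_alpha(alpha: float, num_samples: int) -> float:
    """Snap an alpha value to the nearest level the sample count can express."""
    return round(alpha * num_samples) / num_samples


if __name__ == "__main__":
    for n in (8, 32):
        step = 1 / n
        print(f"{n} samples: {transparency_levels(n)} levels, step {step}")
        print(f"  alpha 0.3 becomes {quantize_alpha(0.3, n)}")
```

Going from 8 to 32 samples shrinks the step between adjacent levels from 1/8 to 1/32 of full opacity, which is why the gradients get smoother but are still visibly quantized.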

The example NVIDIA has given us for this is a pair of screenshots taken from a field in Age of Conan, a DX10 game. The first screenshot is from a GT200 based video card running the game with NVIDIA’s 16xQ anti-aliasing mode, which is composed of 8 MSAA samples and 8 CSAA samples. Since the GT200 can’t do alpha to coverage testing using the CSAA samples, the resulting grass blades are only blended with 9 levels of transparency based on the 8 MSAA samples, giving them a dithered look.

Age of Conan grass, GT200 16x AA

The second screenshot is from GF100 running in NVIDIA’s new 32x anti-aliasing mode, which is composed of 8 MSAA samples and 24 CSAA samples. Here the CSAA and MSAA samples can be used in alpha to coverage, giving the hardware 32 samples from which to compute 33 levels of transparency. The result is that the blades of grass are still somewhat banded, but overall much smoother than what the GT200 produced. Bear in mind that since 8x MSAA is faster on the GF100 than it was on the GT200, and CSAA has very little overhead in comparison (NVIDIA estimates 32x has 93% of the performance of 8xQ), the entire process should be faster on GF100 even if it were running at the same speeds as GT200. Image quality improved, and at the same time the performance improved too.

Age of Conan grass, GF100 32x AA
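The difference between the two screenshots comes down to which samples are allowed to feed alpha to coverage. The sketch below models the two modes from the text as plain data. The class and field names are hypothetical, and the only hardware facts it encodes are the sample counts quoted above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AAMode:
    """An anti-aliasing mode described by its sample counts (illustrative model)."""
    name: str
    msaa_samples: int
    csaa_samples: int

    def alpha_levels(self, csaa_in_alpha_to_coverage: bool) -> int:
        """Transparency levels available to alpha to coverage in this mode."""
        samples = self.msaa_samples
        if csaa_in_alpha_to_coverage:
            samples += self.csaa_samples
        return samples + 1


# GT200: coverage samples cannot take part in alpha to coverage.
GT200_16XQ = AAMode("16xQ", msaa_samples=8, csaa_samples=8)
# GF100: coverage samples participate alongside the MSAA samples.
GF100_32X = AAMode("32x", msaa_samples=8, csaa_samples=24)

if __name__ == "__main__":
    print(GT200_16XQ.name, GT200_16XQ.alpha_levels(csaa_in_alpha_to_coverage=False))
    print(GF100_32X.name, GF100_32X.alpha_levels(csaa_in_alpha_to_coverage=True))
```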

The ability to use CSAA on billboards left us with a question however: isn’t this what Transparency Anti-Aliasing was for? The answer as it turns out is both yes and no.

Transparency Anti-Aliasing was introduced on the G70 (GeForce 7800GTX) and was intended to help remove aliasing on billboards, exactly what NVIDIA is doing today with MSAA. The difference is that while DX10 has alpha to coverage, DX9 does not – and DX9 was all there was when G70 was released. Transparency Multi-Sample Anti-Aliasing (TMAA) as implemented today is effectively a shader replacement routine to make up for what DX9 lacks. With it, DX9 games can have alpha to coverage testing done on their billboards in spite of DX9 not having this feature, allowing for image quality improvements on games still using DX9. Under DX10 TMAA is superseded by alpha to coverage in the API, but TMAA is still alive and well due to the large number of older games using DX9 and the large number of games yet to come that will still use DX9.

Because TMAA is functionally just enabling alpha to coverage on DX9 games, all of the changes we just mentioned to the CSAA hardware filter down to TMAA. This is excellent news, as TMAA has delivered lackluster results in the past – it was better than nothing, but only Transparency Super-Sample Anti-Aliasing (TSAA) really fixed billboard aliasing, and only at a high cost. Ultimately this means that a number of cases in the past where only TSAA was suitable are suddenly opened up to using the much faster TMAA, in essence making good billboard anti-aliasing finally affordable on newer DX9 games on NVIDIA hardware.

As a consequence of this change, TMAA’s tendency to have fake geometry on billboards pop in and out of existence is also solved. Here we have a set of screenshots from Left 4 Dead 2 showcasing this in action. The GF100 with TMAA generates softer edges on the vertical bars in this picture, which is what stops the popping from the GT200.

Left 4 Dead 2: TMAA on GT200

Left 4 Dead 2: TMAA on GF100

115 Comments

Ryan Smith - Wednesday, January 20, 2010 - link

From my perspective, unless they can deliver better than 5870 performance at a reasonable price, then their image quality improvements aren't going to be enough to seal the deal. If they can meet those two factors however, then yes, image quality needs to be factored in to some degree.

At this point I'm not sure where that would be, and part of that is diminishing returns. Tessellation will return better models, but adding polygons will result in diminishing returns. We're going to have to see what games do in order to see if the extra geometry that GF100 is supposed to be able to generate can really result in a noticeable difference.

Will game makers take advantage of it? That's the million-dollar question right now. NVIDIA is counting on them doing so, but it remains to be seen just how many devs are going to make meaningful use of tessellation (beyond just n-patching things for better curves), since DX11 game development is so young.

Consoles certainly have a lot to do with it. One very real possibility is that the bulk of games continue to be at the DX9 level until the next generation of consoles hits with DX11-like GPUs. I'll answer the rest of this in your next question.

The good news is that it takes very little work. Game assets are almost always designed at a much greater level of detail than what they ship at. The textbook example is Doom3, where the models were designed on the order of 1mil polygons; they needed to be designed that detailed in order to compute proper bump maps and parallax maps. Tessellation and the displacement map is just one more derived map in that regard - for the most part you only need to export an appropriate displacement map from your original assets, and NV is counting on this.

The only downsides to NV's plan are that: 1) Not everything is done at this high of a detail level (models are usually highly detailed, the world geometry not so much), and 2) Higher quality displacement maps aren't "free". Since a game will have multiple displacement maps (you have to MIP-chain them just like you do any other kind of map), a dev is basically looking at needing to include at least 1 more level that's even bigger than the others. Conceivably, not everyone is going to have extra disc space to spend on such assets. Although most games currently still have space to spare on a DVD-9, so I can't quantify how much of a problem that might be.

FITCamaro - Monday, January 18, 2010 - link

It will be fast. But from the size of it, its going to be expensive as hell.

I question how much success nvidia will have with yet another fast but hot and expensive card. Especially with the entire world in recession.

beginner99 - Monday, January 18, 2010 - link

Sounds nice but I doubt it's useful yet. DX11, probably takes at least 1-2 year till it takes off and the geometry power could be useful. Meaning could have easly waited a generation longer.

Power consumption will probably be deciding. The new Radeons do rather well in that area.

But anyway, i'm gonna wait. unless it is complete crap, it will at least help for Radeon prices going south, even if you don't buy one.

just4U - Monday, January 18, 2010 - link

On Amd pricing. It seems pretty fair for the 57XX line. Cheaper overall then the 4850 and 4870 on their launches with similiar performance and added DX11 features.

It would be nice to see the 5850 and 5870 priced about one third cheaper.. but here in Canada the cards are always sold out or of very limited stock so... I guess there is some justification for the higher pricing.

I still can't get a 275 cheap either. It's priced 30-40% higher then the 4870.

The only card(s) I've purchased so far are the 5750s as I feel the last gen products are still viable at their current pricing ... and I buy a fair amount of video cards (20-100 per year)

solgae1784 - Monday, January 18, 2010 - link

Let's just hope this GF100 doesn't become another disaster that was "Geforce FX".

setzer - Monday, January 18, 2010 - link

While on paper these specs look great for the High-End market (>500€ cards) how much will the mainstream market lose, as in the cards that sell around the 150~300€ bracket, which coincidently are the cards the most people tend to buy. Nvidia tends to scale down the specifications but how much will it be scaled down, what is the interest of the new IQ improvements if you can only use them on high-end cards because the mainstream cards can't handle it.

The 5 series radeons are similar, the new generation only has appeal if you go for the 58xx++ cards, which are overpriced, if you already have a 4850 you can hold out from buying a new card for at least one extra year, take the 5670, it has dx11 support but hasn't the horse power to use it effectively neutering the card from start as far as dx11 goes.

So even if Nvidia goes with a March launch of GF100, I'm guessing it will not be until June or July that we see the GeForce 10600GT (like or GX600GT, phun on ATI 10000 series :P), which will just have the effect of Radeon prices to stay where they are (high) and not where they should be in terms of performance (slightly on par with the HD 4000 series).

Beno - Monday, January 18, 2010 - link

page 2 isnt working

Zool - Monday, January 18, 2010 - link

It will be interesting how much of the geometry performance will be true in the end from all these hype. I wouldnt put my hand into fire on nvidias pr slides and in house demos. Like the pr graph with 600% teselation performance increase over ati card. It will surely have some dark sides too like everything around. Nothing is free. Until real benchmarks u cant trust too much to pr graphs these days.

haplo602 - Monday, January 18, 2010 - link

This looks similar to what Riva TNT used to be. Nvidia was promising everything including a cure for cancer. It turned out to be barely better than 3Dfx at that time because of clock/power/heat problems.

Seems Fermi will be a big bang in workstation/HPC markets. Gaming not so much.

DominionSeraph - Monday, January 18, 2010 - link

Anyone with at least half a brain had a TNT. Tech noobs saw "Voodoo" and went with the gimped Banshee, and those with money to burn threw in dual Voodoo 2's.

How does this at all compare to Fermi, whose performance will almost certainly not justify its price. The 5870's doesn't, not with the 5850 in town. Such is the nature of the bleeding edge.

Do you just type things out at random?