Intel's 32nm Update: The Follow-on to Core i7 and More

by Anand Lal Shimpi on February 11, 2009 12:00 AM EST. Posted in: CPUs

Seven billion dollars.

That’s the amount that Intel is going to spend in the US alone on bringing up its 32nm manufacturing process in 2009 and 2010.

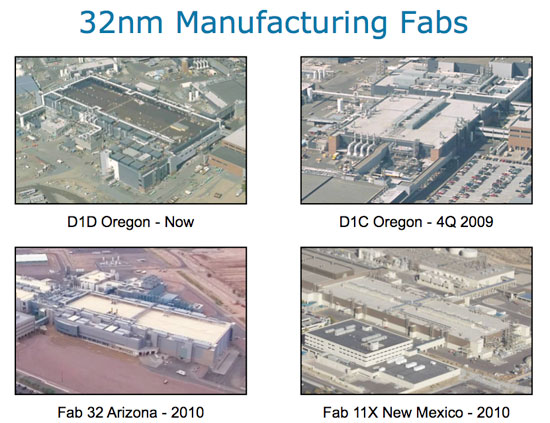

These are the fabs Intel is converting to 32nm:

In Oregon, Intel has the D1D fab, which is already producing 32nm parts, and D1C, which is scheduled to start 32nm production at the end of this year. Then there are two fabs in Arizona, Fab 32 and Fab 11X, both of which come online in 2010.

By the end of next year the total investment just to enable 32nm production in the US will be approximately eight billion dollars. At a time when all we hear about are bailouts, cutbacks and recession, this is welcome news.

If anything, Intel should have a renewed focus on competition given that its chief competitor finally woke up. That focus is there. The show must go on. 32nm will happen this year. Let’s talk about how.

64 Comments

Targon - Wednesday, February 11, 2009

For the CPU market, the problem is the ever-growing amount of cache memory. Intel processors are designed with a large cache as their answer to the improvements that AMD brings to the table.

I suspect that Intel will have more trouble after this move to the new fab process, because the difficulty of moving to a new process node grows at an exponential rate. We saw Intel hit a wall with the Pentium 3 line because they were not ready for a new process shrink at that point, so the P4 came out. When Intel got their process technology back on track, the people at Intel could go back to the Pentium 3 design (with improvements) to release the Core and Core 2 Duo.

There will come a time when an all new design will be needed in order to hold on to their lead, and that is when AMD will probably catch back up, if AMD can survive until then.

BSMonitor - Thursday, February 12, 2009

What an utter load of BS. Thanks fanboy. You get all that from wiki?

PrinceGaz - Wednesday, February 11, 2009

Even though my last three CPUs were all from AMD (they made sense at the time: K6-III/400, Athlon XP 1700+, Athlon 64 X2 4400+), I have to disagree with your comment about the improvements (presumably the integrated memory controller) which AMD brings to the table.

With Core i7, Intel has effectively removed the one last technological advantage AMD had: faster memory access. The fact that Intel chips still tend to have larger L3 caches is quite simply because Intel can afford to give it to them, being ahead of AMD on the fab process. For a high-end desktop chip where there is die space to spare, you could add some more cores which will probably sit idle (keeping four busy is hard enough, especially with HT), but adding more L3 cache (so long as its latency is not adversely affected) is a very cheap and easy way to use up the space and provide a bit of a speedup in almost everything.

AMD is currently fighting a losing game. The Phenom II (bug-fixed Phenom) cannot compete with Core i7 with AMD's current fabs. Unlike Intel, who have the steady tick-tock cadence of new process, then new design, with large teams working on each step, AMD seem to have one team working on a new design, which has to be made to work with whichever process looks like the best option at the time.

We need AMD to survive for the x86 (or x64, who came up with that :p ) CPU market to be competitive, but I think the head of AMD is going to have to get into bed with the head of IBM, else they are doomed to fall ever further behind Intel in chip design. The K10 is promising, but a long way off still, and AMD hasn't exactly been raking in the billions of dollars of profits recently to do that R&D. VIA have found an x86 CPU niche they can compete in. I fear that unless AMD pull an elephant out of the hat with the K10, they'll have to slot in between VIA and Intel in providing CPUs specialising in a particular performance sector, with Intel being the undisputed leader.

JonnyDough - Wednesday, February 11, 2009

Well said. I concur.