Intel's 32nm Update: The Follow-on to Core i7 and More

by Anand Lal Shimpi on February 11, 2009 12:00 AM EST - Posted in CPUs

The Manufacturing Roadmap

The tick-tock cadence may have come about at the microprocessor level, but its roots have always been in manufacturing. As long as I’ve been running AnandTech, Intel has introduced a new manufacturing process every two years. In fact, Intel has kept up this two-year cycle since 1989.

We saw the first 45nm CPUs with the Penryn core back in late 2007. Penryn, released at the very high end, spent most of 2008 making its way into the mainstream. Now you can buy a 45nm Penryn CPU for less than $100.

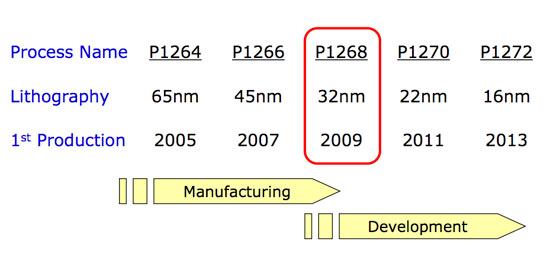

The next process technology, which Intel refers to internally as P1268, shrinks transistor feature size down to 32nm. The table above shows you that first production will be in 2009 and, after a brief pause to check your calendars, that means this year. More specifically, Q4 of this year.

I’ll get to the products in a moment, but first let’s talk about the manufacturing process itself.

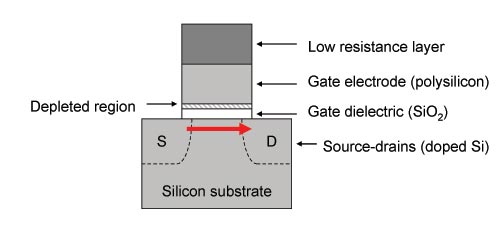

Here we have our basic CMOS transistor:

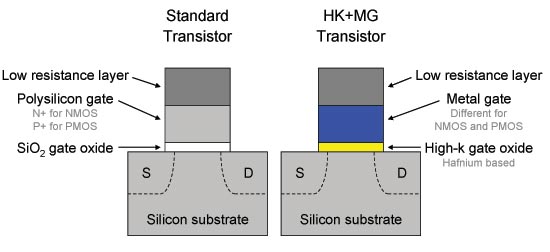

Current flows from source to drain when the transistor is on, and it isn’t supposed to flow when it’s off. As you shrink the transistor, all of its parts shrink. At 65nm Intel found that it couldn’t shrink the gate dielectric any more without leaking too much current through the gate itself. Back then the gate dielectric was 1.2nm thick (about the thickness of 5 atoms), so at 45nm Intel switched from an SiO2 gate dielectric to a high-k one based on hafnium. That’s where the high-k comes from.

The gate electrode also got replaced at 45nm with a metal to help increase drive current (more current flows when you want it to). That’s where the metal gate comes from.

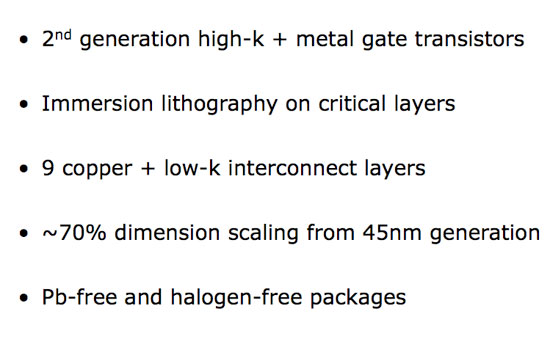

The combination of the two changes to the basic transistor gave us Intel’s high-k + metal gate transistors at 45nm, and at 32nm we have the second generation of those improvements.

The high-k gate dielectric gets a little thinner: it is now electrically equivalent to a 0.9nm SiO2 gate, down from 1.0nm at 45nm (the physical layer is presumably thicker since it’s hafnium-based), and we’ve still got a metal gate.
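The "equivalent to 0.9nm of SiO2" phrasing refers to equivalent oxide thickness (EOT): a high-k film can be physically thicker than SiO2 yet give the same gate capacitance, because capacitance per area scales with k divided by thickness. The sketch below converts between the two. It assumes a single ideal high-k layer with no interfacial oxide and an illustrative k of 20 for the hafnium-based film; Intel has not published these parameters here.

```python
# Sketch: convert between equivalent oxide thickness (EOT) and the physical
# thickness of a high-k film. Assumes a single ideal high-k layer (no
# interfacial SiO2) and an illustrative k value; neither comes from Intel.

K_SIO2 = 3.9  # relative permittivity of SiO2


def physical_thickness_nm(eot_nm: float, k_high: float) -> float:
    """Physical thickness giving the same capacitance per area as eot_nm of SiO2."""
    return eot_nm * k_high / K_SIO2


def equivalent_oxide_nm(t_phys_nm: float, k_high: float) -> float:
    """Inverse relation: EOT = t_phys * k_SiO2 / k_high."""
    return t_phys_nm * K_SIO2 / k_high


if __name__ == "__main__":
    K_HIGH = 20.0  # assumed; hafnium oxide is often quoted at roughly 20-25
    for node, eot in (("45nm", 1.0), ("32nm", 0.9)):
        t = physical_thickness_nm(eot, K_HIGH)
        print(f"{node}: EOT {eot:.1f} nm -> about {t:.1f} nm of high-k film")
```

With the assumed k of 20, a 0.9nm EOT works out to a film of roughly 4.6nm, which is how a high-k gate can be physically thicker (and so leak less through the gate) while behaving electrically like a thinner SiO2 one. A real gate stack also includes a thin interfacial oxide, so treat these figures as rough.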

At 32nm the transistors are approximately 70% the size of Intel’s 45nm hk + mg transistors, allowing Intel to pack more in a smaller area.
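The "70%" figure is easiest to interpret as a linear shrink, which is how node-to-node scaling is conventionally quoted (the node names themselves give 32/45, about 0.71). Under that assumption, area scales with the square of the factor. The small helper below is a hypothetical illustration of that arithmetic, not a figure from Intel; if 70% were instead an area ratio, the density gain would only be about 1.4x.

```python
# Hypothetical helper: how a linear shrink factor maps to area and density.
# Assumes the ~0.7x figure applies to each linear dimension of the transistor.

def area_ratio(linear_ratio: float) -> float:
    """Area scales with the square of the linear shrink."""
    return linear_ratio ** 2


def density_gain(linear_ratio: float) -> float:
    """Transistors per unit area scale with the inverse of the area ratio."""
    return 1.0 / area_ratio(linear_ratio)


if __name__ == "__main__":
    nominal = 32 / 45  # node-name ratio, about 0.711
    for label, r in (("0.7x linear", 0.7), ("32/45 node ratio", nominal)):
        print(f"{label}: area x{area_ratio(r):.2f}, density x{density_gain(r):.2f}")
```

Read this way, a 0.7x shrink cuts transistor area to about 0.49x, or roughly twice as many transistors in the same space.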

The big change here is that Intel is using immersion lithography on critical metal layers in order to continue to use existing 193nm lithography equipment. The smaller your transistors are, the higher resolution your equipment has to be in order to actually build them. Immersion lithography increases the resolution of existing lithography equipment without requiring new technologies. It is a costlier approach, but one that becomes necessary as you scale below 45nm. Note that AMD made the switch to immersion lithography at 45nm.
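Why immersion helps can be sketched with the Rayleigh resolution criterion, where the smallest printable half-pitch is k1 × wavelength / NA, and the numerical aperture is NA = n × sin(θ). Filling the gap between lens and wafer with water (n of roughly 1.44 at 193nm) raises NA by that factor without changing the light source. The k1 and sin(θ) values below are illustrative assumptions, not Intel's process parameters.

```python
# Sketch of the Rayleigh resolution criterion, half-pitch = k1 * wavelength / NA,
# to show why immersion extends 193nm tools. The k1 and sin(theta) values
# are illustrative assumptions, not Intel's process parameters.

WAVELENGTH_NM = 193.0  # ArF excimer laser wavelength
N_AIR = 1.0
N_WATER = 1.44         # approximate refractive index of water at 193nm


def numerical_aperture(n_medium: float, sin_theta: float) -> float:
    """NA = n * sin(theta); in air NA cannot exceed 1."""
    return n_medium * sin_theta


def min_half_pitch_nm(k1: float, wavelength_nm: float, na: float) -> float:
    """Smallest printable half-pitch under the Rayleigh criterion."""
    return k1 * wavelength_nm / na


if __name__ == "__main__":
    K1 = 0.3          # assumed process factor
    SIN_THETA = 0.93  # assumed lens acceptance angle, same for both cases
    for label, n in (("dry", N_AIR), ("water immersion", N_WATER)):
        na = numerical_aperture(n, SIN_THETA)
        hp = min_half_pitch_nm(K1, WAVELENGTH_NM, na)
        print(f"{label}: NA {na:.2f}, min half-pitch about {hp:.0f} nm")
```

Under these assumptions the printable half-pitch drops from roughly 62nm (dry) to roughly 43nm (immersion) with the same 193nm light, which is the point of the technique.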

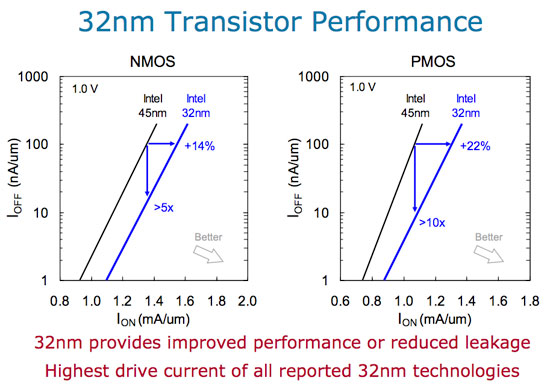

Intel reported significant gains in transistor performance at 32nm; the graphs below help explain:

We’re looking at a comparison of leakage current vs. drive current for both 32nm NMOS and PMOS transistors. The new transistors showcase a huge improvement in power efficiency. You can either run them faster, or run them at the same speed and reduce leakage current by more than 5 to 10x compared to Intel’s 45nm transistors. Intel claims that its 32nm transistors boast the highest drive current of all reported 32nm technologies at this point, although admittedly there aren’t many.

The power/performance characteristics of Intel’s 32nm process make it particularly attractive for mobile applications. But more on that later.

64 Comments


Oyvind - Wednesday, April 15, 2009

7 to 10 posts are about the fact that today's software doesn't use more than 2 cores efficiently. Well, 2 to 3 years ago there was close to none that did. Did Valve, Epic and others build frameworks for using multiple CPUs before the hardware base was in place? The answer is no. Do most big software houses today put a big effort into scaling over more cores? The answer is yes. Should Intel/AMD wait until the software houses catch up? I don't understand it, but the majority posting here seems to answer this with a yes.

My question: when the big software houses are done with their multi-CPU frameworks, don't you believe they will then scale over n CPUs? User input, rendering/GPU stuff, AI at n times today's depth, etc. All real-life architecture is parallel; software is not yet, but hopefully that will change.

----

If life is good and you have insanely too much money, you stop developing, you don't need to prioritize, and you slowly sink back into your pillow. Yes, AMD is fighting uphill, but if they manage to survive, nature has proven that fighting uneven odds will give you a mid- to long-term edge (OK, if you survive). Tons of money don't save anything. I'm not sure they'll survive, but if they don't, a new company with clever engineers will rise somewhere in the future. Yes, we need competition, and there always will be.

mattigreenbay - Friday, March 6, 2009

This is the end of AMD. Unless this turns out like P4 (not likely), AMD will have to release their process first or soon after [or better yet, a 16nm ultra-fast processor, and while I'm still dreaming, make it free] and have it perform better (also not likely). Poor AMD. I was going to buy a Phenom II, but Intel seems the way to go, future-wise. AMD will be liquidated, as will VIA, and Intel will go back to selling way overpriced processors that perform worse than an i386 [Windows 7 certified].

mattigreenbay - Friday, March 6, 2009

But it'll come with a free super-fast Intel GPU. (Bye bye Nvidia too.) :(

arbiter378 - Sunday, November 22, 2009

Intel doesn't make fast GPUs. Even when they tried with that AGP GPU, ATI and Nvidia killed it. They won't let a new player into the graphics market without a fight. Lastly, Intel has been trying to beat AMD for 40-something years, and they're still not even close to beating them. Now that AMD has acquired ATI, they have superior graphics patents.

LeadSled - Friday, February 20, 2009

What is really amazing is the process shrink timetable. It looks like they will meet the timetable for our first quantum dot processors, which are theorized to arrive at the 1.5nm process by the year 2020.

KeepSix - Saturday, February 14, 2009

I guess I can't blame them for changing sockets all the time, but I'm not sure if I'll be switching any time soon. My Q6600 hasn't gone past 50% usage yet, even when extreme multi-tasking (editing HD video, etc.). I'd love to build an i7 right now, but I just can't justify it.

Hrel - Thursday, February 12, 2009

> On the mainstream quad-core side, it may not make sense to try to upgrade to 32nm quad-core until Sandy Bridge at the end of 2010. If you buy Lynnfield this year, chances are that you won’t feel a need to upgrade until late 2010/2011.

So if you buy a quad-core, 8-thread 3.0GHz processor you will "NEED" to upgrade in one year?! What?! It doesn't make sense to upgrade just for the sake of having the latest. Upgrade when your computer can't run the programs you need anymore, or when you have the extra money and you'll see at least a 30 percent increase in performance. You should be good for at least 2 years with Lynnfield, and probably 4 or 5 years.

QChronoD - Thursday, February 12, 2009

He's saying that the people who have no qualms about throwing down a grand on just the processor are going to want to upgrade to 32nm next year. However, for the rest of us that don't shit gold, picking up a Lynnfield later this year will tide us over until 2011 fairly happily.

AnnonymousCoward - Thursday, February 12, 2009

> However for the rest of us that don't shit gold, picking up a Lynnfield later this year will tide us over until 2011 fairly happily.

My C2D @ 3GHz will hold me over to 2011...

MadBoris - Thursday, February 12, 2009

I watch roadmaps from time to time, and I know where AMD has potential. Simplify the damn roadmap, platforms, chipsets, sockets!

Seriously, I need a spreadsheet and calculator to keep it all straight.

Glad Anand gave kind of a summary of where and when it makes sense to upgrade, but I just don't have the patience to filter through it all to the point where I get a working knowledge of it.

One thing AMD has been good at in the past, and hopefully will continue, is keeping upgrades simple. I don't want a new motherboard and new socket with nearly every CPU upgrade. I'm not sure if mobo makers love it or hate it; obviously they get new sales, but it's kind of nuts.

This alone, knowing I have some future proofing on the mobo, makes CPU upgrades appealing and easy and something I would take advantage of.

As far as the GPU/CPU goes, it's nothing I will need for years to come. We will have to wait until it permeates the market before it gets used by devs, just like multicore. It will at least take consoles implementing it before game devs start utilizing it, and even then it's liable to take a lot of steps back in performance (it's only hype now)...