The Intel SSD 670p (2TB) Review: Improving QLC, But Crazy Pricing?!?

by Billy Tallis on March 1, 2021 12:00 PM EST

Advanced Synthetic Tests

Our benchmark suite includes a variety of tests that are less about replicating any real-world IO patterns, and more about exposing the inner workings of a drive with narrowly-focused tests. Many of these tests will show exaggerated differences between drives, and for the most part that should not be taken as a sign that one drive will be drastically faster for real-world usage. These tests are about satisfying curiosity, and are not good measures of overall drive performance. For more details, please see the overview of our 2021 Consumer SSD Benchmark Suite.

Whole-Drive Fill

Panels: Pass 1, Pass 2

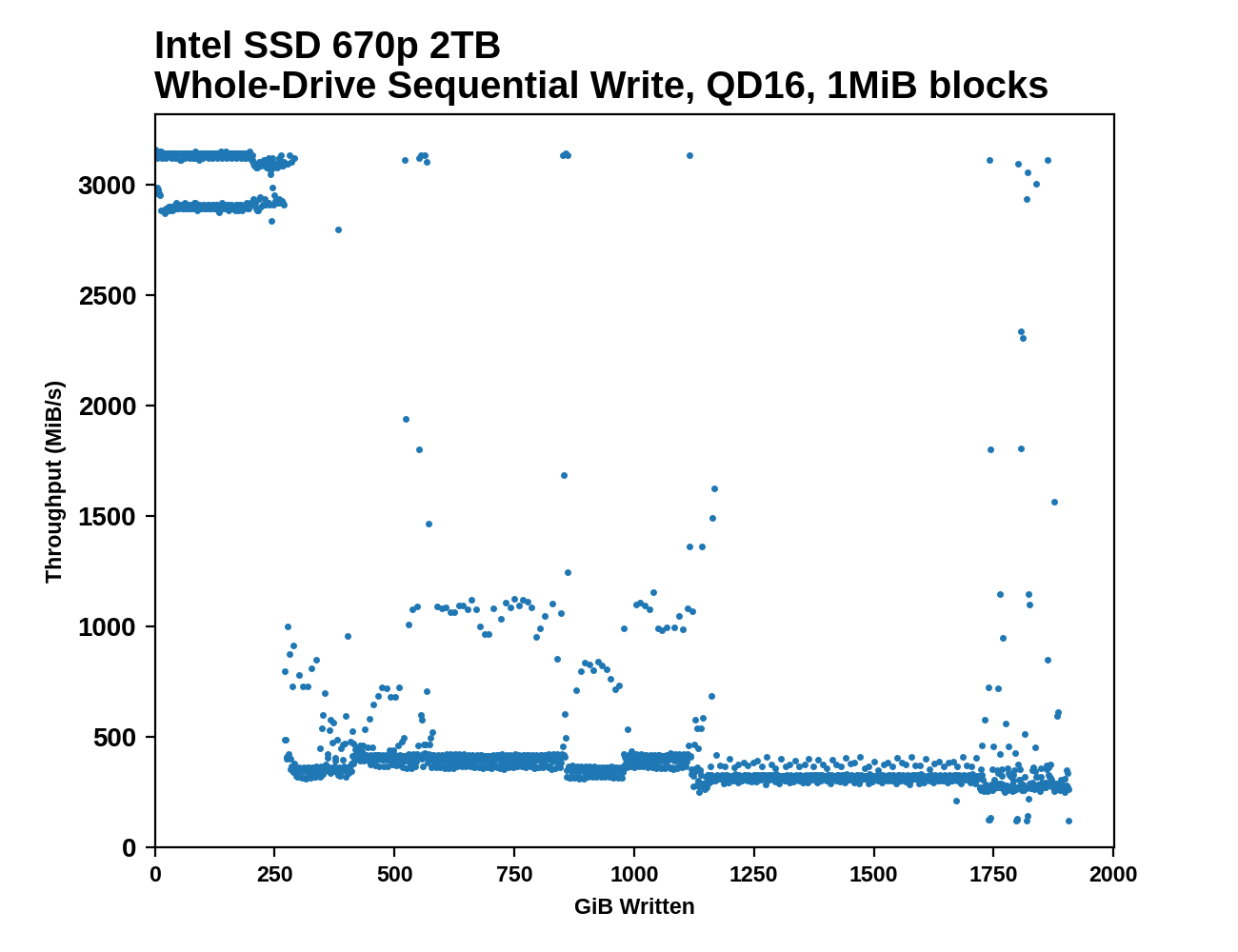

The Intel SSD 670p shows less consistent performance than the 660p during the sequential drive write. Writing to the SLC cache bounces between two performance levels, just above and just below 3 GB/s. The cache runs out more or less on schedule and performance drops down into SATA territory with sporadic outliers that are faster than normal. There's a bit of a stepped downward trend in performance as the drive approaches full. On the second pass that overwrites data on a full drive, the 670p is even more inconsistent with short bursts up to SLC write speed throughout the process.

Panels: Average Throughput for last 16 GB, Overall Average Throughput

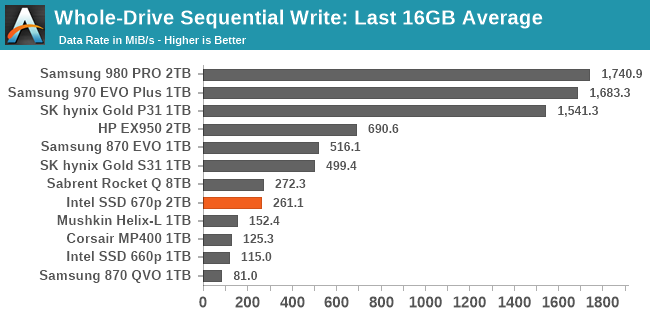

The overall average write speed of the 670p is now almost enough to saturate a SATA interface. At the tail end of the filling process it does dip down to hard drive speeds, but any large sequential transfer of data onto the 670p will definitely complete more quickly than any one hard drive can handle. The controller upgrade helps some here (primarily with the SLC cache write speed), but for the most part the NAND itself is still the bottleneck, which means the smaller capacities of the 670p will not perform as well.
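To see why the overall average lands so far below the SLC cache speed, it helps to treat the fill as a time-weighted average over phases. The sketch below is only an illustration: the function name, the cache size, and both speeds are assumptions chosen for round numbers, not values read from the graphs.

```python
def overall_average_gbps(phases):
    """Time-weighted average write speed over a list of (gigabytes, GB/s) phases."""
    total_gb = sum(gb for gb, _ in phases)
    total_seconds = sum(gb / speed for gb, speed in phases)
    return total_gb / total_seconds


# Hypothetical fill of a 2000 GB drive: both the cache size and the two
# speeds are illustrative assumptions, not figures from this review.
fill_phases = [
    (250, 3.0),   # written into the SLC cache at about 3 GB/s
    (1750, 0.4),  # written after the cache is full, at SATA-like speed
]

average = overall_average_gbps(fill_phases)
print(f"overall average: {average:.2f} GB/s")
```

With these made-up inputs the average works out to roughly 0.45 GB/s: the slow phase accounts for nearly all of the elapsed time, so the post-cache speed dominates the result no matter how fast the cache is.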

Working Set Size

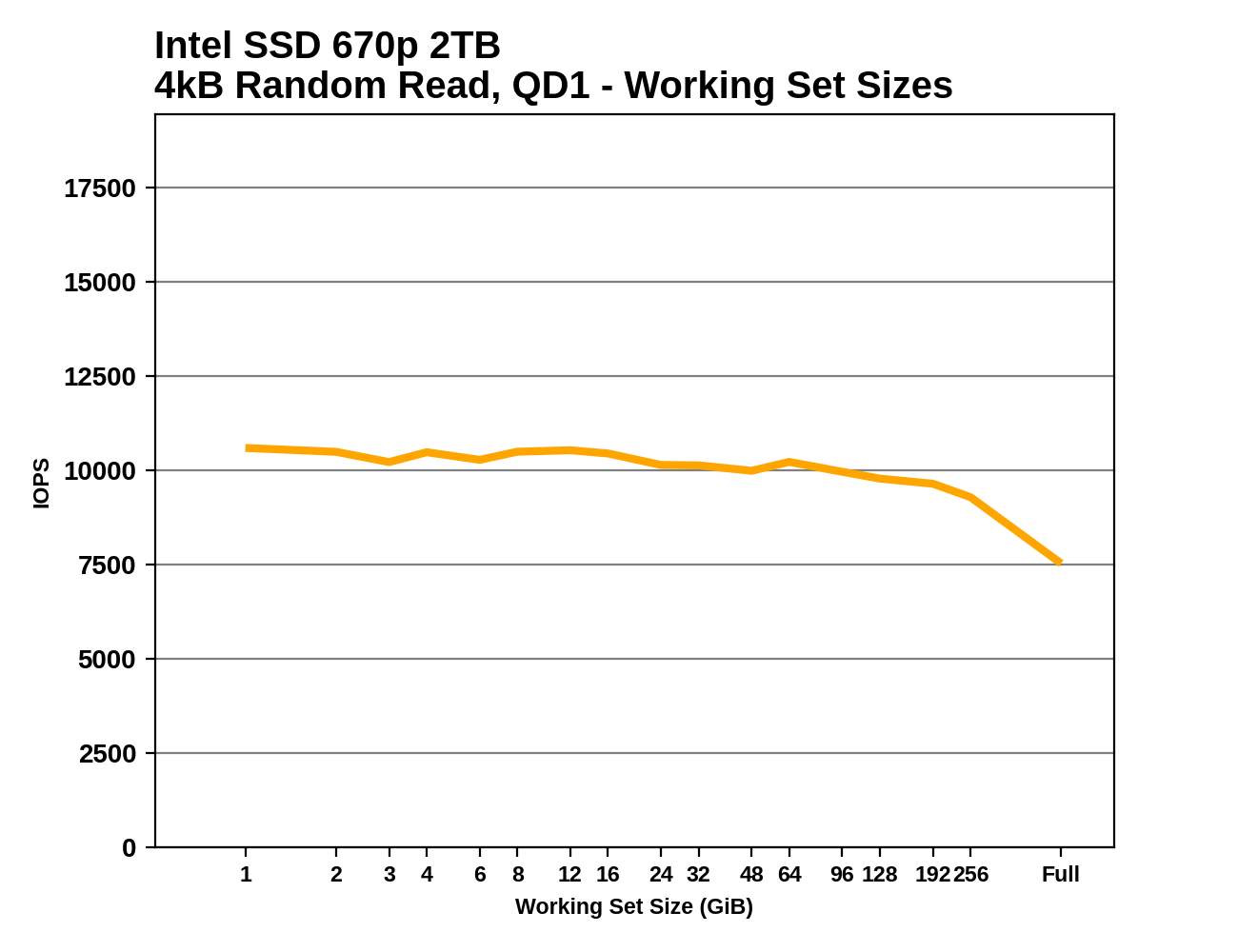

Intel is still clearly using a reduced DRAM design with the 670p rather than the full 1GB per 1TB ratio that mainstream SSDs use. The drop in performance at large working set sizes closely mirrors what we saw with the 660p, albeit with higher performance across the board thanks to the lower latency of the 144L NAND.
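The 1GB per 1TB ratio is commonly explained by a flat logical-to-physical mapping table with one 4-byte entry per 4 KiB of flash. The sketch below works through that arithmetic under exactly those assumptions; the entry size, the mapping granularity, and the 256 MiB example DRAM size are all hypothetical inputs, not confirmed details of the 670p.

```python
KIB = 2**10
MIB = 2**20
GIB = 2**30
TIB = 2**40


def mapping_table_bytes(capacity_bytes, page_bytes=4 * KIB, entry_bytes=4):
    """DRAM needed to hold one mapping entry per logical page (assumed flat table)."""
    return capacity_bytes // page_bytes * entry_bytes


def cacheable_span_bytes(dram_bytes, page_bytes=4 * KIB, entry_bytes=4):
    """Amount of flash whose mapping entries fit in the given DRAM."""
    return dram_bytes // entry_bytes * page_bytes


# A full table for 1 TiB of flash needs 1 GiB of DRAM under these assumptions.
print(mapping_table_bytes(1 * TIB) // MIB, "MiB of DRAM for 1 TiB")

# A hypothetical 256 MiB of DRAM can only hold the mappings for 256 GiB.
print(cacheable_span_bytes(256 * MIB) // GIB, "GiB covered by 256 MiB")
```

Under this model, a drive with reduced DRAM serves random reads at full speed only while the working set's mappings fit in DRAM, which is consistent with a drop in performance at large working set sizes.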

Performance vs Block Size

Panels: Random Read, Random Write, Sequential Read, Sequential Write

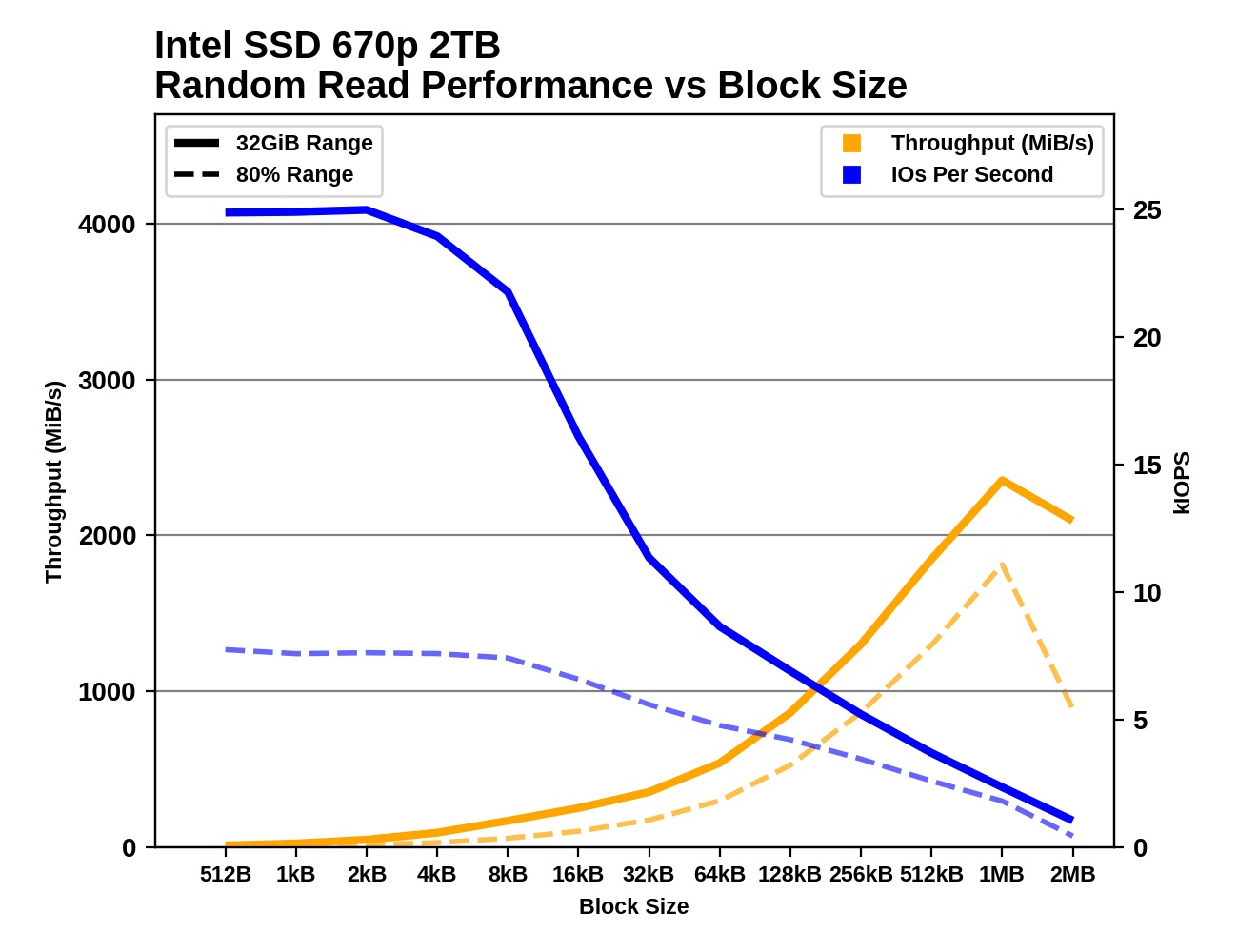

With the 670p, Intel has eliminated the IOPS penalty that random reads smaller than 4kB suffer on the 660p, but that effect is still present for random writes. The IOPS difference between the short-range tests that hit the SLC cache and the 80% full drive tests is bigger for the 670p than the 660p; the newer drive has generally improved performance, but is in some ways even more reliant on the SLC cache.

Sequential throughput on the 670p keeps increasing with larger block sizes, long past the point where the 660p saturated its controller's limits. The performance trends for both sequential reads and writes are well-behaved with little disparity between the short-range tests and the 80% full drive tests, and no indication of the SLC cache running out during the sequential write tests.
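When comparing the IOPS and throughput views of these tests, the two are related by block size: throughput is IOPS multiplied by bytes per operation. The helper names and the sample figures below are assumptions for illustration, not measurements from this review.

```python
def throughput_mb_per_s(iops, block_size_bytes):
    """Throughput in MB/s (decimal megabytes) for a given IOPS and block size."""
    return iops * block_size_bytes / 1e6


def iops_for_throughput(mb_per_s, block_size_bytes):
    """IOPS required to sustain a given throughput at a given block size."""
    return mb_per_s * 1e6 / block_size_bytes


# Hypothetical example: 100,000 IOPS at 4 kB blocks.
print(throughput_mb_per_s(100_000, 4096), "MB/s")

# Matching that throughput with 512-byte blocks takes eight times the IOPS.
print(iops_for_throughput(409.6, 512), "IOPS")
```

This is why a drive can keep gaining sequential throughput at larger block sizes even when its IOPS are falling: each operation moves more data, until the controller or the interface becomes the limit.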

72 Comments

Wereweeb - Tuesday, March 2, 2021 - link

What are you smoking? Four bits per cell is indeed 33% more bits per cell than three bits per cell.

Bp_968 - Tuesday, March 2, 2021 - link

He's smoking "math". Lol. 3 bits per cell is 8 voltage states, and 4 bits per cell is 16 voltage states, which is double. If you're going to comment with authority on an advanced subject you should learn the basics, and binary math is one of the basics.

bug77 - Tuesday, March 2, 2021 - link

Yeah, it doesn't work like that. 3 bits is 3 bits. They hold 8 possible combinations, but they're independent of each other.

4 bits is 33% more than 3.

Billy Tallis - Tuesday, March 2, 2021 - link

3 bits per cell is 8 POSSIBLE voltage states, but any given cell can only exist in one of those states at a time. The possible voltage states are not the cell's data storage capacity. The number of bits per cell is the cell's data storage capacity.

FunBunny2 - Tuesday, March 2, 2021 - link

"3 bits per cell is 8 POSSIBLE voltage states, but any given cell can only exist in one of those states at a time."

Which incites a lower brain stem question: do NAND and/or controllers implement storage with a coding along the lines of RLL or S/PDIF (eliminating long 'strings' of either 1 or 0) in order to lower the actual voltages required? If only across logically concatenated cells, so 1,000,000 would store as 1,00X where X is interpreted as 4 0? I can't think of a way off the top of my head, but there must be some really smart engineer out there who has?

code65536 - Tuesday, March 2, 2021 - link

Um, voltage states are not storage--it's instead a measure of the difficulty of storing that many bits. QLC is 4 bits per cell, and needs to be able to discern 16 voltage states to store those 4 bits. TLC needs to discern only 8 states in order to store 3 bits. What that means is that QLC stores 33% more data at the expense of 100% more difficulty. Each bit added doubles the difficulty of working with that data. SLC->MLC was 100% more difficulty for 100% more storage. MLC->TLC was 100% more difficulty for 50% more storage. TLC->QLC was 100% more difficulty for 33% more storage. And QLC->PLC will be 100% more difficulty for only 25% more storage.

Spunjji - Thursday, March 4, 2021 - link

I think at this stage it's also worse than double the difficulty - the performance and endurance penalties are very, very high.

HarryVoyager - Monday, March 8, 2021 - link

The 660p initially showed a significant price advantage; I was able to get a 2TB at $180, but it has since disappeared.

That said, in day-to-day practical use, I haven't seen much difference between an 860 Pro, the 660p and an XPG Gamix X7.

All of them have been considerably faster than my hard drives, and pretty much all of them can feed data faster than my CPUs or network can process it.

I know at some point that will change, and we will see games and consumer software designed to take advantage of the sort of data rates that NVMe SSDs can provide, but I'll likely get a dedicated NVMe drive for that, when that day comes.

RSAUser - Tuesday, March 2, 2021 - link

No one who looked at the actual daily write warranty said that.

I've never had a TLC drive fail as a host OS drive; only the two QLC drives I bought failed, each after two years.

So my motto is TLC for host, QLC for mass storage.

yetanotherhuman - Tuesday, March 2, 2021 - link

Nope, in my mind TLC is still a cheap toy, fit for a less important machine or maybe a games drive. MLC, 2-bit per cell, is still the right way to go, and QLC is so shit that it should be given away in cereal boxes.