Intel Rocket Lake (14nm) Review: Core i9-11900K, Core i7-11700K, and Core i5-11600K

by Dr. Ian Cutress on March 30, 2021 10:03 AM EST

Posted in: CPUs, Intel, LGA1200, 11th Gen, Rocket Lake, Z590, B560, Core i9-11900K

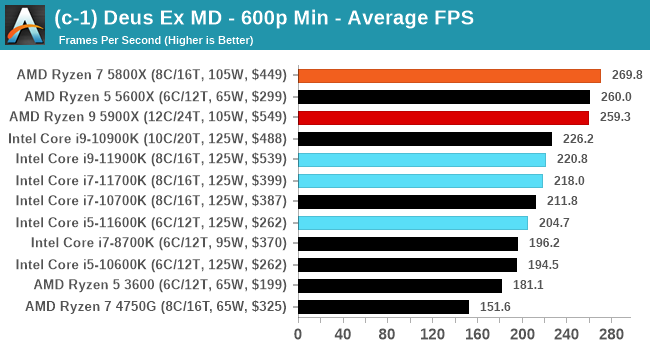

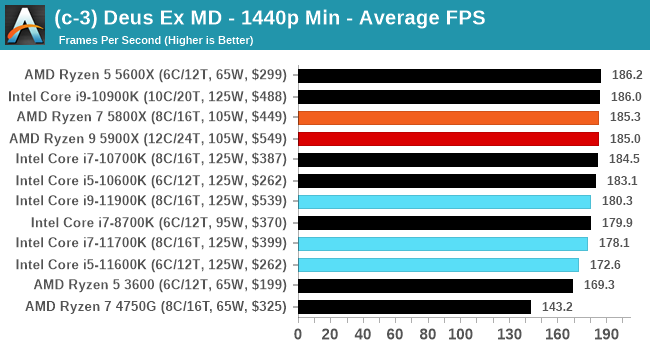

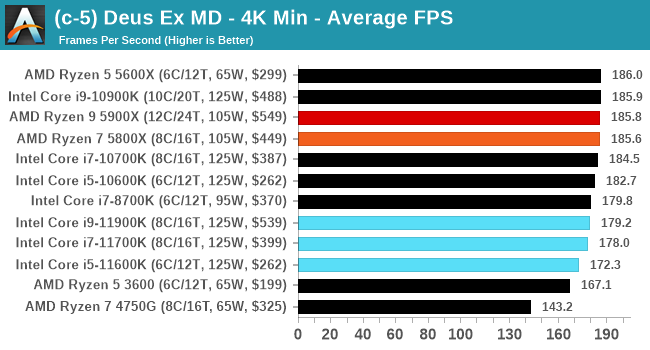

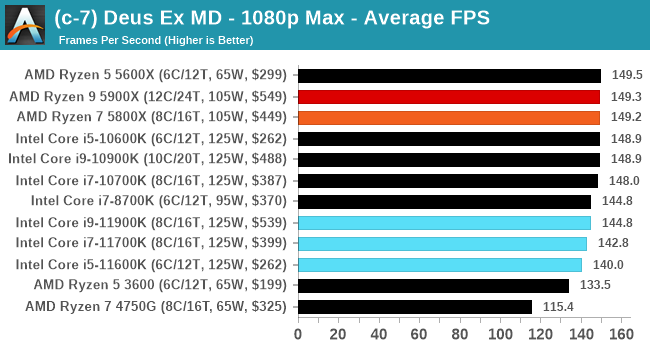

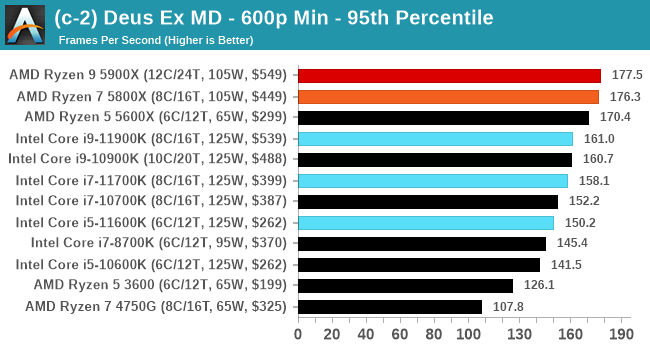

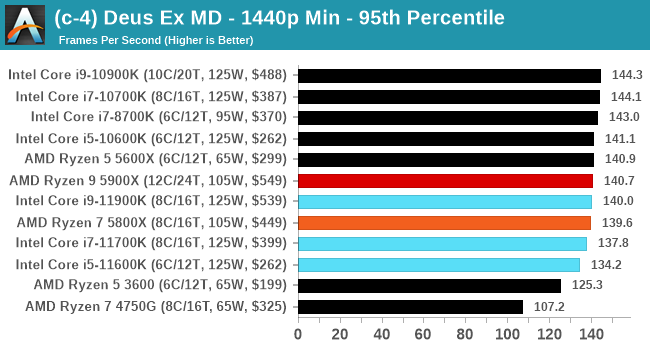

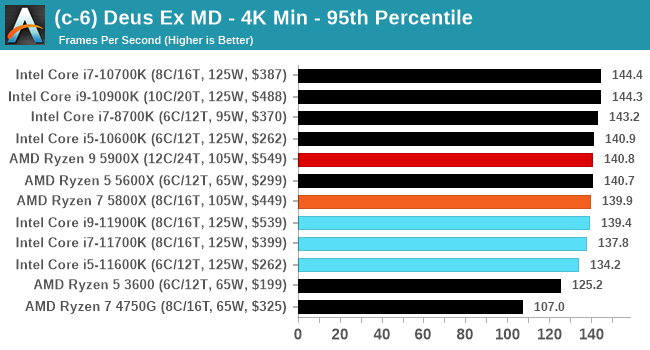

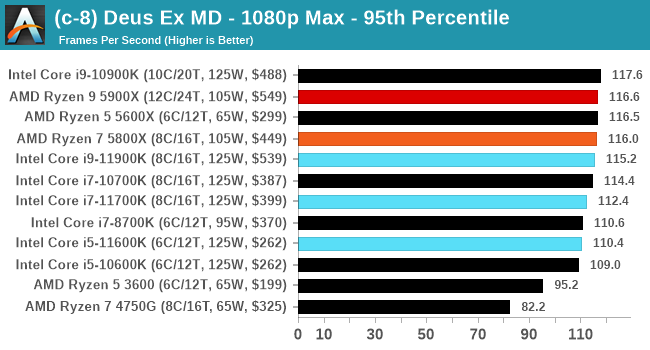

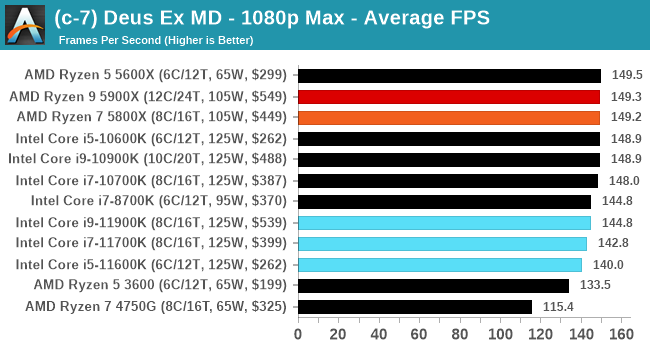

Gaming Tests: Deus Ex Mankind Divided

Deus Ex is a franchise with a wide level of popularity. Although Deus Ex: Mankind Divided (DEMD) was released in 2016, it has often been heralded as a game that taxes the CPU. It uses the Dawn Engine to create a very complex first-person action game with science-fiction based weapons and interfaces. The game combines first-person, stealth, and role-playing elements. It is set in Prague and deals with themes of transhumanism, conspiracy theories, and a cyberpunk future. The game allows the player to select their own path (stealth, gun-toting maniac) and offers multiple solutions to its puzzles.

DEMD has an in-game benchmark, an on-rails look around an environment showcasing some of the game’s most stunning effects, such as lighting and texturing. Even in 2020, it’s still an impressive graphical showcase when everything is cranked up to the max. For this title, we are testing the following resolutions:

- 600p Low, 1440p Low, 4K Low, 1080p Max

The benchmark runs for about 90 seconds. We do as many runs as possible within 10 minutes per resolution/setting combination, and then take averages and percentiles.
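As a rough illustration of the averaging step described above, here is a minimal Python sketch. It is not the review's actual tooling: the function names, the use of per-frame render times in milliseconds, the pooling of all runs before summarizing, and the nearest-rank percentile method are all assumptions made for the example. The run budget helper also ignores loading time between runs.

```python
def runs_that_fit(run_seconds=90, budget_seconds=600):
    """Upper bound on whole runs in the time budget (assumes no load time)."""
    return budget_seconds // run_seconds


def summarize_runs(runs_ms):
    """Summarize several benchmark runs.

    runs_ms: list of runs, each a list of per-frame render times in ms
    (a hypothetical input format). Returns (average FPS, 95th percentile FPS).
    """
    # Pool the frames from every run and sort from fastest to slowest.
    frame_times = sorted(t for run in runs_ms for t in run)
    if not frame_times:
        raise ValueError("no frame times recorded")

    # Average FPS: total frames divided by total elapsed seconds.
    avg_fps = len(frame_times) / (sum(frame_times) / 1000.0)

    # 95th percentile frame time by nearest rank, i.e. ceil(0.95 * n),
    # computed with integers to avoid floating-point rounding surprises.
    n = len(frame_times)
    rank = (95 * n + 99) // 100
    p95_ms = frame_times[rank - 1]

    # Report the percentile as a frame rate: 95% of frames were at least this fast.
    return avg_fps, 1000.0 / p95_ms
```

Under these assumptions, a 90-second run fits at most six times into a 10-minute window, and the 95th percentile figure reflects the slower frames rather than the typical ones, which is why it sits below the average.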

| AnandTech | Low Resolution Low Quality | Medium Resolution Low Quality | High Resolution Low Quality | Medium Resolution Max Quality |
|---|---|---|---|---|
| Average FPS |  |  |  |  |
| 95th Percentile |  |  |  |  |

All of our benchmark results can also be found in our benchmark engine, Bench.

279 Comments

blppt - Tuesday, March 30, 2021 - link

I disagree. I had a 9590 (which shipped WITH a small AIO cooler!) and the thing was shaky at best for stability, easily topping 90C at stock settings.

Not the mobo's fault either; I had the top-end ASUS CHVF-Z 990FX, which was such a mature chipset it practically had grey hairs.

TheinsanegamerN - Wednesday, March 31, 2021 - link

The 9000 series all had stability issues. Backing off 1 clock bin or tinkering with voltage would usually fix them.

Bulldozer didn't have the thermal density issues modern CPUs have. If you had the cooling, it would work. Bulldozer's issue was that the sheer amount of heat being generated would overwhelm many CPU coolers of the time, which were built around the more traditional ~100 W power draw of Intel i7s and the ~125-140 W of Phenoms. The 200+ W that Bulldozer was pulling was new territory.

Oxford Guy - Wednesday, March 31, 2021 - link

Certain motherboard makers played loose with the VRMs. ASRock in particular was known for its 9000-series-certified boards frying. MSI was also bad. Only a few boards were suited to the 9000 series, and any enthusiast would have skipped the 9000 series in favor of one of the lower-leakage chips, which could be overclocked to the same 4.7 GHz. 5 GHz with Piledriver was not stable, requiring too much voltage. ASUS tried to hide that by under-reporting the voltage used in its flagship board. 4.4 GHz was optimal, 4.5 was okay, and 4.7 was as far as one wanted to go for frequent use. That's with the lower-leakage 'E' parts.

"The Stilt" said AMD would have sent the 9000 series to the crusher had it not come up with an after-the-fact lower standard for leakage. So, Hruska gets his take spectacularly wrong in his Rocket Lake article. The 9000 series was not aimed at 'the enthusiast faithful'. Those people knew better than to buy a 9000 series chip, even though there were a few astroturfers trying to get people to buy them, like one guy who claimed his was running at 5.1 GHz 24/7.

It was aimed at people who could be tricked by the 5 GHz number. It was the most cynical cash grab possible. Not only did AMD offer only 4 FPU cores (important for gaming), it offered a CPU that was priced into the stratosphere while having un-fixable single-core performance.

Piledriver's fatal flaw was its abysmal single-thread performance, not its power consumption. It could have been okay enough with the lower-leakage standard (and a more strict socket standard as Zen 1 had). But, reportedly, the 32nm SOI wasn't very good for some time (Bulldozer and the first generation of Piledriver), so AMD let the AM3+ spec be pretty loose (although not as loose as FM).

Overclocking Piledriver even to 5 GHz wasn't enough to give it decent single-thread performance.

I do have to agree that the 9590 was the single worst consumer CPU product ever released. It even edges out the Pentium III that wasn't stable — since that one was actually pulled from the market. Not only was the 9590 100% cynical exploitation of consumer ignorance, it was really bad technologically. Figures that Hruska would praise it.

(If, though, one lived in Iceland with a solar array backed by an iron-nickel battery complex, the 9590 would have been okay for playing Deserts of Kharak, provided one didn't buy it at its original price.)

blppt - Thursday, April 1, 2021 - link

"Those people knew better than to buy a 9000 series chip, even though there were a few astroturfers trying to get people to buy them, like one guy who claimed his was running at 5.1 GHz 24/7."

What is especially sad here is that even IF he managed to pump the 250-300W into that 9590 to run at 5.1 (all cores), it was probably still slower than a 4790K at stock speeds.

Oxford Guy - Saturday, April 3, 2021 - link

In single core, certainly. However, 2011 is stamped onto the spreaders of Piledriver and it hit the market in 2012. The 4790K hit the market in Q2 2014.

In 2014, the only FX to consider was the 8320E. Not only was it cheap (at least at MicroCenter), it could run in any AM3+ board without killing it, and could be overclocked better than a 9000 series with anything below nitrogen, due to its much superior leakage.

The 8320E was the only FX worth anyone’s time. Paired with a UD3P board it could do 4.4 GHz readily and could manage 4.7 with a fast fan angled at the VRM sink. Total cost was very low for the CPU and board from MicroCenter, which is why I recommended that setup to the tightest budget people. But, the bad single core was a problem for frametime consistency.

AMD should have been publicly tarred and feathered by the tech press for the 9590. All the light mockery wasn’t enough.

Spunjji - Friday, April 9, 2021 - link

Broadly agreed, but I'd note that the 6300 was also reasonable if you were on a painfully low budget. I suggested it to a friend (his alternative was a Sandy Bridge i3) and it lasted him until a year back as his main gaming system. It's now moved on to another friend, who still uses it for games. Those chips have aged surprisingly well, all things considered, though it is probably holding his RX 470 back a little bit.

Oxford Guy - Wednesday, March 31, 2021 - link

• The 9590 posted the highest results in the game Deserts of Kharak, in a dual 980 Ti setup at only 1080 or 1440. And, SLI setups showed competitive 4K scores for many games back then.

• The overclocker 'The Stilt' said the 9000 series is not the chip to judge the design by because it has the worst leakage characteristics and would have been sent to the crusher had AMD not decided to create a lower standard after the fact. Instead, the chips that should be used to represent Piledriver are the 'E' series. They have the lowest leakage and can manage the same 4.7 GHz the 9590 uses with much more reasonable (although still non-competitive) demands. The 9000 series was really AMD's gift to Intel, by making the bad ancient Piledriver design look much worse.

• AMD was a small cash-strapped company, thanks to Intel's monopoly abuses. When AMD was leading the x86 industry Intel kept it from getting the profit. So, Piledriver, although very bad in a number of ways, will never be as bad as Rocket Lake. The 9000 series is the only exception, though, since it was a purely cynical cash grab by AMD, using '5 GHz' to sucker people.

blppt - Thursday, April 1, 2021 - link

"The 9590 posted the highest results in the game Deserts of Kharak, in a dual 980 Ti setup at only 1080 or 1440. And, SLI setups showed competitive 4K scores for many games back then."

As I stated, in the (exceedingly rare) case where a game or app can saturate all 8 cores, when the 9590 was in its prime, it could be competitive.

That almost never happened, especially in games. About the only 2 I can think of offhand that could do that in the 9590's prime were GTA5 and Company of Heroes 2. And even then, you were using 150+ more watts to get the same or slightly better performance than Intel's high-end quad cores, along with the required AIO water cooling and the required high-end mobo with a beastly VRM setup. As far as I know, only 3 pricey mobos were approved for the 9590: my CHVF-Z, one Gigabyte board, and an ASRock.

9590 was one of the worst cpus ever. Probably the single worst (special edition) cpu. I had one for years.

This Rocket Lake, while disappointing, hot, and power consuming, is consistently competitive in every game versus its direct competitors. The 9590 cannot come close to saying that.

Oxford Guy - Saturday, April 3, 2021 - link

I cite Deserts of Kharak because it’s the only game I’ve seen put the FX ahead of Intel at below 4K.

Not only would the game need to be able to leverage 8 integer cores without needing more than 4 FPU cores, it would have to be able to saturate a narrow deep pipeline and not rely heavily on single thread IPC. It should also scale with clock and not need the best RAM and L3 performance. RTS is probably the best genre for the Piledriver design.

Gondalf - Tuesday, March 30, 2021 - link

The AMD FX-9590 did not have AVX-512. Very high performance has a cost.

Try to imagine Zen 3 with AVX-512: it would not be a champion in low power consumption at all.

If you do not like high power draw, simply disable AVX-512.