The 2020 Mac Mini Unleashed: Putting Apple Silicon M1 To The Test

by Andrei Frumusanu on November 17, 2020 9:00 AM EST

Section by Ryan Smith

M1 GPU Performance: Integrated King, Discrete Rival

While the bulk of the focus from the switch to Apple’s chips is on the CPU cores, and for good reason – changing the underlying CPU architecture of your computers is no trivial matter – the GPU aspects of the M1 are not to be ignored. As with their CPU cores, Apple has been developing their own GPU technology for a number of years now, and with the shift to Apple Silicon, those GPU designs are coming to the Mac for the very first time. And from a performance standpoint, it’s arguably an even bigger deal than Apple’s CPU.

Apple, of course, has long held a reputation for demanding better GPU performance than the average PC OEM. Whereas many of Intel’s partners were happy to ship systems with Intel UHD graphics and other baseline solutions even in some of their 15-inch laptops, Apple opted to ship a discrete GPU in their 15-inch MacBook Pro. And when they couldn’t fit a dGPU in the 13-inch model, they instead used Intel’s premium Iris GPU configurations with larger GPUs and an on-chip eDRAM cache, becoming one of the only regular customers for those more powerful chips.

So it’s been clear for some time now that Apple has wanted better GPU performance than what Intel offers by default. By switching to their own silicon, Apple finally gets to put their money where their mouth is, so to speak, by building a laptop SoC with all the GPU performance they’ve ever wanted.

Meanwhile, unlike the CPU side of this transition to Apple Silicon, the higher-level nature of graphics programming means that Apple isn’t nearly as reliant on devs to immediately prepare universal applications to take advantage of Apple’s GPU. To be sure, native CPU code is still going to produce better results since a workload that’s purely GPU-limited is almost unheard of, but the fact that existing Metal (and even OpenGL) code can be run on top of Apple’s GPU today means that it immediately benefits all games and other GPU-bound workloads.

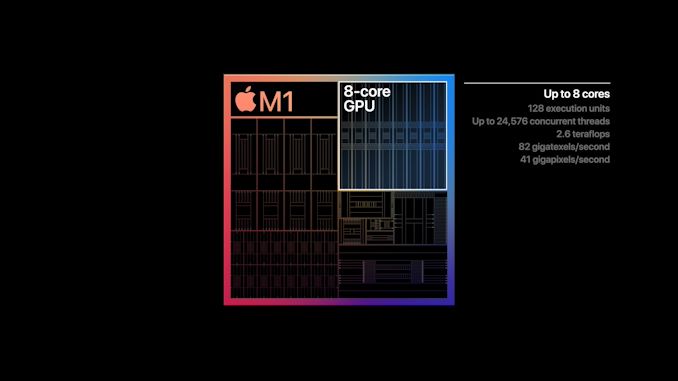

As for the M1 SoC’s GPU, unsurprisingly it looks a lot like the GPU from the A14. Apple will have needed to tweak their design a bit to account for Mac sensibilities (e.g. various GPU texture and surface formats), but by and large the difference is abstracted away at the API level. Overall, with M1 being A14-but-bigger, Apple has scaled up their 4 core GPU design from that SoC to 8 cores for the M1. Unfortunately we have even less insight into GPU clockspeeds than we do CPU clockspeeds, so it’s not clear if Apple has increased those at all; but I would be a bit surprised if the GPU clocks haven’t at least gone up a small amount. Overall, A14’s 4 core GPU design was already quite potent by smartphone standards, so an 8 core design is even more so. M1’s integrated GPU isn’t just designed to outpace AMD and Intel’s integrated GPUs, but it’s designed to chase after discrete GPUs as well.

| An Educated Guess At Apple GPU Specifications | M1 |
|---|---|
| ALUs | 1024 (128 EUs/8 Cores) |
| Texture Units | 64 |
| ROPs | 32 |
| Peak Clock | 1278MHz |
| Throughput (FP32) | 2.6 TFLOPS |
| Memory Clock | LPDDR4X-4266 |
| Memory Bus Width | 128-bit (IMC) |
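As a rough sanity check on the estimated figures above, the FP32 throughput can be reproduced from the ALU count and peak clock. This is only a sketch: it assumes each ALU retires one fused multiply-add (counted as 2 FLOPs) per clock, which is the usual convention for quoting peak throughput but is not something the table states. The memory bandwidth figure is likewise derived here from the listed memory speed and bus width rather than taken from the article, and the function names are purely illustrative.

```python
# Back-of-the-envelope check of the estimated M1 GPU figures.
# Inputs come from the specification table; everything derived is an estimate.

ALUS = 1024                    # estimated ALU count (128 EUs across 8 cores)
PEAK_CLOCK_HZ = 1278e6         # estimated peak GPU clock, 1278MHz
FLOPS_PER_ALU_PER_CLOCK = 2    # assumption: one fused multiply-add = 2 FLOPs

MEMORY_TRANSFERS_PER_S = 4266e6  # LPDDR4X-4266 = 4266 mega-transfers per second
BUS_WIDTH_BITS = 128             # 128-bit memory bus


def peak_fp32_tflops(alus, clock_hz, flops_per_clock=FLOPS_PER_ALU_PER_CLOCK):
    """Theoretical peak FP32 throughput in TFLOPS."""
    return alus * flops_per_clock * clock_hz / 1e12


def peak_bandwidth_gbs(transfers_per_s, bus_width_bits):
    """Theoretical peak memory bandwidth in GB/s (decimal gigabytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return transfers_per_s * bytes_per_transfer / 1e9


if __name__ == "__main__":
    tflops = peak_fp32_tflops(ALUS, PEAK_CLOCK_HZ)
    bandwidth = peak_bandwidth_gbs(MEMORY_TRANSFERS_PER_S, BUS_WIDTH_BITS)
    print(f"Peak FP32 throughput: {tflops:.2f} TFLOPS")
    print(f"Peak memory bandwidth: {bandwidth:.1f} GB/s")
```

Under those assumptions the throughput works out to roughly 2.6 TFLOPS, matching the table, and the memory subsystem tops out at around 68 GB/s, shared between the CPU and GPU.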

Finally, it should be noted that Apple is shipping two different GPU configurations for the M1. The Mac Mini and MacBook Pro get chips with all 8 GPU cores enabled. Meanwhile for the MacBook Air, it depends on the SKU: the entry-level model gets a 7-core configuration, while the higher-tier model gets 8 cores. This means the entry-level Air gets the weakest GPU on paper – trailing a full M1 by around 12% – but it will be interesting to see how the shut-off core influences thermal throttling on that passively-cooled laptop.
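The "around 12%" figure follows directly from the core counts. A minimal sketch, assuming both configurations run at the same clocks and that performance scales linearly with the number of enabled cores (neither of which is confirmed, and real workloads rarely scale perfectly):

```python
# On-paper GPU deficit of the 7-core M1 relative to the full 8-core part.
# Assumption: identical clocks and perfectly linear scaling with core count.

FULL_CORES = 8
CUT_DOWN_CORES = 7


def on_paper_deficit(enabled_cores, full_cores):
    """Fraction of theoretical throughput lost by disabling cores."""
    return 1 - enabled_cores / full_cores


if __name__ == "__main__":
    deficit = on_paper_deficit(CUT_DOWN_CORES, FULL_CORES)
    print(f"7-core M1 trails the 8-core M1 by {deficit:.1%} on paper")
```

That gives 12.5% on paper; any difference in sustained clocks on the passively cooled Air would shift the real-world gap in either direction.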

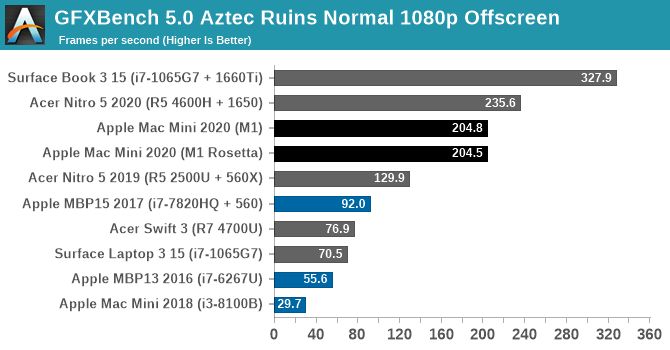

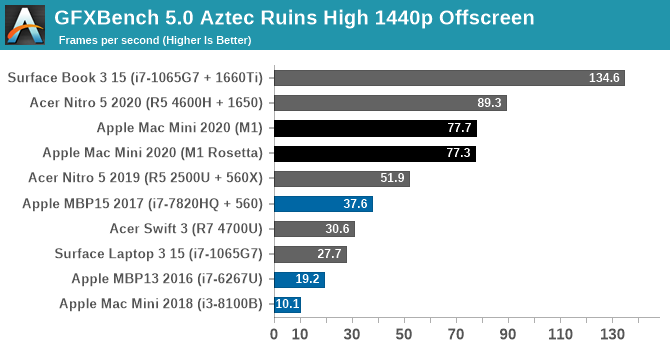

Kicking off our look at GPU performance, let’s start with GFXBench 5.0. This is one of our regular benchmarks for laptop reviews as well, so it gives us a good opportunity to compare the M1-based Mac Mini to a variety of other CPU/GPU combinations inside and outside the Mac ecosystem. Overall this isn’t an entirely fair test since the Mac Mini is a small desktop rather than a laptop, but as M1 is a laptop-focused chip, this at least gives us an idea of how M1 performs when it gets to put its best foot forward.

Overall, the M1’s GPU starts off very strong here. At both Normal and High settings it’s well ahead of any other integrated GPU, and even a discrete Radeon RX 560X. Only once we get to NVIDIA’s GTX 1650 and better does the M1 finally run out of gas.

The difference compared to the 2018 Intel Mac Mini is especially night-and-day. The Intel UHD graphics (Gen 9.5) GPU in that system is vastly outclassed to the point of near-absurdity, with the M1 delivering a performance gain of over 6x. And even other configurations such as the 13-inch MBP with Iris graphics, or a PC with a Ryzen 4700U (Vega 7 graphics) are all handily surpassed. In short, the M1 in the new Mac Mini is delivering discrete GPU-levels of performance.

As an aside, I also took the liberty of running the x86 version of the benchmark through Rosetta, in order to take a look at the performance penalty. In GFXBench Aztec Ruins, at least, there is none. GPU performance is all but identical with both the native binary and with binary translation.

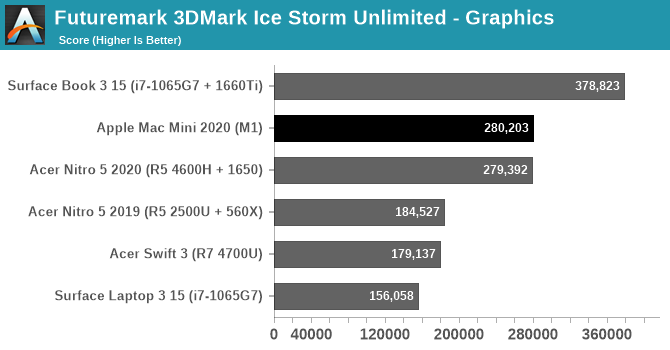

Taking one last quick look at the wider field with an utterly silly synthetic benchmark, we have 3DMark Ice Storm Unlimited. Thanks to the ability for Apple Silicon Macs to run iPhone/iPad applications, we’re able to run this benchmark on a Mac for the first time by running the iOS version. This is a very old benchmark, built for the OpenGL ES 2.0 era, but it’s interesting that it fares even better than GFXBench. The Mac Mini performs just well enough to slide past a GTX 1650 equipped laptop here, and while this won’t be a regular occurrence, it goes to show just how potent M1 can be.

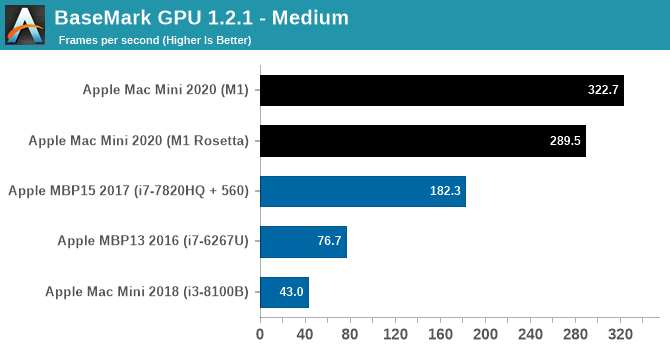

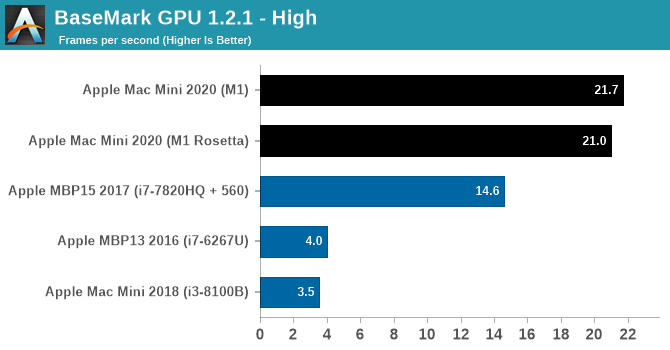

Another GPU benchmark that’s been updated for the launch of Apple’s new Macs is BaseMark GPU. This isn’t a regular benchmark for us, so we don’t have scores for other, non-Mac laptops on hand, but it gives us another look at how M1 compares to other Mac GPU offerings. The 2020 Mac Mini still leaves the 2018 Intel-based Mac Mini in the dust, and for that matter it’s at least 50% faster than the 2017 MacBook Pro with a Radeon Pro 560 as well. Newer MacBook Pros will do better, of course, but keep in mind that this is an integrated GPU with the entire chip drawing less power than just a MacBook Pro’s CPU, never mind the discrete GPU.

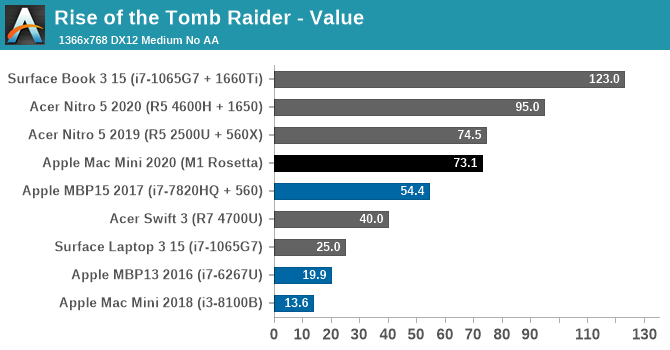

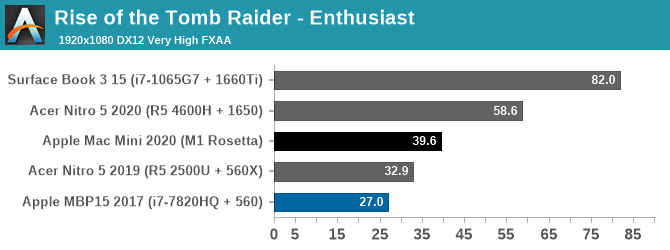

Finally, putting theory to practice, we have Rise of the Tomb Raider. Released in 2016, this game has a proper Mac port and a built-in benchmark, allowing us to look at the M1 in a gaming scenario and compare it to some other Windows laptops. This game is admittedly slightly older, but its performance requirements are a good match for the kind of performance the M1 is designed to offer. It should also be noted that this is an x86 game – it hasn’t been ported over to Arm – so the CPU side of the game is running through Rosetta.

At our 768p Value settings, the Mac Mini is delivering well over 60fps here. Once again it’s vastly ahead of the 2018 Intel-based Mac Mini, as well as every other integrated GPU in this stack. Even the 15-inch MBP and its Radeon Pro 560 are still trailing the Mac Mini by over 25%, and it takes a Ryzen laptop with a Radeon 560X to finally pull even with the Mac Mini.

Meanwhile cranking things up to 1080p with Enthusiast settings finds that the M1-based Mac Mini is still delivering just shy of 40fps, and it’s now over 20% ahead of the aforementioned Ryzen + 560X system. This does leave the Mini well behind the GTX 1650 here – with Rosetta and general API inefficiencies likely playing a part – but it goes to show what it takes to beat Apple’s integrated GPU. At 39.6fps, the Mac Mini is quite playable at 1080p with good image quality settings, and it would be fairly easy to knock down either the resolution or image quality a bit to get that back above 60fps. All on an integrated GPU.

Update 11-17, 7pm: Since the publication of this article, we've been able to get access to the necessary tools to measure the power consumption of Apple's SoC at the package and core level. So I've gone back and captured power data for GFXBench Aztec Ruins at High, and Rise of the Tomb Raider at Enthusiast settings.

| Power Consumption - Mac Mini 2020 (M1) | Rise of the Tomb Raider (Enthusiast) | GFXBench Aztec (High) |
|---|---|---|
| Package Power | 16.5 Watts | 11.5 Watts |
| GPU Power | 7 Watts | 10 Watts |
| CPU Power | 7.5 Watts | 0.16 Watts |
| DRAM Power | 1.5 Watts | 0.75 Watts |

The two workloads are significantly different in what they're doing under the hood. Aztec is a synthetic test that's run offscreen in order to be as pure of a GPU test as possible. As a result it records the highest GPU power consumption – 10 Watts – but it also leaves the CPU cores virtually untouched (and for that matter other elements like the display controller). Meanwhile Rise of the Tomb Raider is a workload from an actual game, and we can see that it's giving the entire SoC a workout. GPU power consumption hovers around 7 Watts, and while CPU power consumption is much more variable, it too tops out just a bit higher.

But regardless of the benchmark used, the end result is the same: the M1 SoC is delivering all of this performance at ultrabook-levels of power consumption. Delivering low-end discrete GPU performance in 10 Watts (or less) is a significant part of why M1 is so potent: it means Apple is able to give their small devices far more GPU horsepower than they (or PC OEMs) otherwise could.
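To put the power figures in perspective, the short sketch below works out how much of the package power the GPU accounts for in each workload, and how much is left over after the GPU, CPU, and DRAM rails are summed. The numbers are copied from the power table; the frames-per-Watt line additionally assumes that the 39.6fps Rise of the Tomb Raider result and the power capture come from the same 1080p Enthusiast run, which the article does not state explicitly.

```python
# Breakdown of the measured M1 package power for the two captured workloads.
# All power values (Watts) are taken from the power consumption table.

POWER_W = {
    "Rise of the Tomb Raider (Enthusiast)": {
        "package": 16.5, "gpu": 7.0, "cpu": 7.5, "dram": 1.5,
    },
    "GFXBench Aztec (High)": {
        "package": 11.5, "gpu": 10.0, "cpu": 0.16, "dram": 0.75,
    },
}


def summarize(sample):
    """Return the GPU's share of package power and the power not
    attributed to the GPU, CPU, or DRAM rails."""
    attributed = sample["gpu"] + sample["cpu"] + sample["dram"]
    return {
        "gpu_share": sample["gpu"] / sample["package"],
        "unattributed_w": sample["package"] - attributed,
    }


if __name__ == "__main__":
    for name, sample in POWER_W.items():
        s = summarize(sample)
        print(f"{name}: GPU is {s['gpu_share']:.0%} of package power, "
              f"{s['unattributed_w']:.2f} W unattributed")

    # Assumption: the 39.6fps result and the power capture are the same run.
    rottr_fps = 39.6
    rottr_package_w = POWER_W["Rise of the Tomb Raider (Enthusiast)"]["package"]
    print(f"Rise of the Tomb Raider: {rottr_fps / rottr_package_w:.1f} fps per package Watt")
```

By this accounting the GPU draws under half of the package power in the game but close to nine-tenths in Aztec, with roughly half a Watt in each case going to parts of the SoC that the three listed rails do not cover.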

Ultimately, these benchmarks are very solid proof that the M1’s integrated GPU is going to live up to Apple’s reputation for high-performing GPUs. The first Apple-built GPU for a Mac is significantly faster than any integrated GPU we’ve been able to get our hands on, and will no doubt set a new high bar for GPU performance in a laptop. Based on Apple’s own die shots, it’s clear that they spent a sizable portion of the M1’s die on the GPU and associated hardware, and the payoff is a GPU that can rival even low-end discrete GPUs. Given that the M1 is merely the baseline of things to come – Apple will need even more powerful GPUs for high-end laptops and their remaining desktops – it’s going to be very interesting to see what Apple and its developer ecosystem can do when the baseline GPU performance for even the cheapest Mac is this high.

682 Comments


tkSteveFOX - Friday, November 20, 2020 - link

Just imagine if they up the TDP to 40W on the next 3nm process next year?

Perhaps the CPU part won't get big gains (let's face it, CPU is better than anything on the market up to 60W), but GPU should double the performance. By that time 95% of apps will be native as well, so M1 performance will gain another 10-20% additional performance in all scenarios.

mdriftmeyer - Saturday, November 21, 2020 - link

GPU double performance. That's delusional right there. Nothing in Apple's licensed IP will ever touch AMD GPU performance moving forward. Your claim the CPU is better than anything on the market up to 60W is also delusional.

Enjoy 2021 when very little changes inside the M Series but AMD keeps moving forward. Being a NeXT/Apple alum I was hoping my former colleagues were wiser and moved to AMD three years ago and pushed back this ARM jump two more years.

ARM has reached its zenith in designs in the embedded space and that is the reason everything wowed is about Camera Lenses. Fab processes are reaching their zenith as well.

M1 is 12 years of ARM development by Apple's teams after buying PA Semi in 2008. It took 12 years with unlimited budgets to produce the M1. It's far less impressive than people realize.

Most of Apple's frameworks are already fully optimized after the past 8 years of in-house development. People keep thinking this code base is young. It's not. It's mature. We never released anything young back at NeXT or Apple Engineering. That hasn't change since I left. It's the mantra from day 1.

The architecture teams at AMD have decades more experience in CPU designs and nothing released here is something they haven't already worked on in-house.

Intel's arrogance is one of the greatest falls from the top in computing history. And it's only going to get worse for the next five years.

dontlistentome - Saturday, November 21, 2020 - link

Not sure when Intel will learn - they let the Gigahertz marketeers ruin them last time AMD had a lead, and this time it was the accountants. Wondering who screws them up again 15 years from now?

corinthos - Monday, November 23, 2020 - link

Intel made so many mistakes and brought in outsiders who just didn't have the goods to set it on a good path forward. The board members who chose these folks are partially to blame. Larrabee was just one of the earlier warning signs.

corinthos - Monday, November 23, 2020 - link

So are you saying that there's more limited growth opportunity for Apple going down the ARM path than people realize, and that the prospect of AMD producing competitive/superior low-powered processors is going to be much better?

For now, it seems that for the power consumed, the M1 products have a leg up in power-performance over Intel or AMD-based competing products. Can Apple take that and scale it upwards to be competitive or even a leader in the desktop space?

I think about how there are some test results coming in already showing how a 2019 Mac Pro with a 10-core cpu and expensive discrete amd gpu and loads of ram being outshined by these M1's in some video editing workloads and wonder if a powerhouse desktop is such a good investment these days. That thing came out like a year ago and cost probably around $10K.

Focher - Tuesday, November 24, 2020 - link

From your post, I suspect you are not going to enjoy the next 2 years. Saying things like the code is already fully optimized is so ridiculous on its face, it’s hard to believe someone wrote it. If time led to full optimization, then what’s the magic time horizon where that happens? If you think Apple just played its full hand with the M1, you’ve never paid attention to Apple.

blackcrayon - Tuesday, November 24, 2020 - link

I would think doubling GPU performance would be one of the easier updates they could make. More cores, more transistors with their existing design - the same thing they've done year after year to make "X" versions of their iPhone chips for the iPad. The M1 isn't at the point where doubling the GPU cores would make it gigantic and unsuitable for a higher end laptop or desktop. Unless you thought he meant "double Nvidia's best performance" or something which isn't going to be possible currently :)

zodiacfml - Friday, November 20, 2020 - link

Coming back here just to leave a comment though the M1 truly leaves any previous Apple x86 product in the dust, it is far from the performance of a Ryzen 4800U which has TDP of 15W. The M1 is at 5nm while consuming 20-24W.

The M1 iGPU is mighty though, which can only be equaled or beaten by next year/generation APU or Intel iGPU

thunng8 - Saturday, November 21, 2020 - link

The 4800u uses up to 50w running benchmarks and under load. You will never see a current gen Ryzen without active cooling. In laptops running benchmarks, the fans ramps up to 6000rpm while the m1 can run in the MacBook Air with no cooling with hardly any performance degradation. And there’s also the issue of battery life where the m1 laptop with a smaller battery can far outlast any Ryzen laptop.

In short you cannot compare intel and AMDs dubious tdp numbers with the number measured for the m1.

BushLin - Saturday, November 21, 2020 - link

M1 and 4800U (in 15W mode) are consuming similar 22-24W power when the 4800U is showing better Cinebench performance, no denying the single thread advantage of the M1 though.

If you've seen a 4800U anywhere near 50W, it won't have been in its 15W mode and running an unrealistic test like Prime95.

Just the facts Jack.