Intel’s Tiger Lake 11th Gen Core i7-1185G7 Review and Deep Dive: Baskin’ for the Exotic

by Dr. Ian Cutress & Andrei Frumusanu on September 17, 2020 9:35 AM EST - Posted in

- CPUs

- Intel

- 10nm

- Tiger Lake

- Xe-LP

- Willow Cove

- SuperFin

- 11th Gen

- i7-1185G7

- Tiger King

CPU Performance: Office and Web

Our previous set of ‘office’ benchmarks has often been a mix of science and synthetics, so this time we wanted to focus our office section purely on real-world performance.

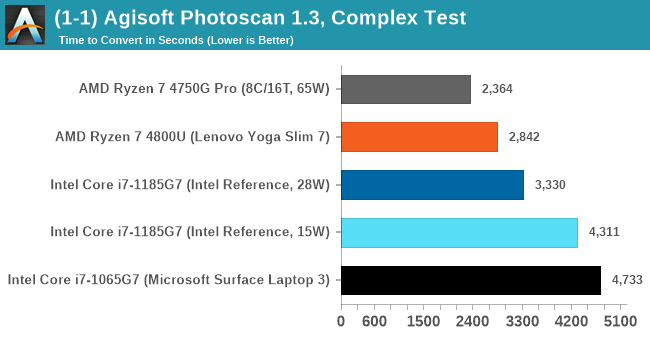

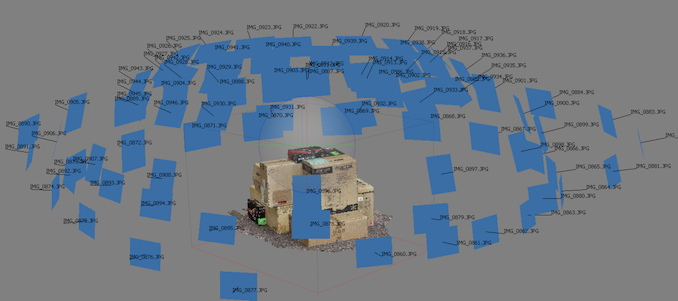

Agisoft Photoscan 1.3.3

Photoscan stays in our benchmark suite from the previous benchmark scripts, but is updated to the 1.3.3 Pro version. As this benchmark has evolved, features such as Speed Shift or XFR on the latest processors have come into play, because the workload has many segments with variable thread counts.

The concept of Photoscan is about translating many 2D images into a 3D model, so the more detailed the images, and the more of them you have, the better the final 3D model in both spatial accuracy and texturing accuracy. The algorithm has four stages, with some parts of the stages being single-threaded and others multi-threaded, along with some cache/memory dependency as well. For the more variably threaded parts of the workload, features such as Speed Shift and XFR can take advantage of CPU stalls or downtime, giving sizeable speedups on newer microarchitectures.

For the update to version 1.3.3, the Agisoft software now supports command-line operation. Agisoft provided us with a set of new images for this version of the test, and a Python script to run it. We’ve modified the script slightly by changing some quality settings for the sake of the benchmark suite length, as well as adjusting how the final timing data is recorded. The Python script dumps the results file in the format of our choosing. For our test we obtain the time for each stage of the benchmark, as well as the overall time.
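To illustrate the kind of per-stage timing described above, here is a minimal Python sketch that times a sequence of stages, records an overall time, and dumps the results as CSV. It is not Agisoft's script: the stage names, the stand-in stage functions, and the CSV layout are hypothetical placeholders, and no Photoscan API is used.

```python
import csv
import io
import time
from typing import Callable, Dict, List, Tuple

Stage = Tuple[str, Callable[[], None]]  # (stage name, function that runs it)


def time_stages(stages: List[Stage]) -> Dict[str, float]:
    """Run each stage in order, recording its wall-clock time in seconds,
    plus an 'Overall' entry that spans the whole run."""
    results: Dict[str, float] = {}
    overall_start = time.perf_counter()
    for name, run_stage in stages:
        start = time.perf_counter()
        run_stage()
        results[name] = time.perf_counter() - start
    results["Overall"] = time.perf_counter() - overall_start
    return results


def to_csv(results: Dict[str, float]) -> str:
    """Dump the timings in a format of our choosing: a two-column CSV."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["stage", "seconds"])
    for name, seconds in results.items():
        writer.writerow([name, f"{seconds:.3f}"])
    return buf.getvalue()


if __name__ == "__main__":
    # Hypothetical stand-ins for the four stages; a real harness would
    # invoke the application here instead of sleeping.
    demo_stages = [(f"Stage {i}", lambda: time.sleep(0.01)) for i in range(1, 5)]
    print(to_csv(time_stages(demo_stages)))
```

Keeping the overall timer separate from the per-stage timers means the overall figure also captures any overhead between stages, rather than just summing the stage times.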

The final result is a table that looks like this:

As explained in the power tests, AMD's Ryzen 7 4800U, with twice the cores, wins here, and due to the vector pressure it also wins on power efficiency. Tiger Lake still offers an uplift over Ice Lake at 15 W, although the 28 W mode is needed to get something sizeable.

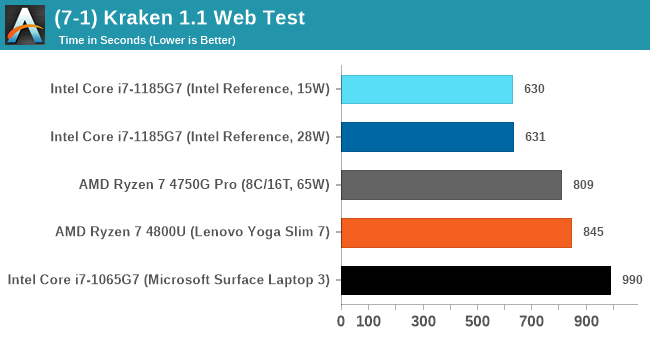

Mozilla Kraken 1.1

Kraken is a 2010 benchmark from Mozilla that runs a series of JavaScript tests. These tests are a little more involved than previous tests, covering artificial intelligence, audio manipulation, image manipulation, JSON parsing, and cryptographic functions. The benchmark starts with an initial download of data for the audio and imaging tests, and then runs through 10 times, giving a timed result.

Automation involves loading the webpage where the test is hosted and letting it run. All CPUs finish the test within a couple of minutes, so we use that as the end point and copy the page contents to the clipboard before parsing the result. Each run of the test on most CPUs takes from half a second to a few seconds.
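The parsing step can be sketched in a few lines of Python. This assumes the copied page text contains a SunSpider-style summary line such as `Total: 812.4ms +/- 1.2%`; that line format, the regular expression, and the `parse_kraken_total` name are our assumptions, not a documented Kraken output contract. Returning `None` flags a run that had not finished by the fixed end point.

```python
import re
from typing import Optional

# Assumed summary line in the copied page text (SunSpider-style harness),
# e.g. "Total:                 812.4ms +/- 1.2%"
TOTAL_RE = re.compile(r"Total:\s*(\d+(?:\.\d+)?)\s*ms")


def parse_kraken_total(page_text: str) -> Optional[float]:
    """Return the total runtime in milliseconds, or None if no 'Total:'
    line is present (i.e. the run had not finished when the page was copied)."""
    match = TOTAL_RE.search(page_text)
    if match is None:
        return None
    return float(match.group(1))


if __name__ == "__main__":
    clipboard = "RESULTS (means and 95% confidence intervals)\nTotal:   812.4ms +/- 1.2%\n"
    print(parse_kraken_total(clipboard))  # 812.4
    print(parse_kraken_total("Running..."))  # None
```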

Both Tiger Lake results are very fast, with little difference between the power modes. Intel pushes ahead of AMD here, and makes a sizeable jump over Ice Lake.

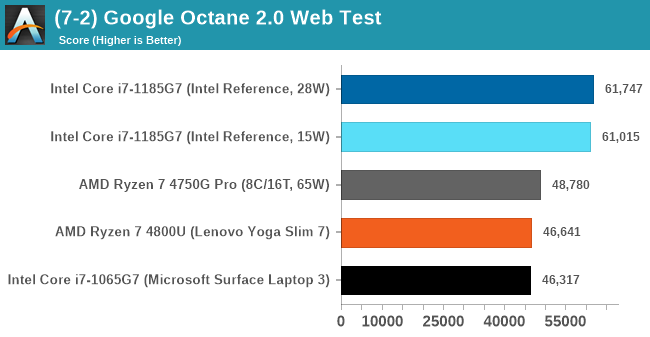

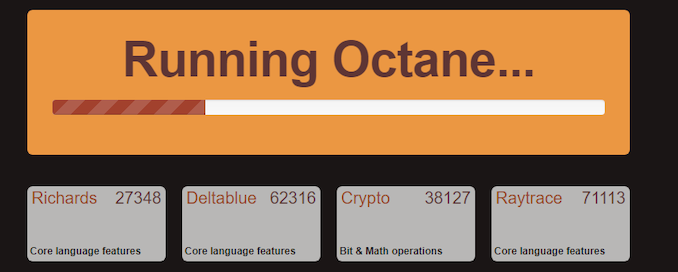

Google Octane 2.0

Our second test is also JavaScript-based, but uses a much wider variety of newer JS techniques, such as object-oriented programming, kernel simulation, object creation/destruction, garbage collection, array manipulation, compiler latency, and code execution.

Octane was developed after the discontinuation of other tests, with the goal of being more web-like than previous tests. It became a popular benchmark, which made it an obvious target for optimizations in JavaScript engines. Ultimately it was retired in early 2017 for this reason, although it is still widely used as a tool to gauge general CPU performance in a number of web tasks.

Octane’s automation is a little different from the others: there is no direct URL that runs the benchmark. The benchmark page is opened, but the user has to navigate to the ‘start’ button or open the console and run the JavaScript required to start the test. The test also does not show an obvious end point, but luckily it does aim for a fixed run time on each processor. This is similar to some of our other tests, which loop for a fixed time before ending. Unfortunately, this approach breaks down if the first loop runs past that fixed time, as the loop still has to finish. For Octane, we have set it to 75 seconds per run, and we loop the whole test four times.
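The catch with fixed-time loops can be shown with a small simulation. The hypothetical `run_for_budget` function below checks the time budget only between iterations, so an iteration that has already started always completes. The iteration durations are made-up inputs, not measured Octane data.

```python
from typing import Iterable, Tuple


def run_for_budget(iteration_times: Iterable[float], budget_s: float) -> Tuple[int, float]:
    """Simulate a fixed-time benchmark loop.

    A new iteration starts only while the elapsed time is below budget_s.
    The budget is checked between iterations, so an iteration that has
    started always runs to completion, even if it overshoots the budget.
    Returns (iterations completed, total elapsed seconds).
    """
    elapsed = 0.0
    completed = 0
    for duration in iteration_times:
        if elapsed >= budget_s:
            break  # budget reached: do not start another iteration
        elapsed += duration  # the started iteration must finish
        completed += 1
    return completed, elapsed


if __name__ == "__main__":
    BUDGET_S = 75.0  # per-run budget used for Octane in this review
    print(run_for_budget([20.0] * 10, BUDGET_S))  # (4, 80.0): slight overrun
    print(run_for_budget([90.0] * 10, BUDGET_S))  # (1, 90.0): first loop alone overshoots
```

With 20-second iterations the run ends at 80 s, only slightly past the budget; with a 90-second first iteration, a single loop already overshoots the 75-second target, which is the failure case described above.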

The Tiger Lake system sets new records in Octane. If there’s anything this system is fast at, it is web workloads.

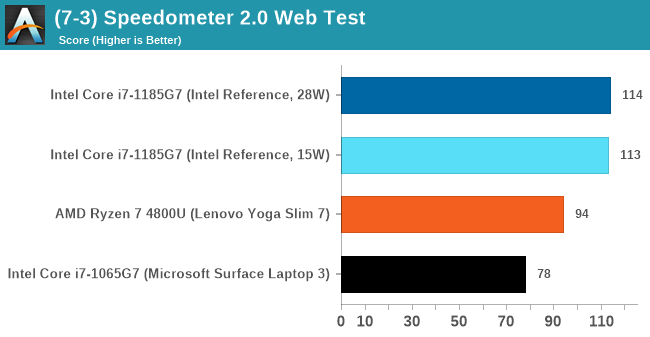

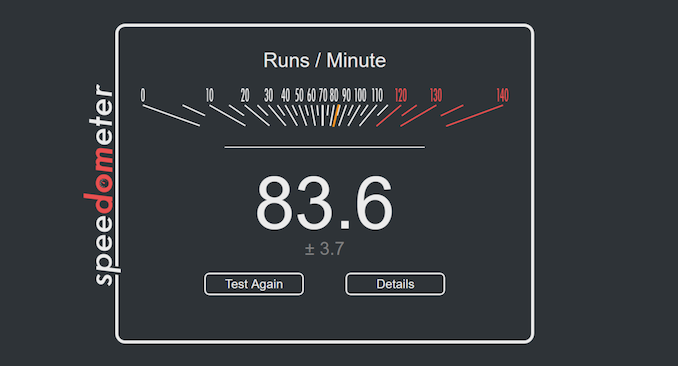

Speedometer 2: JavaScript Frameworks

Our newest web test is Speedometer 2, which runs a series of JavaScript frameworks through three simple tasks: build a list, enable each item in the list, and remove the list. All the frameworks implement the same visual cues, but obviously apply them from different coding angles.

Our test goes through the list of frameworks and produces a final score in ‘rpm’ (runs per minute), one of the benchmark’s internal metrics. Rather than use the main interface, we go to the admin interface through the about page and manage the results there. This involves saving the webpage when the test is complete and parsing the final result.

We repeat the benchmark for a dozen loops, taking the average of the last five.
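The averaging rule is simple enough to express directly. Below is a minimal Python sketch; the `speedometer_score` name is hypothetical, and treating the first seven runs as warm-up is our assumption about the rationale, not something stated by the benchmark.

```python
from statistics import mean
from typing import Sequence


def speedometer_score(runs: Sequence[float], total_runs: int = 12, keep_last: int = 5) -> float:
    """Average the last `keep_last` of `total_runs` scores (in runs per minute).

    The earlier runs are discarded; treating them as warm-up is an
    assumption of this sketch, not something the benchmark mandates.
    """
    if len(runs) != total_runs:
        raise ValueError(f"expected {total_runs} runs, got {len(runs)}")
    return mean(runs[-keep_last:])


if __name__ == "__main__":
    # Made-up scores for illustration only.
    scores = [100.0] * 7 + [110.0, 112.0, 108.0, 111.0, 109.0]
    print(speedometer_score(scores))  # 110.0
```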

Another good win for Tiger Lake.

253 Comments


SplinesNS - Friday, September 18, 2020

The Sardine oil and Tiger King references make it hard for an international reader to actually make sense of content here. I am not sure who the target audience is for this website but I would kindly request you not to use very culture specific references on a technology website.

Bik - Friday, September 18, 2020

It is subjective, but I think it fun and I'm an international reader. Without these references the article would be too dry. I think one can still get 100% technical detail and not knowing the puns.

Spunjji - Saturday, September 19, 2020

Seconded.

Samus - Friday, September 18, 2020

This is criminal. They are going to sell a CPU of the same model and allow OEMs to have it perform vastly different without disclosing the actual performance? What's next, bring back the PR rating?

Spunjji - Friday, September 18, 2020

To be fair, this isn't new. Intel CPUs have differed significantly in performance depending on cooling implementation on the final product for a while, and AMD have similar issues now.

Oxford Guy - Sunday, September 20, 2020

How new this is is less important than the fact that it's a scam.

Spunjji - Sunday, September 20, 2020

It's relevant when someone's talking about it like it's a new problem..?

maroon1 - Friday, September 18, 2020

Clock for clock comparison is useless

The fact that Tiger Lake beats Ice Lake at same power means that Tiger Lake is superior out of the two. Period

Also iGPU performance boost is huge. It beats 65w APU in some cases. But there is some inconsistency because some cases it does not beat 15w APU. It might be because of drivers ??!

yeeeeman - Friday, September 18, 2020

Damn, willow cove is actually lower ipc vs sunny??? Wow, that was unexpected! Intel needs to improve the ipc of the next gen core massively if they want to stay on top. I know the rumours say 50% better than skylake but even that, if it will happen will not be sufficient.

m53 - Friday, September 18, 2020

Willow cove has ~20% better IPC than Zen2. Golden Cove is rumored to add another 25% taking the lead to ~45% by mid 2021. Will Zen3 be able to close the 45% IPC deficit? AMD says no. By their own best case projection they expect 15% IPC. That would put Zen3 at a 30% IPC deficit vs Golden Cove. As you can see the IPC deficit keeps widening.