AMD Ryzen 5 3600 Review: Why Is This Amazon's Best Selling CPU?

by Dr. Ian Cutress on May 18, 2020 9:00 AM EST

CPU Performance: Synthetic, Web and Legacy Tests

Web-based benchmarks matter most for low-end and small form factor systems, but they are notoriously difficult to standardize. Modern web browsers are frequently updated, with no recourse to disable those updates, and as such there is difficulty in keeping a common platform. The fast-paced nature of browser development means that version numbers (and performance) can change from week to week. Despite this, web tests are often a good measure of user experience: a lot of today's office work revolves around web applications, particularly email and office apps, but also interfaces and development environments. Our web tests include some of the industry standard tests, as well as a few popular but older tests.

We have also included our legacy benchmarks in this section, representing a stack of older code for popular benchmarks.

All of our benchmark results can also be found in our benchmark engine, Bench.

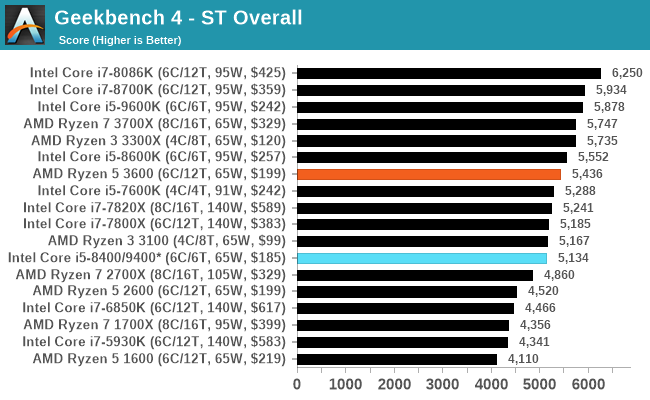

GeekBench4: Synthetics

A common tool for cross-platform testing between mobile, PC, and Mac, GeekBench 4 is an ultimate exercise in synthetic testing across a range of algorithms looking for peak throughput. Tests include encryption, compression, fast Fourier transform, memory operations, n-body physics, matrix operations, histogram manipulation, and HTML parsing.

I’m including this test due to popular demand, although the results do come across as overly synthetic. Many users put a lot of weight behind the test because it is compiled across different platforms (although with different compilers).

We record the main subtest scores (Crypto, Integer, Floating Point, Memory) in our benchmark database, but for the review we post the overall single and multi-threaded results.

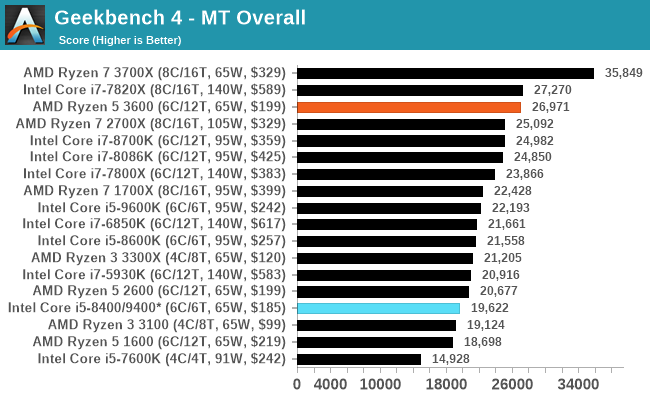

Speedometer 2: JavaScript Frameworks

Our newest web test is Speedometer 2, which is an aggregate test that runs a series of JavaScript frameworks through three simple tasks: build a list, enable each item in the list, and remove the list. All the frameworks implement the same visual cues, but obviously apply them from different coding angles.

Our test goes through the list of frameworks and produces a final score indicative of ‘rpm’, one of the benchmark's internal metrics. We report this final score.

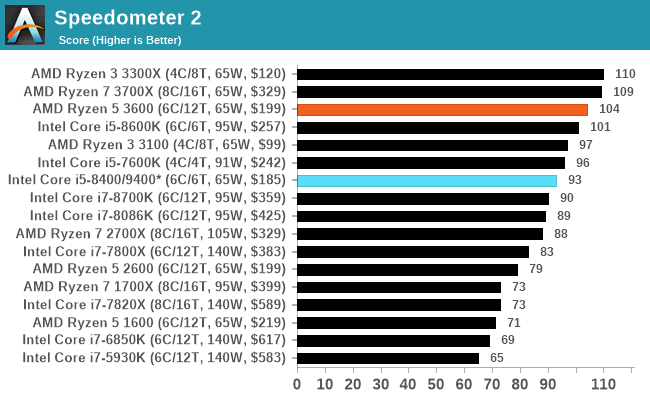

Google Octane 2.0: Core Web Compute

A popular web test for several years, but now no longer being updated, is Octane, developed by Google. Version 2.0 of the test performs the best part of two-dozen compute related tasks, such as regular expressions, cryptography, ray tracing, emulation, and Navier-Stokes physics calculations.

The test gives each sub-test a score and produces a geometric mean of the set as a final result. We run the full benchmark four times, and average the final results.
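As a rough illustration of the scoring described above, the sketch below takes a geometric mean of sub-test scores for each run and then averages the per-run results. The function names and the sample scores are hypothetical, and the real benchmark has many more sub-tests than the three shown here.

```python
import math


def geometric_mean(scores):
    """Geometric mean of positive scores, computed via logarithms."""
    if not scores or any(s <= 0 for s in scores):
        raise ValueError("scores must be a non-empty list of positive numbers")
    return math.exp(sum(math.log(s) for s in scores) / len(scores))


def reported_result(runs):
    """Arithmetic mean of the per-run geometric means."""
    finals = [geometric_mean(run) for run in runs]
    return sum(finals) / len(finals)


# Hypothetical sub-test scores for four full runs (three sub-tests each).
runs = [
    [100.0, 400.0, 1600.0],
    [110.0, 400.0, 1600.0],
    [100.0, 420.0, 1600.0],
    [100.0, 400.0, 1650.0],
]

print(round(reported_result(runs), 1))
```

A geometric mean keeps one very high-scoring sub-test from dominating the final number, which is a common reason to prefer it for benchmark suites with sub-tests on different scales.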

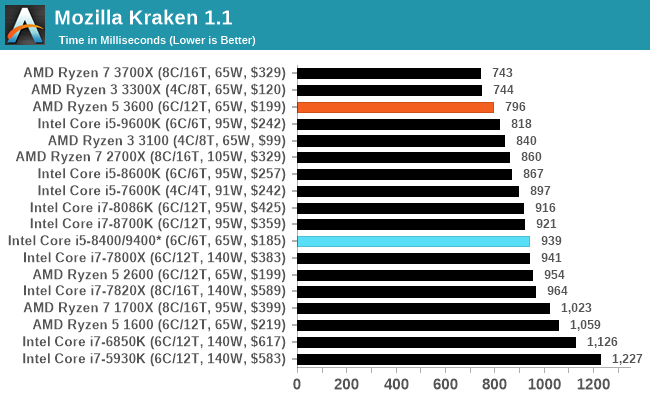

Mozilla Kraken 1.1: Core Web Compute

Even older than Octane is Kraken, this time developed by Mozilla. This is an older test that does similar computational mechanics, such as audio processing or image filtering. Kraken seems to produce a highly variable result depending on the browser version, as it is a test that browsers are keenly optimized for.

The main benchmark runs through each of the sub-tests ten times and produces an average time to completion for each loop, given in milliseconds. We run the full benchmark four times and take an average of the time taken.
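The averaging described above can be sketched as follows. This is a minimal illustration with made-up timings, assuming one list of ten loop times (in milliseconds) per full run; the function names are hypothetical and not part of Kraken itself. Unlike Octane's score, this is a time, so lower is better.

```python
from statistics import mean


def run_average_ms(loop_times_ms):
    """Average time to completion across the loops of one full run."""
    return mean(loop_times_ms)


def reported_time_ms(runs):
    """Average of the per-run averages across all full runs."""
    return mean([run_average_ms(run) for run in runs])


# Hypothetical data: four full runs, ten loops each, in milliseconds.
runs = [[800.0 + offset + i for i in range(10)] for offset in (0, 2, 4, 6)]

print(reported_time_ms(runs))
```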

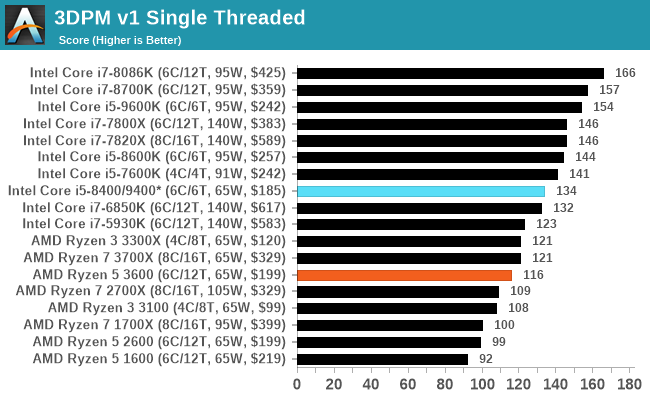

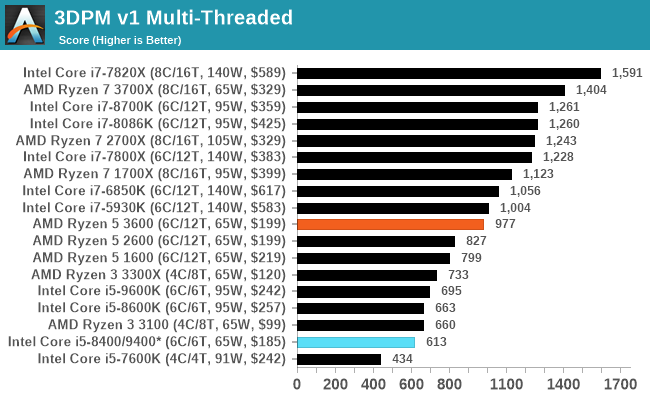

3DPM v1: Naïve Code Variant of 3DPM v2.1

The first legacy test in the suite is the first version of our 3DPM benchmark. This is the ultimate naïve version of the code, as if it was written by a scientist with no knowledge of how computer hardware, compilers, or optimization works (which, in fact, it was at the start). This represents a large body of scientific simulation out in the wild, where getting the answer is more important than it being fast (getting a result in 4 days is acceptable if it’s correct, rather than sending someone away for a year to learn to code and getting the result in 5 minutes).

In this version, the only real optimization was in the compiler flags (-O2, -fp:fast), compiling it in release mode, and enabling OpenMP in the main compute loops. The loops were not configured for function size, and one of the key slowdowns is false sharing in the cache. It also has long dependency chains based on the random number generation, which leads to relatively poor performance on specific compute microarchitectures.
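To make the dependency-chain point concrete, here is a small conceptual sketch. It is not the 3DPM code, and the source does not say which random number generator 3DPM v1 uses; the linear congruential generator and its constants (the well-known Numerical Recipes values) are an assumption chosen purely for illustration. In the serial version every value needs the previous one, so no step can start early. With one generator per stream, the chains are independent of each other and a wide core (or several threads) could advance them side by side.

```python
# Numerical Recipes LCG constants: an illustrative choice, not 3DPM's generator.
A, C, M = 1664525, 1013904223, 2**32


def lcg_next(state):
    """Advance the generator by one step; the output depends on the input state."""
    return (A * state + C) % M


def serial_chain(seed, n):
    """One generator: value i cannot be computed until value i-1 is known."""
    out, state = [], seed
    for _ in range(n):
        state = lcg_next(state)
        out.append(state)
    return out


def independent_streams(seeds, n_per_stream):
    """One generator per stream: each chain depends only on its own history."""
    states = list(seeds)
    out = [[] for _ in seeds]
    for _ in range(n_per_stream):
        for i, state in enumerate(states):
            states[i] = lcg_next(state)
            out[i].append(states[i])
    return out


print(serial_chain(1, 3))
print(independent_streams([1, 2], 3))
```

Python will not show the performance difference itself; the sketch only shows the data dependencies that limit how much work the hardware can overlap.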

3DPM v1 can be downloaded with our 3DPM v2 code here: 3DPMv2.1.rar (13.0 MB)

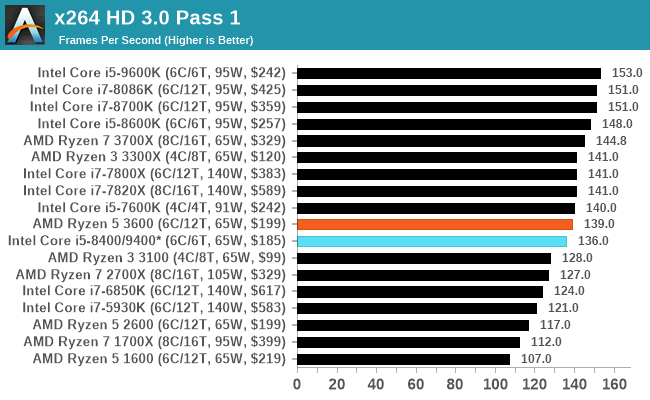

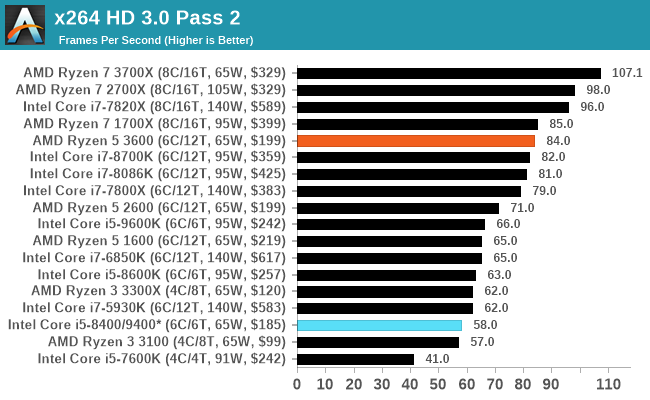

x264 HD 3.0: Older Transcode Test

This transcoding test is super old, and was used by Anand back in the day of Pentium 4 and Athlon II processors. Here a standardized 720p video is transcoded with a two-pass conversion, with the benchmark showing the frames-per-second of each pass. This benchmark is single-threaded, and between some micro-architectures we seem to actually hit an instructions-per-clock wall.

114 Comments

Sonik7x - Monday, May 18, 2020 - link

Would be nice to see 1440p benchmarks across all games, also would be nice to see a comparison against an i7-5930K running which is also a 6c/12T CPU

ET - Monday, May 18, 2020 - link

Would be even nicer to see newer games. Anandtech reviews seem to be stuck in 2018, both for games and for apps, and that makes them a lot less relevant a read than they could be.

Dolda2000 - Monday, May 18, 2020 - link

You exaggerate. The point of a benchmark suite can't really be to contain the specific workload you're going to put on the CPU (since that's extremely unlikely to be the case anyway), but to be representative of typical workloads, and I think the application selection here is quite adequate for that. In comparison, I find it much more important to have more comparison data in the Bench database. There may be a stronger case to be made for games, but I find even that slightly doubtful.

MASSAMKULABOX - Saturday, May 23, 2020 - link

Not only that, but slightly older games are much more stable and have most of the performance ironed out. New games are getting patches and downloads all the time, which often affect performance. I want to see "E" versions, i.e. 35/45w

ThreeDee912 - Monday, May 18, 2020 - link

They already mentioned in the 3300X review they'll be going back and adding in new games like Borderlands 3 and Gears Tactics: https://www.anandtech.com/show/15774/the-amd-ryzen...

flyingpants265 - Monday, May 18, 2020 - link

I haven't used AnandTech benchmarks for years. They don't use enough CPUs/GPUs, they never include enough results from the previous generations, which is the most important thing when considering upgrades and $ value. Also, the "bench" tool does not include enough tests or hardware.

jabber - Tuesday, May 19, 2020 - link

Yeah nothing annoys me more than Tech Site benchmarks that only compare the new stuff to hardware that came out 6 months before it. If I see say a new GPU tested I want to see how it compares to my RX480 (that a lot of us will be looking to upgrade this year) rather than just a 5700XT.

johnthacker - Tuesday, May 19, 2020 - link

Eh, nothing annoys me more than Tech Site benchmarks that only compare the new stuff to other new stuff. If I have an existing GPU or CPU and I'm not sure if it's worth it for me to upgrade or stick with what I've got, I want to see how something new compares to my existing hardware so I can know whether it's worth upgrading or whether I might as well wait.

Pewzor - Monday, May 18, 2020 - link

I mean Gamer's Nexus uses old games as well.

Crazyeyeskillah - Tuesday, May 19, 2020 - link

Just to make this crystal clear, the reason they HAVE to use older games is because all of the PAST data has been run using those games. Most review sites only get sample hardware for a week or less to run the tests then return it in the mail. You literally wouldn't have anything to compare the data to if you only ran tests on the latest and greatest games and benchmarks.

When I see people making this complaint I understand that they are new to computers and just want them to understand that there is a reason why benchmarks are limited. Most hardware review sites don't make any money, or if they do, it's enough to pay one or MAYBE two staff members (poorly.) Ad revenue is garbage due to ad blockers on all your browsers, and legitimate sites that don't spam clickbaity rumors as news are shutting down. Just look what happened to Hardocp.com, one of the last true honest review sites.

The idea that hardware sites all have stockpiles of every system imaginable and the thousands of hours it would take to constantly set up and run all the new games and benchmarks is pretty comical.