AMD’s Mobile Revival: Redefining the Notebook Business with the Ryzen 9 4900HS (A Review)

by Dr. Ian Cutress on April 9, 2020 9:00 AM EST

Testing the Ryzen 9 4900HS Integrated Graphics

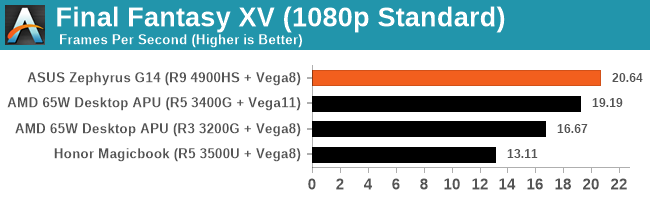

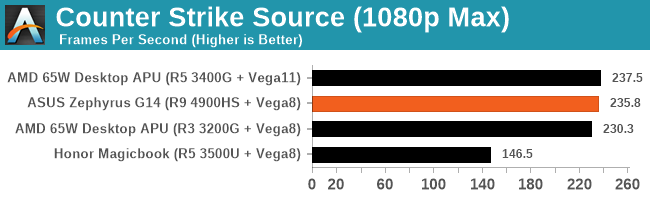

Under the hood of the Ryzen 9 4900HS, aside from the eight Zen 2 cores, is an enhanced Vega 8 graphics solution. For this generation of mobile processors, AMD is keeping the top number of compute units to 8, whereas in the previous generation it went up to Vega 11. Just by the name, one would assume that AMD has lowered the performance of the integrated graphics. This is not the case.

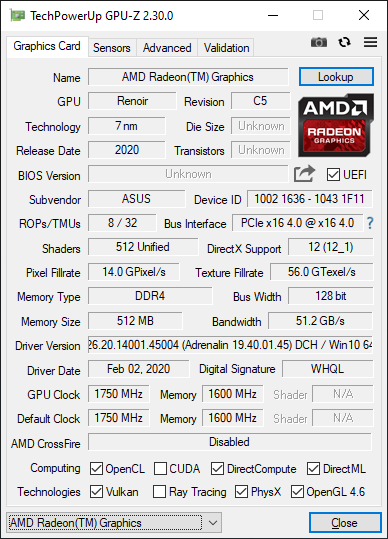

For the new Ryzen Mobile 4000 processors, the Vega graphics are enhanced in three main ways over the previous generation. First, the chip is built on the 7nm process node, and AMD put a lot of effort into physical design, allowing for a more optimized version with a wider voltage/frequency window than before. Second, and somewhat connected, is the frequency: the new processors top out at 1750 MHz rather than 1400 MHz, which would naturally give a simple 25% boost with all other things being equal. Third is memory: the new platform supports up to DDR4-3200 rather than DDR4-2400, providing an immediate bandwidth boost, which is what integrated graphics loves. There’s also the nature of the CPU cores themselves, which have larger L3 caches; this often improves integrated graphics workloads that interact a lot with the CPU.
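As a rough back-of-the-envelope check on the frequency and memory figures above, the sketch below computes the clock uplift and the theoretical peak memory bandwidth for both memory speeds. It assumes a dual-channel configuration with a 64-bit (8-byte) bus per channel, which the text does not state, and the function names are purely illustrative. Real-world bandwidth will be lower than these peak numbers.

```python
def percent_uplift(new: float, old: float) -> float:
    """Relative increase of `new` over `old`, in percent."""
    return (new - old) / old * 100.0


def ddr4_peak_bandwidth_gbs(mega_transfers: int,
                            channels: int = 2,
                            bus_bytes: int = 8) -> float:
    """Theoretical peak bandwidth in GB/s.

    Assumption (not from the article): dual-channel memory with a
    64-bit (8-byte) bus per channel.
    """
    return mega_transfers * 1e6 * bus_bytes * channels / 1e9


# GPU clock: 1400 MHz (previous generation) vs 1750 MHz (Ryzen Mobile 4000)
clock_gain = percent_uplift(1750, 1400)

# Memory: DDR4-2400 vs DDR4-3200
old_bw = ddr4_peak_bandwidth_gbs(2400)
new_bw = ddr4_peak_bandwidth_gbs(3200)
bw_gain = percent_uplift(new_bw, old_bw)

print(f"GPU clock uplift:      {clock_gain:.1f}%")
print(f"DDR4-2400 peak:        {old_bw:.1f} GB/s")
print(f"DDR4-3200 peak:        {new_bw:.1f} GB/s")
print(f"Peak bandwidth uplift: {bw_gain:.1f}%")
```

Under these assumptions, the memory speed increase is proportionally larger than the clock increase, which matters because integrated graphics share that bandwidth with the CPU.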

Normally, with the ASUS Zephyrus G14, switching between the integrated graphics and the discrete graphics should be automatic. There is a setting in the NVIDIA Control Panel that lets the system auto-switch between the two, and we would expect the system to be on the IGP when off wall power, but on the discrete card when gaming (note: we had issues in our battery life test where the discrete card was on, but ASUS couldn’t reproduce the issue). Because the NVIDIA Control Panel didn’t seem to catch all of our tests and force them onto the integrated graphics, we went into Device Manager and disabled the NVIDIA graphics outright.

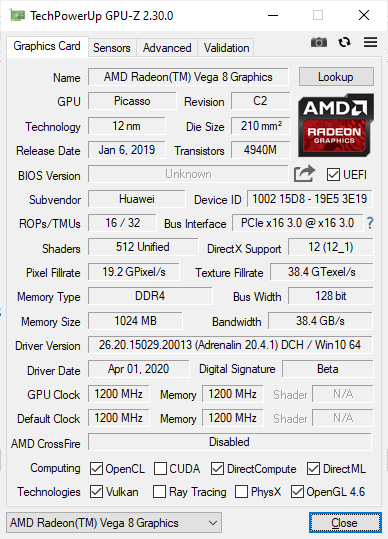

This left us with AMD’s best integrated graphics in its Ryzen Mobile 4000 series: 1750 MHz of enhanced Vega 8 running at DDR4-3200.

Renoir with Vega 8 – updated to 20.4 after this screenshot was taken

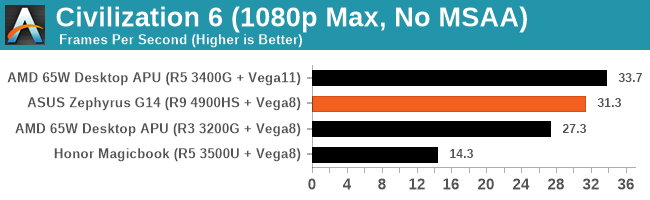

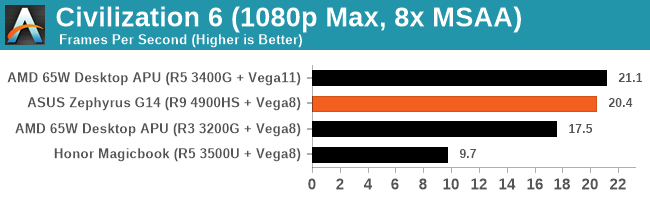

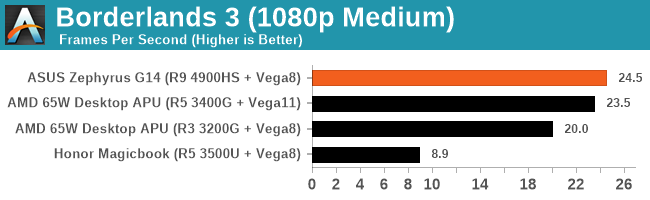

Our comparison point here is actually a fairly tricky one to set up. Unfortunately we do not have a Ryzen 7 3750H from the previous generation for comparison, but we do have an Honor Magicbook 14, which has a Ryzen 5 3500U.

This is a 15 W processor, running at 1200 MHz and DDR4-2400, which again makes the comparison a little tricky, but it is better than comparing it to the Intel HD630 graphics in the Razer Blade.

We also re-ran the benchmarks on the latest drivers with AMD's 65 W desktop APUs, the Ryzen 5 3400G (with Vega 11) and the Ryzen 3 3200G (with Vega 8). These are running at DDR4-2933, the maximum memory speed AMD officially supports on these APUs (which means anything above this is overclocking).

This is a pretty substantial difference, no joke.

Hopefully we will get more variants of the Ryzen integrated graphics to test, along with an Ice Lake system.

267 Comments

Qasar - Thursday, April 9, 2020 - link

peachncream i actually have opened the notebooks up after i have had them for a while, and just unplugged the ribbon cable, and then removed the tape i put over it :-)

"if the Armoury Crate option is enabled in the BIOS it will ask to install it." problem solved, just disable it in the bios. did you even read this part ? :-) :-) i bet the finger scanner could be disabled as well.... but you would probably stick with the ancient notebook you have anyway, so no difference :-)

PeachNCream - Thursday, April 9, 2020 - link

I did miss the part where the installer could be disabled. Thanks for catching that. As for disabling finger readers, that's a setting I don't really trust to work. A physical barrier is really the only sure way to keep yourself safe.

In the end, you are right. I will likely use older hardware, however, as time moves forward that older hardware ends up being pretty useless so I get newer older hardware. Security holes like these tend to percolate down to the secondary market over time so I hope that integration of print scanners remains a niche, but I see falling costs and slow yet steady spread so it may one day be a problem for even information security professionals like us to avoid this sort of hole.

eastcoast_pete - Thursday, April 9, 2020 - link

I actually share your dislike for a built-in webcam that doesn't have a slider integrated in it. Unfortunately, that seems to be the last thing on the mind of many laptop designers. I would like a webcam in my laptop, as I often have to videoconference with clients, even when we're not under a "shelter in place" order.

Fataliity - Friday, April 10, 2020 - link

Just use a phone or buy a decent webcam for 20-50 bucks. The quality on built in laptop cameras is horrendous. literally anything is better.

Kamen Rider Blade - Thursday, April 9, 2020 - link

Dr. Ian Cutress, your Inter-Core Latency table might have a few mistakes on it!!!!

How is it that a 3900X can have consistent Latency when it crosses CCX/CCD boundaries:

https://i.redd.it/mvo9nk2r94931.png

Yet your 3950X has Zen+ like latency when it crosses CCX/CCD boundaries?

Did you screw up your table when you copied & pasted?

mattkiss - Thursday, April 9, 2020 - link

For Zen 2 desktop CPUs, the CCD/IOD link for memory operations is 32B/cycle while reading and 16B/cycle for writing. I am curious what the values are for the Renoir architecture. Also, I would be interested in seeing an AIDA64 memory benchmark run for the review system for both DDR4 3200 and 2666.

Khato - Thursday, April 9, 2020 - link

The investigation regarding the low web browsing battery life result on the Zephyrus G14 is quite interesting. One question though, was the following statement confirmed? "With the Razer Blade, it was clear that the system was forced into a 60 Hz only mode, the discrete GPU gets shut down, and power is saved."

Few reasons for that question. The numbers and analysis in this article piqued my curiosity due to how close the Razer Blade and 120Hz Asus Zephyrus numbers were. Deriving average power use from those run times plus battery capacity arrives at 16.3W for the 120Hz Asus Zephyrus, 14W for the Razer Blade, and 6.1W for the 60Hz Asus Zephyrus. So roughly a 10W delta for increased refresh rate plus discrete graphics. Performing the same exercise on the recent Surface Laptop 3 review yields 6.1W for the R7 3780U variant and 4.5W for the i7 1065G7 variant. Note that the R7 3780U variant shows same average power consumption as the 60Hz Asus Zephyrus, while the Razer Blade is 9.5W/3x higher than the i7 1065G7 variant. It makes no sense for Intel efficiency to be that much worse... unless the discrete graphics is still at play.

The above conclusion matches up with the only laptop I have access to with discrete graphics, an HP zbook G6 with the Quadro RTX 3000. On battery with just normal web browser and office applications open the discrete graphics is still active with hwinfo reporting a constant 8W of power usage.

Fataliity - Friday, April 10, 2020 - link

That's partly because for a Zen2 core to ramp up to turbo, it uses much less power. Intel can hit their 35W budget from one core going up to 4.5-4.8Ghz. Ryzen can hit their 4.4 at about 12W. And it turbos faster too. So it uses less power, finishes the job quicker, is more responsive, etc.

For an example, look at the new Galaxy S20 review on here with the 120hz screen. When its turned on it shaves off over 50% of its battery life.

Khato - Friday, April 10, 2020 - link

Those arguments could have some merit if the results were particular to the web browsing battery life tests. However, the exact same trend exists for both web browsing and video playback, and h.264 playback doesn't require a system to leave minimum CPU frequency. This is clear evidence that the difference in power consumption has nothing to do with compute efficiency of the CPU, but rather the platform.

Regarding the comparison to the S20. Performing the same exercise of dividing battery Wh by hours of web browsing battery life run time for the S20 Ultra with Snapdragon 865 arrives at 1.37W at 60Hz and 1.7W at 120Hz. Even if you assumed multiplicative scaling that would only increase the 6.1W figure for the 60Hz Asus Zephyrus up to 7.6W... and it's not multiplicative scaling.

As far as I can tell from my own limited testing, Optimus simply isn't working like it should. It's frequently activating the discrete GPU on trivial windows workloads which could easily be handled by the integrated graphics. My guess is that this is the normal state for Intel based windows laptops with discrete NVIDIA graphics. Wouldn't necessarily affect AMD as driver setup is different, which is definitely a selling point for AMD unless Intel/NVIDIA take notice and fix their driver to match.

eastcoast_pete - Thursday, April 9, 2020 - link

Thanks Ian, glad I waited with my overdue laptop refresh! Yes, it'll be Intel outside this time, unless the i7 plus dGPU prices come down a lot; the Ryzen 4800/4900 are the price/performance champs in that segment for now.

The one fly in the ointment is the omission of a webcam in the Zephyrus. I can (and prefer to) do without the LED bling on the lid cover, but really need a webcam, especially right now with "shelter in place" due to Covid. However, I don't think ASUS designed the Zephyrus with someone like me in mind. Too bad, maybe another Ryzen 4800 laptop will fit the bill.