Amazon's Arm-based Graviton2 Against AMD and Intel: Comparing Cloud Compute

by Andrei Frumusanu on March 10, 2020 8:30 AM EST

Posted in: Servers, CPUs, Cloud Computing, Amazon, AWS, Neoverse N1, Graviton2

SPEC - MT Performance (16xlarge 64vCPU)

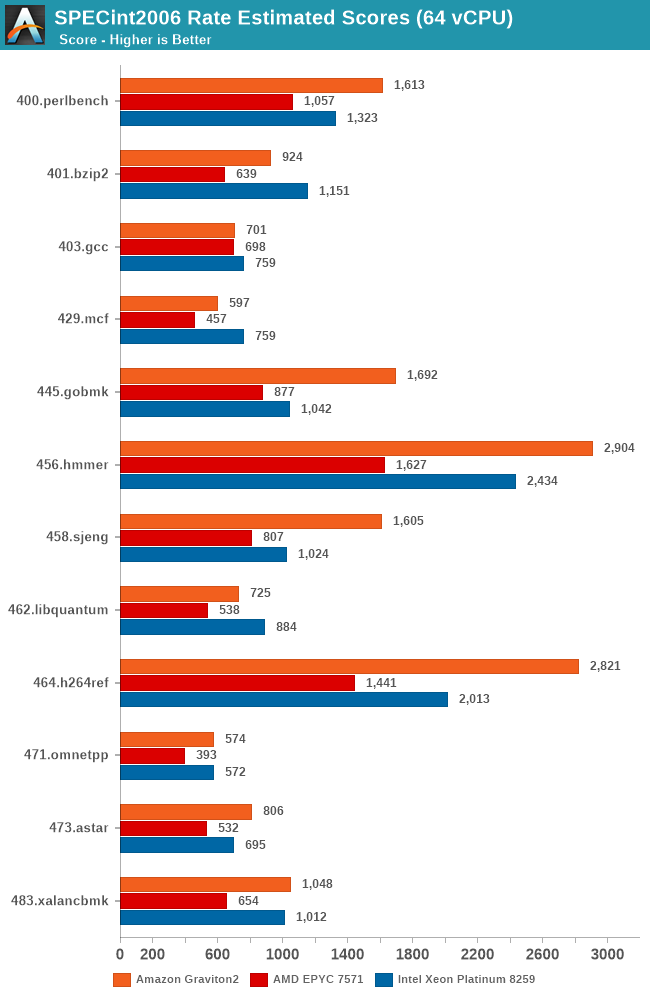

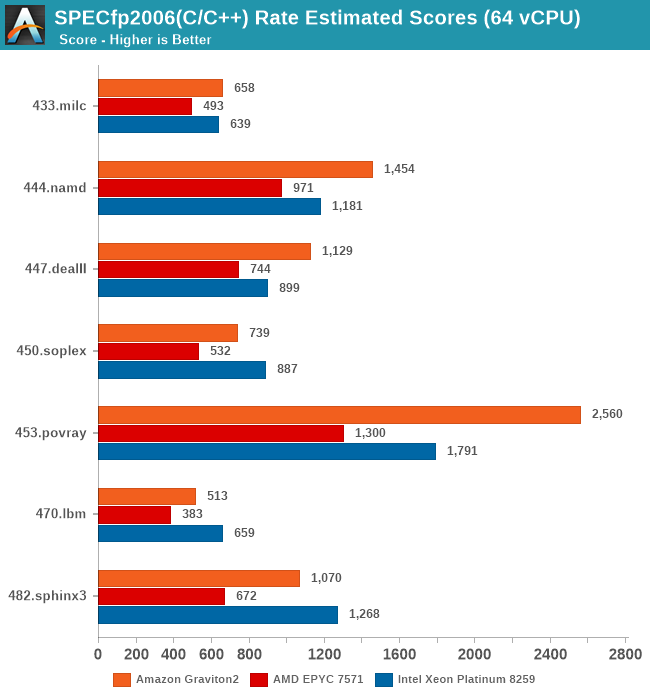

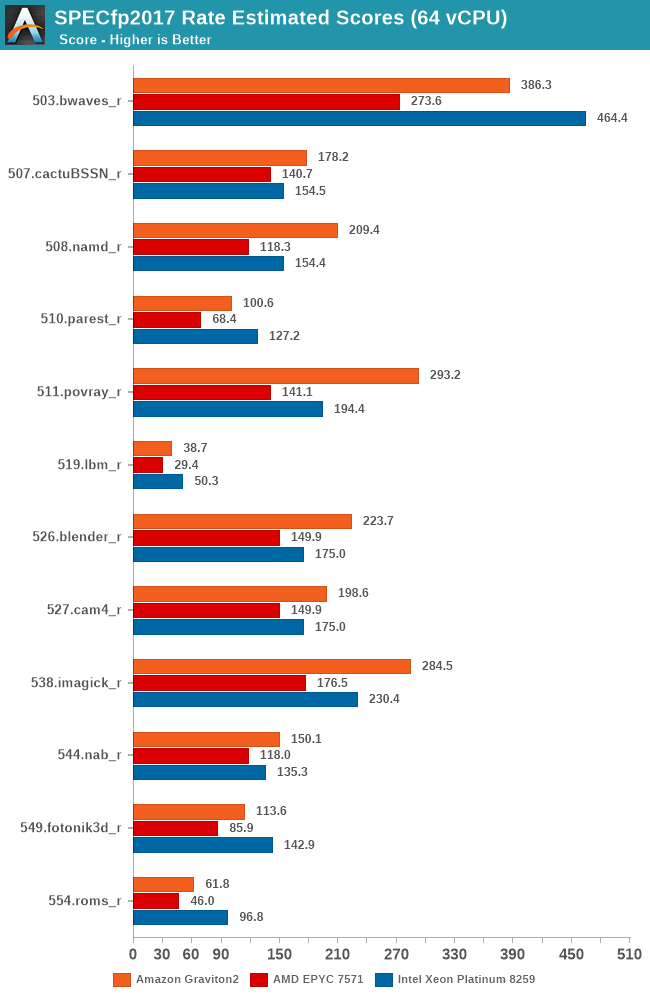

While the core scaling figures are interesting from an academic standpoint, what’s even more interesting is seeing the absolute throughput numbers compared to the competition. We’re starting off with SPECrate results with 64-rate runs, fully utilising the vCPUs of the EC2 16xlarge instances.

Again, there’s the conundrum of the apples-and-oranges comparison between the Graviton2’s 64 physical cores and the 32-core-plus-SMT setups of the AMD and Intel platforms, but again, that’s how Amazon is positioning these systems in terms of throughput capacity and instance pricing. You could argue that if you can parallelise your workload beyond a certain number of threads, it doesn’t matter whether you achieve the higher throughput through more cores or through mechanisms such as SMT. Remember, when talking about silicon die area, you could probably fit at least 2 N1 cores in the same area as an AMD Zen core or an Intel core (probably an even higher number in the latter comparison).

The Graviton2’s performance is absolutely impressive across the board, beating the Intel Cascade Lake system by quite large margins in a lot of the workloads. AMD’s Epyc system here doesn’t fare well at all and is showing its age.

It’s particularly in the non-memory bound workloads that the Graviton2 manages to position itself significantly ahead, and here the two-fold physical core lead, with essentially double the execution resources, shows its benefits.

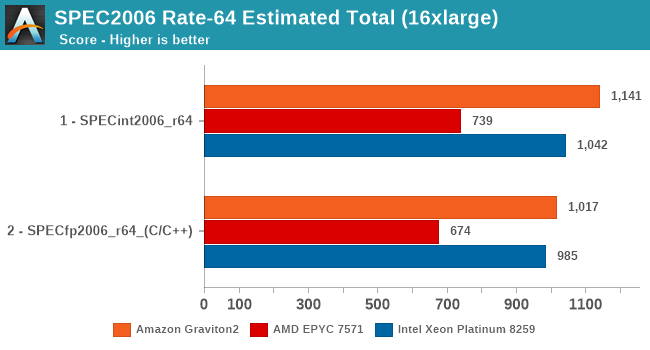

In the overall SPECrate2006 results, the Graviton2 is shy of Arm’s projection of a 1300 score, but again the Amazon chip does clock in a bit lower and has less cache than what Arm had envisioned in their presentations a year ago.

Nevertheless, the Graviton2 has the performance lead here even against the Intel Cascade Lake based EC2 instances, which is quite surprising given the latter’s cost structure, and an indicator of what’s to come later in the cost analysis.

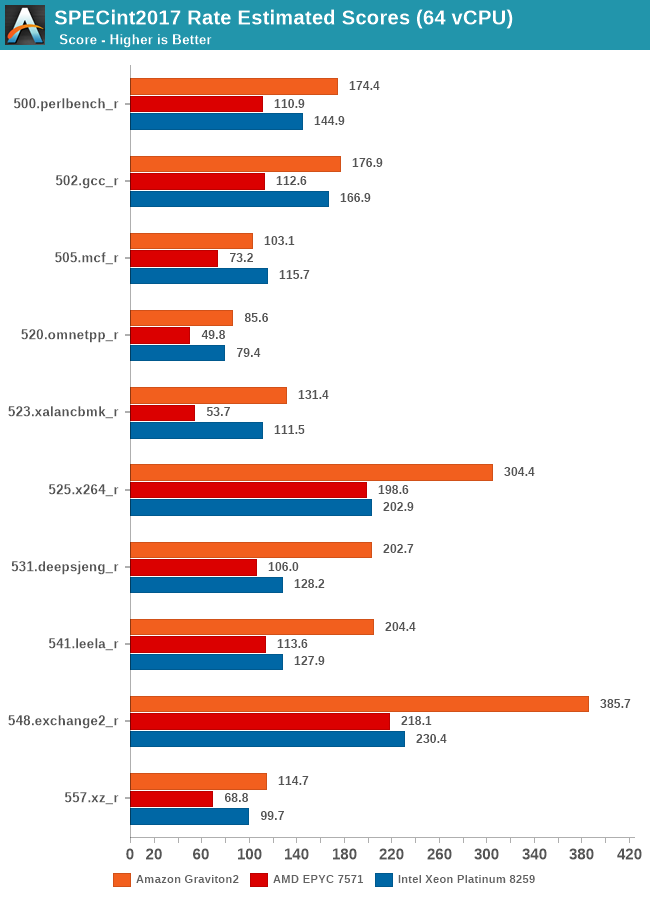

Arm’s physical core count advantage here continues to show in the execution intensive workloads of SPECint2017, showcasing some very large performance leads in many workloads. The performance lead on important workloads such as 502.gcc, however, again isn’t too great over the Intel system; Amazon and Arm could definitely do better here if the chip had more cache available.

In SPECfp2017, there are more workloads in which the Xeon system’s 2-socket setup with a 50% memory channel advantage does show up: it provides more available bandwidth and thus gives the more memory intensive workloads in this suite a good performance advantage over the Graviton2 system. Still, the Arm chip fares very competitively and does put the older AMD EPYC processor in its place, and yes again, we have to remind ourselves that things would be quite different here if we were able to include Rome in our charts.
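The memory channel arithmetic behind that comparison can be sketched roughly as follows. This is a back-of-the-envelope illustration, not a measurement: the channel counts (six per Xeon socket, eight on the Graviton2) are chosen to match the 50% figure above, while the DRAM transfer rates and the helper name `peak_bandwidth_gbs` are assumptions made for this sketch.

```python
# Theoretical peak DRAM bandwidth from channel count and transfer rate.
# Channel counts match the 50% advantage quoted in the text; the transfer
# rates below are ASSUMED for illustration and are not taken from the article.

BYTES_PER_TRANSFER = 8  # one 64-bit DDR4 channel moves 8 bytes per transfer


def peak_bandwidth_gbs(sockets, channels_per_socket, mega_transfers_per_s):
    """Return theoretical peak bandwidth in GB/s (decimal gigabytes)."""
    total_channels = sockets * channels_per_socket
    return total_channels * mega_transfers_per_s * BYTES_PER_TRANSFER / 1000


xeon_channels = 2 * 6        # two sockets, six channels each
graviton2_channels = 1 * 8   # one socket, eight channels

# Relative channel-count advantage of the 2-socket Xeon system.
channel_advantage = xeon_channels / graviton2_channels - 1

# Assumed DRAM speeds (hypothetical inputs for this sketch).
xeon_peak = peak_bandwidth_gbs(2, 6, 2933)
graviton2_peak = peak_bandwidth_gbs(1, 8, 3200)

print(f"channel advantage: {channel_advantage:.0%}")
print(f"Xeon peak: {xeon_peak:.1f} GB/s, Graviton2 peak: {graviton2_peak:.1f} GB/s")
```

These are theoretical peaks only; achieved bandwidth depends on the memory controllers, the interconnect and NUMA placement, so the real gap can be smaller or larger than the channel ratio suggests.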

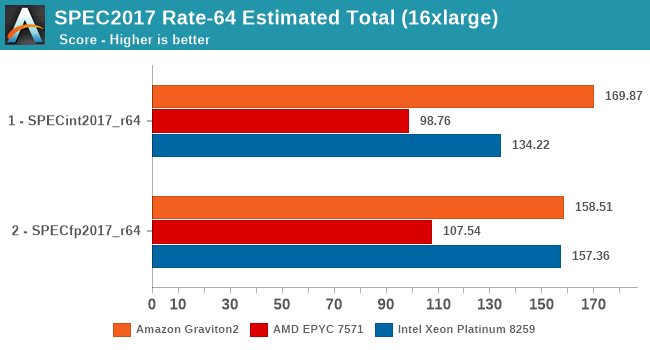

Overall, the Graviton2 system has an undisputed lead in the SPECint2017 suite, whilst just edging out the Xeon system on average in the FP suite, only losing out in situations where the Xeon’s higher memory bandwidth comes into play.
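For readers unfamiliar with how such suite-level averages come about: SPEC aggregates per-benchmark ratios with a geometric mean, and in rate runs each ratio scales with the number of concurrent copies. The sketch below illustrates that calculation under simplifying assumptions. The function names and all timing values are hypothetical and are not results from this review.

```python
import math


def rate_ratio(copies, reference_seconds, measured_seconds):
    """Per-benchmark rate ratio: concurrent copies times speed-up over the reference time."""
    return copies * reference_seconds / measured_seconds


def suite_score(ratios):
    """Aggregate per-benchmark ratios with a geometric mean."""
    return math.exp(sum(math.log(r) for r in ratios) / len(ratios))


# HYPOTHETICAL (reference seconds, measured seconds) pairs, for illustration only.
hypothetical_runs = [(1000.0, 400.0), (2000.0, 1000.0), (1500.0, 500.0)]

# A 64-rate run, matching the 64 vCPUs of the 16xlarge instances.
ratios = [rate_ratio(64, ref, run) for ref, run in hypothetical_runs]
score = suite_score(ratios)

print(f"per-benchmark ratios: {ratios}")
print(f"suite score (geometric mean): {score:.1f}")
```

Because the aggregate is a geometric mean, a single bandwidth-bound outlier moves the overall score less than it would with an arithmetic mean, which is why a system can lose individual workloads and still edge ahead on average.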

96 Comments


jbrower - Saturday, July 24, 2021 - link

Well at least you have a troll -- mark of success for authors, hehe

ProDigit - Wednesday, March 11, 2020 - link

110W is very pessimistic, and would make no sense at all, considering that the ryzen 9 3900x uses 105W at 12 cores 24 threads at 4.6Ghz and 7nm, and the 3950 does the same with 4 more cores.

Plus, regular arm based (AMLogic) boxes use 3Watt in total under load (that includes CPU+Ethernet+RAM+Emmc) for 4 CPU cores running at 1,9Ghz.

If you ask me, 64 core arm CPUs running at 2Ghz should run at around just over 1 watt per core, making it a 65W tdp chip

Andrei Frumusanu - Wednesday, March 11, 2020 - link

There's 64 PCIe4 lanes and 8 memory controllers in there as well.

cdome - Wednesday, March 11, 2020 - link

Quick question. Does Graviton2 have support for SVE2 vector extension? if yes how wide are execution units? thank you

Andrei Frumusanu - Wednesday, March 11, 2020 - link

No, there's 2x128b v8 ASIMD/NEON pipes.

Soulkeeper - Wednesday, March 11, 2020 - link

What was used to generate the images on page 2 ?

ie: https://images.anandtech.com/doci/15578/AMD-Epyc-6...

Is this app/source available to download ?

Thanks

sharath.naik - Wednesday, March 11, 2020 - link

Whats behind the name Annapurna? The name is Indian in origin but the company is Israeli.

nijimon - Thursday, March 12, 2020 - link

Judging by the logo it could be referring to the massif in the Himalayas.

https://en.wikipedia.org/wiki/Annapurna_Massif

Andy Chow - Thursday, March 12, 2020 - link

"I recently had the time to write a new custom microbenchmark for testing synchronisation latencies of CPU cores, exhibiting some of the cache-coherency as well as physical layouts of current designs."

Wow, and what a benchmark that turned out to be. Please consider packaging it and releasing it. Or giving us the code so we can run it. I would really love to run that test on a few of my machines. I am frustrated with current benchmarks on this area also, and you seem to have built the perfect solution.

ballsystemlord - Thursday, March 12, 2020 - link

1 Grammar error:

"Overall, it's a bit odd to see GCC ahead in that many workloads given that LLVM the is the primary compiler for billions of Arm devices in the mobile space."

Extra "the":

"Overall, it's a bit odd to see GCC ahead in that many workloads given that LLVM is the primary compiler for billions of Arm devices in the mobile space."