The AMD Ryzen 9 3950X Review: 16 Cores on 7nm with PCIe 4.0

by Dr. Ian Cutress on November 14, 2019 9:00 AM EST

CPU Performance: Rendering Tests

Rendering is often a key target for processor workloads, and it lends itself to professional environments. It comes in different forms, from 3D rendering through rasterization (as in games) to ray tracing, and it tests the software's ability to manage meshes, textures, collisions, aliasing, and physics (in animations), and to discard unnecessary work. Most renderers offer CPU code paths, while a few use GPUs, and select environments use FPGAs or dedicated ASICs. For big studios, however, CPUs are still the hardware of choice.

All of our benchmark results can also be found in our benchmark engine, Bench.

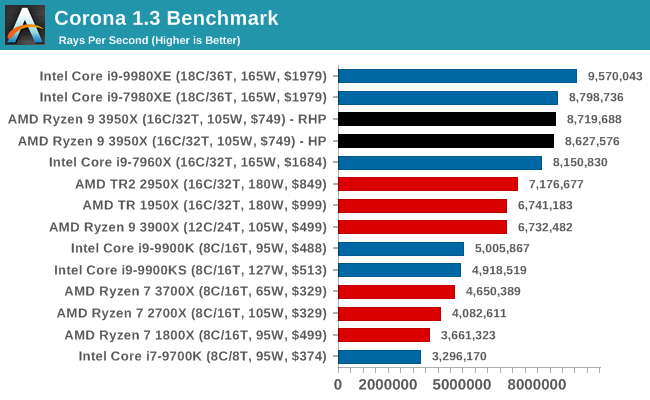

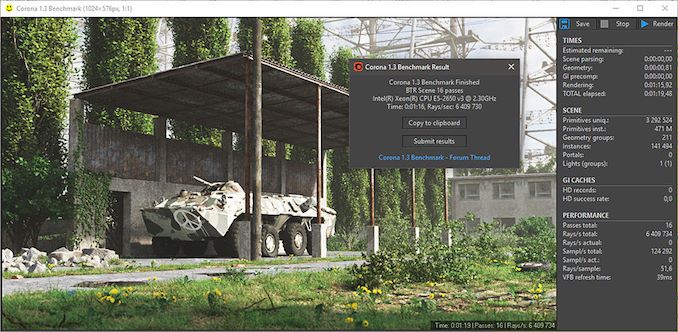

Corona 1.3: Performance Render

Corona is an advanced performance-based renderer for software such as 3ds Max and Cinema 4D. Its benchmark renders a standard generated scene under version 1.3 of the software. Normally the GUI implementation of the benchmark shows the scene being built and allows the user to upload the result as a ‘time to complete’.

We got in contact with the developer, who gave us a command-line version of the benchmark that outputs its results directly. Rather than reporting time, we report the average number of rays per second across six runs, as a result expressed per unit time scales in a way that is typically easier to read on a chart.
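As a rough illustration of turning timed runs into a throughput figure, the sketch below converts each run into rays per second and averages the six values. The function names and the sample numbers are hypothetical, and averaging the per-run rates is an assumption; the article does not spell out exactly how the average is formed.

```python
from statistics import mean
from typing import Iterable, Tuple


def rays_per_second(rays_cast: int, seconds: float) -> float:
    """Convert one run's ray count and elapsed time into a throughput value."""
    if seconds <= 0:
        raise ValueError("elapsed time must be positive")
    return rays_cast / seconds


def average_throughput(runs: Iterable[Tuple[int, float]]) -> float:
    """Average the per-run rays-per-second values (assumed method)."""
    return mean(rays_per_second(rays, secs) for rays, secs in runs)


# Hypothetical data, NOT results from this review:
# six runs, each given as (rays cast, seconds elapsed).
example_runs = [
    (480_000_000, 100.0),
    (480_000_000, 101.5),
    (480_000_000, 99.2),
    (480_000_000, 100.8),
    (480_000_000, 100.1),
    (480_000_000, 99.9),
]

print(f"average: {average_throughput(example_runs):,.0f} rays/s")
```

With a rate, a chip that is twice as fast shows a bar twice as long, whereas with a completion time the faster chip gets the shorter bar.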

The Corona benchmark website can be found at https://corona-renderer.com/benchmark

Intel's HEDT chips are quite good at Corona, but if we compare the 3900X to the 3950X, we still see some good scaling.
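One rough way to put a number on that scaling is to compare the measured speedup against the increase in core count. The sketch below uses the 12 cores of the 3900X and the 16 cores of the 3950X; the scores passed in are placeholders rather than results from this review, the function name is hypothetical, and the calculation ignores differences in clock speed and power limits.

```python
def scaling_efficiency(score_small: float, cores_small: int,
                       score_large: float, cores_large: int) -> float:
    """Return measured speedup divided by the ideal (core-count) speedup.

    1.0 means throughput grew exactly in line with core count;
    values below 1.0 mean the extra cores were only partly converted
    into extra throughput.
    """
    measured_speedup = score_large / score_small
    ideal_speedup = cores_large / cores_small
    return measured_speedup / ideal_speedup


# Placeholder scores in rays per second (hypothetical, for illustration only).
score_12_core = 6_000_000.0
score_16_core = 7_600_000.0

efficiency = scaling_efficiency(score_12_core, 12, score_16_core, 16)
print(f"scaling efficiency: {efficiency:.2f}")
```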

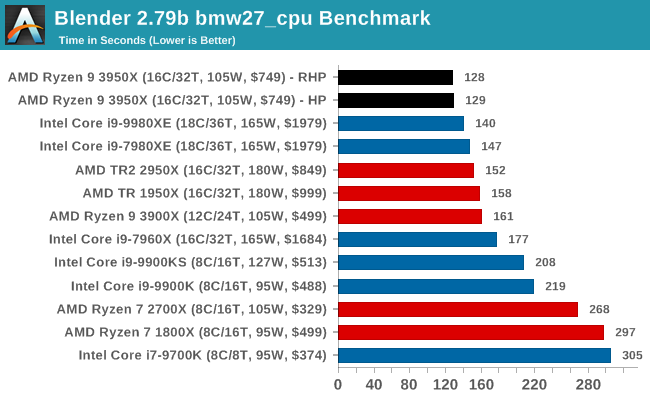

Blender 2.79b: 3D Creation Suite

A high-profile rendering tool, Blender is open source, which allows for massive amounts of configurability, and it is used by a number of high-profile animation studios worldwide. The organization recently released a Blender benchmark package, a couple of weeks after we had narrowed down the Blender test for our new suite; however, their test can take over an hour. For our results, we run one of the sub-tests in that suite through the command line, a standard ‘bmw27’ scene in CPU-only mode, and measure the time to complete the render.
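A command-line render of this kind can be timed with a small wrapper. The following is a minimal sketch and not the harness used for this review: `time_command` is a hypothetical helper, and the Blender invocation assumes that `blender` is on the PATH and that `bmw27_cpu.blend` is a local copy of the scene already configured for CPU rendering.

```python
import subprocess
import time
from typing import List


def time_command(cmd: List[str]) -> float:
    """Run a command to completion and return the wall-clock time in seconds."""
    start = time.perf_counter()
    # check=True raises CalledProcessError if the command exits with an error,
    # so a failed render is never recorded as a (misleadingly fast) result.
    subprocess.run(cmd, check=True,
                   stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)
    return time.perf_counter() - start


# Assumed invocation: -b runs Blender without its GUI, -f 1 renders frame 1.
# The file name is a placeholder for wherever the scene is stored.
BLENDER_CMD = ["blender", "-b", "bmw27_cpu.blend", "-f", "1"]

# Example use (commented out so the sketch runs without Blender installed):
# elapsed = time_command(BLENDER_CMD)
# print(f"bmw27 render: {elapsed:.1f} s")
```

Measuring wall-clock time around the whole process includes scene loading as well as rendering, which matches a "time to complete" style of result.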

Blender can be downloaded at https://www.blender.org/download/

AMD is taking the lead in our Blender test, with the 16-core chips easily outpacing Intel's latest 18-core hardware.

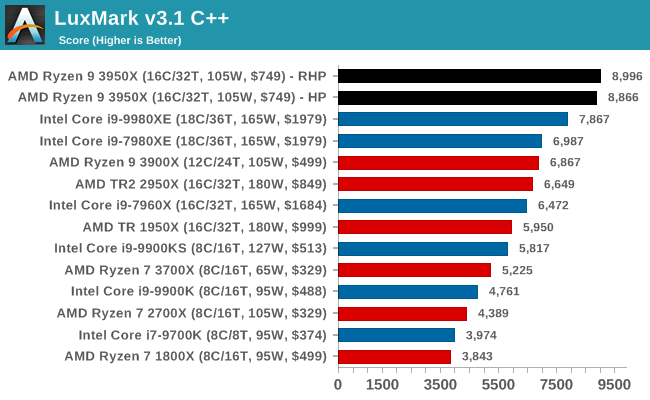

LuxMark v3.1: LuxRender via Different Code Paths

As stated at the top, there are many different ways to process rendering data: CPU, GPU, Accelerator, and others. On top of that, there are many frameworks and APIs in which to program, depending on how the software will be used. LuxMark, a benchmark developed using the LuxRender engine, offers several different scenes and APIs.

In our test, we run the simple ‘Ball’ scene on the C++ code path, in CPU mode. This scene starts with a rough render and slowly improves the quality over two minutes, giving a final result in what is essentially an average ‘kilorays per second’.

Even though this test uses Intel's Embree engine, AMD's 16-core chips again easily win out against Intel's 18-core chips, at under half the cost.

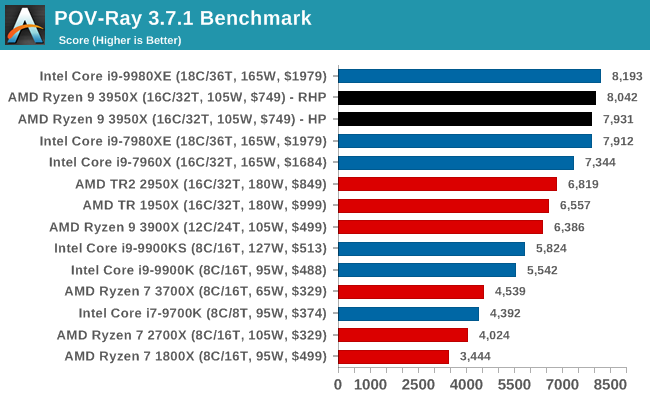

POV-Ray 3.7.1: Ray Tracing

The Persistence of Vision ray tracing engine is another well-known benchmarking tool. It was in a state of relative hibernation until AMD released its Zen processors, at which point both Intel and AMD suddenly began submitting code to the main branch of the open-source project. For our test, we use the built-in all-cores benchmark, called from the command line.

POV-Ray can be downloaded from http://www.povray.org/

POV-Ray ends up with AMD's 16-core part splitting the two Intel 18-core parts, which means we're likely to see the Intel Core i9-10980XE at the top here. It would have been interesting to see where an Intel 16-core Core-X on Cascade would end up for a direct comparison, but Intel has no new 16-core chip planned.

206 Comments

nathanddrews - Friday, November 15, 2019

That's typically the main problem: minimum frames. Across most benchmarks, Intel can maintain significantly higher minimum frame rates for 144Hz and up. Obviously these metrics are going to be very game-dependent and settings-dependent, but the data are very clear: Intel's higher frequencies give it a significant advantage for minimum frames.

treichst - Thursday, November 14, 2019

165Hz monitors have existed for a while now. 240Hz monitors exist from a number of vendors.

RedGreenBlue - Thursday, November 14, 2019

The reason this is okay is the same reason a lot, or most, car engines advertise a.b liter engines when in reality it’s about a.(b - 0.05). It’s a generally accepted point of court precedents, or maybe in the laws themselves, that you can’t sue for rounding, unless it’s an unreasonable difference from the reality. So if you want to sue AMD, expect to be laughed out of court.

shaolin81 - Friday, November 15, 2019

The problem most probably lies in using the High performance profile. When I use it with my 2700X, idle cores are nearly never parked and therefore there's no room for the CPU to reach Max Turbo on a single core. When I change it to the Balanced profile, most idle cores are parked and I get beyond Max Turbo easily, up to 4450MHz. Anandtech should try this.

prophet001 - Friday, November 15, 2019

I think that's me too nathanddrews. I mostly play WoW and though I haven't seen any actual benchmarks, I'm pretty sure that the 9900KS will outperform the Ryzens. WoW just scales with clock speed past like 4 cores.

SRB181 - Friday, November 15, 2019

I've often wondered what people are looking for when CPUs get to this point. I'm absolutely positive the 9900KS will outperform my current CPU (1950X), but I'm getting 78 fps at 4k60 in WoW set to ultra (10) in the graphics. Not being a jerk, but what exactly do you notice in gameplay with the 9900KS that I would notice/see?

prophet001 - Monday, November 18, 2019

I don't have a 9900 I've just seen how WoW behaves with various processors and my own personal experience. I have an old extreme processor x299 chipset with a 1080 GTX. It's highly CPU bound. Even though the processor is 4 core hyper threaded, it won't get past like 45 FPS in Boralus. I know it's not GPU bound because I look at the GPU load in GPU-z and it's like 40% load. I can turn render scale up or down and doesn't really make a difference on my computer.

That, along with other people's research, leads me to believe that WoW is highly CPU bound but more specifically, core clock speed bound. People that get 5GHz get much more out of the game than people that leave their CPUs at stock clocks.

Qasar - Monday, November 18, 2019

prophet001 there must be something else going on with your system, as i type this i am sitting in Boralus harbor, right above where the ship from SW docks looking out towards the mountain, with the ship from SW coming into dock on the left. i am getting a minimum of 65 FPS as i spin in the spot. im running a Asus Strix, 1060 gaming, with a i7 5930K @ 4.2 GHz. @ 1080P in other zones, i have seen as high as the 180s.... CPU and GPU utilization is 30-40% and a solid 25% respectively.

maybe there is something else with your system that is causing this ?

Qasar - Monday, November 18, 2019

should also mention, thats with pretty much max on the graphics options.

WaltC - Friday, November 15, 2019

Wrong answer...;) AMD has only ever said "Max single core boost", emphasis on the word "max," which evidently must be translated for the benefit of people mindlessly trying to pick it apart because the meaning of "max" ever eludes them!...;) Really, I've seen all kinds of stupid come out on this one.

AMD does not say, "guaranteed single core boost of 4.7GHz" because it's not guaranteed at all--it is the "max" single-core boost obtainable--not the "only" single-core boost the CPU is capable of! Uh--I mean, I'm embarrassed I actually have to explain this, but *any single-core boost clock above the base clock of the CPU* is a *genuine boost of the core* and "max" of course means only the very maximum single core boost clock obtainable at any given time, depending on all of the attendant conditions! So, take a situation in which all the cores boost to 4.5GHz, 4.6GHz or 4.3GHz--every single one of them is providing the advertised single-core boost! And yes, people are indeed seeing 4.7GHz *maximums*--but not all of the time, of course, since "max" doesn't mean "all of the time every time," does it?

In their zeal to defend an otherwise indefensible Intel--people have completely butchered the "max" single core boost concept (well, Intel people have butchered it, I should say..;)). Gee--if the only boost these CPUs ever did was 4.7GHz, then none of them would be "max," would they--they'd be the *normal clock* and the damn CPU would be running at 4.7GHz continuously on all cores!...;) I mean, is it possible for people to wax any more stupid on this subject than this? "Max" absolutely does not mean "all the time every time"--else "max" would have no meaning at all.

Jeez--the stupid is strong @ Intel these days...;) Also, it's interesting to note that with fewer cores and a slower clock AMD processes data *faster* than Intel even though the Intel CPU has more cores and a higher clock--so please, the confusion over "max single core boost clocks" from AMD is just plain dumb, imo. It's plain enough--always has been. Multicore CPUs do not exist merely to see to what GHz a *single core* might reach @ maximum! Jeez--we graduated from single-thread thinking long years ago...;) (Or, rather, some of us did.)