The Intel Core i9-9990XE Review: All 14 Cores at 5.0 GHz

by Dr. Ian Cutress on October 28, 2019 10:00 AM EST

CPU Performance: Web and Legacy Tests

While more the focus of low-end and small form factor systems, web-based benchmarks are notoriously difficult to standardize. Modern web browsers are frequently updated, with no recourse to disable those updates, and as such there is difficulty in keeping a common platform. The fast-paced nature of browser development means that version numbers (and performance) can change from week to week. Despite this, web tests are often a good measure of user experience: much of today’s office work revolves around web applications, particularly email and office apps, but also interfaces and development environments. Our web tests include some of the industry standard tests, as well as a few popular but older tests.

We have also included our legacy benchmarks in this section, representing a stack of older code for popular benchmarks.

All of our benchmark results can also be found in our benchmark engine, Bench.

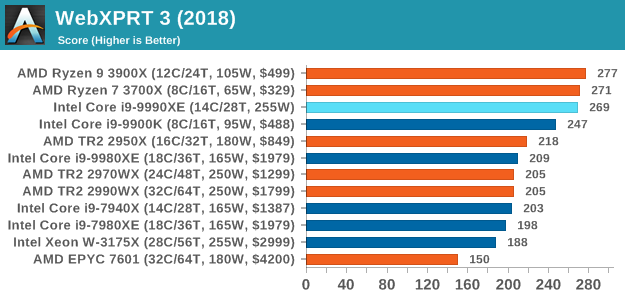

WebXPRT 3: Modern Real-World Web Tasks, including AI

The company behind the XPRT test suites, Principled Technologies, has recently released its latest web test, and rather than attach a year to the name has simply called it ‘3’. This latest test (as we started the suite) builds upon and develops the ethos of previous tests: user interaction, office compute, graph generation, list sorting, HTML5, image manipulation, and even some AI testing.

For our benchmark, we run the standard test which goes through the benchmark list seven times and provides a final result. We run this standard test four times, and take an average.
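
The averaging step described above can be sketched in a few lines of Python. This is only an illustration of taking the arithmetic mean of four final scores; the function name `average_score` and the sample numbers are hypothetical and are not measured results from this review.

```python
from statistics import mean


def average_score(final_scores):
    """Return the arithmetic mean of the final scores from repeated runs."""
    if not final_scores:
        raise ValueError("need at least one completed run")
    return mean(final_scores)


# Hypothetical final scores from four complete runs (placeholder values,
# not data from the review).
runs = [250.0, 254.0, 248.0, 252.0]
reported = average_score(runs)
print(f"Reported score: {reported:.1f}")
```

The same approach applies to any of the tests in this section that are run four times and averaged.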

Users can access the WebXPRT test at http://principledtechnologies.com/benchmarkxprt/webxprt/

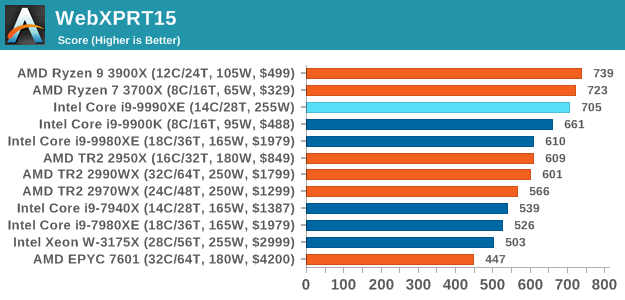

WebXPRT 2015: HTML5 and Javascript Web UX Testing

The older version of WebXPRT is the 2015 edition, which focuses on a slightly different set of web technologies and frameworks that are in use today. This is still a relevant test, especially for users interacting with not-the-latest web applications in the market, of which there are a lot. Web framework development is often very quick but with high turnover: frameworks are quickly developed, built upon, and used, and then developers move on to the next. Adjusting an application to a new framework is a difficult, arduous task, especially with rapid development cycles. This leaves a lot of applications as ‘fixed-in-time’, and relevant to user experience for many years.

Similar to WebXPRT 3, the main benchmark is a sectional run repeated seven times, with a final score. We repeat the whole thing four times, and average those final scores.

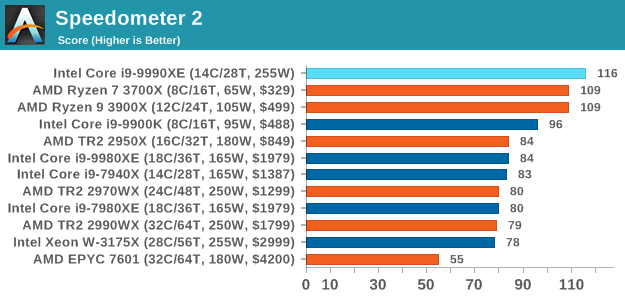

Speedometer 2: JavaScript Frameworks

Our newest web test is Speedometer 2, which is an accrued test over a series of JavaScript frameworks to do three simple things: build a list, enable each item in the list, and remove the list. All the frameworks implement the same visual cues, but obviously apply them from different coding angles.

Our test goes through the list of frameworks, and produces a final score indicative of ‘rpm’, one of the benchmark’s internal metrics. We report this final score.

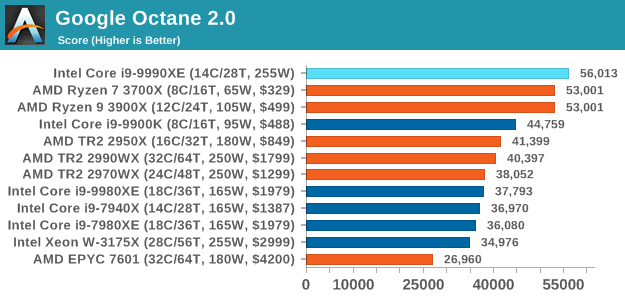

Google Octane 2.0: Core Web Compute

A popular web test for several years, but now no longer being updated, is Octane, developed by Google. Version 2.0 of the test performs the best part of two dozen compute-related tasks, such as regular expressions, cryptography, ray tracing, emulation, and Navier-Stokes physics calculations.

The test gives each sub-test a score and produces a geometric mean of the set as a final result. We run the full benchmark four times, and average the final results.
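
As a rough sketch of that scoring arithmetic, the Python below computes a geometric mean over a set of sub-test scores and then averages the per-run results. It is not Octane's own code; the function names and the sample scores are assumptions made for illustration only.

```python
import math


def geometric_mean(scores):
    """Geometric mean of positive sub-test scores, computed via logarithms."""
    if not scores or any(s <= 0 for s in scores):
        raise ValueError("scores must be a non-empty list of positive numbers")
    return math.exp(sum(math.log(s) for s in scores) / len(scores))


def reported_result(runs):
    """Average the per-run geometric means across repeated full runs."""
    finals = [geometric_mean(sub_scores) for sub_scores in runs]
    return sum(finals) / len(finals)


# Hypothetical sub-test scores for two runs (placeholder values only).
example_runs = [
    [2.0, 8.0],  # geometric mean is 4.0
    [4.0, 4.0],  # geometric mean is 4.0
]
print(f"Reported result: {reported_result(example_runs):.2f}")
```

A geometric mean keeps a single very high sub-test score from dominating the final result the way it would in an arithmetic mean, which suits a suite whose sub-tests produce scores on different scales.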

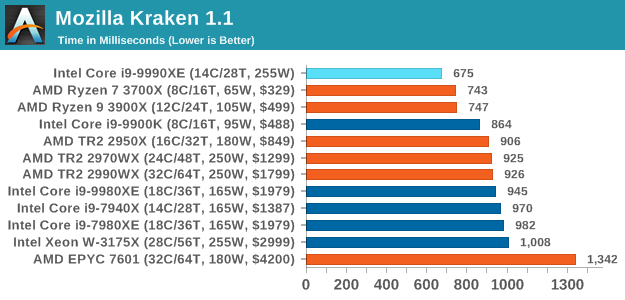

Mozilla Kraken 1.1: Core Web Compute

Even older than Octane is Kraken, this time developed by Mozilla. This is an older test that does similar computational mechanics, such as audio processing or image filtering. Kraken seems to produce a highly variable result depending on the browser version, as it is a test that browsers are keenly optimized for.

The main benchmark runs through each of the sub-tests ten times and produces an average time to completion for each loop, given in milliseconds. We run the full benchmark four times and take an average of the time taken.

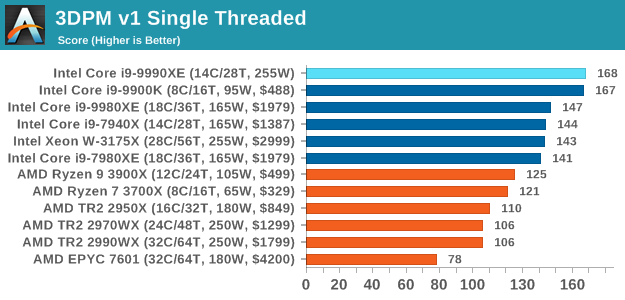

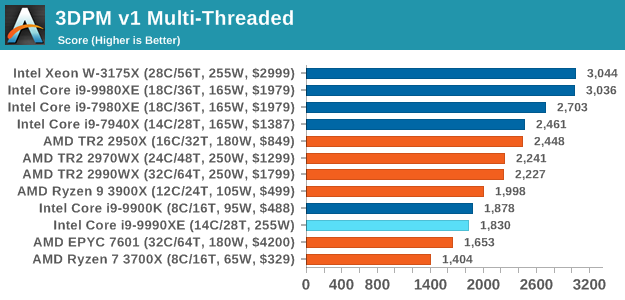

3DPM v1: Naïve Code Variant of 3DPM v2.1

The first legacy test in the suite is the first version of our 3DPM benchmark. This is the ultimate naïve version of the code, as if it was written by a scientist with no knowledge of how computer hardware, compilers, or optimization works (which in fact, it was at the start). This represents a large body of scientific simulation out in the wild, where getting the answer is more important than it being fast (getting a result in 4 days is acceptable if it’s correct, rather than sending someone away for a year to learn to code and getting the result in 5 minutes).

In this version, the only real optimization was in the compiler flags (-O2, -fp:fast), compiling it in release mode, and enabling OpenMP in the main compute loops. The loops were not configured for function size, and one of the key slowdowns is false sharing in the cache. It also has long dependency chains based on the random number generation, which leads to relatively poor performance on specific compute microarchitectures.

3DPM v1 can be downloaded with our 3DPM v2 code here: 3DPMv2.1.rar (13.0 MB)

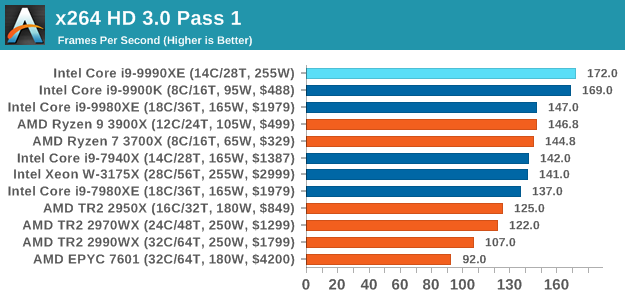

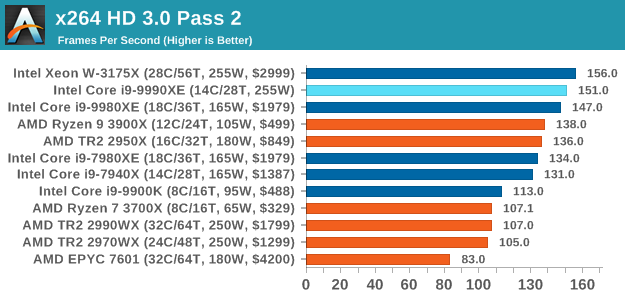

x264 HD 3.0: Older Transcode Test

This transcoding test is super old, and was used by Anand back in the day of Pentium 4 and Athlon II processors. Here a standardized 720p video is transcoded with a two-pass conversion, with the benchmark showing the frames-per-second of each pass. This benchmark is single-threaded, and between some micro-architectures we seem to actually hit an instructions-per-clock wall.

145 Comments

xrror - Monday, October 28, 2019 - link

Hey Ian, please again thank ICC for letting you guys have a full run though with one of these systems.

While yes, this will never be a practical option for the vast majority of people, it IS one VERY AWESOME datapoint(s) for benchmark purposes. No more hypothetical "but what if 5Ghz Skylake" no - you have actual numbers, it shows the scaling for Intel's current'ish gen out to the extreme end.

I hope you are able to run more on this box to fill out the numbers in Bench - (which you may have already, I haven't actually looked yet).

Again thanks to ICC and Anandtech for this.

MrAndroidRobot - Monday, October 28, 2019 - link

If like to see how the 3900X fairs in comparison given its 12C/24T and holds up well against current TR/HEDT CPUs

krumme - Tuesday, October 29, 2019 - link

I cant really tell how this is different from my 8700k from a performance perspective.

Looking at the article i think this is cheap marketing. Good move. But anyways it's crazy 14c cpu is now touted for their single thread performance. Seriously one have to wonder the meaning of all this. What am i doing here? Lol

TheJian - Tuesday, October 29, 2019 - link

Please test SLOWER/VERY SLOW not FAST/FASTER for encoding. I would not STORE anything ripped at FAST/FASTER...LOL. Who rips at this crap quality level? Besides, with 14c why wouldn't you want top quality (or fairly close)? It's not much more time and might yield completely different results. Never understood why people keep running tests that are NOT how we would USE the tested device/game etc. Test it like we USE it or quit wasting your (our?) time.

Raise your hand if you're ripping your blurays with fast/faster settings...Nobody. You can rip with SLOWER faster than you can create the content on these chips today, so why ruin your vid? L4.1 HIGH, VERY SLOW. Done (and I do 2pass, control other settings too, but you get the point). Nobody is archiving anything with your settings right? Emulate the pirates (seriously, one NFO file can tell you a LOT about these settings) :) They would NUKE your rip. Mediainfo can tell you all the settings also if you don't know where to get an nfo file from the people who've been ripping since the net started...LOL. Just saying...It's like claiming 1440p is the new enthusiast resolution (Ryan did this in his 660ti article...ROFL - see the comment section where I destroyed that crap), which isn't even true TODAY...LOL. YEARS later. Wake me when 1440p hits 10%. Right now it takes 1440p+4k to hit ~6.5% total...ROFL. 1440p is STILL not even 5% yet (4.98...ROFL). 1080p however 65%! Hmm, where should we spend MOST of our time testing then? Ah, UNDER 1440p with 4k being a complete joke still at 1.6%.

https://store.steampowered.com/hwsurvey/Steam-Hard...

Wrong still for 7yrs Ryan Smith, AND counting. Is 10% even good enough to call it the new enthusiast resolution? Maybe Ryan will be right 2020. I digress...Don't even get me started on the complete BS that 4k testing is (1.6% of 130mil steam users say 4k is still dead). Apparently people don't like turning crap down (that devs meant for us to SEE) as much as Ryan etc think. :)

GreenReaper - Tuesday, October 29, 2019 - link

It's true that relatively few systems have a high-resolution screen. In fact I'd go further and say that for general usage of current systems, the combination of 4K+1440p is closer to 3% (with 1440p being ~2.5% of that). That's what I see on my media hosting website.

However, enthusiasts *are* the 3%. Or at least a lot of it. Most people use all-in-ones, work laptops, or school netbooks. They may install Steam on them and game on them, because they have to - they probably didn't buy new hardware specifically to do so. Reviews are all about new hardware.

If they *did* want to buy a new piece of video hardware, they may want to know how it'd perform if also buying a 1440p monitor and plug it in, perhaps once prices come down a bit. Or even 4K!

It's also a better way to measure GPU power than running them in a CPU-limited zone (after all, your GPU may end be paired with a future CPU by the time you buy it). The higher-end cards that tend to be reviewed are also intended to potentially last multiple CPU cycles - in reality I suspect most buy something further down the scale and just use it with one CPU, but it's an option.

Your point is fairer with IGP, but that's what IGP level is for. Most serious gamers are not using IGP. And this review doesn't *have* any GPU tests, though, so your comment may be better saved for one that does; it came off as ranting a little too hard about Ryan. :-)

TheJian - Wednesday, November 6, 2019 - link

Of course reviews are about new hardware...But the point is about HOW you test them. Are you acting like I'll MAYBE, if the wind blows right, stars align, etc, in 5yrs, or are you testing for what we will do with it for the next few years NOW? You know, like what I actually BOUGHT it for, NOW. I'm an enthusiast (know pretty much only them, since only deal with IT people pretty much), and have nothing like what they are pushing (no 4k desire for anyone I know, most not on 1440p). It isn't because I can't afford 4k, just don't care (for many reasons currently, lacking gpus for one, you need TWO still). I can afford those two titans every month too, but what for? They'd fry me in my PC room after 30mins of gaming due to heat in my state. So I'm stuck waiting for a 7nm NV card that takes AMD's 7nm a step further in watts heat (or I'll just downclock their no doubt better 7nm version since they waited) so I can play my next monitor (hopefully xmas this year or next) at max details, and of course my current 1920x1200 will be maxed finally by it until I finally see a monitor I want (c'mon dell 30+ with gsync). I'll pay $1200, just make it!

I see nothing wrong with "ranting" (not how I see it, but whatever) if you're still right and it is relevant to 95% of users who are STILL not using stuff like they seem to think we do (and you keep testing stuff WRONG over and over). The point is a pattern of reviewing products in ways we don't actually use them. If 95% of users were running 4k monitors, it would be just as stupid to test 720p all day in every review right? Unless you're trying to prove a specific point by doing said test, there is no reason to wash rinse and repeat this. Your review should cover your audience NOW, with a mention of the future maybe as an afterthought (like RTX on day one, hmm, hope they use it). RTX didn't fly off the shelves until more about the features came out. Most people don't care about the future of their tech, they are buying for today's perf or features they need.

No, The same people buy new titans yearly (Multiple Titans in many cases, 4 at a time, 2080ti's also) according to Jen Hsun himself. The bulk of top sales go to the same rich who can afford them yearly easily. Heck I can afford them too (easy with no Visa bills, no car (cash), no cell, no cabletv (just HSI), just don't care to act rich for not much more perf :)

More than 3% buy enthusiast cards. Heck 3% of sales is likely Titans alone, and that card alone is not what I call enthusiasts (Ryan thought it was 660ti back then, it was NOT the top card, probably correct too, but it wasn't built for 1440p he was pushing). Anything over $250, you're probably more than a casual gamer.

NO serious gamer is using igp...LOL. Do you know serious gamers who play 720p with details down? I don't say NEVER test 4k or 1440p, I say there is no need to spend 2/3 of each review of gpus on this crap (you can read many posts of mine in reviews like this). TEST more of what we PLAY at NOW, RIP at quality levels most would want to watch, etc. When the future comes, I'll be on other hardware (probably most enthusiasts huh?)...ROFL. Test a few games a year in a 4k review, no need to do it repeatedly as if it is used by more than a few %. People are FAR more interested in how it works NOW as I use it, than "futureproof" junk I may never use if nobody supports it ever. I'm not against testing a 4k game per review, but not a 4k test for every game in said review. Same for ripping, I humbly ask who watches this crap quality? Why are all the ripping tests in crap qual? They turn off stuff users specifically BUY NV cards for. You know, like acting CUDA wouldn't be used by a NV buyer if they had a choice. Nobody buys NV to do OpenCL...ROFLMAO. You buy for CUDA if you can for your app. I could go on, but you should get the point: TEST IT LIKE WE USE IT, no matter what you're testing today, tomorrow, etc.

My point is fair for ALL single gpu cards, as there isn't one yet that can do 4K on ALL games without turning tons of crap down on a per game basis. Pricing isn't bad, so this is clearly a big deal to people. No point in buying something new, only to degrade it's perf out of the box just to get enough fps to enjoy your game (not as the dev intended you to see it at this point either). But, then, I don't enjoy that game at this point. I need the details ON.

IF, if, but, maybe...blah...How about spending MOST of your time testing what we actually DO with whatever you are reviewing, instead of wasting time on what YOU WISH we used this stuff for. This is why Anandtech is my last resort these days and tomshardware even less used (same site really now).

mode_13h - Tuesday, October 29, 2019 - link

Isn't the myth of high-frequency traders using tuned CPUs a bit overblown? I'm not saying it doesn't happen, but would they really even go so far as to forego ECC memory?

MrSpadge - Tuesday, October 29, 2019 - link

I guess you have to be fastest to earn serious money. And there's no "fast" ECC RAM in terms of desktop OC. If with ECC you can get a guaranteed answer too late, it's not going to matter. Better risk the seldom error without ECC - it's probably going to be fine...

Bp_968 - Tuesday, October 29, 2019 - link

So I'm a true blood capitalist but I just don't see the utility or reason for existence for "high speed trading". They are making money on the difference between prices at the millisecond level. It offers nothing back to society and seems to exist only due to how stock and commodity trading works.

Stocks should exist for public ownership of companies, to provide funding for those companies and hopefully for the stockholders to benefit from the growth and profit of said companies.

It shouldn't exsist as a glorified casino game, which is essentially what "high speed trading" is.

crotach - Tuesday, October 29, 2019 - link

So it's good at compiling stuff?