The Intel Core i9-9990XE Review: All 14 Cores at 5.0 GHz

by Dr. Ian Cutress on October 28, 2019 10:00 AM EST

CPU Performance: Web and Legacy Tests

While more the focus of low-end and small form factor systems, web-based benchmarks are notoriously difficult to standardize. Modern web browsers are frequently updated, with no recourse to disable those updates, and as such it is difficult to keep a common platform. The fast-paced nature of browser development means that version numbers (and performance) can change from week to week. Despite this, web tests are often a good measure of user experience: much of today's office work revolves around web applications, particularly email and office apps, but also interfaces and development environments. Our web tests include some of the industry standard tests, as well as a few popular but older tests.

We have also included our legacy benchmarks in this section, representing a stack of older code for popular benchmarks.

All of our benchmark results can also be found in our benchmark engine, Bench.

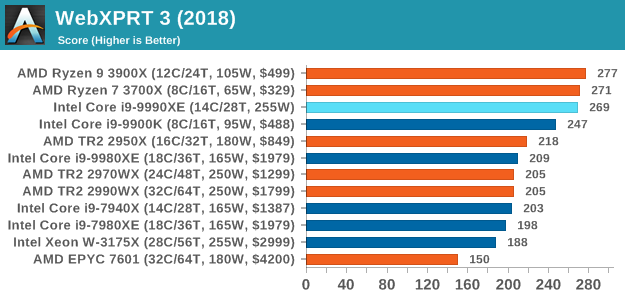

WebXPRT 3: Modern Real-World Web Tasks, including AI

The company behind the XPRT test suites, Principled Technologies, has recently released its latest web test, and rather than attach a year to the name has just called it ‘3’. This test (the latest as of when we started the suite) builds upon and develops the ethos of previous tests: user interaction, office compute, graph generation, list sorting, HTML5, image manipulation, and even some AI testing.

For our benchmark, we run the standard test which goes through the benchmark list seven times and provides a final result. We run this standard test four times, and take an average.
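The run-and-average step described above can be sketched as follows. This is a minimal illustration, assuming each complete run yields one final score; the function name and the sample scores are hypothetical and are not taken from our results.

```python
from statistics import mean


def average_final_score(run_scores):
    """Return the arithmetic mean of the final scores from repeated runs."""
    if not run_scores:
        raise ValueError("need at least one run")
    return mean(run_scores)


# Hypothetical final scores from four complete runs (illustrative only).
example_runs = [250.0, 252.0, 249.0, 253.0]
reported = average_final_score(example_runs)
```

Averaging the final scores, rather than pooling every internal iteration, keeps the reported number on the same scale as what the benchmark itself prints.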

Users can access the WebXPRT test at http://principledtechnologies.com/benchmarkxprt/webxprt/

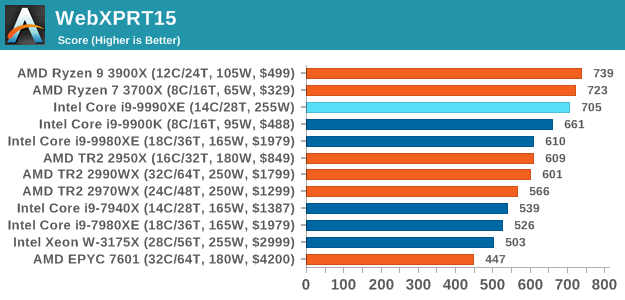

WebXPRT 2015: HTML5 and Javascript Web UX Testing

The older version of WebXPRT is the 2015 edition, which focuses on a slightly different set of web technologies and frameworks that are in use today. This is still a relevant test, especially for users interacting with not-the-latest web applications in the market, of which there are a lot. Web framework development is often very quick but with high turnover: frameworks are quickly developed, built upon, and used, and then developers move on to the next. Adjusting an application to a new framework is a difficult, arduous task, especially with rapid development cycles. This leaves a lot of applications as ‘fixed-in-time’, and relevant to user experience for many years.

Similar to WebXPRT 3, the main benchmark is a sectional run repeated seven times, with a final score. We repeat the whole thing four times, and average those final scores.

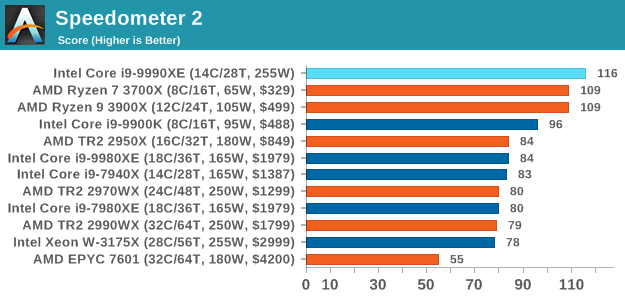

Speedometer 2: JavaScript Frameworks

Our newest web test is Speedometer 2, which is an accrued test over a series of JavaScript frameworks to do three simple things: build a list, enable each item in the list, and remove the list. All the frameworks implement the same visual cues, but obviously apply them from different coding angles.

Our test goes through the list of frameworks, and produces a final score indicative of ‘rpm’, one of the benchmark's internal metrics. We report this final score.

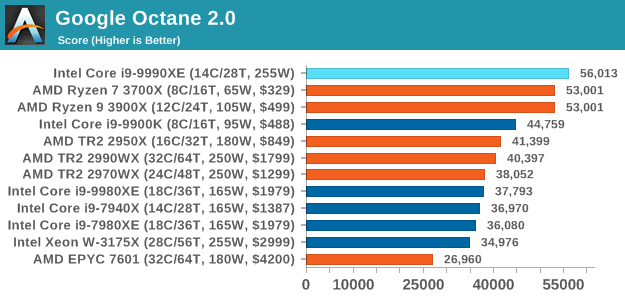

Google Octane 2.0: Core Web Compute

A popular web test for several years, but now no longer being updated, is Octane, developed by Google. Version 2.0 of the test performs the best part of two dozen compute-related tasks, such as regular expressions, cryptography, ray tracing, emulation, and Navier-Stokes physics calculations.

The test gives each sub-test a score and produces a geometric mean of the set as a final result. We run the full benchmark four times, and average the final results.
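To make the scoring concrete, here is a minimal sketch of a geometric mean over sub-test scores, followed by the average across repeated full runs. The function names and the input values in the usage note are hypothetical; Octane's own implementation may differ in detail.

```python
import math


def geometric_mean(scores):
    """Geometric mean computed as exp(mean(log(score)))."""
    if not scores or any(s <= 0 for s in scores):
        raise ValueError("scores must be non-empty and positive")
    return math.exp(sum(math.log(s) for s in scores) / len(scores))


def reported_result(runs):
    """Average the per-run geometric means across repeated full runs."""
    finals = [geometric_mean(sub_scores) for sub_scores in runs]
    return sum(finals) / len(finals)
```

For example, `geometric_mean([4.0, 9.0])` is 6 where the arithmetic mean would be 6.5. A geometric mean is the usual choice when sub-tests report on very different scales, because a single large sub-score cannot dominate the total.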

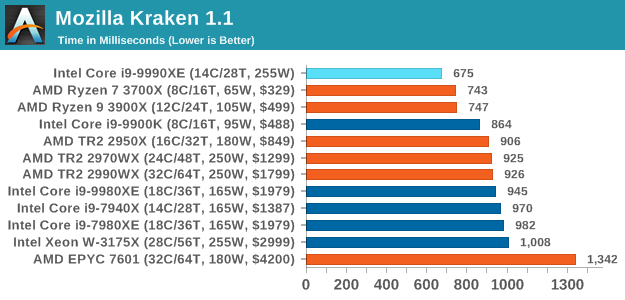

Mozilla Kraken 1.1: Core Web Compute

Even older than Octane is Kraken, this time developed by Mozilla. This is an older test that does similar computational mechanics, such as audio processing or image filtering. Kraken seems to produce a highly variable result depending on the browser version, as it is a test that is keenly optimized for.

The main benchmark runs through each of the sub-tests ten times and produces an average time to completion for each loop, given in milliseconds. We run the full benchmark four times and take an average of the time taken.

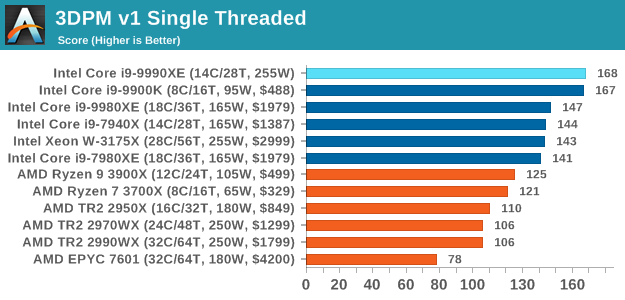

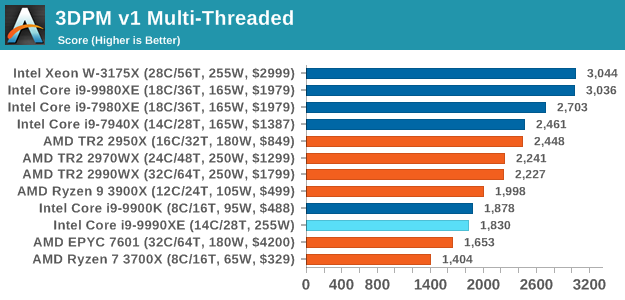

3DPM v1: Naïve Code Variant of 3DPM v2.1

The first legacy test in the suite is the first version of our 3DPM benchmark. This is the ultimate naïve version of the code, as if it was written by a scientist with no knowledge of how computer hardware, compilers, or optimization works (which in fact, it was at the start). This represents a large body of scientific simulation out in the wild, where getting the answer is more important than it being fast (getting a result in 4 days is acceptable if it’s correct, rather than sending someone away for a year to learn to code and getting the result in 5 minutes).

In this version, the only real optimization was in the compiler flags (-O2, -fp:fast), compiling it in release mode, and enabling OpenMP in the main compute loops. The loops were not configured for function size, and one of the key slowdowns is false sharing in the cache. It also has long dependency chains based on the random number generation, which leads to relatively poor performance on specific compute microarchitectures.
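As a rough illustration of what a dependency chain in random number generation looks like, the sketch below uses a simple linear congruential generator. This is not the 3DPM v1 code: the generator, its constants (the widely published Numerical Recipes values), and all function names are assumptions made purely for illustration.

```python
# Hypothetical LCG constants (Numerical Recipes values); the RNG actually
# used in 3DPM v1 is not stated in this article.
A, C, M = 1664525, 1013904223, 2**32


def lcg_next(state):
    """Advance the generator by one step."""
    return (A * state + C) % M


def chained_steps(seed, n):
    """Each value needs the previous state, so the n updates form one serial chain."""
    state, out = seed, []
    for _ in range(n):
        state = lcg_next(state)
        out.append(state / M)  # scale to [0, 1)
    return out


def independent_streams(seeds, n):
    """One private state per stream: each chain stays serial, but no stream waits on another."""
    return [chained_steps(seed, n) for seed in seeds]
```

In `chained_steps`, no iteration can begin until the previous one has produced its state, and on real hardware that is the kind of chain that limits how much work a core can overlap. Giving each particle or thread its own state, as in `independent_streams`, is one common way to shorten the critical path.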

3DPM v1 can be downloaded with our 3DPM v2 code here: 3DPMv2.1.rar (13.0 MB)

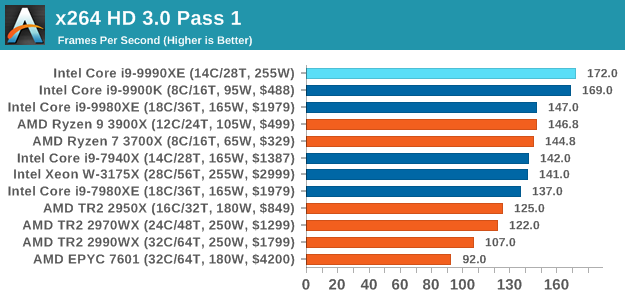

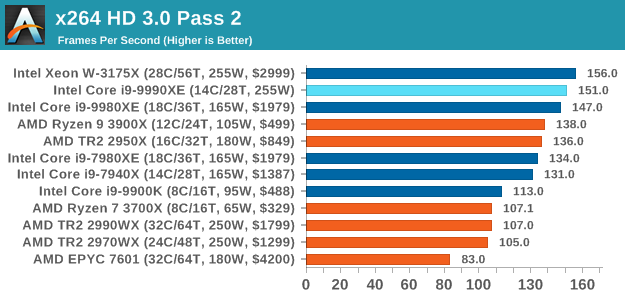

x264 HD 3.0: Older Transcode Test

This transcoding test is super old, and was used by Anand back in the day of Pentium 4 and Athlon II processors. Here a standardized 720p video is transcoded with a two-pass conversion, with the benchmark showing the frames-per-second of each pass. This benchmark is single-threaded, and between some micro-architectures we seem to actually hit an instructions-per-clock wall.

145 Comments

yannigr2 - Monday, October 28, 2019 - link

An over 300W chip that loses in so many cases from chips that cost under $500, with less than half power consumption. And Intel is $auctioning$ it. That isn't even funny. It's tragic.

AshlayW - Monday, October 28, 2019 - link

600W for performance that's not even going to be that much over a 3950X (if at all?) Intel is a laughing stock at this point. Can't wait to see the 9900KS, and have a good laugh at the last desperate, dying twitches of the Skylake architecture.

29a - Monday, October 28, 2019 - link

HFT should be illegal!

MrSpadge - Monday, October 28, 2019 - link

Why? And on which basis would you forbid it? I think the better way to deal with this is to attach a tiny tax to each transaction. So if it's really worth it, they may do it. But the government gets its share and can redistribute to something more useful.

RBFL - Monday, October 28, 2019 - link

It has no useful purpose for society, the fact it is difficult to identify the people being harmed does not mean that harm is not taking place.

I think making people have a 1 second relationship with a share is not unreasonable. Just because someone can do something doesn't mean we have to allow it. We have speed limits, laws against dishonesty and murder and we could just as easily have one against HFT.

The fact that exchanges sell expensive server space to these companies for lower pings, while purportedly being the arbiters of fair play and price transparency is, of course, another big issue.

rahvin - Monday, October 28, 2019 - link

So anything you in your all knowing capacity deem as not useful for society at large it should be illegal? Doesn't that mean gaming should be illegal?

I've yet to meet a single one of these people that complain about HFT that actually understand how the market works, how stock trading function and what HFT even is. Most of them simply heard some talking point they regurgitate without any understanding of how the stock market even works let alone how a stock transaction works or what HFT involves.

shadowx360 - Monday, October 28, 2019 - link

It's a mix of ignorance and jealousy, that they can't be the successful ones rolling in dough, and all investing is somehow evil and taking advantage of the common worker. There are some downsides to HFT, namely increased market volatility and cascading problems where a fall in prices can trigger millions of stop losses that compound the issue, but like you said, most have zero clue.

RBFL - Tuesday, October 29, 2019 - link

And that statement is based on?

I am not jealous of an industry that has basically bought its way to success through lobbying and every time it explodes it expects the rest of us to reboot it, while mysteriously keeping the proceeds prior to the crash. An industry that gives away billions of our dollars of our money to avoid their own prosecution. Where almost no-one goes to jail after egregious lies, fraud, money laundering,....

All investing is not evil, however many of the practices of the industry are. Lobbying against fiduciary duty for the small investor, for example. If you actually look at the share classes available you will undoubtedly see some that no-one who understood what they were being sold would ever buy. Telling people 'they are responsible for their future' while not cleaning up the industry is like throwing sheep to wolves. And no, it is not everyone's responsibility to know every evil practice in every area of life in which they are forced to deal. It is unrealistic for the well educated, let alone the average citizen. It is why we have laws, which are generally reactive rather than proactive and thus mainly address known abuses rather prospective ones.

Alistair - Monday, October 28, 2019 - link

Nice article, except the final page. Why mention "highest ST for HEDT" and "carves through AMD like butter" etc., then you don't even make mention of any MT performance in summary. You could have said, in MT scenarios, it performs about the same as any Intel 18 or AMD 24 core server CPU or some such.

Alistair - Monday, October 28, 2019 - link

my meaning is the summary shouldn't just be for what is awesome, but should also summarize all the results... no mention of multi threading performance on the final page at all, because it isn't special?