AMD Rome Second Generation EPYC Review: 2x 64-core Benchmarked

by Johan De Gelas on August 7, 2019 7:00 PM EST

Memory Subsystem: TinyMemBench

We double-checked our LMBench numbers with Andrei's custom memory latency test.

The latency tool also measures bandwidth, and it became clear that once we move beyond 16 MB, DRAM is accessed. When Andrei compared the results with our Ryzen 9 3900X numbers, he noted:

The prefetchers on the Rome platform don't look nearly as aggressive as on the Ryzen unit on the L2 and L3

It would appear that parts of the prefetchers have been adjusted for Rome compared to Ryzen 3000. In effect, the prefetchers are less aggressive than on the consumer parts. We believe AMD made this choice because quite a few applications (Java and HPC) suffer a bit if the prefetchers take up too much bandwidth, so making the prefetchers less aggressive in Rome could aid performance in those workloads.

While we could not retest all our servers with Andrei's memory latency test by the deadline (see the "Murphy's Law" section on page 5), we turned to our results from the open-source TinyMemBench benchmark. The source was compiled for x86 with GCC at optimization level "-O3". The measurement is described well by the TinyMemBench manual:

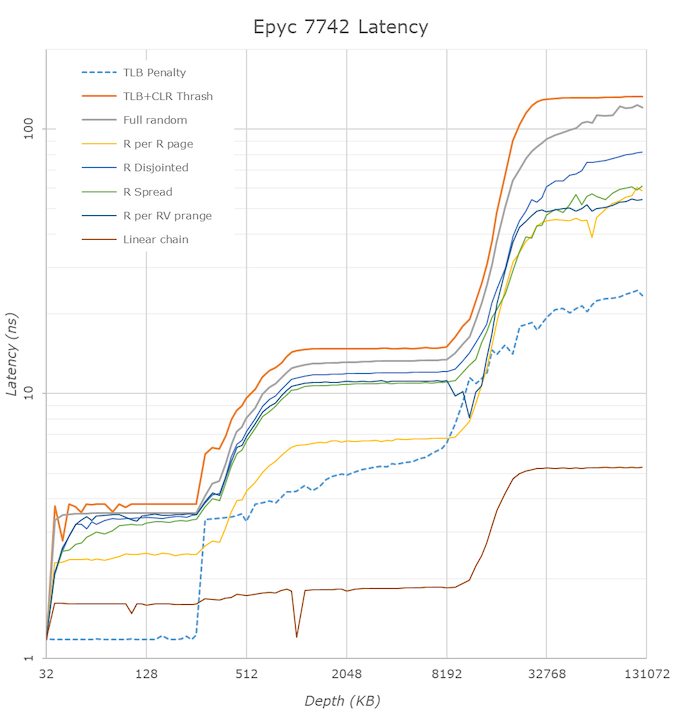

Average time is measured for random memory accesses in the buffers of different sizes. The larger the buffer, the more significant the relative contributions of TLB, L1/L2 cache misses, and DRAM accesses become. All the numbers represent extra time, which needs to be added to L1 cache latency (4 cycles).

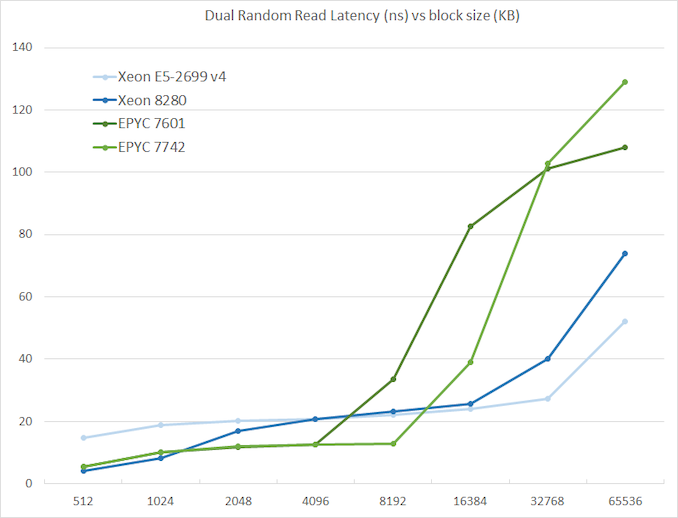

We tested with dual random read, as we wanted to see how the memory system coped with multiple read requests.
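To make the idea of a random-access latency measurement more concrete, here is a minimal sketch of one common way to build such a test: a pointer chase over a randomly permuted buffer. This is an illustration under assumptions, not TinyMemBench's own implementation. The function names are hypothetical, it follows a single dependent chain rather than the dual random read used above, and in Python the interpreter overhead dominates the timings, so only the trend across buffer sizes is meaningful, if anything.

```python
import random
import time
from array import array


def single_cycle_permutation(n, seed=0):
    """Sattolo's algorithm: a random permutation that forms one n-element cycle,
    so a chase starting anywhere visits every slot before repeating."""
    rng = random.Random(seed)
    nxt = array("q", range(n))  # 8-byte signed integers
    for i in range(n - 1, 0, -1):
        j = rng.randrange(i)  # 0 <= j < i, never i itself
        nxt[i], nxt[j] = nxt[j], nxt[i]
    return nxt


def chase(nxt, steps):
    """Perform `steps` dependent loads: each index comes from the previous load."""
    idx = 0
    for _ in range(steps):
        idx = nxt[idx]
    return idx


def time_per_access_ns(buffer_bytes, steps=200_000):
    """Average wall-clock time per access for a buffer of the given size."""
    n = max(1, buffer_bytes // 8)
    nxt = single_cycle_permutation(n)
    start = time.perf_counter_ns()
    chase(nxt, steps)
    return (time.perf_counter_ns() - start) / steps


if __name__ == "__main__":
    # Small sizes only: building the permutation in pure Python is slow.
    for kib in (16, 256, 4096):
        print(f"{kib:>5} KiB: {time_per_access_ns(kib * 1024):.1f} ns/access")
```

The single-cycle permutation matters because an ordinary shuffle can produce short cycles, which would keep the chase inside a small, cache-resident subset of the buffer and understate the latency.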

The graph shows how the larger L3 cache of the EPYC 7742 results in a much lower latency between 4 and 16 MB compared to the EPYC 7601. The L3 cache inside the CCX is also very fast (2-8 MB) compared to Intel's Mesh (8280) and Ring (E5) topologies.

However, once we access more than 16 MB, Intel has a clear advantage due to its slower but much larger shared L3 cache. When we tested the new EPYC CPUs in a more advanced NUMA configuration (NPS = 4, meaning 4 nodes per socket), latency at 64 MB dropped from 129 to 119 ns. We quote AMD's engineering:

"In NPS4, the NUMA domains are reported to software in such a way that chiplets always access the near (2 channels) DRAM. In NPS1 the 8ch are hardware-interleaved and there is more latency to get to further ones. It varies by pairs of DRAM channels, with the furthest one being ~20-25ns (depending on the various speeds) further away than the nearest. Generally, the latencies are +~6-8ns, +~8-10ns, +~20-25ns in pairs of channels vs the physically nearest ones."

So that also explains why AMD states that select workloads achieve better performance with NPS = 4.
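As a rough sanity check, the per-pair penalties in AMD's quote can be averaged and compared with the 129 to 119 drop we measured at 64 MB. The sketch below assumes that NPS1 accesses are spread evenly over the four channel pairs and that the nearest pair carries no penalty. Both are simplifying assumptions of ours, not something AMD stated, and the variable names are purely illustrative.

```python
# Extra latency in ns per channel pair relative to the nearest pair,
# as (low, high) bounds taken from the AMD quote. The (0, 0) entry for
# the nearest pair is our assumption.
EXTRA_NS = [(0.0, 0.0), (6.0, 8.0), (8.0, 10.0), (20.0, 25.0)]


def mean_extra_latency(pairs):
    """Average penalty if accesses are spread evenly across all pairs."""
    low = sum(lo for lo, _ in pairs) / len(pairs)
    high = sum(hi for _, hi in pairs) / len(pairs)
    return low, high


low, high = mean_extra_latency(EXTRA_NS)
measured_drop = 129 - 119  # NPS1 minus NPS4 latency at 64 MB

print(f"expected NPS1 penalty: {low} to {high} ns")
print(f"measured drop:         {measured_drop} ns")
```

Under these assumptions the expected average penalty is 8.5 to 10.75 ns, so the measured 10-point drop is consistent with the figures AMD gave.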

180 Comments


schujj07 - Friday, August 9, 2019 - link

The problem is Microsoft went to the Oracle model of licensing for Server 2016/19. That means that you have to license EVERY CPU core it can be run on. Even if you create a VM with only 8 cores, those 8 cores won't always be running on the same cores of the CPU. That is where Rome hurts the pockets of people. You would pay $10k/instance of Server Standard on a single dual 64 core host or $65k/host for Server DataCenter on a dual 64 core host.

browned - Saturday, August 10, 2019 - link

We are currently a small MS shop, VMWare with 8 sockets licensed, Windows Datacenter License. 4 Hosts, 2 x 8 core due to Windows Licensing limits. But we are running 120+ majority Windows systems on those hosts. I see our future with 4 x 16 core systems, unless our CPU requirements grow, in which case we could look at 3 x 48 or 2 x 64 core or 4 x 24 core and buy another lot of datacenter licenses. Because we already have 64 cores licensed the uplift to 96 or 128 is not something we would worry about.

We would also get a benefit from only using 2, 3, or 4 of our 8 VMWare socket licenses. We could then implement a better DR system, or use those licenses at another site that currently uses Robo licenses.

jgraham11 - Thursday, August 8, 2019 - link

so how does it work with hyper threaded CPUs? And what if the server owner decides to not run Intel Hyperthreading because it is so prone to CPU exploits (most 10 yrs+ old). Does Google still pay for those cores??

ianisiam - Thursday, August 8, 2019 - link

You only pay for physical cores, not logical.

twotwotwo - Thursday, August 8, 2019 - link

Sort of a fun thing there is that in the past you've had to buy more cores than you need sometimes: lower-end parts that had enough CPU oomph may not support all the RAM or I/O you want, or maybe some feature you wanted was absent or disabled. These seem to let you load up on RAM and I/O at even 8C or 16C (min. 1P or 2P configs). Of course, some CPU-bound apps can't take advantage of that, but in the right situation being able to build as lopsided a machine as you want might even help out the folks who pay by the core.

azfacea - Wednesday, August 7, 2019 - link

F

NikosD - Wednesday, August 7, 2019 - link

Ok guys... The Anandtech team had "bad luck and timing issues" and could not offer a true and decent review of the Greatest x86 CPU of all time, so for a proper review of EPYC Rome coming from the most objective and capable site for servers, take a look here: https://www.servethehome.com/amd-epyc-7002-series-...

anactoraaron - Thursday, August 8, 2019 - link

F

phoenix_rizzen - Saturday, August 10, 2019 - link

Review article for new CPU devolves into Windows vs Linux pissing match, completely obscuring any interesting discussion about said hardware. We really haven't reached peak stupid on the internet yet. :(

The Benjamins - Wednesday, August 7, 2019 - link

Can we get a C20 benchmark for the lulz?