AMD Rome Second Generation EPYC Review: 2x 64-core Benchmarked

by Johan De Gelas on August 7, 2019 7:00 PM EST

Memory Subsystem: TinyMemBench

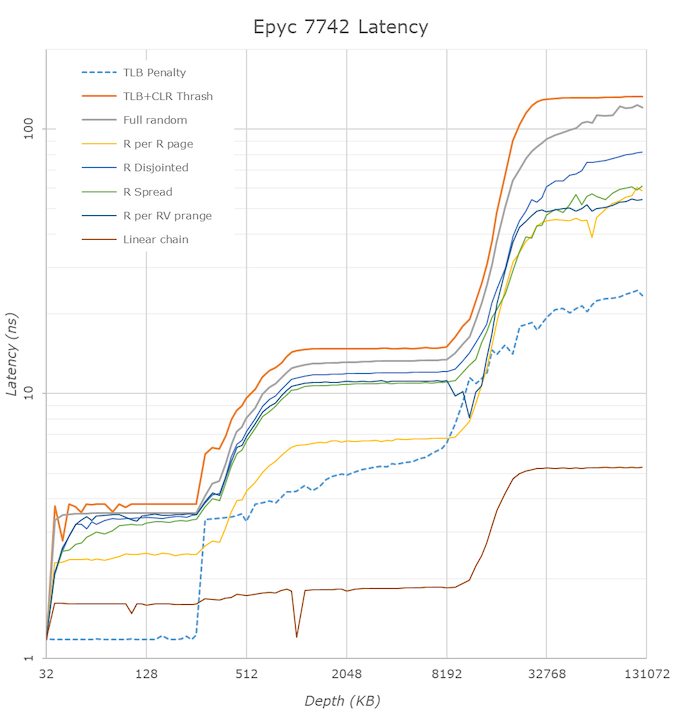

We double-checked our LMBench numbers with Andrei's custom memory latency test.

The latency tool also measures bandwidth, and it became clear that once we move beyond 16 MB, DRAM is accessed. When Andrei compared with our Ryzen 9 3900X numbers, he noted:

The prefetchers on the Rome platform don't look nearly as aggressive as on the Ryzen unit on the L2 and L3

It would appear that parts of the prefetchers have been adjusted for Rome compared to Ryzen 3000. In effect, the prefetchers are less aggressive than on the consumer parts, and we believe AMD made this choice because quite a few applications (Java and HPC) suffer a bit if the prefetchers take up too much bandwidth. Making the prefetchers less aggressive in Rome could aid performance in those workloads.

Since we could not retest all our servers with Andrei's memory latency test by the deadline (see the "Murphy's Law" section on page 5), we turned to our results from the open source TinyMemBench benchmark. The source was compiled for x86 with GCC and the optimization level was set to "-O3". The measurement is described well by the manual of TinyMemBench:

Average time is measured for random memory accesses in the buffers of different sizes. The larger the buffer, the more significant the relative contributions of TLB, L1/L2 cache misses, and DRAM accesses become. All the numbers represent extra time, which needs to be added to L1 cache latency (4 cycles).

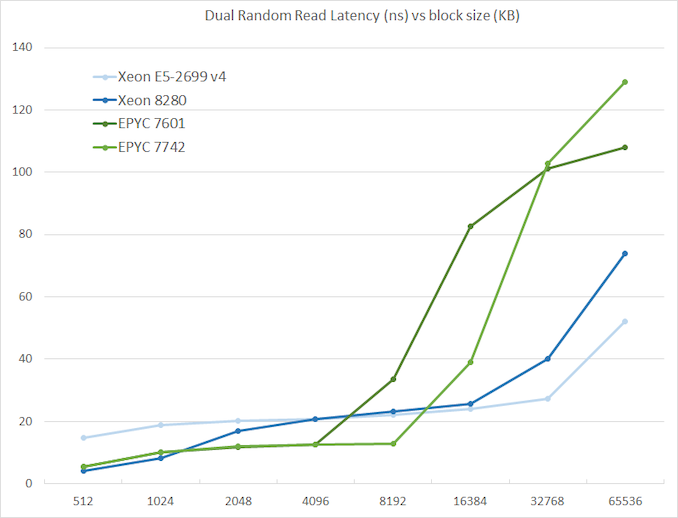

We tested with dual random read, as we wanted to see how the memory system coped with multiple read requests.
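To make the idea of dependent random reads concrete, here is a minimal sketch in Python. It is not TinyMemBench's code: it uses pointer chasing over a random single-cycle permutation, a common way to defeat prefetchers, and the function names (`build_cycle`, `chase`, `chase_dual`) are hypothetical. Interpreter overhead dominates the timings, so the absolute numbers say little about the hardware; only the structure of the measurement is illustrated.

```python
import random
import time


def build_cycle(n, seed=0):
    """Return a list nxt such that following i -> nxt[i] visits all n slots in one cycle."""
    rng = random.Random(seed)
    nxt = list(range(n))
    # Sattolo's algorithm: yields a permutation consisting of a single n-cycle,
    # so the walk cannot get stuck in a short loop that fits in a small cache.
    for i in range(n - 1, 0, -1):
        j = rng.randrange(i)  # 0 <= j < i
        nxt[i], nxt[j] = nxt[j], nxt[i]
    return nxt


def chase(nxt, steps, start=0):
    """Single random read: every access depends on the result of the previous one."""
    idx = start
    t0 = time.perf_counter()
    for _ in range(steps):
        idx = nxt[idx]
    return idx, (time.perf_counter() - t0) / steps


def chase_dual(nxt, steps, start_a, start_b):
    """Dual random read: two independent chains, so two misses can be in flight at once."""
    a, b = start_a, start_b
    t0 = time.perf_counter()
    for _ in range(steps):
        a = nxt[a]
        b = nxt[b]
    return a, b, (time.perf_counter() - t0) / steps


if __name__ == "__main__":
    steps = 50_000
    for n in (2**10, 2**14, 2**18):  # buffer sizes in elements, chosen arbitrarily
        nxt = build_cycle(n)
        _, single = chase(nxt, steps)
        _, _, dual = chase_dual(nxt, steps, 0, n // 2)
        print(f"{n:>8} elements: single {single * 1e9:.1f} ns/step, dual {dual * 1e9:.1f} ns/step")
```

A native benchmark would do the same walk over a raw buffer in C, where the per-step time approaches the actual cache or DRAM latency as the buffer grows.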

The graph shows how the larger L3 cache of the EPYC 7742 results in a much lower latency between 4 and 16 MB compared to the EPYC 7601. The L3 cache inside the CCX is also very fast (2-8 MB) compared to Intel's Mesh (8280) and Ring topologies (E5).

However, once we access more than 16 MB, Intel has a clear advantage due to the slower but much larger shared L3 cache. When we tested the new EPYC CPUs in a more advanced NUMA setting (with the NPS = 4 setting, meaning 4 nodes per socket), latency at 64 MB dropped from 129 to 119. We quote AMD's engineering team:

In NPS4, the NUMA domains are reported to software in such a way as it chiplets always access the near (2 channels) DRAM. In NPS1 the 8ch are hardware-interleaved and there is more latency to get to further ones. It varies by pairs of DRAM channels, with the furthest one being ~20-25ns (depending on the various speeds) further away than the nearest. Generally, the latencies are +~6-8ns, +~8-10ns, +~20-25ns in pairs of channels vs the physically nearest ones.

So that also explains why AMD states that select workloads achieve better performance with NPS = 4.
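As a rough sanity check, the per-pair offsets in AMD's quote can be averaged and compared with the latency change we measured. The sketch below assumes that in NPS1 accesses are spread evenly over the four channel pairs and that in NPS4 every access goes to the nearest pair; both are simplifications of ours, not statements from AMD. It also assumes the 129 and 119 figures are in nanoseconds.

```python
# Extra latency of each pair of DRAM channels relative to the nearest pair,
# as (low, high) ranges in ns taken from the AMD quote above.
EXTRA_NS = [(0.0, 0.0), (6.0, 8.0), (8.0, 10.0), (20.0, 25.0)]


def mean_extra_latency(ranges):
    """Average extra latency, assuming accesses hit every channel pair equally often."""
    n = len(ranges)
    low = sum(lo for lo, _ in ranges) / n
    high = sum(hi for _, hi in ranges) / n
    return low, high


low, high = mean_extra_latency(EXTRA_NS)
measured_gain = 129 - 119  # latency at 64 MB, NPS1 minus NPS4, as reported above

print(f"expected NPS1 penalty: {low} to {high} ns")
print(f"measured NPS4 gain:    {measured_gain}")
```

Under these assumptions the expected penalty works out to 8.5 to 10.75 ns, which brackets the improvement of 10 that we measured.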

180 Comments

AnonCPU - Friday, August 9, 2019

The gain in hmmer on EPYC with GCC8 is not due to TAGE predictor. Hmmer gains a lot on EPYC only because of GCC8.

The vectorizer has been improved in GCC8 and hmmer gets vectorized heavily, while that was not the case for GCC7. The same run on an Intel machine would have shown the same kind of improvement.

JohanAnandtech - Sunday, August 11, 2019

Thanks, do you have a source for that? Interested in learning more!

AnonCPU - Monday, August 12, 2019

That should be due to the improvements on loop distribution: https://gcc.gnu.org/gcc-8/changes.html

"The classic loop nest optimization pass -ftree-loop-distribution has been improved and enabled by default at -O3 and above. It supports loop nest distribution in some restricted scenarios;"

There are also some references here in what was missing for hmmer vectorization in GCC some years ago:

https://gcc.gnu.org/ml/gcc/2017-03/msg00012.html

And a page where you can see that LLVM was missing (at least in 2015) a good loop distribution algo useful for hmmer:

https://www.phoronix.com/scan.php?page=news_item&a...

AnonCPU - Monday, August 12, 2019

And more: https://community.arm.com/developer/tools-software...

just4U - Friday, August 9, 2019

I guess the question to ask now is can they churn these puppies out like no tomorrow? Is the demand there? What about other hardware? Motherboards and the like.. Do they have 100 000 of these ready to go? The window of opportunity for AMD is always fleeting.. and if they're going to capitalize on this they need to be able to put the product out there.

name99 - Friday, August 9, 2019

No obvious reason why not. The chiplets are not large and TSMC ships 200 million Apple chips a year on essentially the same process. So yields should be there.

Manufacturing the chiplet assembly also doesn't look any different from the Naples assembly (details differ, yes, but no new envelopes being pushed: no much higher frequency signals or denser traces -- the flip side to that is that there's scope there for some optimization come Milan...)

So it seems like there is nothing to obviously hold them back...

fallaha56 - Saturday, August 10, 2019

Perhaps Hyperthreading should be off on the Intel systems to better reflect e.g. Google’s reality / proper security standards now we know Intel isn’t secure?

Targon - Monday, August 12, 2019

That is why Google is going to be buying many Epyc based servers going forward. Mitigations do not mean a problem has been fixed.

imaskar - Wednesday, August 14, 2019

Why do you think AWS, GCP, Azure, etc. mitigated the vulnerabilities? They only patched Meltdown at most. All other things are too costly and hard to execute. They just don't care so much for your data. To lose 2x cloud capacity for that? No way. And for security conscious serious customers they offer private clusters, so your workloads run on separate servers.

ballsystemlord - Saturday, August 10, 2019

Spelling and grammar errors:

"This happened in almost every OS, and in some cases we saw reports that system administrators and others had to do quite a bit optimization work to get the best performance out of the EPYC 7001 series."

Missing "of":

"This happened in almost every OS, and in some cases we saw reports that system administrators and others had to do quite a bit of optimization work to get the best performance out of the EPYC 7001 series."

"...to us it is simply is ridiculous that Intel expect enterprise users to cough up another few thousand dollars per CPU for a model that supports 2 TB,..."

Excess "is" and missing "s":

"...to us it is simply ridiculous that Intel expects enterprise users to cough up another few thousand dollars per CPU for a model that supports 2 TB,..."

"Although the 225W TDP CPUs needs extra heatspipes and heatsinks, there are still running on air cooling..."

Excess "s" and incorrect "there":

"Although the 225W TDP CPUs need extra heatspipes and heatsinks, they're still running on air cooling..."

"The Intel L3-cache keeps latency consistingy low as long as you stay within the L3-cache."

"consistently" not "consistingy":

"The Intel L3-cache keeps latency consistently low as long as you stay within the L3-cache."

"For example keeping a large part of the index in the cache improve performance..."

Missing comma and missing "s" (you might also consider making cache plural, but you seem to be talking strictly about the L3):

"For example, keeping a large part of the index in the cache improves performance..."

"That is a real thing is shown by the fact that Intel states that the OLTP hammerDB runs 60% faster on a 28-core Intel Xeon 8280 than on EPYC 7601."

Missing "it":

"That it is a real thing is shown by the fact that Intel states that the OLTP hammerDB runs 60% faster on a 28-core Intel Xeon 8280 than on EPYC 7601."

In general, the beginning of the sentence appears quite poorly worded. How about:

"That L3 cache latency is a matter for concern is shown by the fact that Intel states that the OLTP hammerDB runs 60% faster on a 28-core Intel Xeon 8280 than on EPYC 7601."

"In NPS4, the NUMA domains are reported to software in such a way as it chiplets always access the near (2 channels) DRAM."

Missing "s":

"In NPS4, the NUMA domains are reported to software in such a way as its chiplets always access the near (2 channels) DRAM."

"The fact that the EPYC 7002 has higher DRAM bandwidth is clearly visible."

Wrong numbers (maybe you meant the series?):

"The fact that the EPYC 7742 has higher DRAM bandwidth is clearly visible."

"...but show very significant improvements on EPYC 7002."

Wrong numbers (maybe you meant the series?):

"...but show very significant improvements on EPYC 7742."

"Using older garbage collector because they happen to better at Specjbb"

Badly worded.

"Using an older garbage collector because it happens to be better at Specjbb"

"For those with little time: at the high end with socketed x86 CPUs, AMD offers you up to 50 to 100% higher performance while offering a 40% lower price."

"Up to" requires 1 metric, not 2. Try:

"For those with little time: at the high end with socketed x86 CPUs, AMD offers you from 50 up to 100% higher performance while offering a 40% lower price."