AMD Zen 2 Microarchitecture Analysis: Ryzen 3000 and EPYC Rome

by Dr. Ian Cutress on June 10, 2019 7:22 PM EST - Posted in

- CPUs

- AMD

- Ryzen

- EPYC

- Infinity Fabric

- PCIe 4.0

- Zen 2

- Rome

- Ryzen 3000

- Ryzen 3rd Gen

CCX Size

Moving to a smaller process node brings a number of challenges, both in the core and beyond it. Even setting power and frequency aside, putting structures into silicon, integrating that silicon into the package, and delivering power to the right parts of the die through the right connections each become an exercise in themselves. AMD gave us some insight into how 7nm changed some of its designs, as well as the packaging challenges involved.

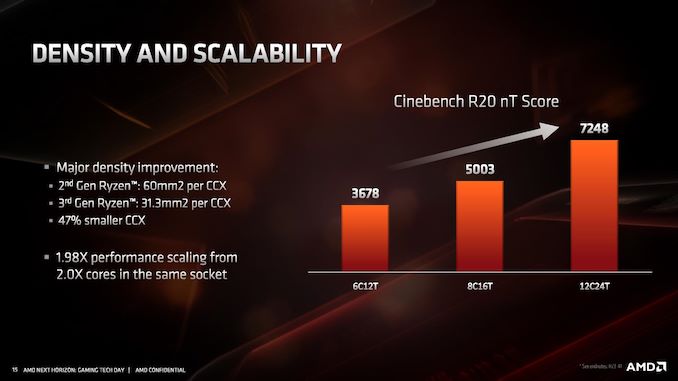

A key metric shared by AMD relates to the core complex (CCX): four cores, their associated core structures, and the L2 and L3 caches. With 12nm and the Zen+ core, AMD stated that a single core complex was ~60 mm², split into 44 mm² for the cores and 16 mm² for the 8 MB of L3 per CCX. Add two of these 60 mm² complexes to a memory controller, PCIe lanes, four IF links, and other IO, and a Zen+ Zeppelin die came to 213 mm² in total.
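The Zen+ area figures add up as follows. This is a minimal sketch using only the numbers AMD quoted; the remainder is simply whatever is left over for the memory controller, PCIe, IF links and other IO, and the constant and function names are our own.

```python
# Zen+ (12nm) area figures quoted by AMD, in mm^2
CORES_PER_CCX_MM2 = 44.0   # four cores and their associated structures
L3_PER_CCX_MM2 = 16.0      # 8 MB of L3 per CCX
ZEPPELIN_DIE_MM2 = 213.0   # full Zen+ Zeppelin die

def zen_plus_budget():
    """Return (one CCX, both CCXs, area left for memory/PCIe/IF/IO) in mm^2."""
    ccx = CORES_PER_CCX_MM2 + L3_PER_CCX_MM2
    both_ccx = 2 * ccx
    uncore = ZEPPELIN_DIE_MM2 - both_ccx
    return ccx, both_ccx, uncore

ccx, both_ccx, uncore = zen_plus_budget()
print(f"one CCX: {ccx} mm^2, two CCXs: {both_ccx} mm^2, remainder: {uncore} mm^2")
```

By this arithmetic roughly 93 of the 213 mm² (about 44%) went to non-CCX logic, much of which is the kind of platform functionality Zen 2 moves onto the IO die.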

For Zen 2, a single chiplet is 74 mm², of which 31.3 mm² is one core complex with 16 MB of L3; each eight-core chiplet carries two of these. AMD did not break this 31.3 mm² figure down into cores and L3, but one might imagine that the L3 is approaching 50% of it. The chiplet is so much smaller because it doesn't need memory controllers, has only one IF link, and has no IO: all of the platform requirements sit on the IO die. This allows AMD to make the chiplets extremely compact. However, if AMD intends to keep increasing the L3 cache, we might end up with most of the chip being L3.
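Applying the same bookkeeping to a Zen 2 chiplet, and assuming each chiplet carries two 31.3 mm² core complexes, leaves only a small slice of the 74 mm² for the single IF link and everything else. The 50/50 core-versus-L3 split below is only the guess floated in the paragraph above, not an AMD figure.

```python
CHIPLET_MM2 = 74.0        # one Zen 2 chiplet
CCX_MM2 = 31.3            # one core complex: four cores plus 16 MB of L3
CCX_PER_CHIPLET = 2       # two CCXs per eight-core chiplet
L3_FRACTION_GUESS = 0.5   # assumption: L3 "approaching 50%" of the CCX

def zen2_chiplet_budget():
    """Return (area of both CCXs, remaining area, guessed L3 area per CCX)."""
    ccx_total = CCX_PER_CHIPLET * CCX_MM2
    remainder = CHIPLET_MM2 - ccx_total       # single IF link plus everything else
    l3_guess = CCX_MM2 * L3_FRACTION_GUESS    # per-CCX L3 under the 50% guess
    return round(ccx_total, 1), round(remainder, 1), round(l3_guess, 2)

print(zen2_chiplet_budget())  # (62.6, 11.4, 15.65)
```

If the guess holds, the 16 MB of L3 in a 7nm CCX would occupy about the same area as the 8 MB did on 12nm.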

Overall, AMD has stated that the CCX (cores plus L3) has decreased in size by 47%. That is great scaling, especially once the +15% raw instruction throughput and higher frequencies come into play. Performance per mm² is going to be a very exciting metric.
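As a rough cross-check, comparing the ~60 mm² 12nm CCX with a 31.3 mm² 7nm CCX gives a shrink of just under 48%, in line with AMD's 47% (AMD's own baseline is probably not exactly the rounded 60 mm²). The performance-per-area figure is purely illustrative: it assumes the +15% throughput gain applies at equal clocks and ignores any frequency uplift, which is our assumption rather than an AMD number.

```python
ZEN_PLUS_CCX_MM2 = 60.0   # ~60 mm^2 quoted for a 12nm Zen+ CCX
ZEN2_CCX_MM2 = 31.3       # 7nm Zen 2 CCX
THROUGHPUT_GAIN = 1.15    # +15% raw instruction throughput (assumed at equal clocks)

def ccx_scaling():
    """Return (area shrink in %, relative performance per mm^2 vs Zen+)."""
    area_ratio = ZEN2_CCX_MM2 / ZEN_PLUS_CCX_MM2
    shrink_pct = round(100 * (1 - area_ratio), 1)
    perf_per_area = round(THROUGHPUT_GAIN / area_ratio, 2)
    return shrink_pct, perf_per_area

print(ccx_scaling())  # (47.8, 2.2)
```

Under those assumptions, a Zen 2 CCX would deliver a little over twice the throughput per mm² of a Zen+ CCX, before any clock speed gains are counted.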

Packaging

With Matisse staying in the AM4 socket, and Rome in the EPYC socket, AMD stated that it had to make some bets on its packaging technology in order to maintain compatibility. Invariably, some of these bets end up being tradeoffs made for the sake of continued platform support, but AMD believes the extra effort has been worth it.

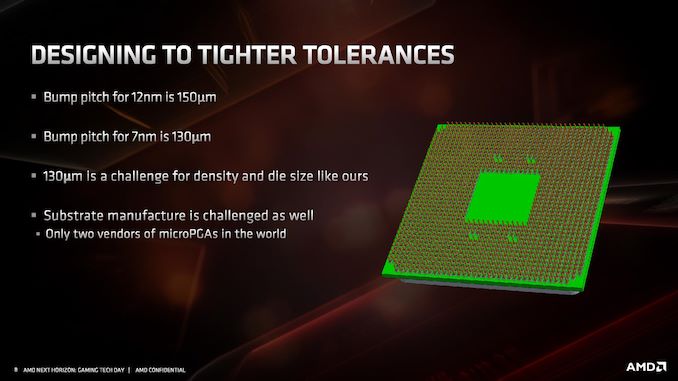

One of the key points AMD spoke about in relation to packaging is how each of the silicon dies is attached to the package. Even for a pin-grid array desktop processor, the silicon itself has to be affixed to the package in a BGA fashion. AMD stated that due to the 7nm process, the bump pitch (the distance between the solder balls on the silicon die and package) had reduced from 150 microns on 12nm to 130 microns on 7nm. This doesn't sound like much; however, AMD stated that there are only two vendors in the world with technology sufficient to do this. The only alternative would be a larger piece of silicon to support a larger bump pitch, ultimately leading to a lot of empty silicon (or a different design paradigm).
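To put the 150 µm to 130 µm change in perspective: on a regular grid, the number of bumps that fit in a given area scales with the inverse square of the pitch. The sketch below assumes an idealised uniform square bump grid (real bump maps are not uniform) and a hypothetical die of 74 mm² whose area is set purely by the bumps it must carry, which is a simplification; `area_needed_at_pitch` is our own illustrative helper.

```python
OLD_PITCH_UM = 150.0   # 12nm bump pitch quoted by AMD
NEW_PITCH_UM = 130.0   # 7nm bump pitch quoted by AMD

def area_needed_at_pitch(area_mm2, from_pitch_um, to_pitch_um):
    """Area needed to hold the same number of bumps when moving from
    from_pitch_um to to_pitch_um, assuming a uniform square bump grid."""
    return area_mm2 * (to_pitch_um / from_pitch_um) ** 2

# Bumps per unit area scale with 1 / pitch^2 on a square grid
density_gain = (OLD_PITCH_UM / NEW_PITCH_UM) ** 2

print(round(density_gain, 3))                                            # 1.331
print(round(area_needed_at_pitch(74.0, NEW_PITCH_UM, OLD_PITCH_UM), 1))  # 98.5
```

In this idealised model the 20 µm reduction packs about a third more bumps into the same area, so keeping the old pitch would have meant a noticeably larger die for the same connections.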

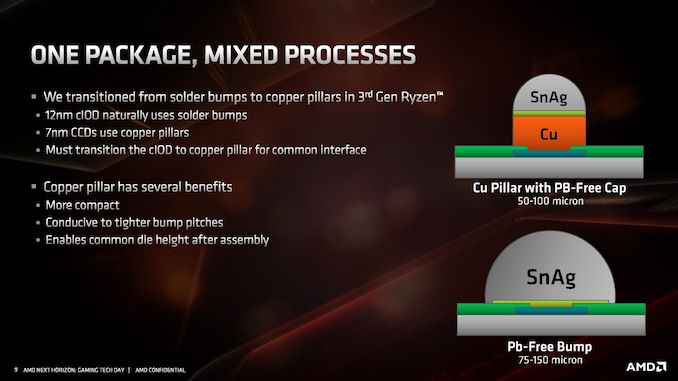

One way to enable the tighter bump pitch is to change how the bumps are processed on the underside of the die. Normally, a solder bump on a package is a blob/ball of lead-free solder, relying on the physics of surface tension and reflow to ensure it is consistent and regular. To enable the tighter bump pitch, however, AMD had to move to a copper pillar solder bump topology.

To build these, copper is deposited within a mask to create a ‘stand’ on which the reflow solder sits. Because of the pillar's narrow diameter, less solder is needed and the solder radius is smaller. AMD also ran into another issue because Matisse combines different kinds of die in one package: if the IO die uses standard solder bumps and the chiplets use copper pillars, the heights of the dies still need to match so that a single integrated heat spreader sits correctly on all of them. For the smaller copper pillars, this means carefully controlling how much the copper pillars are grown.

AMD explained that it was actually easier to manage this connection implementation than to build heat spreaders with different heights, as the stamping process used for heat spreaders could not hold such a tight tolerance. AMD expects all of its future 7nm designs to use the copper pillar implementation.

Routing

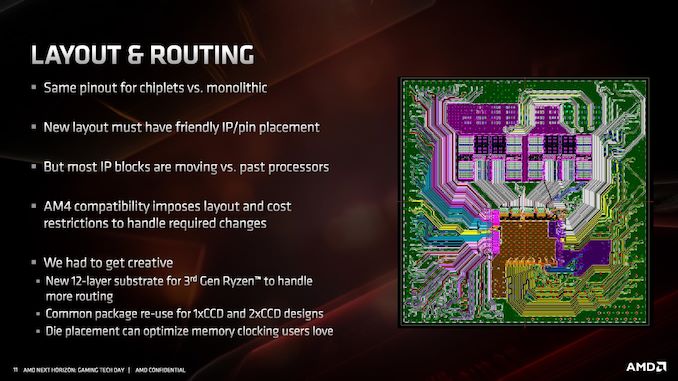

Beyond just putting the silicon onto the organic substrate, that substrate has to manage connections between the dies and out of the package. AMD had to increase the number of substrate layers in the package to 12 for Matisse in order to handle the extra routing (no word on how many layers Rome requires, perhaps 14). This also becomes somewhat complicated for single-chiplet and dual-chiplet processors, especially when testing the silicon before placing it onto the package.

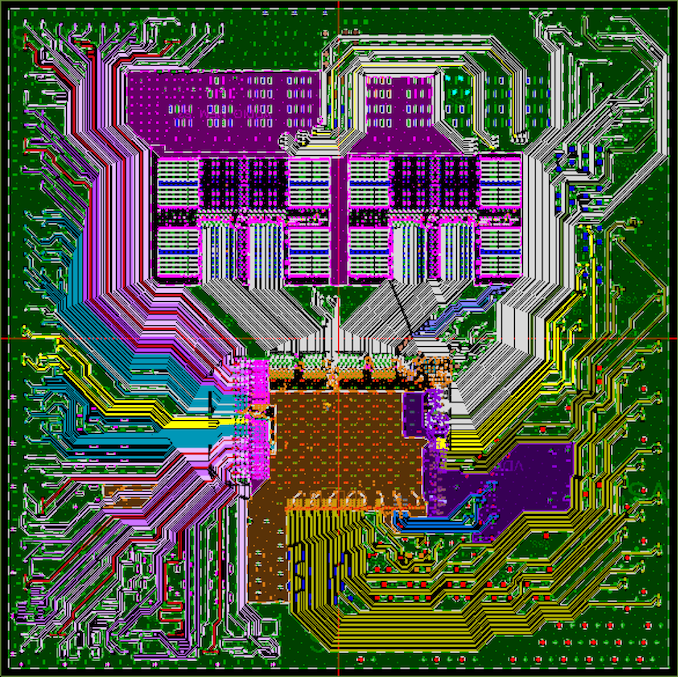

From the diagram we can clearly see the IF links from the two chiplets going to the IO die, with the IO die also handling the memory controllers and what looks like power plane duties as well. In case anyone was still wondering, there are no in-package links between the chiplets: they have no way to communicate directly, and all chiplet-to-chiplet traffic is handled through the IO die.
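The layout described above is effectively a star: each chiplet has a single IF link to the IO die and none to its sibling. A minimal sketch of that graph (the node names are our own, not AMD's) uses a breadth-first search to show that any chiplet-to-chiplet path, like any chiplet-to-memory path, has to go through the IO die.

```python
from collections import deque

# Star topology for a dual-chiplet Matisse package (illustrative node names)
LINKS = {
    "ccd0": ["iod"],                          # chiplet 0: one IF link to the IO die
    "ccd1": ["iod"],                          # chiplet 1: one IF link to the IO die
    "iod": ["ccd0", "ccd1", "dram", "pcie"],  # IO die hosts memory and PCIe
    "dram": ["iod"],
    "pcie": ["iod"],
}

def shortest_path(src, dst, links=LINKS):
    """Breadth-first search; returns the node list from src to dst, or None."""
    prev = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            path = []
            while node is not None:           # walk back to the source
                path.append(node)
                node = prev[node]
            return path[::-1]
        for nxt in links[node]:
            if nxt not in prev:
                prev[nxt] = node
                queue.append(nxt)
    return None

print(shortest_path("ccd0", "ccd1"))  # ['ccd0', 'iod', 'ccd1']
print(shortest_path("ccd1", "dram"))  # ['ccd1', 'iod', 'dram']
```

Because there is no `ccd0`/`ccd1` edge, every cross-chiplet request costs two IF hops, which is the tradeoff AMD accepts for keeping the chiplets small and identical.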

AMD stated that with this layout it also had to be mindful of how the processor would be placed in the system, as well as cooling and memory layout. Faster memory support and the tighter tolerances of PCIe 4.0 also need to be taken into consideration, so as to provide the optimal signaling path without interference from other traces and routing.

216 Comments


Korguz - Monday, June 17, 2019

I'm glad I'm not the only one that sees this...

Qasar - Monday, June 17, 2019

Korguz, you aren't the only one that sees it. Xyler94, I don't hate Intel, but I am sick of what they have done so far to the CPU industry, sticking the mainstream with quad cores for how many years? I would have loved to get a 6- or 8-core Intel chip, but the cost of the platform put it out of my reach. The little performance gains year over year, come on, that's the best Intel can do with all the money they have?? And the constant lies about 10nm... Then Zen is released and, what was it, less than 2 months later, Intel all of a sudden has more than 4 cores for the mainstream, and even more cores for HEDT? My next upgrade at this point looks to be Zen 2, but I am waiting till the 7th to read the reviews. HStewart does glorify Intel any chance he can, and it just looks so stupid, because when someone calls him out on it, he seems to pretty much vanish from the conversation.

HStewart - Thursday, June 13, 2019

Notice that I mentioned: unless they change it from dual 128-bit.

Targon - Thursday, June 13, 2019

Socket AM4 is limited to a dual-channel memory controller, because you need more pins to add more memory channels. The same applies to the number of PCI Express lanes as well. The only way around this would be to use one of the abilities of Gen-Z, where the CPU would just talk to the Gen-Z bus; at that point, dedicated pins for memory and PCI Express could be replaced by a very wide and fast connection to the system bus/fabric. Since that would require a new motherboard and for the CPU to be designed around it, why bother with socket AM4 at that point?

Korguz - Thursday, June 13, 2019

Why bother?? Um, upgradeability? Maybe not quite needed? The things you suggest sound like they would be a little expensive to implement. If you need more memory bandwidth and PCIe lanes, grab a TR board and a lower-end CPU...

austinsguitar - Monday, June 10, 2019

Thank you Ian for this write-up. :)

megapleb - Monday, June 10, 2019

Why does the 3600X have power consumption of 95W, and the 3700X, with two more cores, four more threads, and the same max frequency, consume only 65W? I'm guessing those two got switched around?

anonomouse - Monday, June 10, 2019

Higher sustained base clock drives up the TDP.

megapleb - Monday, June 10, 2019

200MHz extra base increases power consumption by 46%? I would have thought max power consumption would be all cores operating at maximum frequency, so the base would have nothing to do with it?

scineram - Tuesday, June 11, 2019

Nobody said anything about power consumption.