AMD Zen 2 Microarchitecture Analysis: Ryzen 3000 and EPYC Rome

by Dr. Ian Cutress on June 10, 2019 7:22 PM EST - Posted in

- CPUs

- AMD

- Ryzen

- EPYC

- Infinity Fabric

- PCIe 4.0

- Zen 2

- Rome

- Ryzen 3000

- Ryzen 3rd Gen

New Instructions

Cache and Memory Bandwidth QoS Control

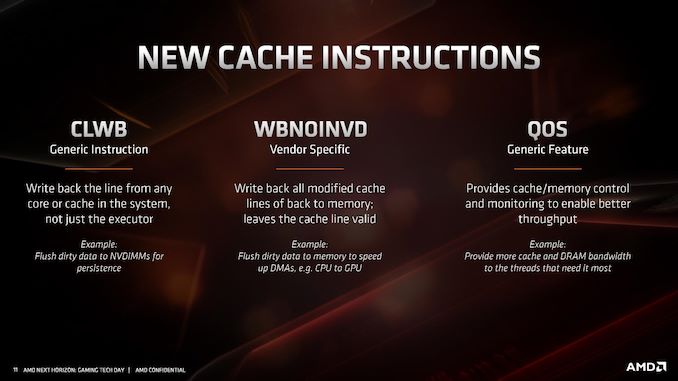

As with most new x86 microarchitectures, there is a drive to increase performance through new instructions, but also to try for parity between vendors in which instructions are supported. For Zen 2, while AMD is not catering to some of the more exotic instruction sets that Intel might, it is adding new instructions in three different areas.

The first one, CLWB (Cache Line Write Back), has been seen before on Intel processors in relation to non-volatile memory. It writes a modified cache line back to memory, so that data destined for non-volatile memory actually reaches it instead of sitting only in the volatile cache, where it would be lost if the system halted or lost power. There are other instructions associated with securing data to non-volatile memory systems, although AMD did not explicitly comment on these. It could be an indication that AMD is looking to better support non-volatile memory hardware and structures in future designs, particularly in its EPYC processors.
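To make the CLWB behavior concrete, here is a toy Python model of a write-back cache in front of non-volatile memory. It is not real hardware and not AMD's or Intel's specification, and the class and method names are hypothetical. On real hardware, code would use the `_mm_clwb` intrinsic from `immintrin.h` followed by a store fence such as `_mm_sfence`. The model ignores ordering entirely and assumes a line stays cached after CLWB, which the instruction permits but does not guarantee.

```python
# Toy model only: a write-back cache sitting in front of non-volatile memory (NVM).
# Stores land in the cache as dirty lines; only data written back to NVM survives
# a simulated power loss. Store fences and memory ordering are not modelled.


class ToyPersistentCache:
    def __init__(self):
        self.nvm = {}    # address -> value that has reached non-volatile memory
        self.cache = {}  # address -> (value, dirty)

    def store(self, addr, value):
        # A store only updates the cache and marks the line dirty.
        self.cache[addr] = (value, True)

    def load(self, addr):
        # Returns (value, hit); a miss refills the line from NVM as a clean line.
        if addr in self.cache:
            return self.cache[addr][0], True
        value = self.nvm.get(addr)
        self.cache[addr] = (value, False)
        return value, False

    def clwb(self, addr):
        # CLWB-style: write a dirty line back to NVM and keep it cached, now clean.
        if addr in self.cache:
            value, dirty = self.cache[addr]
            if dirty:
                self.nvm[addr] = value
            self.cache[addr] = (value, False)

    def clflush(self, addr):
        # CLFLUSH-style, for contrast: write back if dirty, then evict the line.
        if addr in self.cache:
            value, dirty = self.cache.pop(addr)
            if dirty:
                self.nvm[addr] = value

    def power_loss(self):
        # Anything that only lived in the volatile cache is gone.
        self.cache.clear()


if __name__ == "__main__":
    m = ToyPersistentCache()
    m.store(0x40, "committed")
    m.store(0x80, "in flight")
    m.clwb(0x40)            # only the first line is written back
    print(m.load(0x40))     # ('committed', True): still a cache hit
    m.power_loss()
    print(m.nvm)            # {64: 'committed'}: the un-written-back line is lost
```

The contrast with `clflush` is the point of CLWB. Both make the data durable, but CLWB does not have to throw the line away, so a following read can still hit in the cache.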

The second cache instruction, WBNOINVD (Write Back and Do Not Invalidate Cache), builds on the existing WBINVD instruction. WBINVD writes every modified line in the cache hierarchy back to memory and then invalidates the caches, so any data needed afterwards has to be fetched from memory again. WBNOINVD performs the same write-back but leaves the lines valid in the cache. Memory ends up holding up-to-date data, while later accesses to those lines can still hit in the cache instead of paying the latency of a refill, which helps accelerate the work that follows.
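WBINVD and WBNOINVD are privileged instructions, so they cannot simply be called from user code. The difference between them can instead be illustrated with a toy Python model. Both write every modified line back to memory; WBINVD then invalidates the caches, while WBNOINVD leaves the lines valid. The model, including `ToyCache` and the `misses_after` helper, is hypothetical and ignores multiple cache levels, coherence, and timing.

```python
# Toy model only: contrasts WBINVD and WBNOINVD on a single, fully cached level.
# Both write every dirty line back to memory; only WBINVD also empties the cache.


class ToyCache:
    def __init__(self):
        self.memory = {}  # address -> value in main memory
        self.lines = {}   # address -> [value, dirty]
        self.misses = 0

    def write(self, addr, value):
        self.lines[addr] = [value, True]

    def read(self, addr):
        if addr not in self.lines:
            self.misses += 1  # line must be refilled from memory
            self.lines[addr] = [self.memory.get(addr), False]
        return self.lines[addr][0]

    def _write_back_all(self):
        # Copy every dirty line to memory and mark it clean; return how many.
        written = 0
        for addr, line in self.lines.items():
            if line[1]:
                self.memory[addr] = line[0]
                line[1] = False
                written += 1
        return written

    def wbinvd(self):
        written = self._write_back_all()
        self.lines.clear()  # invalidate: every later access misses
        return written

    def wbnoinvd(self):
        return self._write_back_all()  # lines stay valid, now clean


def misses_after(flush):
    """Dirty 8 lines, run `flush`, re-read them; return (lines written back, misses)."""
    c = ToyCache()
    for a in range(8):
        c.write(a, a * a)
    written = flush(c)
    for a in range(8):
        c.read(a)
    return written, c.misses


if __name__ == "__main__":
    print(misses_after(ToyCache.wbinvd))    # (8, 8)
    print(misses_after(ToyCache.wbnoinvd))  # (8, 0)
```

Both variants write back all eight dirty lines. After WBINVD, every one of the eight reads misses and refills from memory. After WBNOINVD, they all still hit.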

The final set of instructions, filed under QoS, actually relates to how cache and memory priorities are assigned.

When a cloud CPU is split into different containers or VMs for different customers, performance is not always consistent, because it can be limited by what another VM is doing on the system. This is known as the ‘noisy neighbor’ issue: if someone else is consuming all the core-to-memory bandwidth, or the L3 cache, it can be very difficult for another VM on the system to get what it needs. As a result of that noisy neighbor, the other VM will see highly variable latency when processing its workload. Alternatively, if a mission-critical VM shares a system with another VM that keeps demanding resources, the mission-critical VM might miss its targets because it cannot get all the resources it needs.

Short of having the whole machine to yourself as a single user, dealing with noisy neighbors is difficult. Most cloud providers and operators won’t even tell you whether you have any neighbors, and with live VM migration those neighbors might change very frequently, so there is no guarantee of sustained performance at any time. This is where a set of dedicated QoS (Quality of Service) instructions comes in.

As with Intel’s implementation, when a series of VMs is allocated onto a system on top of a hypervisor, the hypervisor can control how much memory bandwidth and cache each VM has access to. If a mission-critical 8-core VM requires access to 64 MB of L3 and at least 30 GB/s of memory bandwidth, the hypervisor can ensure that the priority VM always has access to that amount. It can either remove those resources from the pool available to other VMs entirely, or intelligently restrict the other VMs as the mission-critical VM bursts into full access.
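The first option described above, taking resources out of the shared pool, can be sketched as way-based cache partitioning plus a bandwidth budget. This is a minimal Python sketch under stated assumptions: contiguous way masks in the style of Intel's Cache Allocation Technology, a single shared L3 pool, and made-up totals of 128 MB and 200 GB/s. `Reservation`, `ways_mask` and `reserve` are hypothetical names, not a hypervisor API. On Linux, this kind of partitioning is administered through the `resctrl` filesystem.

```python
# Hypothetical hypervisor-side sketch: give a priority VM a dedicated, contiguous set
# of L3 ways plus a bandwidth guarantee, and leave the remainder to all other VMs.
# The cache is treated as one shared pool of equal-sized ways. This is a
# simplification: the Zen 2 parts discussed here split their L3 per four-core CCX,
# so a real hypervisor would have to handle each slice.

import math
from dataclasses import dataclass


@dataclass
class Reservation:
    l3_mask: int     # bitmask of L3 ways this group may allocate into
    l3_mb: float     # capacity those ways represent
    bw_gbps: float   # memory bandwidth set aside for this group


def ways_mask(n_ways: int, first_way: int) -> int:
    """Contiguous run of n_ways set bits, starting at bit first_way."""
    return ((1 << n_ways) - 1) << first_way


def reserve(l3_mb, l3_ways, total_bw_gbps, want_l3_mb, want_bw_gbps):
    mb_per_way = l3_mb / l3_ways
    n = math.ceil(want_l3_mb / mb_per_way)  # round up to whole ways
    if n >= l3_ways or want_bw_gbps >= total_bw_gbps:
        raise ValueError("reservation would leave nothing for the other VMs")
    priority = Reservation(ways_mask(n, 0), n * mb_per_way, want_bw_gbps)
    others = Reservation(ways_mask(l3_ways - n, n), (l3_ways - n) * mb_per_way,
                         total_bw_gbps - want_bw_gbps)
    return priority, others


if __name__ == "__main__":
    # The article's example request (64 MB, 30 GB/s) against a made-up 128 MB,
    # 16-way cache and 200 GB/s of total memory bandwidth.
    vip, rest = reserve(128, 16, 200, 64, 30)
    print(hex(vip.l3_mask), vip.l3_mb, vip.bw_gbps)    # 0xff 64.0 30
    print(hex(rest.l3_mask), rest.l3_mb, rest.bw_gbps)  # 0xff00 64.0 170
```

Because the two masks share no bits, the other VMs can never evict the priority VM's lines. The trade-off is that any of those ways left idle by the priority VM are wasted. The second, burst-aware option avoids that waste at the cost of more complex policy.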

Intel only enables this feature on its Xeon Scalable processors; however, AMD will enable it up and down its Zen 2 processor family, for consumer and enterprise users alike.

The immediate issue I had with this feature is on the consumer side. Imagine if a video game demanded access to all the cache and all the memory bandwidth, while some streaming software got access to none: it could cause havoc on the system. AMD explained that while individual programs can technically request a certain level of QoS, it will be up to the OS or the hypervisor to decide whether those requests are both valid and suitable. AMD sees this more as an enterprise feature, used when hypervisors are in play, rather than on bare-metal installations on consumer systems.
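AMD's position is that the OS or hypervisor, not the program, decides what a QoS request actually gets. A minimal Python sketch of what such a policy could look like follows. The `QosArbiter` class, the 50% per-application cap and the 25% reserved floor are hypothetical choices for illustration, not AMD's or any operating system's actual policy.

```python
# Hypothetical OS-side policy: applications may ask for a share of a QoS-managed
# resource, but the OS decides what is granted. No single program may exceed
# max_share, and floor_share of the capacity is never handed out, so some capacity
# always remains for programs that never made a request.


class QosArbiter:
    def __init__(self, capacity, max_share=0.5, floor_share=0.25):
        self.capacity = capacity
        self.per_app_cap = capacity * max_share
        self.floor = capacity * floor_share
        self.granted = {}  # app name -> amount currently reserved

    def available(self):
        # Capacity still reservable once the protected floor is set aside.
        return self.capacity - self.floor - sum(self.granted.values())

    def request(self, app, amount):
        self.granted.pop(app, None)  # a new request replaces the app's old grant
        grant = max(0.0, min(amount, self.per_app_cap, self.available()))
        self.granted[app] = grant
        return grant


if __name__ == "__main__":
    l3 = QosArbiter(capacity=32.0)       # e.g. MB of L3, purely illustrative
    print(l3.request("game", 32.0))      # 16.0: clamped to the per-app cap
    print(l3.request("streamer", 6.0))   # 6.0: fits in what is left
    print(l3.available())                # 2.0, plus the 8.0 floor that is never granted
```

In this sketch, the game's demand for the entire cache is silently clamped rather than rejected. That is one possible design; an OS could equally refuse oversized requests outright.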

216 Comments


Korguz - Monday, June 17, 2019

im glad im not the only one that sees this...

Qasar - Monday, June 17, 2019

korguz, you aren't the only one that sees it. Xyler94, i dont hate intel.. but i am sick of what they have done so far to the cpu industry, sticking the mainstream with quad cores for how many years ? i would of loved to get a 6 or 8 core intel chip, but the cost of the platform, made it out of my reach. the little performance gains year over year, come on, thats the best intel can do with all the money they have ?? and the constant lies about 10nm.... then Zen is released and what was it, less then 2 months later, intel all of a sudden has more then 4 cores for the mainstream, and even more cores for the HEDT ? my next upgrade at this point, looks to be zen 2.. but i am waiting till the 7th, to read the reviews. hstewart does glorify intel any chance he can, and it just looks so stupid, cause some one calls him out on it.. and he seems to pretty much vanish from that convo

HStewart - Thursday, June 13, 2019

Notice that I mention unless they change it from dual 128 bit.

Targon - Thursday, June 13, 2019

Socket AM4 is limited to a dual-channel memory controller, because you need more pins to add more memory channels. The same applies to the number of PCI Express lanes as well. The only way around this would be to use one of the abilities of Gen-Z where the CPU would just talk to the Gen-Z bus, at which point, dedicated pins for memory and PCI Express could be replaced by a very wide and fast connection to the system bus/fabric. Since that would require a new motherboard and for the CPU to be designed around it, why bother with socket AM4 at that point?

Korguz - Thursday, June 13, 2019

why bother?? um upgrade ability ? maybe not quite needed ? the things you suggest, sound like they would be a little expensive to implement. if you need more memory bandwidth and pcie lanes.. grab a TR board and a lower end cpu....

austinsguitar - Monday, June 10, 2019

Thank you Ian for this write up. :)

megapleb - Monday, June 10, 2019

Why does the 3600X have power consumption of 95W, and the 3700X, with two more cores, four more threads, and the same frequency max, consume only 65W? I'm guessing those two got switched around?

anonomouse - Monday, June 10, 2019

higher sustained base clock drives up the tdp

megapleb - Monday, June 10, 2019

200Mhz extra base increases power consumption by 46%? I would have though max power consumption would be all cores operating at maximum frequency so the base would have nothing to do with it?

scineram - Tuesday, June 11, 2019

Nobody said anything about power consumption.