AMD Zen 2 Microarchitecture Analysis: Ryzen 3000 and EPYC Rome

by Dr. Ian Cutress on June 10, 2019 7:22 PM EST - Posted in

- CPUs

- AMD

- Ryzen

- EPYC

- Infinity Fabric

- PCIe 4.0

- Zen 2

- Rome

- Ryzen 3000

- Ryzen 3rd Gen

Windows Optimizations

One of the persistent pain points for non-Intel processors running Windows has been the operating system’s optimizations and scheduler arrangements. We’ve seen in the past how Windows has not been kind to non-Intel microarchitecture layouts, such as AMD’s previous module design in Bulldozer, the Qualcomm hybrid CPU strategy with Windows on Snapdragon, and more recently the multi-die arrangements on Threadripper that introduce different memory latency domains into consumer computing.

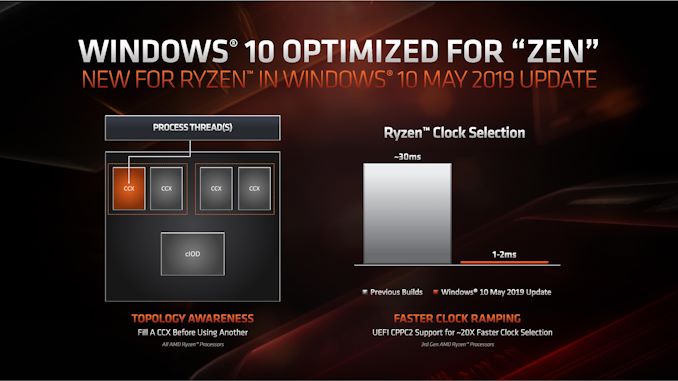

AMD has a close relationship with Microsoft when it comes to identifying a non-regular core topology on a processor, and the two companies work to ensure that thread and memory assignments, absent program-driven direction, make the most of the system. With the Windows 10 May 2019 Update (version 1903), some additional features have been put in place to get the most out of the upcoming Zen 2 microarchitecture and Ryzen 3000 silicon layouts.

The optimizations come on two fronts, both of which are reasonably easy to explain.

Thread Grouping

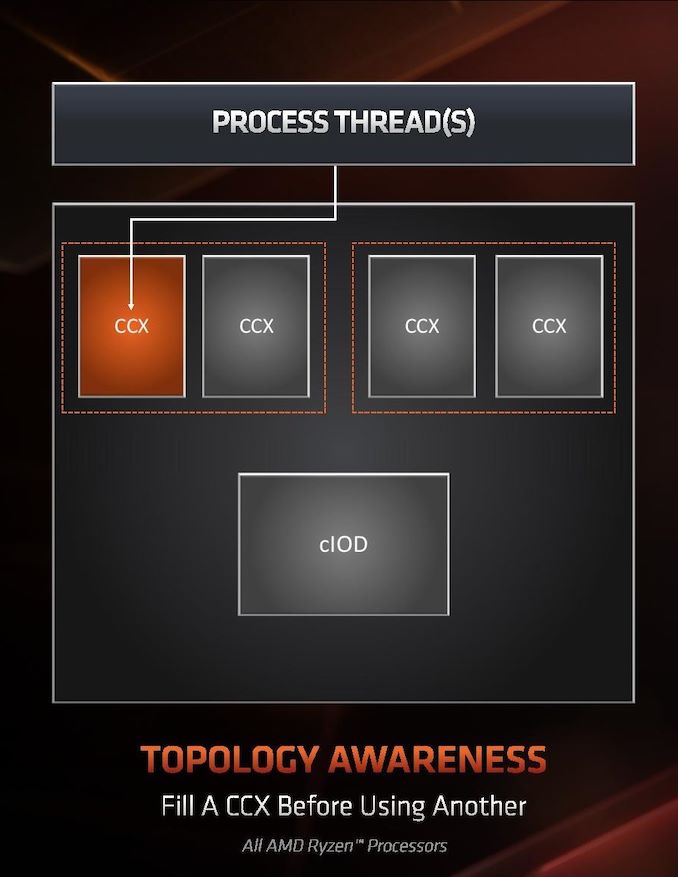

The first is thread allocation. When a processor has different ‘groups’ of CPU cores, there are different ways in which threads can be allocated, each with its own pros and cons. The two extremes for thread allocation are thread grouping and thread expansion.

Thread grouping means that, as new threads are spawned, they are allocated onto cores directly next to cores that already have threads. This keeps the threads close together for thread-to-thread communication; however, it can create regions of high power density, especially when there are many cores on the processor but only a couple are active.

Thread expansion means that threads are placed on cores as far away from each other as possible. In AMD’s case, this would mean a second thread spawning on a different chiplet, or on a different core complex (CCX), as far away as possible. By avoiding regions of high power density, this typically lets the CPU sustain the best turbo performance across multiple threads.
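To make the difference between the two policies concrete, here is a toy sketch in Python. It is not how the Windows scheduler is implemented: it assumes a hypothetical two-chiplet part with two four-core CCXs per chiplet, ignores SMT, and the names `place_grouped`, `place_expanded` and `ccxs_used` are illustrative only. It counts how many CCXs end up holding threads under each policy.

```python
from dataclasses import dataclass

# Hypothetical topology: 2 chiplets x 2 CCXs x 4 cores = 16 cores (SMT ignored).
CHIPLETS, CCX_PER_CHIPLET, CORES_PER_CCX = 2, 2, 4


@dataclass(frozen=True)
class Core:
    chiplet: int
    ccx: int   # CCX index within the chiplet
    core: int  # core index within the CCX


def all_cores() -> list[Core]:
    """Enumerate cores in physical order: chiplet, then CCX, then core."""
    return [Core(d, x, c)
            for d in range(CHIPLETS)
            for x in range(CCX_PER_CHIPLET)
            for c in range(CORES_PER_CCX)]


def place_grouped(n_threads: int) -> list[Core]:
    """Thread grouping: fill each CCX completely before touching the next."""
    return all_cores()[:n_threads]


def place_expanded(n_threads: int) -> list[Core]:
    """Thread expansion: alternate chiplets first, then CCXs, so consecutive
    threads land as far apart as possible."""
    order = [Core(d, x, c)
             for c in range(CORES_PER_CCX)
             for x in range(CCX_PER_CHIPLET)
             for d in range(CHIPLETS)]
    return order[:n_threads]


def ccxs_used(cores: list[Core]) -> int:
    """Number of distinct CCXs holding at least one thread."""
    return len({(c.chiplet, c.ccx) for c in cores})


for n in (2, 4, 8):
    print(n, "threads:",
          "grouped ->", ccxs_used(place_grouped(n)), "CCX(s),",
          "expanded ->", ccxs_used(place_expanded(n)), "CCX(s)")
```

Under these assumptions, grouping keeps two or four threads inside a single CCX, while expansion sends the second thread to the other chiplet, which is the cross-die placement whose latency cost is the concern with expansion.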

The danger of thread expansion comes when a program spawns two threads that end up on opposite sides of the CPU. On Threadripper, this could even mean that the second thread landed on a part of the CPU with a long memory latency, creating an imbalance in potential performance between the two threads, even though the cores those threads were on would have been running at the higher turbo frequency.

Because modern software, and in particular video games, now spawns multiple threads rather than relying on a single thread, and those threads need to talk to each other, AMD is moving from a hybrid thread expansion technique to a thread grouping technique. This means that one CCX will fill up with threads before another CCX is even accessed. AMD believes this is still worth it for overall performance, despite the potential for high power density within one chiplet while the other sits inactive.

For Matisse (the Ryzen 3000 family), this should afford a nice improvement in limited-thread scenarios and, on the face of it, gaming. It will be interesting to see how much of an effect this has on the upcoming EPYC Rome CPUs or future Threadripper designs. The only benchmark AMD provided in its explanation was Rocket League at 1080p Low, which reported a +15% frame rate gain.

Clock Ramping

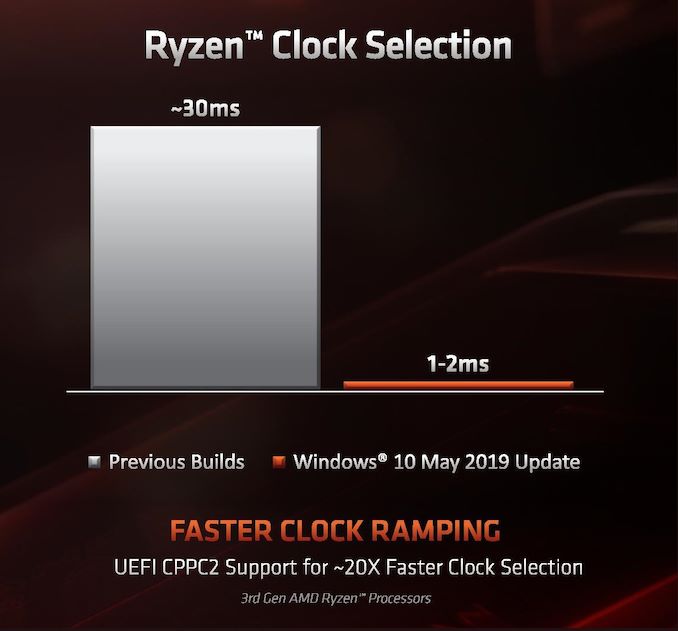

Readers familiar with our Skylake microarchitecture deep dive may remember that Intel introduced a feature called Speed Shift, which enabled the processor to move between P-states more freely and to ramp from idle to load very quickly: from 100 ms to 40 ms in the first version on Skylake, then down to 15 ms with Kaby Lake. It did this by handing P-state control back from the OS to the processor, which reacts based on instruction throughput and requests. With Zen 2, AMD is now enabling the same kind of feature.

AMD already has finer granularity in its frequency adjustments than Intel, allowing 25 MHz steps rather than 100 MHz steps. However, enabling a faster ramp-to-load frequency jump is going to help AMD in very bursty workloads, such as WebXPRT (Intel’s favorite for this sort of demonstration). According to AMD, the Zen 2 implementation requires BIOS updates as well as the Windows 10 May 2019 Update, but it reduces frequency ramping from ~30 milliseconds on Zen to ~1-2 milliseconds on Zen 2. It should be noted that this is much faster than the numbers Intel tends to provide.
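A rough way to see why ramp time matters for bursty workloads is a toy model in which the clock rises linearly from an idle frequency to a boost frequency over the ramp time. The linear ramp, the 2.0/4.0 GHz figures and the 20 ms burst length are assumptions chosen for illustration, not AMD or Intel data; only the ~30 ms and ~2 ms ramp times come from the text. The second helper shows what 25 MHz versus 100 MHz granularity means for a requested frequency.

```python
def cycles_in_burst(burst_ms: float, ramp_ms: float,
                    f_idle_ghz: float, f_boost_ghz: float) -> float:
    """Millions of cycles executed during a burst (GHz * ms = 1e6 cycles).

    Toy model (assumption): the clock rises linearly from f_idle to f_boost
    over ramp_ms, then holds at f_boost. Real P-state transitions differ.
    """
    if ramp_ms <= 0:
        return burst_ms * f_boost_ghz
    ramp = min(burst_ms, ramp_ms)
    # Frequency reached when the ramp completes or the burst ends, if sooner.
    f_end = f_idle_ghz + (f_boost_ghz - f_idle_ghz) * (ramp / ramp_ms)
    ramp_cycles = ramp * (f_idle_ghz + f_end) / 2  # trapezoid under the ramp
    hold_cycles = (burst_ms - ramp) * f_boost_ghz
    return ramp_cycles + hold_cycles


def quantize_mhz(target_mhz: float, step_mhz: int) -> int:
    """Highest frequency on a step_mhz grid that does not exceed target_mhz."""
    return int(target_mhz // step_mhz) * step_mhz


BURST_MS, F_IDLE, F_BOOST = 20.0, 2.0, 4.0  # hypothetical values
ideal = BURST_MS * F_BOOST
for label, ramp in (("Zen (~30 ms ramp)", 30.0), ("Zen 2 (~2 ms ramp)", 2.0)):
    done = cycles_in_burst(BURST_MS, ramp, F_IDLE, F_BOOST)
    print(f"{label}: {done / ideal:.1%} of the work an instant ramp would do")

for step in (25, 100):
    print(f"{step} MHz steps: a 4337 MHz request lands at "
          f"{quantize_mhz(4337, step)} MHz")
```

In this toy model the slow ramp never reaches full boost inside a 20 ms burst, which is the intuition behind AMD's claim that faster ramping helps burst-driven workloads.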

AMD’s implementation is built on CPPC2, or Collaborative Processor Performance Control 2, and AMD’s metrics state that it can improve performance in burst workloads and in application loading. AMD cites a +6% gain in application launch performance using PCMark10’s app launch sub-test.
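The "collaborative" part of CPPC can be sketched as a split of responsibilities: the OS sets bounds on an abstract, unitless performance scale, and the hardware picks a level inside those bounds on its own. The sketch below is a simplified illustration, not AMD's or Microsoft's implementation; the performance numbers and the utilization-based selection rule are hypothetical, and the ACPI Desired Performance and Energy Performance Preference hints are deliberately left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CppcCaps:
    """Abstract performance range a platform advertises (illustrative values)."""
    lowest: int
    highest: int


@dataclass(frozen=True)
class OsLimits:
    """Bounds written by the OS; the hardware may choose anything inside them."""
    min_perf: int
    max_perf: int


def hw_select(caps: CppcCaps, limits: OsLimits, utilization: float) -> int:
    """Hypothetical hardware policy: scale linearly with utilization (0.0-1.0)
    across the advertised range, then clamp to the OS-provided bounds."""
    utilization = max(0.0, min(1.0, utilization))
    raw = caps.lowest + utilization * (caps.highest - caps.lowest)
    return max(limits.min_perf, min(limits.max_perf, round(raw)))


caps = CppcCaps(lowest=30, highest=120)         # made-up abstract levels
balanced = OsLimits(min_perf=30, max_perf=120)  # OS leaves the full range open
capped = OsLimits(min_perf=30, max_perf=100)    # e.g. a power-saving policy

for util in (0.1, 0.5, 1.0):
    print(util, hw_select(caps, balanced, util), hw_select(caps, capped, util))
```

The OS still decides the envelope, but the moment-to-moment choice stays with the processor, which is the general idea behind handing P-state control back to the hardware.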

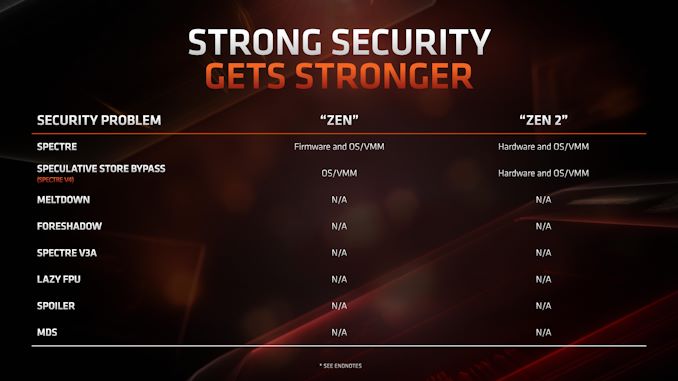

Hardened Security for Zen 2

Another aspect of Zen 2 is AMD’s approach to the heightened security requirements of modern processors. As has been reported, many of the recent side-channel exploits do not affect AMD processors, primarily because of how AMD manages its TLBs, which have always required additional security checks, since before most of this became an issue. Nonetheless, for the issues to which AMD is vulnerable, it has implemented a full hardware-based security platform to address them.

The change here concerns Speculative Store Bypass, known as Spectre v4: AMD now has additional hardware that works in conjunction with the OS, or with virtual machine managers such as hypervisors, to control it. AMD doesn’t expect any performance change from these updates. Newer issues such as Foreshadow and ZombieLoad do not affect AMD processors.

216 Comments


Targon - Thursday, June 13, 2019

The TDP figures are always a bit vague, because TDP is about heat generation, not power draw. A higher TDP on a chip with the same number of cores on the same design could indicate that it will overclock higher. Intel always sets the TDP at the base clock speed, while AMD has been more about what can be expected in normal usage. The higher the clock speed, the more power will be required, and the more heat the cooler will need to handle. So, if a chip has a TDP of 105W, then in theory you should be able to get away with a cooler that can handle 105W of heat output, but if that TDP is based only on the base clock speed, you will want a better cooler to allow for turbo/boost for sustained periods.

wilsonkf - Monday, June 10, 2019

We want faster memory for Zen/Zen+ because we want a higher IF clock, so cutting the IF clock in half to enable higher memory frequencies does not make sense. However, the improved IF could move the bottleneck somewhere else.

AlexDaum - Tuesday, June 11, 2019

It seems like IF2 cannot hit frequencies higher than about DDR4-3733 (so roughly 1.87 GHz real frequency) for some reason, so they added the ability to scale it down to allow higher memory clocks. But it is probably only worth it if you can overclock memory a lot higher than 3733, so that the IF clock gets a bit higher again.

Xyler94 - Tuesday, June 11, 2019

If I recall, IF2's clock speed is decoupled from RAM speed.

Cooe - Tuesday, June 11, 2019

This is wrong, Xyler. Still completely connected.

Xyler94 - Thursday, June 13, 2019

Per this exact article: "One of the features of IF2 is that the clock has been decoupled from the main DRAM clock. In Zen and Zen+, the IF frequency was coupled to the DRAM frequency, which led to some interesting scenarios where the memory could go a lot faster but the limitations in the IF meant that they were both limited by the lock-step nature of the clock. For Zen 2, AMD has introduced ratios to the IF2, enabling a 1:1 normal ratio or a 2:1 ratio that reduces the IF2 clock in half."

It seems it has been, but it may still benefit from faster RAM.
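The arithmetic behind this part of the thread is simple if you follow the simplified 1:1 / 2:1 description in the quoted passage: DDR memory transfers twice per clock, so DDR4-3733 corresponds to a memory clock of about 1866 MHz, and the 2:1 mode halves the fabric clock again. This Python sketch (function names are illustrative) compares a few memory speeds; it models only the single "IF clock" the quote talks about.

```python
def memclk_mhz(ddr_rate_mts: float) -> float:
    """DDR transfers twice per clock, so the memory clock is half the data rate."""
    return ddr_rate_mts / 2


def fabric_mhz(ddr_rate_mts: float, ratio: int) -> float:
    """IF clock under the 1:1 or 2:1 modes described in the quoted passage."""
    if ratio not in (1, 2):
        raise ValueError("only the 1:1 and 2:1 ratios are modelled")
    return memclk_mhz(ddr_rate_mts) / ratio


for rate, ratio in ((3200, 1), (3733, 1), (4400, 2), (5000, 2)):
    print(f"DDR4-{rate} at {ratio}:1 -> IF clock ~{fabric_mhz(rate, ratio):.0f} MHz")
```

Under this model, even DDR4-5000 in 2:1 mode leaves the fabric well below DDR4-3733 at 1:1, which is why the 2:1 mode is framed in the thread as a bandwidth option rather than a latency one.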

extide - Monday, June 17, 2019

It is completely connected: you can now pick a 1:1 or 2:1 divider, but they are absolutely still tightly coupled. You can't just set them independently.

Cooe - Tuesday, June 11, 2019

You're missing the point of overclocking memory past 3733 MHz, where the IF switches to a 2:1 divider. It's for workloads that highly prioritize memory bandwidth over latency, NOT for trying to run your sticks 24/7 at something like 5 GHz+ for the absolute lowest latency possible (because even then, 3733 MHz will probably still be lower).

Targon - Thursday, June 13, 2019

From what I remember, up to DDR4-3733, Infinity Fabric on Ryzen 3rd generation now runs at 1:1 (where previously, Infinity Fabric would run at half the DDR4 speed). You can go above that, but then the improvements are not going to be as significant. For latency, your best bet is to get 3733 or 3600 memory with as low a CAS rating as you can get.

zodiacfml - Tuesday, June 11, 2019

That 105W TDP is a sign that the 8-core is efficient at 50W, or at a base clock of 3.5 GHz. The AMD 7nm 8-core Zen 2 chip has a TDP equal to or less than my i3-8100. 😅