AMD Zen 2 Microarchitecture Analysis: Ryzen 3000 and EPYC Rome

by Dr. Ian Cutress on June 10, 2019 7:22 PM EST. Posted in: CPUs, AMD, Ryzen, EPYC, Infinity Fabric, PCIe 4.0, Zen 2, Rome, Ryzen 3000, Ryzen 3rd Gen

New Instructions

Cache and Memory Bandwidth QoS Control

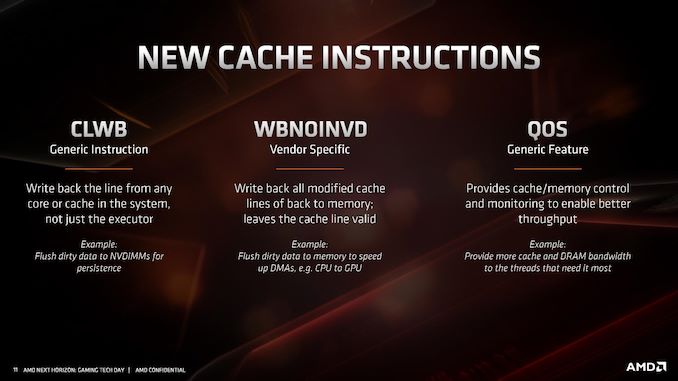

As with most new x86 microarchitectures, there is a drive to increase performance through new instructions, and also to reach parity with other vendors in which instructions are supported. For Zen 2, while AMD is not catering to some of the more exotic instruction sets that Intel offers, it is adding new instructions in three different areas.

The first one, CLWB, has been seen before on Intel processors in relation to non-volatile memory. This instruction lets a program write a modified cache line back to memory without necessarily evicting it from the cache, so the data has already reached non-volatile memory if the system receives a halting command and data might otherwise be lost. There are other instructions associated with securing data to non-volatile memory systems, although this wasn’t explicitly commented on by AMD. It could be an indication that AMD is looking to better support non-volatile memory hardware and structures in future designs, particularly in its EPYC processors.

The second cache instruction, WBNOINVD, builds on the existing WBINVD instruction. Where WBINVD writes every modified cache line back to memory and then invalidates the caches, WBNOINVD performs the same write-back but leaves the lines valid in the cache. Software that only needs main memory to be up to date therefore avoids the latency of refilling the cache afterwards, which helps accelerate whatever work follows.
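Support for both cache instructions is advertised through CPUID feature bits. The sketch below decodes those bits from raw register values that the caller has already read; it does not execute CPUID itself. The bit positions are the ones given in the vendors' CPUID documentation as best I know it, and the function names are hypothetical, chosen only for illustration.

```python
# Bit positions per the vendors' CPUID documentation (treat as an assumption
# and check the current manuals before relying on them).
CLWB_BIT = 24      # CPUID leaf 0x07, subleaf 0, register EBX
WBNOINVD_BIT = 9   # CPUID leaf 0x80000008, register EBX


def has_bit(register_value: int, bit: int) -> bool:
    """Return True if the given bit is set in a 32-bit register value."""
    return (register_value >> bit) & 1 == 1


def cache_writeback_support(leaf7_ebx: int, leaf80000008_ebx: int) -> dict:
    """Report CLWB and WBNOINVD support from two raw EBX values.

    The caller supplies the register contents; this helper is hypothetical
    and only does the bit decoding.
    """
    return {
        "clwb": has_bit(leaf7_ebx, CLWB_BIT),
        "wbnoinvd": has_bit(leaf80000008_ebx, WBNOINVD_BIT),
    }


if __name__ == "__main__":
    # Made-up register values with both feature bits set.
    print(cache_writeback_support(1 << 24, 1 << 9))
```

On Linux the same information is usually easier to read from the `flags` line of `/proc/cpuinfo`, where the kernel reports `clwb` and `wbnoinvd` when they are present.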

The final set of instructions, filed under QoS, actually relates to how cache and memory priorities are assigned.

When a cloud CPU is split into different containers or VMs for different customers, the level of performance is not always consistent, as it can be limited by what another VM is doing on the system. This is known as the ‘noisy neighbor’ issue: if someone else is eating all the core-to-memory bandwidth, or L3 cache, it can be very difficult for another VM on the system to get access to what it needs. As a result of that noisy neighbor, the other VM will see highly variable latency when processing its workload. Alternatively, if a mission critical VM is on a system and another VM keeps asking for resources, the mission critical one might end up missing its targets because it doesn’t have all the resources it needs.

Dealing with noisy neighbors, beyond ensuring full access to the hardware as a single user, is difficult. Most cloud providers and operations won’t even tell you if you have any neighbors, and in the event of live VM migration, those neighbors might change very frequently, so there is no guarantee of sustained performance at any time. This is where a set of dedicated QoS (Quality of Service) instructions comes in.

As with Intel’s implementation, when a series of VMs is allocated onto a system on top of a hypervisor, the hypervisor can control how much memory bandwidth and cache each VM has access to. If a mission critical 8-core VM requires access to 64 MB of L3 and at least 30 GB/s of memory bandwidth, the hypervisor can ensure that the priority VM always has access to that amount, either by removing it entirely from the pool available to other VMs, or by intelligently restricting the other VMs as the mission critical VM bursts into full access.
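To make the first of those two strategies concrete, here is a minimal bookkeeping sketch of a hypervisor carving a fixed reservation out of the pool and splitting what is left evenly among the other VMs. It is a toy model, not AMD’s or any hypervisor’s actual mechanism: the `Share` type and `partition` function are hypothetical, the 256 MB and 190 GB/s socket totals are made up for illustration, and real hardware expresses these limits in its own units rather than in megabytes and GB/s.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Share:
    """An amount of L3 cache (MB) and memory bandwidth (GB/s)."""
    l3_mb: float
    mem_gbps: float


def partition(total: Share, reserved: Share, other_vms: int) -> Share:
    """Remove the priority VM's reservation from the pool and split the
    remainder evenly across the other VMs (hypothetical policy)."""
    if other_vms <= 0:
        raise ValueError("need at least one other VM to share the remainder")
    if reserved.l3_mb > total.l3_mb or reserved.mem_gbps > total.mem_gbps:
        # A request the platform cannot satisfy is rejected outright.
        raise ValueError("reservation exceeds what the socket provides")
    return Share(
        l3_mb=(total.l3_mb - reserved.l3_mb) / other_vms,
        mem_gbps=(total.mem_gbps - reserved.mem_gbps) / other_vms,
    )


if __name__ == "__main__":
    socket_total = Share(l3_mb=256, mem_gbps=190)  # illustrative totals only
    priority_vm = Share(l3_mb=64, mem_gbps=30)     # figures from the example above
    print(partition(socket_total, priority_vm, other_vms=4))
```

With these illustrative totals, each of four remaining VMs is left with 48 MB of L3 and 40 GB/s. The second strategy, restricting the other VMs only while the priority VM bursts, would need the limits to change over time and is not modeled here.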

Intel only enables this feature on its Xeon Scalable processors; AMD, however, will enable it up and down its Zen 2 processor family, for consumers and enterprise users alike.

The immediate issue I had with this feature is on the consumer side. Imagine if a video game demands access to all the cache and all the memory bandwidth, while some streaming software gets access to none: it could cause havoc on the system. AMD explained that while individual programs can technically request a certain level of QoS, it will be up to the OS or the hypervisor to decide whether those requests are both valid and suitable. AMD sees this more as an enterprise feature used when hypervisors are in play, rather than for bare metal installations on consumer systems.

216 Comments


Thunder 57 - Sunday, June 16, 2019

It appears they traded half the L1 instruction cache to double the uop cache. They doubled the associativity to keep the same hit rate but it will hold fewer instructions. However, the micro-op cache holds already decoded instructions and if there is a hit there it saves a few stages in the pipeline for decoding, which saves power and increases performance.

phoenix_rizzen - Tuesday, June 11, 2019

From the article: "Zen 2 will offer greater than a >1.25x performance gain at the same power,"

I don't think that means what you meant. :) 1.25x gain would be 225% or over 2x the performance. I think you meant either:

"Zen 2 will offer greater than a 25% performance gain at the same power,"

or maybe:

"Zen 2 will offer greater than 125% performance at the same power,"

or possibly:

"Zen 2 will offer greater than 1.25x performance at the same power,"

phoenix_rizzen - Tuesday, June 11, 2019

From the article: "With Matisse staying in the AM4 socket, and Rome in the EPYC socket,"

The server socket name is SP3, not EPYC, so this should read:

"With Matisse staying in the AM4 socket, and Rome in the SP3 socket,"

phoenix_rizzen - Tuesday, June 11, 2019

From the article: "This also becomes somewhat complicated for single core chiplet and dual core chiplet processors,"

core is superfluous here. The chiplets are up to 8-core. You probably mean "single chiplet and dual chiplet processors".

scineram - Wednesday, June 12, 2019

No, because there is no single chiplet. It is the core chiplet that is either 1 or 2 in number.

phoenix_rizzen - Tuesday, June 11, 2019

From the article: "all of this also needs to be taken into consideration as provide the optimal path for signaling"

"as" should be "to"

thesavvymage - Wednesday, June 12, 2019

A 1.25x gain is the exact same as a 25% performance gain, it doesn't mean 225% as you stated

dsplover - Tuesday, June 11, 2019

So in other words Anandtech no longer receives engineering samples but tells us what everyone else is saying. Still love coming here as reviews are good, but boy oh boy yuze guys sure slipped down the ladder.

Bring back Anand Shimpi.

Korguz - Wednesday, June 12, 2019

they do still get engineering samples... but usually cpus... not likely.. he's working for apple now....

coburn_c - Wednesday, June 12, 2019

What the heck is UEFI CPPC2?