Intel Cascade Lake Xeon W-3200 Launched: Server Socket, 64 PCIe 3.0 lanes

by Dr. Ian Cutress on June 10, 2019 8:00 AM EST

One of the quieter announcements to come out of Apple’s new Mac Pro launch was Intel’s next generation of Xeon W processors. Aside from flying completely under the radar with no formal announcement, the new line of workstation-focused CPUs brings a few quirks: they are based on Cascade Lake, they come only in the LGA3647 socket, and they have 64 available PCIe 3.0 lanes.

The current Xeon W product line, based on Skylake-SP, uses LGA2066, the common high-end desktop socket, but with a workstation-focused chipset. These processors are available with up to eighteen cores, topping out with Intel’s 18-core HCC die. Other features of these processors include quad-channel memory, support for up to 512GB of ECC memory, and up to 48 PCIe 3.0 lanes. For the next generation, Intel has bumped Xeon W up to the server socket, which affords more memory channels, higher TDPs, higher memory capacity, and a higher core count.

The new Cascade Lake-based Xeon W-3200 family will be offered with up to 28 cores, using Intel’s 28-core XCC die as the base for the high-end models. As Cascade Lake processors, the new Xeon W parts come with additional in-hardware fixes for some of the Spectre and Meltdown vulnerabilities, as on the Cascade Lake Xeon Scalable processors. Intel has also made a substantial change in how it intends to market its Xeon W processors, as the new models change socket compared to the previous generation.

Historically we have been afforded multiple generations of workstation CPUs on the same socket; this time, however, Intel has decided to forgo that approach and move Xeon W from the LGA2066 socket to the LGA3647 socket, limiting the upgrade options for users of Xeon W-2100 processors. The new socket, combined with the new silicon, means that Intel can offer new Xeon W owners more features, albeit much to the chagrin of previous Xeon W owners. This doesn’t preclude Intel from launching another set of CPUs on the LGA2066 socket for upgrades, but given the lack of fanfare for this launch, it seems unlikely that Intel has plans to do so.

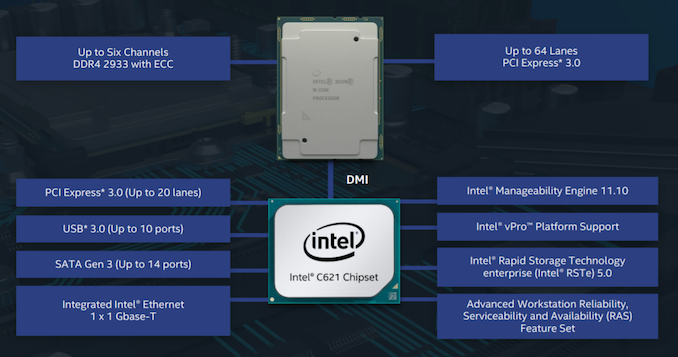

The LGA3647 socket has been used by Xeon Scalable processors since the Skylake-SP launch and affords six full DDR4 memory channels. The silicon inside the new Xeon W has six memory controllers, allowing it to make full use of this feature. (Technically the previous generation also had six memory controllers on the silicon, but the platform was limited to four, in line with Intel’s product positioning strategy.)
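
To put the move from four to six channels in numbers, here is a back-of-the-envelope sketch of theoretical peak DRAM bandwidth. It assumes each DDR4 channel is 64 bits (8 bytes) wide and, purely for comparison, runs both configurations at DDR4-2933, the speed listed for most of the new parts; the four-channel figure is a hypothetical at that speed, not a spec for any previous Xeon W. Sustained bandwidth in real workloads will be lower.

```python
# Back-of-the-envelope theoretical peak DRAM bandwidth.
# Assumption: each DDR4 channel is 64 bits (8 bytes) wide.
BYTES_PER_TRANSFER = 8


def peak_bandwidth_gbs(channels: int, mts: int) -> float:
    """Theoretical peak in GB/s (10^9 bytes/s) for `channels` channels at `mts` MT/s."""
    return channels * mts * 1_000_000 * BYTES_PER_TRANSFER / 1e9


six = peak_bandwidth_gbs(6, 2933)   # six channels, as on the new Xeon W-3200
four = peak_bandwidth_gbs(4, 2933)  # hypothetical: four channels at the same speed

print(f"6 channels: {six:.1f} GB/s")
print(f"4 channels: {four:.1f} GB/s")
print(f"ratio: {six / four:.2f}x")
```

At equal memory speed the ratio is simply 6/4, so the extra two channels are worth 50% more theoretical bandwidth.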

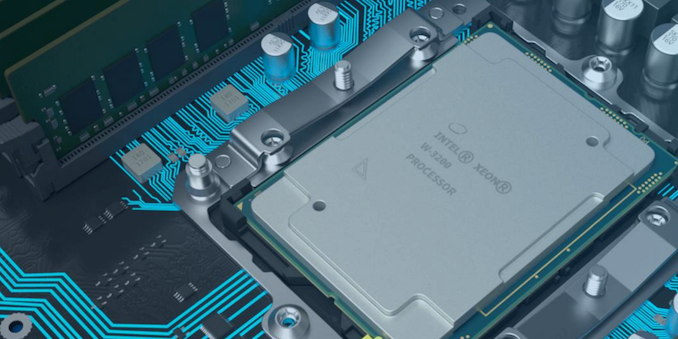

The LGA3647 system Intel sent with the last Xeon W Review Unit

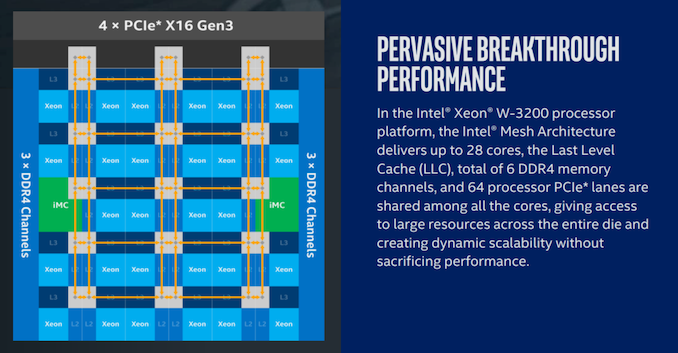

As with the previous Xeon W, the cores are arranged in a block and linked by a ‘Mesh’ interconnect, which we’ve described in detail in previous reviews. One of the additional features of the Xeon W-3200 is the upgrade to a full 64 PCIe 3.0 lanes available for PCIe slots, up from the previous 48. This also makes the Cascade Lake W-3200 family the x86 product with the most PCIe lanes that Intel offers.

This is a bit of a technicality: previous Xeon SP and Xeon W processors did actually have 64 lanes on the silicon, but 16 of them were reserved for on-package chips such as OmniPath. What Intel has done here is re-route them through the socket pins instead, allowing motherboard manufacturers to design a full x16/x16/x16/x16 layout or variants thereof. This also means that the LGA3647 socket already had pins ready to be allocated to these PCIe lanes, so it would not surprise me if Intel had already sold custom 64-lane versions to customers since the original Skylake-SP launch.
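
The lane arithmetic is easy to check. The sketch below uses a hypothetical helper, `fits_lane_budget`, to test whether a slot layout fits within the lanes exposed through the socket: 48 on the previous Xeon W (64 on the silicon minus the 16 reserved for on-package chips) versus all 64 on the W-3200. The two layouts are illustrative examples, not specific motherboard designs.

```python
# Lanes on the silicon, and how many were previously held back for on-package chips.
DIE_LANES = 64
RESERVED_PREVIOUS = 16

# Lanes exposed through the socket for each platform.
SOCKET_LANES = {
    "previous Xeon W (LGA2066)": DIE_LANES - RESERVED_PREVIOUS,  # 48
    "Xeon W-3200 (LGA3647)": DIE_LANES,                          # 64
}


def fits_lane_budget(slot_widths: list[int], budget: int) -> bool:
    """Hypothetical helper: True if the slot widths need no more than `budget` lanes."""
    return sum(slot_widths) <= budget


# Illustrative slot layouts (widths in lanes).
layouts = {
    "x16/x16/x16/x16": [16, 16, 16, 16],
    "x16/x16/x8/x8/x8/x8": [16, 16, 8, 8, 8, 8],
}

for platform, budget in SOCKET_LANES.items():
    for name, widths in layouts.items():
        ok = fits_lane_budget(widths, budget)
        print(f"{platform}: {name} ({sum(widths)} lanes) fits = {ok}")
```

Both example layouts use exactly 64 lanes, so they only fit once the 16 previously reserved lanes are routed out through the socket.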

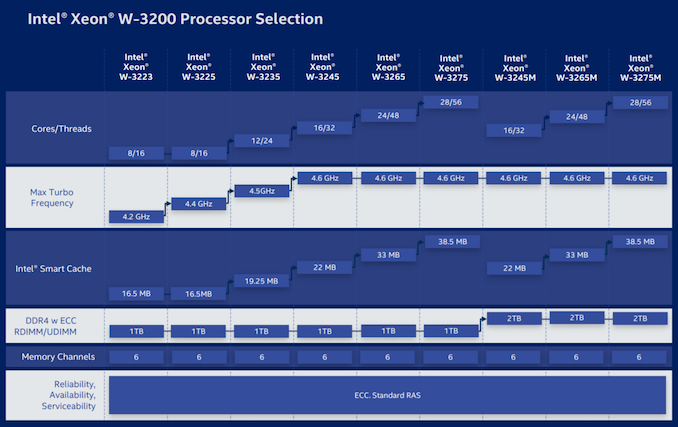

Here are the nine new CPUs:

Intel Cascade Lake Xeon W-3200 Family

| Model | Cores / Threads | TDP | Base Freq | Turbo 2.0 | Turbo Max 3.0 | Max DRAM | Price (USD) |
|---|---|---|---|---|---|---|---|
| W-3275M | 28C / 56T | 205 W | 2.5 GHz | 4.4 GHz | 4.6 GHz | 2 TiB | $7453 |
| W-3275 | 28C / 56T | 205 W | 2.5 GHz | 4.4 GHz | 4.6 GHz | 1 TiB | $4449 |
| W-3265M | 24C / 48T | 205 W | 2.7 GHz | 4.4 GHz | 4.6 GHz | 2 TiB | $6353 |
| W-3265 | 24C / 48T | 205 W | 2.7 GHz | 4.4 GHz | 4.6 GHz | 1 TiB | $3349 |
| W-3245M | 16C / 32T | 205 W | 3.2 GHz | 4.4 GHz | 4.6 GHz | 2 TiB | $5002 |
| W-3245 | 16C / 32T | 205 W | 3.2 GHz | 4.4 GHz | 4.6 GHz | 1 TiB | $1999 |
| W-3235 | 12C / 24T | 180 W | 3.3 GHz | 4.4 GHz | 4.5 GHz | 1 TiB | $1398 |
| W-3225 | 8C / 16T | 160 W | 3.7 GHz | 4.3 GHz | 4.4 GHz | 1 TiB | $1199 |
| W-3223 | 8C / 16T | 160 W | 3.5 GHz | 4.0 GHz | 4.2 GHz | 1 TiB | $749 |
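
The list prices in the table make it easy to see what the higher memory capacity costs. This short sketch pairs each 2 TiB ‘M’ model with the 1 TiB model of the same name and prints the price gap; the pairing by model name is the only assumption, and the prices are copied from the table.

```python
# List prices (USD) for the paired models, copied from the table.
PRICES = {
    "W-3275M": 7453, "W-3275": 4449,
    "W-3265M": 6353, "W-3265": 3349,
    "W-3245M": 5002, "W-3245": 1999,
}


def memory_premiums(prices: dict[str, int]) -> dict[str, int]:
    """Price gap between each 'M' model and the model with the same name minus the 'M'."""
    return {
        sku: price - prices[sku[:-1]]
        for sku, price in prices.items()
        if sku.endswith("M") and sku[:-1] in prices
    }


for sku, premium in memory_premiums(PRICES).items():
    print(f"{sku}: +${premium}")
```

Each gap works out to just over $3000, regardless of core count.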

Each CPU also supports AVX-512, with two FMA units on every model. Memory support for all CPUs is listed as six channels of DDR4-2933 (except the 8-core parts, which are DDR4-2666), with a base support of 1 TiB of memory that rises to 2 TiB for the higher memory ‘M’ models (for an additional $3000). This also marks the first time Intel has offered higher memory support SKUs for Xeon W, although it should be noted that Intel seems relatively happy these days to support unbalanced memory configurations. For example, 1 TiB split across 12 memory slots means an arrangement of 8 x 64 GiB + 4 x 128 GiB modules, while 2 TiB means 8 x 128 GiB + 4 x 256 GiB.
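
The unbalanced arrangements in that example can be checked with a few lines of arithmetic. The sketch assumes 12 DIMM slots (six channels with two DIMMs each, matching the 12 slots in the example) and uses a hypothetical `Population` mapping of module size in GiB to module count.

```python
# Hypothetical representation of a DIMM population: {module size in GiB: module count}.
Population = dict[int, int]

SLOTS = 12  # assumption: six channels x two DIMMs per channel
GIB_PER_TIB = 1024


def total_gib(population: Population) -> int:
    """Total installed capacity in GiB."""
    return sum(size * count for size, count in population.items())


def check(population: Population, target_tib: int) -> bool:
    """True if the arrangement fills every slot and reaches the target capacity."""
    return (sum(population.values()) == SLOTS
            and total_gib(population) == target_tib * GIB_PER_TIB)


one_tib = {64: 8, 128: 4}   # 8 x 64 GiB + 4 x 128 GiB
two_tib = {128: 8, 256: 4}  # 8 x 128 GiB + 4 x 256 GiB

for name, pop, target in [("1 TiB", one_tib, 1), ("2 TiB", two_tib, 2)]:
    print(f"{name}: {total_gib(pop)} GiB across {sum(pop.values())} modules, "
          f"ok = {check(pop, target)}")
```

In both cases the eight smaller modules and the four larger modules each contribute half of the total, which is why mixing module sizes is needed to hit an exact power-of-two capacity with 12 slots.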

Another feature making its way onto these CPUs is Turbo Boost Max 3.0, an additional turbo mode on each CPU that applies when conditions allow. It should be pointed out that, in line with other workstation CPUs, these models are built only for single-socket systems.

One interesting feature missing from these CPUs is Optane DC Persistent Memory support. Intel had stated at its Investor Day presentations that Optane DC Persistent Memory support was coming to the workstation processor line by the end of the year. This means that either Intel is planning another Xeon W update between now and Q1 2020, or this feature may be BIOS enabled later in its lifecycle. The other alternative is that Intel will offer Optane capable custom SKUs for specific customers.

Intel’s new Cascade Lake Xeon W processor line is currently available in the new Apple Mac Pro. We expect it to filter down into other platforms over the next two quarters, and perhaps become more widely available at retail.


56 Comments


Kevin G - Monday, June 10, 2019

Last I checked, Threadripper is only at 64 PCIe 3.0 lanes (four of which go to the chipset) and quad memory channel support.

MFinn3333 - Monday, June 10, 2019

/me checks the PCIe count of EPYC... “It is still 128 people!”

mode_13h - Tuesday, June 11, 2019

ThreadRipper offers higher clocks than EPYC, because workstation users still care about single-thread performance, while server is mostly about aggregate throughput and power-efficiency.

So, I would put ThreadRipper up against Xeon W - not EPYC.

azfacea - Monday, June 10, 2019

you are comparing intel CPU from mac pro last week to thread ripper from 2 years ago, intel marketing might want to hire you.

rome is 128 lanes pcie4

Kevin G - Monday, June 10, 2019

AMD updated Threadripper roughly a year ago to bring it up to 32 cores. AMD has plans to update it later this year. And yes, Intel is playing catch up here and will likely match what AMD has had on the market for the better part of a year now until AMD's impending update at the end of this year.

Rome is targeted as a server part in particular due to its support of two sockets and a few other RAS features. Both Threadripper and these Xeon W's are single socket only. And yes, workstation Epyc boards exist just like workstation Xeon SP boards do as well that serve the high end of the workstation market.

PS: Rome is actually 130 PCIe lanes. AMD added two more by vendor request for IPMI so they don't have to sacrifice lane count for ordinary peripherals.

damianrobertjones - Thursday, June 13, 2019

Logitech and Microsoft sell a range of affordable keyboards with working 'shift' keys. Capitals can be your friend.

mode_13h - Tuesday, June 11, 2019

I don't feel like they really need more than 2066, in the HEDT space. For customers going beyond that, they can move into Intel's Xeon line. ThreadRipper competes with both, because unlike Intel, AMD didn't disable ECC support to make a lower-priced model line.

abufrejoval - Monday, June 10, 2019

Looks like this could have been shorter:

1. Intel is now selling server SKUs configured for higher TDP as workstations

2. Trying to offer a $7500 alternative to the current 32 core ThreadRipper at $1800 vis-à-vis the Rome competition to come in a month or so

Should be interesting to see if AMD responds by also offering some higher clock Epycs to deliver 8 memory channels to those who need more bandwidth or capacity than TR can provide.

NV-RAM would be really nice to have, preferably at lower prices, higher density and DRAM type endurance. I wish I knew whether Epyc & cousins include all the instruction set modifications and memory controller logic that's required to support NV-DIMMs like Optane.

Kevin G - Monday, June 10, 2019

1) This has been common practice in the market historically. The main difference has been the IO selection, which has favored more graphics and audio, where there traditionally has been little need on the server side.

2a) This is Intel market segmentation at its finest. Artificially limiting memory capacity is just a stupid move, especially at this time given their competition. Intel can't uncap the Xeon W's, as the Xeon SP's all have the memory limitation and this would severely undercut them in price. If anything, they should have segmented on Optane support and left ordinary DRAM capacity unchecked.

2b) AMD's update to Threadripper is expected in Q4 this year. It looks like AMD has had significant orders placed for Epyc, so it appears that all dies with 8 usable cores are headed that way. There are widespread rumors that AMD has a 16 core desktop chip waiting for release, and that the reason it is being held back is that they can't spare any 8 core dies yet.

2c) AMD might be holding off on a Threadripper update because it is producing yet another IO die. They have an embedded market to serve with quad channel memory parts, but using the eight channel Epyc IO die does not seem appropriate for it. If AMD is going this route, holding off on Threadripper updates for it would make sense.

jamescox - Monday, June 10, 2019

The 8 core desktop part is assumed to be a single cpu chiplet. It could be one with bad power consumption characteristics though. The best power consumption die will go to Epyc. They can use all kinds of different chiplets in Epyc, with 1, 2, 3, or 4 cores active per CCX. A 1 core per CCX part (16 cores) would be a strange chip, but the massive per core cache could be useful in some applications. They could also disable entire CCXs for a 32 core part. The main concern is power consumption, so Epyc will get the best bins for those. The 16 core desktop part will need to be a relatively good power consumption bin though, so that may be in short supply and/or expensive.

I have wondered if they put some extra links on the desktop IO die to allow them to use two small IO die and 4 cpu chiplets for ThreadRipper. That would allow relatively cheap ThreadRipper processors up to 32 core before switching to the expensive Epyc IO die for higher core count.