Intel's Xeon Cascade Lake vs. NVIDIA Turing: An Analysis in AI

by Johan De Gelas on July 29, 2019 8:30 AM EST

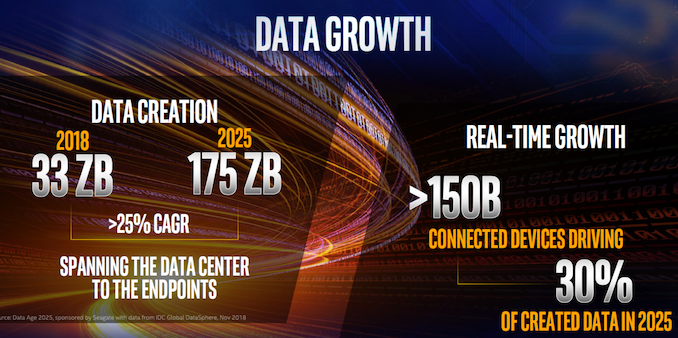

It seems like the new motto for Silicon Valley for the last few years has been “Data is the new oil,” and for good reason. The number of companies employing machine learning-based AI technologies has exploded, and even a few years after all of this has kicked off in earnest, those numbers continue to grow. This form of AI is no longer just an academic thesis or curious research project, but instead machine learning has become an important part of the enterprise market, and the impact on enterprise hardware – both purchasing and development – would be difficult to overstate. This is the era of AI.

At first sight, the hardware choices for these kinds of applications seem simple: Intel Xeon CPUs for storing and preprocessing data, NVIDIA GPUs for (almost) everything AI. And indeed, this has largely been the case for the last few years now. However, NVIDIA’s competitors have not been standing idly by the entire time – and that especially goes for Intel, whose enterprise market share all of this ultimately threatens. With everything from dedicated low-power inference processors to purpose-optimized Xeons, Intel is taking aim at every level of the AI market. The net result is that between all of these competitors, we’re seeing AI tackled from many different directions, and the hardware battle for the AI era is insanely interesting in our humble opinion.

Today we’re taking a look at what’s perhaps the heart of Intel’s hardware in the AI space, Intel’s second-generation Xeon Scalable processors, better known as "Cascade Lake". Introduced a bit earlier this year, these new processors are still based on the same core Skylake architecture as the first-generation products, but incorporate a number of new instructions to speed up AI performance.

And as far as new technology goes, this is certainly the most interesting aspect of Cascade Lake. While we could talk about the three to six percent general CPU performance improvement, the 56 cores of Intel’s most expensive processor ever, and the "world record benchmarks," these small improvements are close to irrelevant for the near and mid-term future of the IT world. Just look at the very first slide of the Intel press & analyst briefing.

Internet of things, data engineering, and AI. That is where a large part of the growth, the innovation, and the future of IT will be. And this is where Intel wants to be.

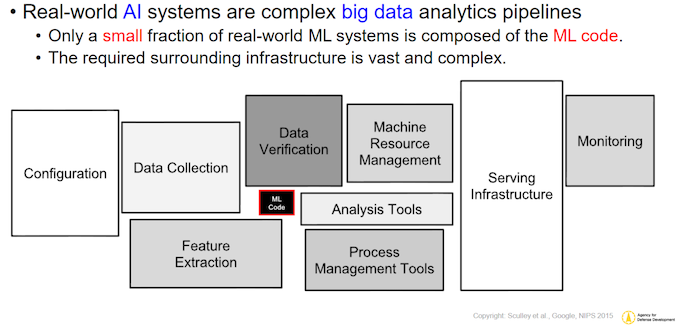

Right now, NVIDIA has a virtual monopoly on the “sexiest” part of this market, which is deep learning and “massively parallel HPC” software. Thanks to a confluence of factors on the hardware and software sides, most of this software is run on NVIDIA GPUs and clusters. So to the general public, it looks like NVIDIA owns the “AI market”, a picture that is not inaccurate, but also not complete. There’s a lot more to the AI market than just neural network inferencing, and in particular, everything that has to happen to feed the AI model with data gets very little attention. As a result, it’s neural networks and Terminator robots that get all the headlines, even though they’re just part of the picture. In reality, the processing web for AI applications is much more like the picture below.

In short, actual machine learning code execution is only a very small part of the software tools necessary to build an AI application.

Before you can even start, you have to ingest data, decompress, filter, reorder, map, and shuffle it around. Once everything is sorted and shuffled, you have to aggregate the data. As ML algorithms need large amounts of data to produce good predictions, that can be very processing- and memory-intensive. Why? Let us delve a little deeper.
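To make the preprocessing steps above a little more concrete, here is a minimal sketch of such a pipeline using only the Python standard library. The sensor data, the CSV schema, and the function names (`make_compressed_input`, `preprocess`, `aggregate`) are all hypothetical and chosen purely for illustration; real pipelines typically rely on distributed frameworks and far larger datasets.

```python
import gzip
import random
from collections import defaultdict


def make_compressed_input():
    """Stand-in for ingested data: gzip-compressed CSV rows (made-up schema)."""
    rows = ["sensor_a,3.0", "sensor_b,-1.0", "sensor_a,5.0", "sensor_b,4.0", "bad_row"]
    return gzip.compress("\n".join(rows).encode("utf-8"))


def preprocess(blob, seed=0):
    """Decompress, filter, map, reorder, and shuffle the raw rows."""
    # Decompress the ingested bytes back into text lines.
    lines = gzip.decompress(blob).decode("utf-8").splitlines()

    # Filter: drop malformed rows that do not have exactly two fields.
    fields = [line.split(",") for line in lines]
    fields = [f for f in fields if len(f) == 2]

    # Map: turn each row into a typed (name, value) record.
    records = [(name, float(value)) for name, value in fields]

    # Filter again: discard negative readings (an arbitrary example rule).
    records = [r for r in records if r[1] >= 0]

    # Reorder: sort by sensor name so related records sit together.
    records.sort(key=lambda r: r[0])

    # Shuffle: randomize the order, as is commonly done before training.
    random.Random(seed).shuffle(records)
    return records


def aggregate(records):
    """Aggregate: compute the mean value per sensor."""
    sums = defaultdict(float)
    counts = defaultdict(int)
    for name, value in records:
        sums[name] += value
        counts[name] += 1
    return {name: sums[name] / counts[name] for name in sums}


if __name__ == "__main__":
    print(aggregate(preprocess(make_compressed_input())))
```

Note that every stage in this sketch walks over the entire dataset and keeps it in memory, which hints at why these steps become processing- and memory-intensive once the data grows to realistic sizes.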

56 Comments


Drumsticks - Monday, July 29, 2019 - link

It's an interesting, valuable take on the challenges of responding to many of the ML workloads of today with a general purpose CPU, thanks! A third party review of Intel's latest against Nvidia, and even throwing AMD in to the mix, is pretty helpful as the two companies have been going at it for a while now.

Intel has a lot of stuff going that should make the next few years quite interesting. If they manage to follow through on the Nervana Coprocessor/NNP-I that Toms talked about, or on their discrete GPUs, they'll have a potent lineup. The execution definitely isn't guaranteed, especially given the software reliance these products will have, but if Intel really can manage to transform their product stack, and do it in the next few years, they'll be well on their way to competing in a much larger market, and defending their current one.

OTOH, if they fail with all of them, it'll definitely be bad news for their future. They obviously won't go bankrupt (they'll continue to be larger than AMD for the foreseeable future), but it'll be exponentially harder if not impossible to get back into those markets they missed.

JohanAnandtech - Monday, July 29, 2019 - link

Thanks! Indeed, Nervana coprocessors are indeed Intel's most promising technology in this area.

p1esk - Monday, July 29, 2019 - link

No one in their right mind would think "gee, should I get CPU or GPU for my DL app?" More concerning for Intel should be the fact that I bought a Threadripper for my latest DL build.

Smell This - Monday, July 29, 2019 - link

You gotta Radeon VII ?

I'm thinking Intel, and to a lesser extent, nVidia, is waiting for the next shoe(s) to drop in **Big Compute** --- Cascade Lake has been left at the starting gate.

An AMD Radeon Instinct 'cluster' on a dense specialized 'chiplet' server with hundreds of CPU cores/threads is where this train is headed ...

JohanAnandtech - Monday, July 29, 2019 - link

Spinning up a GPU based instance on Amazon is much more expensive than a CPU one. So for development purposes, this question is asked.

p1esk - Tuesday, July 30, 2019 - link

Then you should be answering precisely that question: which instance should I spin up? Your article does not help with that because the CPU you test is more expensive than the GPU.

JohnnyClueless - Monday, July 29, 2019 - link

Really surprised Intel, and to a lesser extent AMD, are even trying to fight this battle with nVidia on these terms. It’s a lot like going to a gun fight and developing an extra sharp samurai sword rather than bringing the usual switchblade knife. The sword may be awesome, but it’s always going to be the wrong tool for the gun fight.

IMO, a better approach to capture market share in DL/AI/HPC might be to develop a low core count (by 2019 standards) CPU that excelled at sequential single threaded performance. Something like 6-10 GHz. That would provide a huge and tangible boost to any workload that is at least partially single core frequency limited, and that is most DL/AI/HPC workloads. Leave the parallel computing to chips and devices designed to excel at such workloads!

Eris_Floralia - Monday, July 29, 2019 - link

Still living in early 2000s?

FunBunny2 - Monday, July 29, 2019 - link

"Something like 6-10 GHz."

IIRC, all the chip tried to get near that, but couldn't. it's not nice to fool Mother Nature.

Santoval - Monday, July 29, 2019 - link

"Something like 6-10 GHz."

Google "Dennard scaling" (which ended in ~2005) to find out why this is impossible, at least with silicon based MOSFET transistors (including the GAA-FET based ones of the next decade). Wikipedia has a very informative page with multiple links to various sources for even more. The gist of the end of Dennard scaling is that single core clocks higher than ~5 GHz (at a reasonable TDP of up to ~100W) are explicitly forbidden at *any* node.

When Dennard scaling ended -in combination with the slowing down of Moore's Law- there was another, related consequence : Koomey's law started to slow down. Koomey's law is all about power efficiency, i.e. how many computations you can extract from each Wh or kWh.

Before the early 2000s the number of computations per x unit of energy doubled on average every 1.57 years. In 2011 Koomey himself re-evaluated his law and got an average doubling of computations every 2.6 years for the previous decade, a substantial collapse of power efficiency. Since 2011 Koomey's law has obviously slowed down further.

To make a long story short Moore's law puts a limit to the number of transistors we can fit in each mm^2, and that limit is not too far away. Dennard scaling once allowed us to raise clocks with each new node at the same TDP, and this is ancient history in computing terms. Koomey's law, finally, puts a limit to the power efficiency of our CPUs/GPUs, and this continues to slow down due to the slowing down of Moore's Law (when Moore's Law ends Koomey's law will also end, thus all three fundamental computing laws will be "dead").

Unless we ditch silicon (and even CMOS transistors, if required) and adopt a new computing paradigm we will have neither 6 - 10 GHz clocked CPUs in a couple of decades nor will we be able to speed up CPUs, GPUs and computers at all.