The Intel Optane Memory H10 Review: QLC and Optane In One SSD

by Billy Tallis on April 22, 2019 11:50 AM EST

SSD caching has been around for a long time, as a way to reap many of the performance benefits of fast storage without completely abandoning the high capacity and lower prices of slower storage options. In recent years, the fast, small, expensive niche has been ruled by Intel's Optane products using their 3D XPoint non-volatile memory. Intel's third generation of Optane Memory SSD caching products has arrived, bringing the promise of Optane performance to a new product segment. The first Optane Memory products were tiny NVMe SSDs intended to accelerate access to larger, slower SATA drives, especially mechanical hard drives. Intel now supports using Optane Memory SSDs to cache other NVMe SSDs, with an eye toward the combination of Optane and QLC NAND flash. They've put both types of SSD onto a single M.2 module to create the new Optane Memory H10.

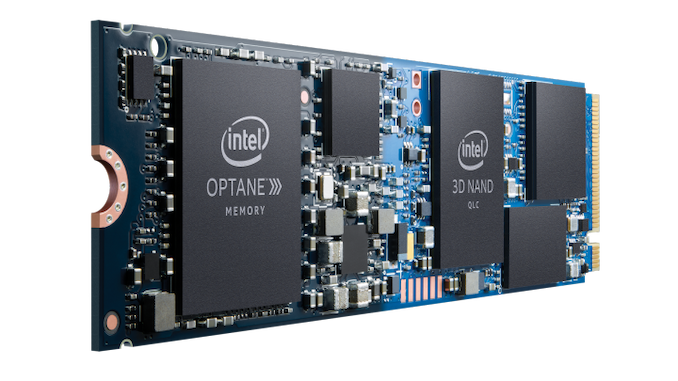

The Intel Optane Memory H10 allows Intel for the first time to put their Optane Memory caching solution into ultrabooks that only have room for one SSD, and have left SATA behind entirely. Squeezing two drives onto a single-sided 80mm long M.2 module is made possible in part by the high density of Intel's four-bit-per-cell 3D QLC NAND flash memory. Intel's 660p QLC SSD has plenty of unused PCB space on the 1TB and 512GB versions, and an Optane cache has great potential to offset the performance and endurance shortcomings of QLC NAND. Putting the two onto one module has some tradeoffs, but for the most part the design of the H10 is very straightforward.

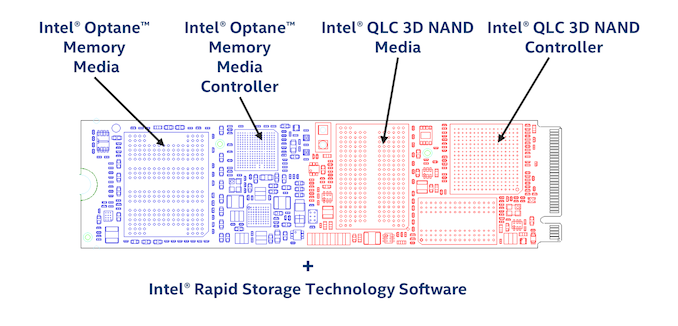

The Optane Memory H10 does not introduce any new ASICs or any hardware to make the Optane and QLC portions of the drive appear as a single device. The caching is managed entirely in software, and the host system accesses the Optane and QLC sides of the H10 independently. Each half of the drive has two PCIe lanes dedicated to it. Earlier Optane Memory SSDs have all been PCIe x2 devices so they aren't losing anything, but the Intel 660p uses a 4-lane Silicon Motion NVMe controller, which is now restricted to just two lanes. In practice, the 660p almost never needed more bandwidth than an x2 link can provide, so this isn't a significant bottleneck.
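To put the two-lane restriction in numbers, here is a small back-of-the-envelope sketch. It assumes the standard PCIe 3.0 figures of 8 GT/s per lane with 128b/130b encoding, and it ignores packet and protocol overhead, so real-world throughput will be somewhat lower than what it prints. The function name is just for illustration.

```python
# PCIe 3.0 signals at 8 GT/s per lane and uses 128b/130b line encoding.
GT_PER_S = 8e9
ENCODING_EFFICIENCY = 128 / 130


def pcie3_bandwidth_mb_s(lanes: int) -> float:
    """Raw one-direction link bandwidth in MB/s, before protocol overhead."""
    bits_per_s = GT_PER_S * ENCODING_EFFICIENCY * lanes
    return bits_per_s / 8 / 1e6


# Compare the x2 link each half of the H10 gets with a full x4 link.
for lanes in (2, 4):
    print(f"x{lanes}: {pcie3_bandwidth_mb_s(lanes):.0f} MB/s")
```

Under these assumptions each half of the H10 has a ceiling of roughly 1.97 GB/s, against roughly 3.94 GB/s for a full x4 link.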

Intel Optane Memory H10 Specifications

| Advertised Capacity | 256 GB | 512 GB | 1 TB |
|---|---|---|---|
| Form Factor | single-sided M.2 2280 | single-sided M.2 2280 | single-sided M.2 2280 |
| NAND Controller | Silicon Motion SM2263 | Silicon Motion SM2263 | Silicon Motion SM2263 |
| NAND Flash | Intel 64L 3D QLC | Intel 64L 3D QLC | Intel 64L 3D QLC |
| Optane Controller | Intel SLL3D | Intel SLL3D | Intel SLL3D |
| Optane Media | Intel 128Gb 3D XPoint | Intel 128Gb 3D XPoint | Intel 128Gb 3D XPoint |
| QLC NAND Capacity | 256 GB | 512 GB | 1024 GB |
| Optane Capacity | 16 GB | 32 GB | 32 GB |
| Sequential Read | 1450 MB/s | 2300 MB/s | 2400 MB/s |
| Sequential Write | 650 MB/s | 1300 MB/s | 1800 MB/s |
| Random Read IOPS | 230k | 320k | 330k |
| Random Write IOPS | 150k | 250k | 250k |
| L1.2 Idle Power | < 15 mW | < 15 mW | < 15 mW |
| Warranty | 5 years | 5 years | 5 years |
| Write Endurance | 75 TB (0.16 DWPD) | 150 TB (0.16 DWPD) | 300 TB (0.16 DWPD) |

With a slow QLC SSD and a fast Optane SSD on one device, Intel had to make judgement calls in determining the rated performance specifications. The larger two capacities of H10 are rated for sequential read speeds in excess of 2GB/s, reflecting how Intel's Optane Memory caching software can fetch data from both QLC and Optane portions of the H10 simultaneously. Writes can also be striped, but the maximum rating doesn't exceed any obvious limit for single-device performance. The random IO specs for the H10 fall between the performance of the existing Optane Memory and 660p SSDs, but are much closer to Optane performance. Intel's not trying to advertise a perfect cache hit rate, but they expect it to be pretty good for ordinary real-world usage.

The Optane cache should help reduce the write burden that the QLC portion of the H10 has to bear, but Intel still rates the whole device for the same 0.16 drive writes per day that their 660p QLC SSDs are rated for.
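The relationship between the DWPD figure and the total write endurance in the specifications table can be checked with a quick calculation. This sketch assumes the rating is taken over the 5-year warranty period and against the QLC NAND capacity; that basis is an inference from the table, not something Intel states here.

```python
WARRANTY_YEARS = 5  # from the specifications table
DWPD = 0.16         # rated drive writes per day


def rated_writes_tb(capacity_gb: int, dwpd: float = DWPD,
                    years: int = WARRANTY_YEARS) -> float:
    """Total rated writes in TB: capacity x DWPD x days in the warranty period."""
    return capacity_gb * dwpd * 365 * years / 1000


# QLC NAND capacities of the three H10 models.
for capacity_gb in (256, 512, 1024):
    print(f"{capacity_gb} GB: {rated_writes_tb(capacity_gb):.1f} TB")
```

The results come out to roughly 75, 150 and 299 TB, which lines up with the 75, 150 and 300 TB ratings to within rounding.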

Intel's marketing photos of the Optane Memory H10 show it with a two-tone PCB to emphasize the dual nature of the drive, but in reality it's a solid color. The PCB layout is unique with two controllers and three kinds of memory, but it is also obviously reminiscent of the two discrete products it is based on. The QLC NAND half of the drive is closer to the M.2 connector and features the SM2263 controller and one package each of DRAM and NAND. The familiar Silicon Motion test/debug connections are placed at the boundary between the NAND half and the Optane half. That Optane half contains Intel's small Optane controller, a single package of 3D XPoint memory, and most of the power management components. Both the Intel SSD 660p and the earlier Optane Memory SSDs had very sparse PCBs; the Optane Memory H10 is crowded and may have the highest part count of any M.2 SSD on the market.

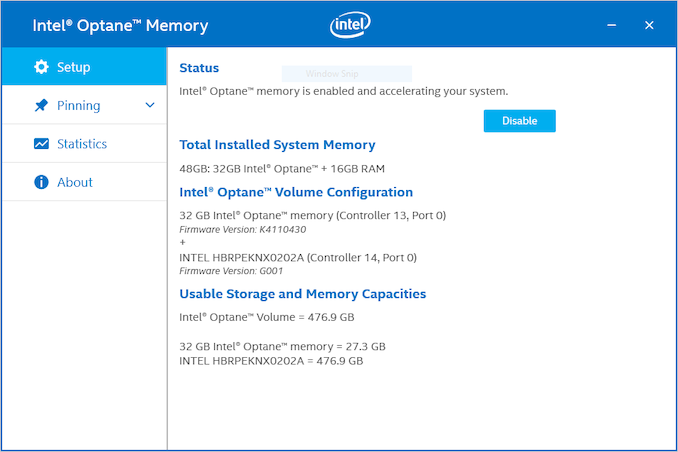

On the surface, little has changed with the Optane Memory software; there's just more flexibility now in which devices can be selected to be cached. (Intel has also extended Optane Memory support to Pentium and Celeron branded processors on platforms that were already supported with Core processors.) When the boot volume is cached, Intel's software allows the user to specify files and applications that should be pinned to the cache and be immune from eviction. Other than this, there's no room for tweaking of the cache behavior.
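The idea of pinning can be illustrated with a toy cache. This is only a sketch of the general concept: Intel's actual eviction policy is not described here, so the least-recently-used policy, the class name, and the keys below are all assumptions made for illustration.

```python
from collections import OrderedDict


class PinnableLRUCache:
    """Toy cache: least-recently-used eviction, but pinned keys are never evicted."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self._entries = OrderedDict()  # key -> data, least recently used first
        self._pinned = set()

    def pin(self, key):
        """Mark a key as immune from eviction."""
        self._pinned.add(key)

    def get(self, key):
        if key not in self._entries:
            return None  # miss: a real system would read from the slow tier
        self._entries.move_to_end(key)  # mark as most recently used
        return self._entries[key]

    def put(self, key, data):
        self._entries[key] = data
        self._entries.move_to_end(key)
        while len(self._entries) > self.capacity:
            # Evict the oldest entry that is not pinned.
            victim = next((k for k in self._entries if k not in self._pinned), None)
            if victim is None:
                break  # everything is pinned; nothing can be evicted
            del self._entries[victim]


cache = PinnableLRUCache(capacity=2)
cache.pin("boot")
cache.put("boot", b"boot files")
cache.put("a", b"app a")
cache.put("b", b"app b")  # over capacity: "a" is evicted, pinned "boot" survives
print(sorted(cache._entries))
```

Note that in this toy version the cache can exceed its capacity if every entry is pinned; a real implementation would need a policy for that case.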

Some OEMs that sell systems equipped with Optane Memory have been advertising memory capacities as the sum of DRAM and Optane capacities, which might be reasonable if we were talking about Optane DC Persistent Memory modules that connect to the CPU's memory controller, but is very misleading when the Optane product in question is an SSD. Intel says to blame the OEMs for this misleading branding, but Intel's own Optane Memory software does the same thing.

Initially, the Optane Memory H10 will be an OEM-only part, available to consumers only pre-installed in new systems—primarily notebooks. Intel is considering bringing the H10 to retail both as a standalone product and as part of a NUC kit, but they have not committed to plans for either. Their motherboard partners have been laying the groundwork for H10 support for almost a year, and many desktop 300-series motherboards already support the H10 with the latest publicly available firmware.

Platform Compatibility

Putting two PCIe devices on one M.2 card is novel to say the least. Intel has put two SSD controllers on one PCB before with high-end enterprise drives like the P3608 and P4608, but those drives use PCIe switch chips to split an x8 host connection into x4 for each of the two NVMe controllers on board. That approach leads to a 40W TDP for the entire card, which is not at all useful when trying to work within the constraints of an M.2 card.

There are also several PCIe add-in cards that allow four M.2 PCIe SSDs to be connected through one PCIe x16 slot. A few of these cards also include PCIe switches, but most rely on the host system supporting PCIe port bifurcation to split a single x16 port into four independent x4 ports. Mainstream consumer CPUs usually don't support this, and are generally limited to x8+x4+x4 or just x8+x8 bifurcation, and only when the lanes are being re-routed to different slots to support multi-GPU use cases. Recent server and workstation CPUs are more likely to support bifurcation down to x4 ports, but motherboard support for enabling this functionality isn't universal.

Even on CPUs where an x16 slot can be split into four x4 ports, further bifurcation down to x2 ports is seldom or never possible. The chips that do support operating a lot of PCIe lanes as narrow x2 or x1 ports are the southbridge/PCH chips on most motherboards. These tend to not support ports any wider than x4, because that's the normal width of the connection upstream to the CPU.
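The bifurcation argument above reduces to simple arithmetic, sketched here as a deliberately simplified model. It assumes each device on the card needs its own port and that the host splits the slot as finely as its hardware allows; it ignores firmware behavior entirely, which matters a great deal in practice. The function and parameter names are hypothetical.

```python
def devices_enumerable(slot_lanes: int, min_port_width: int,
                       device_count: int = 2) -> int:
    """How many devices on a card can get their own PCIe port.

    slot_lanes:     lanes wired to the slot (4 for an M.2 x4 slot)
    min_port_width: narrowest port the host can bifurcate down to
    device_count:   independent PCIe devices on the card (2 for the H10)
    """
    ports = slot_lanes // min_port_width
    return min(device_count, ports)


# A CPU that cannot split below x4 exposes only one of the two devices.
print(devices_enumerable(slot_lanes=4, min_port_width=4))
# A PCH that can run x2 ports has a port for each half of the H10.
print(devices_enumerable(slot_lanes=4, min_port_width=2))
```

Hardware capability is necessary but not sufficient: the motherboard firmware also has to configure the slot as two ports and initialize both devices.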

Based on the above, we put theory to the test and tried the Optane Memory H10 with almost every PCIe 3.0 port we had on hand, using whatever adapters were necessary. Our results are summarized below:

Intel Optane Memory H10 Platform Compatibility

| Platform | PCIe Source | NAND Usable | Optane Usable | Optane Memory Caching |
|---|---|---|---|---|
| Whiskey Lake | PCH | Yes | Yes | Yes |
| Coffee Lake | CPU | Yes | No | No |
| Coffee Lake | PCH | Yes | Yes | No* |
| Kaby Lake | CPU | Yes | No | No |
| Kaby Lake | PCH | Yes | No | No |
| Skylake | CPU | Yes | No | No |
| Skylake | PCH | Yes | No | No |
| Skylake-SP (Purley) | CPU | Yes | No | No |
| Skylake-SP (Purley) | PCH | Yes | No | No |
| Threadripper | CPU | No | Yes | No |
| Avago PLX Switch | | Yes | No | No |
| Microsemi PFX Switch | | No | Yes | No |

The Whiskey Lake notebook Intel provided for this review is of course fully compatible with the Optane Memory H10, and will be available for purchase in this configuration soon. Compatibility with older platforms and non-Intel platforms is mostly as expected, with only the NAND side of the H10 accessible—those motherboards don't expect to find two PCIe devices sharing a physical M.2 x4 slot, and aren't configured to detect and initialize both devices. There are a few notable exceptions:

First, the H370 motherboard in our Coffee Lake system is supposed to fully support the H10, but GIGABYTE botched the firmware update that claims to have added H10 support: both the NAND and Optane portions of the H10 are accessible when using an M.2 slot that connects to the PCH, but it isn't possible to enable caching. There are plenty of 300-series motherboards that have successfully added H10 support, and I'm sure GIGABYTE will release a fixed firmware update for this particular board soon. Putting the H10 into a PCIe x16 slot that connects directly to the CPU does not provide access to the Optane side, reflecting the CPU's lack of support for PCIe port bifurcation down to x2+x2.

The only modern AMD system we had on hand was a Threadripper/X399 motherboard. All of the PCIe and M.2 slots we tried led to the Optane side of the H10 being visible instead of the NAND side.

We also connected the H10 through two different brands of PCIe 3.0 switch. Avago's PLX PEX8747 switch only provided access to the NAND side, which is to be expected since it only supports PCIe port bifurcation down to x4 ports. The Microsemi PFX PM8533 switch does claim to support bifurcation down to x2 and we were hoping it would enable access to both sides of the H10, but instead we only got access to the Optane half. The Microsemi switch and Threadripper motherboard may both be just a firmware update away from working with both halves of the H10, and earlier Intel PCH generations might also have that potential, but Intel won't be providing any such updates. Even if these platforms were able to access both halves of the H10, they would not be supported by Intel's Optane Memory caching drivers, but third-party caching software exists.

60 Comments

Valantar - Tuesday, April 23, 2019

"Why hamper it with a slower bus?": cost. This is a low-end product, not a high-end one. The 970 EVO can at best be called "midrange" (though it keeps up with the high end for performance in a lot of cases). Intel doesn't yet have a monolithic controller that can work with both NAND and Optane, so this is (as the review clearly states) two devices on one PCB. The use case is making a cheap but fast OEM drive, where caching to the Optane part _can_ result in noticeable performance increases for everyday consumer workloads, but is unlikely to matter in any kind of stress test. The problem is that adding Optane drives up prices, meaning that this doesn't just compete against QLC drives (which it would beat in terms of user experience) but also TLC drives, which would likely be faster in all but the most cache-friendly, bursty workloads.

I see this kind of concept as the "killer app" for Optane outside of datacenters and high-end workstations, but this implementation is nonsense due to the lack of a suitable controller. If the drive had a single controller with an x4 interface, replaced the DRAM buffer with a sizeable Optane cache, and came in QLC-like capacities, it would be _amazing_. Great capacity, great low-QD speeds (for anything cached), great price. As it stands, it's ... meh.

cb88 - Friday, May 17, 2019

Therein lies the BS... Optane cannot compete as a low end product as it is too expensive.. so they should have settled for being the best premium product with 4x PCIe... probably even maxing out PCIe 4.0 easily once it launches.

CheapSushi - Wednesday, April 24, 2019

I think you're mixing up why it would be faster. The lanes are the easier part. It's inherently faster. But you can't magically make x2 PCIe lanes push more bandwidth than x4 PCIe lanes on the same standard (3.0 for example).

twotwotwo - Monday, April 22, 2019

Prices not announced, so they can still make it cheaper.

Seems like a tricky situation unless it's priced way below anything that performs similarly though. Faster options on one side and really cheap drives that are plenty for mainstream use on the other.

CaedenV - Monday, April 22, 2019

lol cheaper? All of the parts of a traditional SSD, *plus* all of the added R&D, parts, and software for the Optane half of the drive?

I will be impressed if this is only 2x the price of a Sammy... and still slower.

DanNeely - Monday, April 22, 2019

Ultimately, to scale this I think Intel is going to have to add an on card PCIe switch. With the company currently dominating the market setting prices to fleece enterprise customers, I suspect that means they'll need to design something in house. PCIe4 will help some, but normal drives will get faster too.

kpb321 - Monday, April 22, 2019

I don't think that would end up working out well. As the article mentions PCI-E switches tend to be power hungry which wouldn't work well and would add yet another part to the drive and push the BOM up even higher. For this to work you'd need to deliver TLC level performance or better but at a lower cost. Ultimately the only way I can see that working would be moving to a single integrated controller. From a cost perspective eliminating the DRAM buffer by using a combination of the Optane memory and HBM should probably work. This would probably push it into a largely or completely hardware managed solution and would improve compatibility and eliminate the issues with the PCI-E bifurcation and bottlenecks.

ksec - Monday, April 22, 2019

Yes, I think we will need a Single Controller to see its true potential and if it has a market fit.

Cause right now I am not seeing any real benefits or advantage of using this compared to decent M.2 SSD.

Kevin G - Monday, April 22, 2019

What Intel needs to do for this to really take off is to have a combo NAND + Optane controller capable of handling both types natively. This would eliminate the need for a PCIe switch and free up board space on the small M.2 sticks. A win-win scenario if Intel puts forward the development investment.

e1jones - Monday, April 22, 2019

A solution for something in search of a problem. And, typical Intel, clearly incompatible with a lot of modern systems, much less older systems. Why do they keep trying to limit the usability of Optane!?

In a world where each half was actually accessible, it might be useful for ZFS/NAS apps, where the Optane could be the log or cache and the QLC could be a WORM storage tier.