Intel's Architecture Day 2018: The Future of Core, Intel GPUs, 10nm, and Hybrid x86

by Dr. Ian Cutress on December 12, 2018 9:00 AM EST

Posted in: CPUs, Memory, Intel, GPUs, DRAM, Architecture, Microarchitecture, Xe

Going Beyond Gen11: Announcing the Xe Discrete Graphics Brand

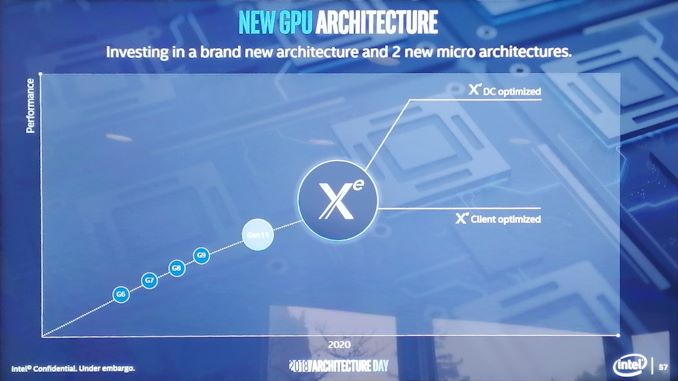

Not content with merely talking about what 2019 will bring, Intel also gave us a glimpse into how it is going to approach its graphics business in 2020. It was at this point that Raja Koduri announced the new product branding for Intel’s discrete graphics business:

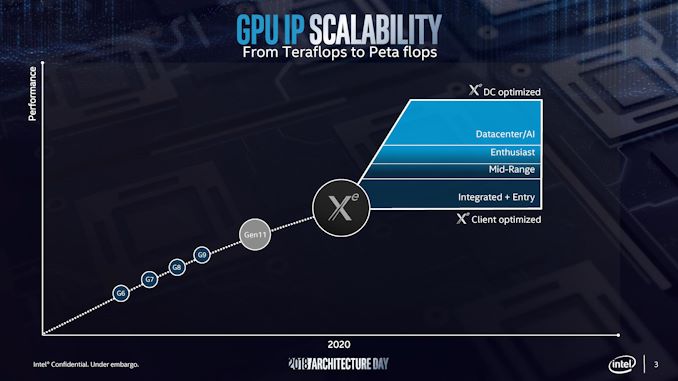

Intel will use the Xe branding for its range of graphics that were unofficially called ‘Gen12’ in previous discussions. The Xe branding will apply from 2020 onwards and will cover the range from client graphics all the way to datacenter graphics solutions.

Intel actually divides this market up, showing that Xe also covers future integrated graphics solutions. If this slide is anything to go by, Intel wants Xe to span entry-level, mid-range, and enthusiast graphics and reach up to AI, competing with the best the competition has to offer.

Intel stated that Xe will start on Intel’s 10nm technology and that it will fall under Intel’s single-stack software philosophy: Intel wants software developers to be able to take advantage of CPU, GPU, FPGA, and AI hardware, all with one set of APIs. The Xe design will form the foundation of several generations of graphics, and shows that Intel is now ready to rally around a brand name moving forward.

There was some confusion over one of the slides, as it appears that Intel might also be using the new brand name to refer to some of its FPGA and AI solutions. We're going to see if we can get an answer on that in due course.

148 Comments

Spunjji - Thursday, December 13, 2018 - link

Is it better? Their last roadmaps were not worth the powerpoint slides they showed up in, not to mention the whole "tick-tock-optimise-optimise-delay" fiasco.

HStewart - Thursday, December 13, 2018 - link

From the look of things in this excellent article - it looks for 2019 Intel is combining both tick and tock together with significant architexture improvement along with process improvements.

johannesburgel - Thursday, December 13, 2018 - link

Compared to the latest Xeon roadmaps I have seen in NDA meetings, these desktop roadmaps still seem quite ambitious. They don't expect to ship a "lower core count" 10nm Xeon before mid-2020.

HStewart - Thursday, December 13, 2018 - link

Just because Intel did not mention it - does not mean it will not happen. Also remember that Intel is decoupling the process from actual Architexture. In the past, I alway remember the Xeon technologies were forerunner's of base core technology. Hyperthreading is one example and multiple core support.

Vesperan - Wednesday, December 12, 2018 - link

Its 6am for me, and with the mugshots of Jim Keller and Raja Koduri at the end you could have labelled this the AMD architecture day and I would have believed you. It will be an interesting several years as those two put their stamp on Intel CPU/GPUs.

The_Assimilator - Wednesday, December 12, 2018 - link

So Intel is going to take another poke at the smartphone market it seems. Well, let's hope Fovoros fares better than the last half-dozen attempts.

Rudde - Wednesday, December 12, 2018 - link

7W is too much for a smartphones power budget. Smartphones operate at sub 1W power budget.

johannesburgel - Wednesday, December 12, 2018 - link

The just announced Qualcomm Snapdragon 855 has a peak TDP of 5 Watts. Most smartphone manufacturers limit the whole SoC to 4 watts. The average smartphone battery now has >10 Wh, so even at full load the device would still run between 1.5 (display on) and 3 (display off) hours. Which it has to in the hands of those gamer kids.

YoloPascual - Wednesday, December 12, 2018 - link

Had the og zenfone with the intel soc. It drains battery as a gas guzzler suv. Never buying a smartphone with intel inside ever again.

Mr Perfect - Wednesday, December 12, 2018 - link

It's exciting to see Intel use FreeSync in their graphics. They could have easily gone with some proprietary solution, then we'd have three competing monitor types. Hopefully having both AMD and Intel on FreeSync will prompt Nvidia to at least support it alongside G-Sync.