The AMD Radeon RX 590 Review, feat. XFX & PowerColor: Polaris Returns (Again)

by Nate Oh on November 15, 2018 9:00 AM EST

Total War: Warhammer II (DX11)

Last in our 2018 game suite is Total War: Warhammer II, built on the same engine as Total War: Warhammer. While there is a more recent Total War title, Total War Saga: Thrones of Britannia, that game was built on the 32-bit version of the engine. The first TW: Warhammer was a DX11 game that was to some extent developed with DX12 in mind, with preview builds showcasing DX12 performance. In Warhammer II, however, the matter appears to have been dropped: DX12 mode is still marked as beta, and it also shows a performance regression for both vendors.

It's unfortunate because Creative Assembly themselves have acknowledged the CPU-bound nature of their games, and with the engine being re-used for spin-offs, DX12 optimization would have continued to provide benefits, especially if the future of graphics in RTS-type games leans towards low-level APIs.

There are now three benchmarks with varying graphics and processor loads; we've opted for the Battle benchmark, which appears to be the most graphics-bound.

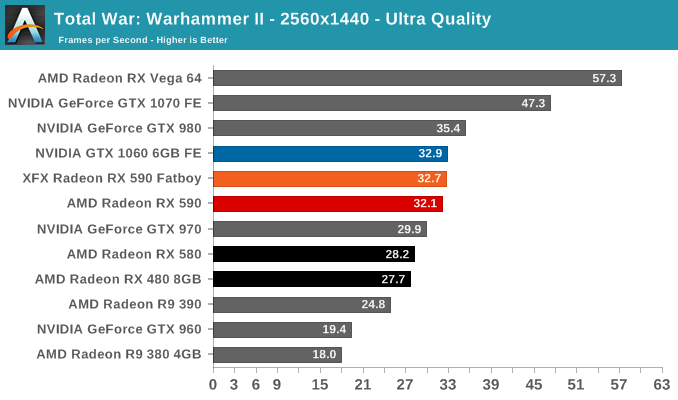

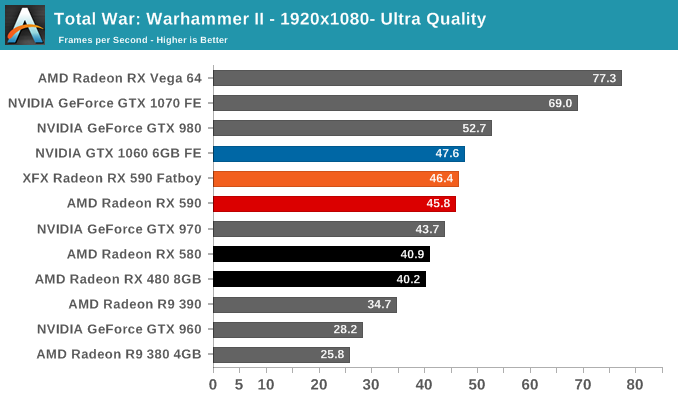

Along with GTA V, Total War: Warhammer II is the other game in our suite where the GTX 1060 6GB FE remains in the lead even against factory-overclocked RX 590s. NVIDIA hardware fares well across the board here, though the RX 590 has at least made up enough ground to nip at the GTX 1060 6GB FE's heels. And while the RX 590 represents a decent jump from R9 390 levels, it is still priced higher and draws more power than the GTX 1060 6GB.

136 Comments

AMD#1 - Tuesday, November 20, 2018 - link

Well, they released a card this year for the consumer market. My hopes are that Navi will be a better option against NVIDIA RTX, good that Radeon will not support RT. Let NVIDIA first support DX12 with hardware shader units, instead of hanging on to DYING DX11.

silverblue - Wednesday, November 21, 2018 - link

A couple of reviews seem to be showing the Fatboy at similar power consumption (or slightly higher) levels to the 580 Nitro+ (presumably the 1450MHz version) and Red Devil Golden Sample. That's not so bad when you factor in the significantly increased clocks, but the two 580s hardly sipped power to begin with, and basic 580s don't really perform much worse for what would be much lower power consumption. AMD has a product that kind of bridges the gap between the 1060 and the 1070, but uses more power than a 1080... hardly envious, really. The Fatboy has rather poor thermals as well if you don't ramp the fan speed up.

The 590s we're getting are clocked aggressively on core but not on memory; what would really be interesting is a 590 clocked at 580 levels, even factory overclocked 580 levels. Would it be worth getting a 590 just to undervolt and underclock the core, make use of the extra game in the bundle (once they launch, that is), and essentially be running a more efficient 580? I'd be tempted to overclock the memory at the same time as that appears to be where the 580 is being held back, not core speed.

silverblue - Wednesday, November 21, 2018 - link

I'll be fair to the Fatboy; it does have a zero RPM mode which would explain the thermals.

WaltC - Sunday, December 2, 2018 - link

I just bought a Fatboy...to run in X-fire with my year-old 8GB RX-480 (1.305GHz stock)...! As I am now gaming at 3840x2160, it seemed a worthwhile alternative to dropping $500+ on a single GPU. Paid $297 @ NewEgg & got the 3-game bundle. I read one review by a guy who X-fired a 580 with a 480 without difficulty--and the performance scaled from 70%-90% better when X-Fire is supported. Wouldn't recommend buying two 590's at one time, of course, but for people who already own a 480/580, the X-Fire alternative is the most cost-effective route at present. Gaming sites seem to have forgotten about X-Fire these days, for some reason. Of course, the nVidia 1060 doesn't allow for SLI--so that might be one reason, I suppose. Still, it's kind of baffling as the X-Fire mode seems like such a no brainer. And for those titles that will not X-fire, I'll just run them all @ 1.6GHz on the 590...Until next year when AMD's next < $300 GPU launches...! Then, I may have to think again!

quadibloc - Friday, December 7, 2018 - link

Oh, so Global Foundries does now have a 12 nm process? I'm glad they're doing something a little better than 14 nm at least.