Intel's 10nm Cannon Lake and Core i3-8121U Deep Dive Review

by Ian Cutress on January 25, 2019 10:30 AM EST

Stock CPU Performance: Encoding Tests

With the rise of streaming, vlogs, and video content as a whole, encoding and transcoding tests are becoming ever more important. Not only do more home users and gamers need to convert video files into something more manageable, for streaming or archival purposes, but the servers that handle the output also deal with data and log files using compression and decompression. Our encoding tasks focus on these important scenarios, with input from the community on the best implementation of real-world testing.

All of our benchmark results can also be found in our benchmark engine, Bench.

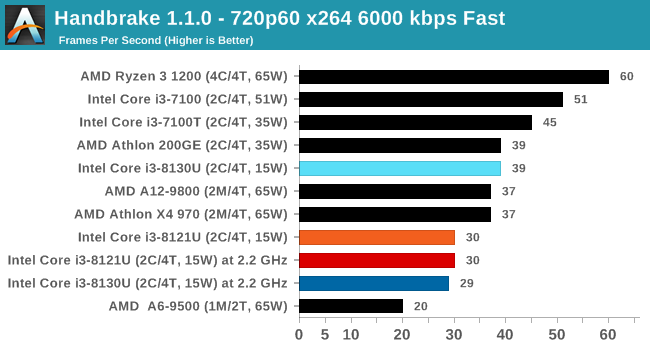

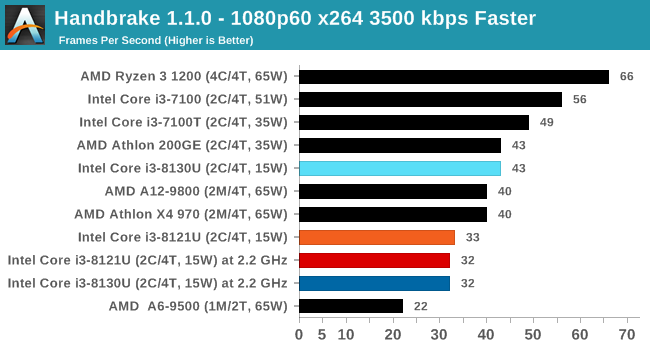

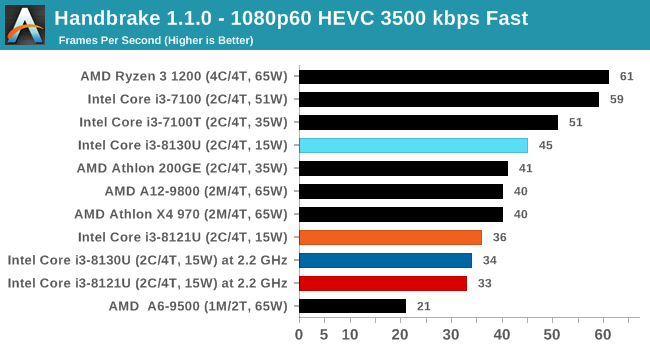

Handbrake 1.1.0: Streaming and Archival Video Transcoding

A popular open source tool, Handbrake is the anything-to-anything video conversion software that a number of people use as a reference point. The danger is always in version numbers and optimization: for example, the latest versions of the software can take advantage of AVX-512 and OpenCL to accelerate certain types of transcoding and algorithms. The version we use here is a pure CPU play, with common transcoding variations.

We have split Handbrake up into several tests, using a Logitech C920 1080p60 native webcam recording (essentially a streamer recording), which we convert into two types of streaming formats and one for archival. The output settings used are:

- 720p60 at 6000 kbps constant bit rate, fast setting, high profile

- 1080p60 at 3500 kbps constant bit rate, faster setting, main profile

- 1080p60 HEVC at 3500 kbps variable bit rate, fast setting, main profile
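To put the three targets above in perspective, here is a minimal sketch that records them as data and estimates the video stream size each bit rate implies. The `TranscodeTarget` class, the `estimated_size_mb` helper, and the 60-second clip length are illustrative assumptions, not part of the test suite. The codec of the first two targets is not stated in the text, so it is left unset.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TranscodeTarget:
    """One output configuration from the list above (hypothetical container type)."""
    name: str
    bitrate_kbps: int
    rate_control: str       # "CBR" or "VBR"
    preset: str
    profile: str
    codec: Optional[str]    # None where the article does not name the codec


TARGETS = [
    TranscodeTarget("720p60 streaming", 6000, "CBR", "fast", "high", None),
    TranscodeTarget("1080p60 streaming", 3500, "CBR", "faster", "main", None),
    TranscodeTarget("1080p60 archival", 3500, "VBR", "fast", "main", "HEVC"),
]


def estimated_size_mb(target: TranscodeTarget, seconds: float) -> float:
    """Approximate video stream size in megabytes (10^6 bytes).

    Ignores audio and container overhead. For VBR targets the bit rate is
    treated as an average, so the result is only a rough guide.
    """
    bits = target.bitrate_kbps * 1000 * seconds
    return bits / 8 / 1e6


if __name__ == "__main__":
    # Assumed clip length of 60 seconds, purely for illustration.
    for target in TARGETS:
        print(f"{target.name}: ~{estimated_size_mb(target, 60):.2f} MB per minute")
```

At these settings the 6000 kbps stream works out to roughly 45 MB of video per minute, and the 3500 kbps streams to roughly 26 MB per minute.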

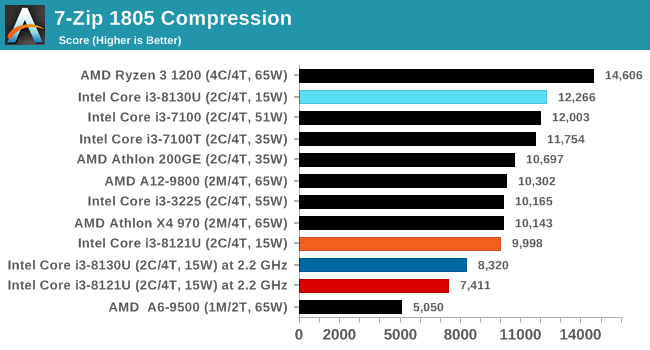

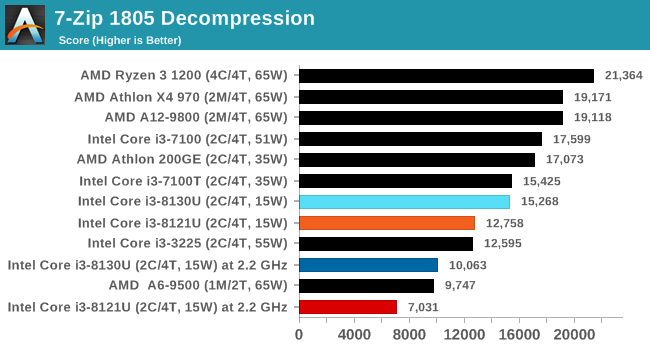

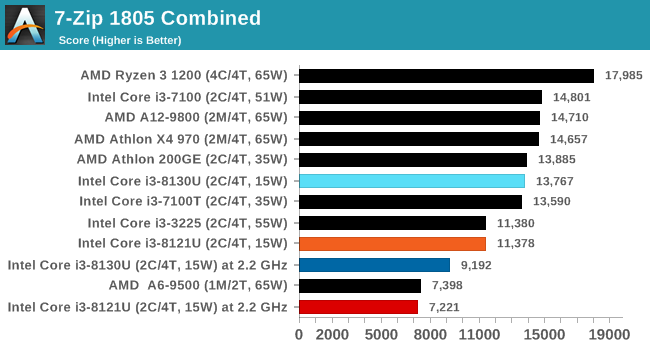

7-zip v1805: Popular Open-Source Encoding Engine

Out of our compression/decompression tool tests, 7-zip is the most requested and comes with a built-in benchmark. For our test suite, we’ve pulled the latest version of the software and we run the benchmark from the command line, reporting the compression, decompression, and a combined score.
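For readers who want to try something similar, the sketch below shows one way to drive the built-in benchmark from a script. The `7z b` command and the `-mmt` thread switch are real 7-zip options, but the helper names are hypothetical, and treating the combined score as the arithmetic mean of the two ratings is an assumption: the text does not say how the combined figure is derived.

```python
import shutil
import subprocess
from typing import List, Optional


def build_benchmark_command(executable: str = "7z",
                            threads: Optional[int] = None) -> List[str]:
    """Build the argument list for 7-zip's built-in benchmark ("b" command)."""
    cmd = [executable, "b"]
    if threads is not None:
        # -mmtN limits the benchmark to N threads.
        cmd.append(f"-mmt{threads}")
    return cmd


def run_benchmark(executable: str = "7z", threads: Optional[int] = None) -> str:
    """Run the benchmark and return its raw text output for manual inspection."""
    if shutil.which(executable) is None:
        raise FileNotFoundError(f"{executable!r} was not found on PATH")
    result = subprocess.run(build_benchmark_command(executable, threads),
                            capture_output=True, text=True, check=True)
    return result.stdout


def combined_score(compress_rating: float, decompress_rating: float) -> float:
    """Assumed combination: the plain average of the two ratings."""
    return (compress_rating + decompress_rating) / 2


if __name__ == "__main__":
    print(" ".join(build_benchmark_command(threads=4)))
```

The output is returned unparsed on purpose, since the exact layout of the benchmark table can differ between 7-zip versions.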

It is noted in this benchmark that the latest multi-die processors have very bi-modal performance between compression and decompression, performing well in one and badly in the other. There are also discussions around how the Windows Scheduler is implementing every thread. As we get more results, it will be interesting to see how this plays out.

Please note, if you plan to share out the Compression graph, please include the Decompression one. Otherwise you’re only presenting half a picture.

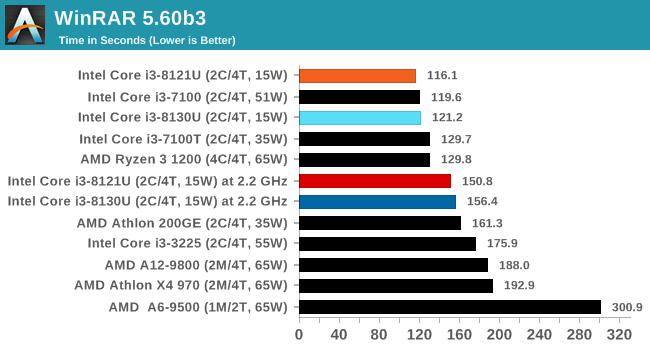

WinRAR 5.60b3: Archiving Tool

My compression tool of choice is often WinRAR, having been one of the first tools a number of my generation used over two decades ago. The interface has not changed much, although the integration with Windows right click commands is always a plus. It has no in-built test, so we run a compression over a set directory containing over thirty 60-second video files and 2000 small web-based files at a normal compression rate.

WinRAR is variable threaded but also susceptible to caching, so in our test we run it 10 times and take the average of the last five, leaving the test purely for raw CPU compute performance.
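The warm-up approach described above can be sketched in a few lines. This is an illustrative outline only: `time_runs` and `average_of_last` are hypothetical helper names, and the actual test harness is not shown in the article.

```python
import time
from statistics import mean
from typing import Callable, List, Sequence


def time_runs(task: Callable[[], None], runs: int = 10) -> List[float]:
    """Run the task repeatedly and record each wall-clock duration in seconds."""
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        task()
        timings.append(time.perf_counter() - start)
    return timings


def average_of_last(timings: Sequence[float], keep: int = 5) -> float:
    """Average only the final `keep` timings, discarding earlier warm-up runs."""
    if len(timings) < keep:
        raise ValueError(f"need at least {keep} timings, got {len(timings)}")
    return mean(timings[-keep:])


if __name__ == "__main__":
    # Made-up timings: early runs are slower while file caches warm up.
    sample = [30.0, 28.0, 25.0, 22.0, 21.0, 20.0, 20.0, 20.0, 20.0, 20.0]
    print(average_of_last(sample))
```

Discarding the first five runs means the reported figure reflects the state where the input files are already cached, which is the point of the methodology.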

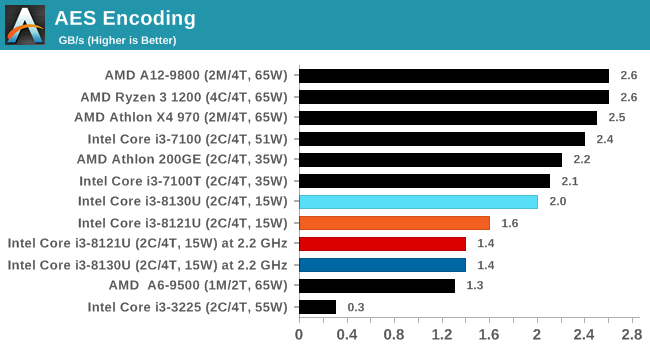

AES Encryption: File Security

A number of platforms, particularly mobile devices, are now offering encryption by default with file systems in order to protect the contents. Windows based devices have these options as well, often applied by BitLocker or third-party software. In our AES encryption test, we used the discontinued TrueCrypt for its built-in benchmark, which tests several encryption algorithms directly in memory.

The data we take for this test is the combined AES encrypt/decrypt performance, measured in gigabytes per second. The software does use AES instructions on processors that offer hardware support for them, but it does not use AVX-512.

129 Comments


jjj - Friday, January 25, 2019 - link

Bored with laptops, want a large foldable phone with a projected keyboard so i can forget about these bulky heavy things. Ok, fair enough, glasses are way better but those will take a while longer.

eastcoast_pete - Friday, January 25, 2019 - link

@Ian: Thanks for the deep dive, and giving the references for background! One comment, three questions (they're related): In addition to being very (overly) ambitious with the 10 nm process, I was particularly struck by the "fused-off integrated graphics" and how Intel's current 10 nm process apparently just won't play nice with the demands in a GPU setting. Question: Any information or rumors on whether that contributed to AMD going the chiplet route for Ryzen going forward? In addition to improving yields, that also allows for heterogeneous manufacturing nodes on the same final chip, so that can get around that problem. Finally, any signs that Intel may go down that road in its upcoming mainstream chips? Any updates on what node they will make their much-announced dGPUs on? Probably won't be this or a related 10 nm process.

Lastly, and maybe you and Andrei can weigh in on that: TSMC's (different) 7 nm process seems to work okay for the (smaller) different "iGPUs" in Apple's 12/12x, Huawei's newest Kirin and the new Snapdragon. Any insight/speculation which steps of Intel's 10 nm process cause the apparent incompatibility with GPU usage scenarios?

Thanks!

Rudde - Saturday, January 26, 2019 - link

AMD has launched huge 7nm desktop graphics cards (2 server and Radeon VII). AMD does not seem to have any problems making gpus on TSMC 7nm.

eastcoast_pete - Sunday, January 27, 2019 - link

That's why I asked about the apparent incompatibility of GPU-type dies with Intel's 10 nm process. Isn't it curious that this seems to be the Achilles heel of Intel's process? I wonder if their future chips with "iGPU" will use a chiplet-type approach, with the CPU parts in 10 nm, and the GPU in 14 nm++++ or however many + generations it'd be on. The other big question is what process their upcoming high-end dGPU will be in. Unless Intel lets TSMC make that for them, too.

velanapontinha - Friday, January 25, 2019 - link

Every time I read Kaby G I'm instantly stormed by a Kenny G theme stuck in my head, and it ruins the rest of my day.

Please stop.

skis4hire - Friday, January 25, 2019 - link

"Fast forward several months later, to May 2018, and we still had not heard anything from Intel."

Anton covered their statement in April, where they indicated they weren't shipping volume 10nm until sometime in 2019, and that they would instead release another 14nm product, whiskey lake, in the interim.

https://www.anandtech.com/show/12693/intel-delays-...

Yorgos - Friday, January 25, 2019 - link

>AMD XXXXX (XM/XT, XXW)

Thanks Ian for reminding us in every article, that we are reading a Purch media product, or a clueless editor.

Don't forget, 386 was a 0 core CPU.

No, it doesn't bother me as a reader, it bothers me as an engineer who designs and studies digital circuits. But hey you can't have it all, it's hard to find someone who is capable at running windows executables AND know his way in comp. arch..

Ryan Smith - Friday, January 25, 2019 - link

While I'm all for constructive feedback, I have to admit I'm not sure what we're meant to be taking from this.

Could you please articulate in more detail what exactly is wrong with the article?

KateH - Saturday, January 26, 2019 - link

i interpreted it as,...

"I disagree with the distinction between 'modules' and 'cores' that is made when some journalistic endeavours mention AMD's 'Construction' architecture microprocessors. I find the drawing of a line based on FPU counts inaccurate - disingenuous even - given that historic microprocessors such as the renowned Intel 80386 did not feature an on-chip FPU at all, an omission that would under the definitions used by this journalist in this article cause the '386 to be described as having 'zero cores'. The philosophical exercise suggested by such a definition is, based upon my extensive experience in the industry of digital circuit design, repugnant to my sensibilities and in my opinion calls into question the journalistic integrity of this very publication!"

...

or something like that

(automatically translated from Internet Hooligan to American English, tap here to rate translation)

Ryan Smith - Saturday, January 26, 2019 - link

"tap here to rate translation"

5/5 stars. Thank you!