The Intel 9th Gen Review: Core i9-9900K, Core i7-9700K and Core i5-9600K Tested

by Ian Cutress on October 19, 2018 9:00 AM EST

Posted in: CPUs, Intel, Coffee Lake, 14++, Core 9th Gen, Core-S, i9-9900K, i7-9700K, i5-9600K

CPU Performance: Web and Legacy Tests

Web-based benchmarks, though more the focus of low-end and small form factor systems, are notoriously difficult to standardize. Modern web browsers are frequently updated, with no recourse to disable those updates, which makes it difficult to keep a common platform. The fast-paced nature of browser development means that version numbers (and performance) can change from week to week. Despite this, web tests are often a good measure of user experience: a lot of office work today revolves around web applications, particularly email and office apps, but also interfaces and development environments. Our web tests include some of the industry standard tests, as well as a few popular but older tests.

We have also included our legacy benchmarks in this section, representing a stack of older code for popular benchmarks.

All of our benchmark results can also be found in our benchmark engine, Bench.

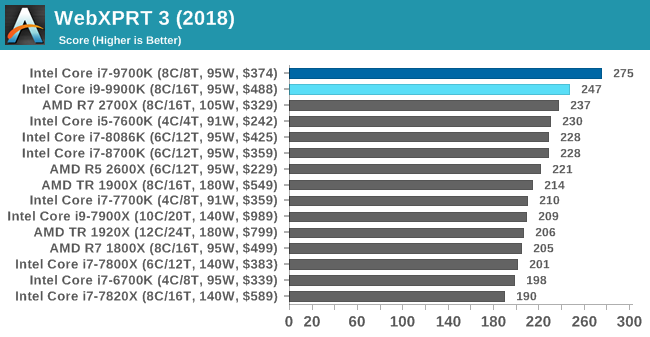

WebXPRT 3: Modern Real-World Web Tasks, including AI

The company behind the XPRT test suites, Principled Technologies, has recently released its latest web test and, rather than attaching a year to the name, has simply called it ‘3’. This latest test (the latest as of when we started the suite) builds upon the ethos of previous tests: user interaction, office compute, graph generation, list sorting, HTML5, and image manipulation, and it even goes as far as some AI testing.

For our benchmark, we run the standard test which goes through the benchmark list seven times and provides a final result. We run this standard test four times, and take an average.
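The averaging step described above can be sketched as follows. This is a minimal illustration, not the harness used for this review: the function name `average_final_score` and the sample scores are hypothetical.

```python
from statistics import mean

def average_final_score(run_scores):
    """Return the arithmetic mean of the final scores from repeated runs."""
    if not run_scores:
        raise ValueError("at least one run is required")
    return mean(run_scores)

# Hypothetical final scores from four standard runs (not measured data).
example_runs = [250, 254, 248, 252]
reported = average_final_score(example_runs)
```

Averaging the final scores, rather than pooling the individual iterations, keeps each standard run's own internal scoring intact.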

Users can access the WebXPRT test at http://principledtechnologies.com/benchmarkxprt/webxprt/

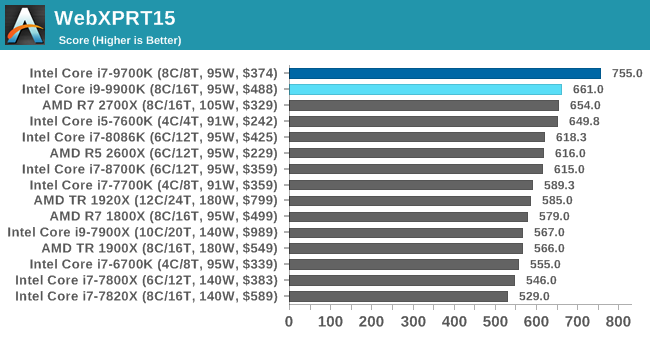

WebXPRT 2015: HTML5 and JavaScript Web UX Testing

The older version of WebXPRT is the 2015 edition, which focuses on a slightly different set of web technologies and frameworks that are in use today. This is still a relevant test, especially for users interacting with not-the-latest web applications in the market, of which there are a lot. Web framework development is often very quick but with high turnover: frameworks are quickly developed, built upon, and used, and then developers move on to the next. Adjusting an application to a new framework is a difficult, arduous task, especially with rapid development cycles. This leaves a lot of applications ‘fixed-in-time’, yet relevant to user experience for many years.

Similar to WebXPRT 3, the main benchmark is a sectional run repeated seven times, with a final score. We repeat the whole thing four times, and average those final scores.

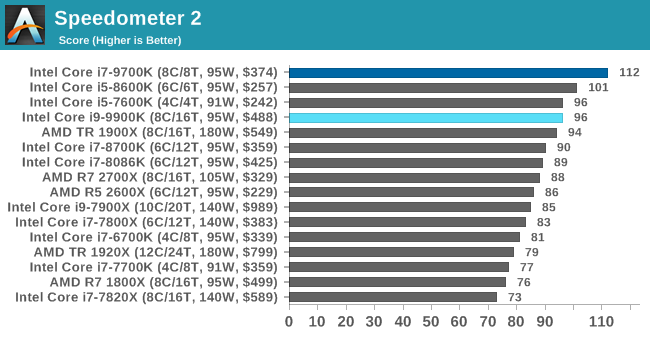

Speedometer 2: JavaScript Frameworks

Our newest web test is Speedometer 2, which is an accrued test over a series of JavaScript frameworks that each do three simple things: build a list, enable each item in the list, and remove the list. All the frameworks implement the same visual cues, but obviously apply them from different coding angles.

Our test goes through the list of frameworks, and produces a final score indicative of ‘rpm’, one of the benchmark’s internal metrics. We report this final score.

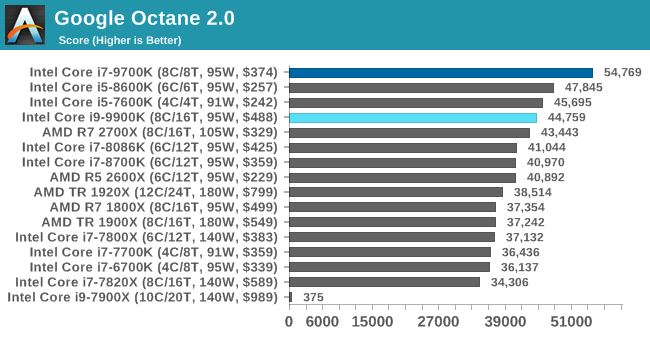

Google Octane 2.0: Core Web Compute

A popular web test for several years, but now no longer being updated, is Octane, developed by Google. Version 2.0 of the test performs the best part of two dozen compute-related tasks, such as regular expressions, cryptography, ray tracing, emulation, and Navier-Stokes physics calculations.

The test gives each sub-test a score and produces a geometric mean of the set as a final result. We run the full benchmark four times, and average the final results.
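As a rough sketch of that scoring arithmetic: the geometric mean of the sub-test scores gives one final result per run, and the per-run results are then averaged. The function names and any numbers passed in are hypothetical, and this is not Octane's own scoring code.

```python
import math

def geometric_mean(scores):
    """Geometric mean of positive sub-test scores, computed via logarithms."""
    if not scores or any(s <= 0 for s in scores):
        raise ValueError("scores must be non-empty and positive")
    return math.exp(sum(math.log(s) for s in scores) / len(scores))

def averaged_result(runs):
    """Arithmetic mean of the per-run geometric means."""
    finals = [geometric_mean(run) for run in runs]
    return sum(finals) / len(finals)
```

With a geometric mean, doubling the score of any one sub-test scales the final result by the same factor, regardless of how large that sub-test's raw score is, so no single sub-test dominates simply because its numbers are bigger.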

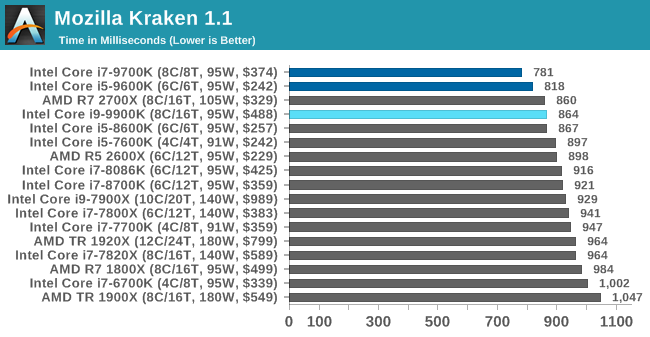

Mozilla Kraken 1.1: Core Web Compute

Even older than Octane is Kraken, this time developed by Mozilla. This is an older test that does similar computational mechanics, such as audio processing or image filtering. Kraken seems to produce a highly variable result depending on the browser version, as it is a test that is keenly optimized for.

The main benchmark runs through each of the sub-tests ten times and produces an average time to completion for each loop, given in milliseconds. We run the full benchmark four times and take an average of the time taken.

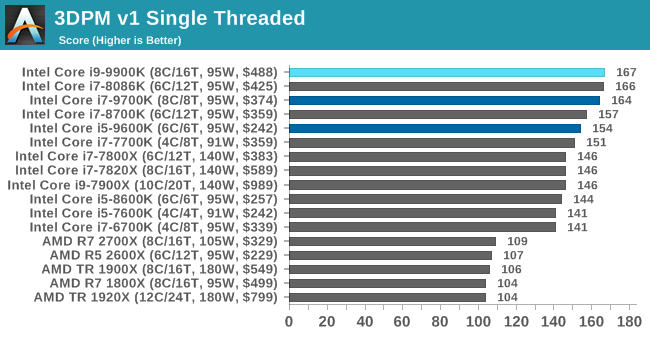

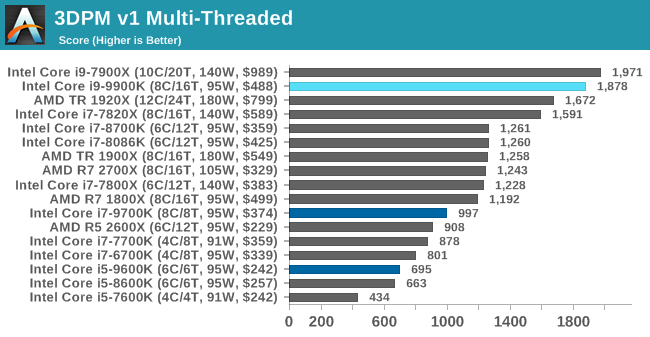

3DPM v1: Naïve Code Variant of 3DPM v2.1

The first legacy test in the suite is the first version of our 3DPM benchmark. This is the ultimate naïve version of the code, as if it was written by a scientist with no knowledge of how computer hardware, compilers, or optimization works (which in fact, it was at the start). This represents a large body of scientific simulation out in the wild, where getting the answer is more important than it being fast (getting a result in 4 days is acceptable if it’s correct, rather than sending someone away for a year to learn to code and getting the result in 5 minutes).

In this version, the only real optimization was in the compiler flags (-O2, -fp:fast), compiling it in release mode, and enabling OpenMP in the main compute loops. The loops were not configured for function size, and one of the key slowdowns is false sharing in the cache. It also has long dependency chains based on the random number generation, which leads to relatively poor performance on specific compute microarchitectures.
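To illustrate what a dependency chain from random number generation looks like, here is a minimal sketch. It is not the 3DPM code: the linear congruential generator, its constants (the widely published Numerical Recipes values), and all function names are assumptions chosen for illustration. Python is used only to show the data dependency, not to measure it.

```python
def lcg_next(state: int) -> int:
    """One step of a 32-bit linear congruential generator (assumed constants)."""
    return (1664525 * state + 1013904223) % 2**32

def chained_draws(seed: int, n: int) -> list:
    """Draw n values from one generator; each draw needs the previous state."""
    draws = []
    state = seed
    for _ in range(n):
        state = lcg_next(state)  # cannot start until the prior step has finished
        draws.append(state)
    return draws

def independent_streams(seeds, n: int) -> list:
    """One generator per seed; steps in different streams do not depend on each other."""
    return [chained_draws(seed, n) for seed in seeds]
```

In `chained_draws`, every iteration waits on the one before it, which is the kind of serial chain the paragraph above describes. Separate generator states, as in `independent_streams`, are one possible way to expose work that hardware can overlap, though whether the original code could be restructured this way is not something the article states.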

3DPM v1 can be downloaded with our 3DPM v2 code here: 3DPMv2.1.rar (13.0 MB)

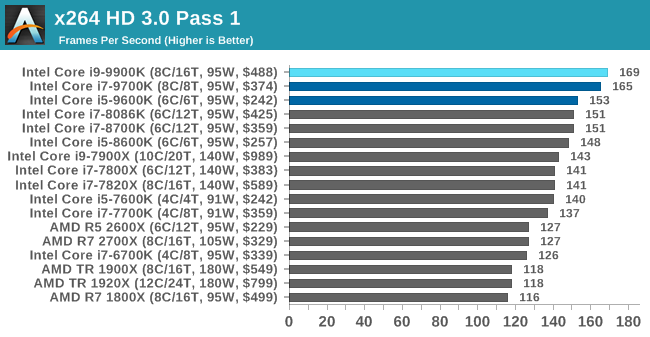

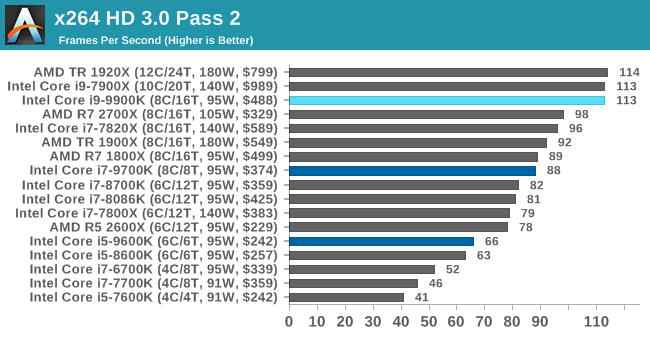

x264 HD 3.0: Older Transcode Test

This transcoding test is super old, and was used by Anand back in the day of Pentium 4 and Athlon II processors. Here a standardized 720p video is transcoded with a two-pass conversion, with the benchmark showing the frames-per-second of each pass. This benchmark is single-threaded, and between some micro-architectures we seem to actually hit an instructions-per-clock wall.

274 Comments

muziqaz - Monday, October 22, 2018 - link

I love the price of $488 stamped all over each of the test results, while over here in UK I see price of £599 and newegg quotes $580. Even your linked amazon has it at $580. And conclusion is awesome with: "At $488 SEP, plus a bit more for 'on-shelf price'..." Since when is extra 100 bucks a bit more? :D

compudaze - Monday, October 22, 2018 - link

What was the actual vcore for your overclocks?

HardwareDufus - Monday, October 22, 2018 - link

I7-9700k.... an I7 that isn't hyperthreaded.... let's totally muddy the waters now Intel.... Guess they had to save some feature for the I9's $100+ surcharge...… Good grief.

bogda - Tuesday, October 23, 2018 - link

How pointless is reviewers comment: "... World of Tanks gives the 9900K some room to stretch its legs..."?

Difference between two chips in discussion is between 712fps and 681fps! Not even Neo from Matrix could note the difference.

How pointless is discussing top of the line CPU gaming performance in 720p in any game??

How pointless is marketing 8C/16T CPU for gamers???

sseyler - Tuesday, October 23, 2018 - link

Not sure whether this has been pointed out yet, but the Threadripper prices need to be updated. For example, the 1920X is now well under $500 as advertised even on AMD's website and the 1900X goes for $350 on Newegg.

dlum - Tuesday, October 23, 2018 - link

For me, listing the long-obsolete prices for AMD processors (still initial, long-outdated MSRP for 1920x $799 - whereas a simple amazon search confirms it's now for just over half of that ($431)) is clearly disrespectful and shamefull practice for a reviewer.

It's very sad such dishonest practices found their way to Anandtech and they are so prominent here.

Probably that's also why no one answers nor fixes those clearly misleading figures.

(Maybe that's the cost of being able to read such anyway valuable reviews for free :)

sseyler - Thursday, October 25, 2018 - link

Well, to be fair, I'm sure the editors didn't dig this deeply through the comments. They're busy people.

Also, I think I heard something mentioned before about their graphs having some semi-automatic mechanism for listing prices and the like. I don't remember exactly, but it probably has something to do with pulling MSRP data and it's difficult to change given the way the templated graphs are generated from the benchmarks.

I imagine it was done something like this for consistency across the site as well as not biasing prices according to specific vendors. Given the first reason, I don't know why it'd be difficult for individual editors to customize/tweak certain aspects, but maybe that needs to be revised to be more flexible. As for the second reason, there are clearly reasonable solutions, like finding the *current* MSRP (rather than the release MSRP), or selecting the lowest/median/average price among a pool of selected retailers.

Anyway, it doesn't make much sense to me to characterize this as an instance of dishonesty, but rather a technical detail that's important enough to invest the time in it's improvement.

sseyler - Thursday, October 25, 2018 - link

its*

zodiacfml - Wednesday, October 24, 2018 - link

Meh. Intel owner could simply delidd and approach these kinds of performance.

Resolution above 1080p, AMDs parts have better value.

zodiacfml - Wednesday, October 24, 2018 - link

Made the comment without reading the review. The difference is a lot smaller than I expected where the only useful difference is in Ashes where AMD usually dominates due to sheer core count.

I'd be fine with that 6 core CPU from AMD.