Intel Server Roadmap: 14nm Cooper Lake in 2019, 10nm Ice Lake in 2020

by Ian Cutress & Anton Shilov on August 8, 2018 12:57 PM EST

Posted in: Servers, Xeon, Ice Lake, Cascade Lake, Cooper Lake

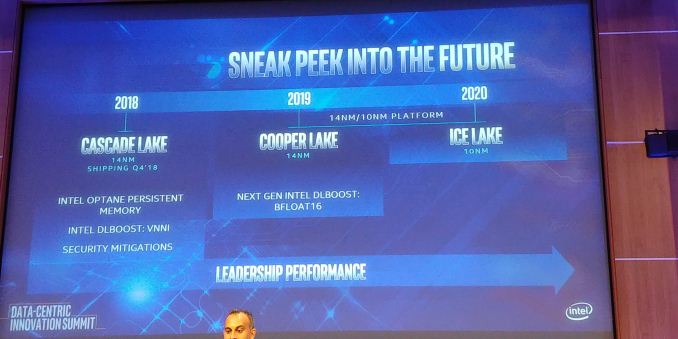

At its Data-Centric Innovation Summit in Santa Clara today, Intel unveiled its official Xeon roadmap for 2018 – 2020. As expected, the company confirmed its upcoming Cascade Lake, Cooper Lake-SP and Ice Lake-SP platforms.

Later this year Intel will release its Cascade Lake server platform, which will feature CPUs that bring support for hardware security mitigations against side-channel attacks through partitioning. In addition, the new Cascade Lake chips will also support AVX512_VNNI instructions for deep learning (originally expected to be a part of the Ice Lake-SP chips, but inserted into an existing design a generation earlier).

Moving on to the next generation, Intel's Cooper Lake-SP will launch in 2019, several quarters ahead of what was reported several weeks ago. Cooper Lake processors will still be made using a 14 nm process technology, but will bring some functional improvements, including support for the bfloat16 data format. By contrast, the Ice Lake-SP platform is due in 2020, just as expected.

One thing to note about Intel’s Xeon launch schedule is that Cascade Lake will ship in Q4 2018, several months from now. Normally, Intel does not want to create internal competition by releasing new server platforms too often. That said, it sounds like we should expect Cooper Lake-SP to launch in late 2019 and Ice Lake-SP to hit the market in late 2020. To make it clear: Intel has not officially announced launch timeframes for its CPL and ICL Xeon products, and the aforementioned periods should be considered educated guesses.

Intel's Server Platform Cadence

| Platform | Process Node | Release Year |
|---|---|---|
| Haswell-E | 22nm | 2014 |
| Broadwell-E | 14nm | 2016 |
| Skylake-SP | 14nm+ | 2017 |
| Cascade Lake-SP | 14nm++? | 2018 |
| Cooper Lake-SP | 14nm++? | 2019 |
| Ice Lake-SP | 10nm+ | 2020 |

While Cascade Lake will largely rely on the Skylake-SP hardware platform introduced last year (albeit with some significant improvements when it comes to memory support), Cooper Lake and Ice Lake will use a brand-new hardware platform. As discovered a while back, that Cooper Lake/Ice Lake server platform will use the LGA4189 CPU socket and will support an eight-channel per-socket memory sub-system.

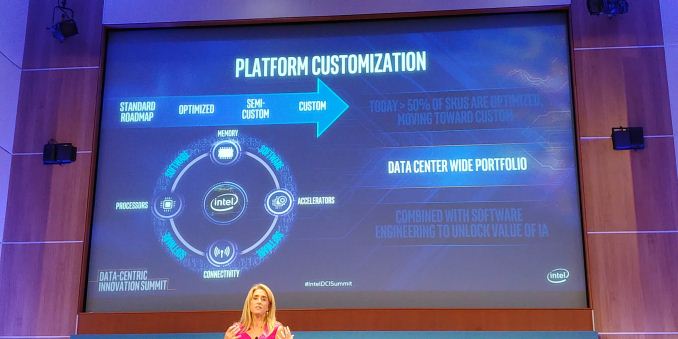

Intel has long understood that one size does not fit all, and that many of its customers need customized/optimized Xeon chips to run their unique applications and algorithms. Google was the first company to get a semi-custom Xeon back in 2008, and today over half of Intel's Xeon processors are customized for particular workloads at particular customers. That said, many of Intel’s future Xeons will feature unique capabilities only available to select clients. In fact, those clients want to keep their IP confidential, so these chips will be kept off Intel’s public roadmap. Meanwhile, as far as Intel’s CPUs and platforms are concerned, both should be ready for various forms of customization, whether that is silicon IP, binning for extra speed, or adding discrete special-purpose accelerators.

Overall there are several key elements to the announcement.

Timeline and Competition

What is not clear is the timeline. Intel has historically been on a 12-18 month cadence when it comes to new server processor families. As it stands, we expect Cascade Lake to hit in Q4 2018. If Cooper Lake is indeed coming in 2019, then even at the lower bound of that 12-18 month gap we would still be looking at Q4 2019. Step forward to Ice Lake, which Intel has listed as 2020. Again, this sounds like another 12 month jump, on the edge of that typical 12-18 month gap. This tells us two things:

Firstly, Intel is pushing the server market to update, and to update quickly. Server markets typically have a slow update cycle, so Intel is expected to push its new products by offering something special over the previous generation. Aside from the features listed below, and depending on what the product stack looks like, nothing has been disclosed about the silicon that would drive those updates.

Secondly, if Intel wants to keep revenues high, it would have to increase prices for those that can take advantage of the new features. Some media have reported that prices of the new parts will be increased to compensate for there being fewer reasons to upgrade, keeping overall revenue high.

Security Mitigations

This is going to be a big question mark. With the advent of Spectre and Meltdown, and other side channel attacks, Intel and Microsoft have scrambled to fix the issues, mostly through software. The downside of these software fixes is that sometimes they cause performance slowdowns – in our recent testing of a Xeon W using Skylake-SP cores, we saw performance decreases of 3-10%. At some point we are expecting the processors to implement hardware fixes, and one of the questions will be what effect these fixes have on performance.

The fact that the slide mentions security mitigations is confusing – are they hardware or software? (Confirmed hardware) What is the performance impact? (None to next-to-none) Will this require new chipsets to enable? Will this harden against future side channel attacks? (Hopefully) What additional switches are in the firmware for these?

Updated these questions with answers from our interview with Lisa Spelman. Our interview with Lisa will be posted next week (probably).

New Instructions

On the new instructions front, Cascade Lake will get VNNI and Cooper Lake will get bfloat16. It is likely that Ice Lake will have new instructions too, but those are not mentioned at this time.

VNNI, or Vector Neural Network Instructions, is essentially the ability to support 8-bit INT using the AVX-512 units. This will be one step towards assisting machine learning, where Intel cited a performance improvement (along with software enhancements) of 11x compared to when Skylake-SP was first launched. VNNI4, a variant of VNNI, was seen in Knights Mill, and VNNI was meant to be in Ice Lake, but it would appear that Intel is moving this into Cascade Lake. It does make me wonder exactly what is needed to enable VNNI on Cascade Lake compared to what wasn’t possible before, or whether this was just part of Intel’s expected product segmentation.
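To make the 8-bit idea concrete, here is a minimal Python sketch of the kind of operation VNNI targets: four 8-bit products summed and accumulated into a 32-bit value in a single step. It follows our understanding of the unsigned-by-signed, non-saturating dot-product form of AVX-512 VNNI. The function name `dot4_accumulate` and the sample values are ours, and this models the arithmetic of one 32-bit lane only, not Intel's hardware implementation.

```python
def dot4_accumulate(acc, a_u8, b_s8):
    """Model one 32-bit lane: acc += sum of four (unsigned 8-bit * signed 8-bit) products."""
    assert len(a_u8) == len(b_s8) == 4
    assert all(0 <= a <= 255 for a in a_u8)      # unsigned 8-bit inputs
    assert all(-128 <= b <= 127 for b in b_s8)   # signed 8-bit inputs
    total = acc + sum(a * b for a, b in zip(a_u8, b_s8))
    # Wrap to a signed 32-bit result (assumption: the non-saturating variant).
    total &= 0xFFFFFFFF
    return total - (1 << 32) if total & 0x80000000 else total


# Hypothetical quantized activations (unsigned) and weights (signed).
activations = [12, 200, 7, 255]
weights = [-3, 5, -128, 127]
result = dot4_accumulate(1000, activations, weights)
print(result)  # 33453
```

A 512-bit register holds sixteen such 32-bit lanes, which is where the appeal for inference workloads built on 8-bit integer math comes from.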

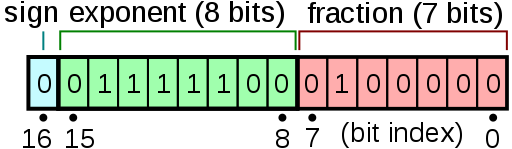

Also on the cards is support for bfloat16 in Cooper Lake. bfloat16 is a data format, used most recently by Google, that is 16 bits wide like a standard half-precision float but divides those bits differently. The letter ‘b’ in this case stands for brain, with the data format intended for deep learning. How it differs from a standard 16-bit float is in how the number is defined.

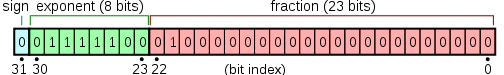

A standard float has the bits split into the sign, the exponent, and the fraction. This is given as:

- <sign> * (1 + <fraction>) * 2^<exponent>

For a standard IEEE754 compliant 16-bit number, the standard for computing, there is one bit for the sign, five bits for the exponent, and 10 bits for the fraction. The idea is that this gives a good mix of precision for fractional numbers while still offering numbers large enough to work with.
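As a rough illustration of that sign/exponent/fraction split, the Python sketch below decodes a 16-bit pattern by hand. Two details are not spelled out above and are our additions: the stored exponent carries a bias of 15, and the all-zeros and all-ones exponents are reserved for special values, which this sketch simply refuses to handle. The function name `decode_float16` is hypothetical.

```python
import struct


def decode_float16(bits):
    """Decode a normal IEEE 754 half-precision value from its 16-bit pattern."""
    sign = (bits >> 15) & 0x1        # 1 bit
    exponent = (bits >> 10) & 0x1F   # 5 bits, stored with a bias of 15
    fraction = bits & 0x3FF          # 10 bits
    if exponent in (0, 0x1F):
        # Zero, subnormals, infinity and NaN are outside this sketch.
        raise ValueError("special exponent values are not handled here")
    return (-1.0) ** sign * (1 + fraction / 1024) * 2.0 ** (exponent - 15)


# Cross-check against Python's built-in half-precision support ("e" format).
for pattern in (0x3C00, 0xC000, 0x3555):
    reference = struct.unpack("<e", struct.pack("<H", pattern))[0]
    print(hex(pattern), decode_float16(pattern), reference)
```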

What bfloat16 does is use one bit for the sign, eight bits for the exponent, and seven bits for the fraction. This data type is meant to give 32-bit style ranges, but with reduced accuracy in the fraction. As machine learning is resilient to this type of precision loss, workloads that would have used a 32-bit float can now use a 16-bit bfloat16.

These can be represented as:

Data Type Representations

| Type | Total Bits | Exponent Bits | Fraction Bits | Precision | Range | Speed |
|---|---|---|---|---|---|---|
| float32 | 32 | 8 | 23 | High | High | Slow |
| float16 | 16 | 5 | 10 | Low | Low | 2x Fast |
| bfloat16 | 16 | 8 | 7 | Lower | High | 2x Fast |
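Because bfloat16 shares its sign and exponent layout with float32, one simple way to picture it is as the top 16 bits of a float32. The Python sketch below does exactly that by truncation. This is an assumption for illustration: real hardware may round rather than truncate, and nothing here reflects how Cooper Lake implements the format. All function names are ours.

```python
import struct


def float32_bits(x):
    """Return the 32-bit pattern of x stored as an IEEE 754 float32."""
    return struct.unpack("<I", struct.pack("<f", x))[0]


def to_bfloat16_bits(x):
    """Keep the top 16 bits of the float32 encoding (truncation, no rounding)."""
    return float32_bits(x) >> 16


def from_bfloat16_bits(b):
    """Expand a bfloat16 pattern back to a Python float by zero-filling the low bits."""
    return struct.unpack("<f", struct.pack("<I", (b & 0xFFFF) << 16))[0]


def split_bfloat16(b):
    """Return (sign, exponent, fraction) using the 1/8/7 bit split."""
    return (b >> 15) & 0x1, (b >> 7) & 0xFF, b & 0x7F


for value in (3.14159265, 1e38):
    b = to_bfloat16_bits(value)
    print(value, hex(b), split_bfloat16(b), from_bfloat16_bits(b))
```

The first value comes back as 3.140625, showing the precision given up with only seven fraction bits, while 1e38 survives the round trip to within about one percent, a magnitude far beyond the largest finite float16 value of 65504.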

This is breaking news and will be updated as we receive more information.

Related Reading:

- Intel 10nm Production Update: Systems on Shelves For Holiday 2019

- Intel’s Xeon Scalable Roadmap Leaks: Cooper Lake-SP, Ice Lake-SP Due in 2020

- Power Stamp Alliance Exposes Ice Lake Xeon Details: LGA4189 and 8-Channel Memory

- Intel’s High-End Cascade Lake CPUs to Support 3.84 TB of Memory Per Socket

- Intel Documents Point to AVX-512 Support for Cannon Lake Consumer CPUs

- Intel Begins EOL Plan for Xeon Phi 7200-Series ‘Knights Landing’ Host Processors

- Intel Shows Xeon Scalable Gold 6138P with Integrated FPGA, Shipping to Vendors

- Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

51 Comments

nevcairiel - Wednesday, August 8, 2018

Apple just uses the process technology of other companies, they didn't develop it. If anyone, you should be praising TSMC for steady progress in the process-department, not Apple.

(The same can be translated for AMD, and anyone else really, since Intel is a rare occurrence with both designing and making the chips themselves.)

nevcairiel - Wednesday, August 8, 2018

Also, it absolutely does matter "how you measure" it. Because the smaller you get, the harder it is to make advances. If someone else is behind the curve, they'll have an easier time catching up than the frontrunners making new developments.

This is not meant to excuse the delays Intel faced, I just don't know what went wrong there, if they were too ambitious with their goals and it backfired or whatever.

rahvin - Wednesday, August 8, 2018

Transistor sizes are essentially frozen at 16nm, FinFET and all the other technologies to allow die shrinks just dope the transistors in a way that opens them back up to 16nm. Based on this I'd say it's pretty likely that even if we can continue to shrink the trace sizes without quantum effects blowing up, the transistor itself just can't operate below 16nm because the electrons keep tunneling out.

I'd also like to point out that Intel's 10nm chips "shipping" are a joke, it was a limited run of like 5k chips that barely work, generate more heat and less computing power than even the lowest grade processor from the previous generation. Intel's 10nm was a marketing gimmick and not in the least real. AMD has not only caught up to Intel at this point, by 2020 they should be at least one process node ahead of Intel and the performance leader. Intel's response has been to raise prices again to compensate for the revenue AMD is going to take from them.

Frankly it's astounding how bad it is for Intel right now. It's also astounding how few heads have rolled at Intel for the 10nm debacle.

silverblue - Thursday, August 9, 2018

Assuming people believe that GF's 7nm process is any more advanced than Intel's 10nm process. Regardless of name, don't let that sway you into thinking it's actually going to be superior, at least not until mass production starts on both nodes. 7nm might end up being better, it might not clock as high, no idea. Historically, Intel's process has been better at the same node, and it's only now that GF are really competitive (after years of shafting AMD).

There's a table on this link which is a rather brief comparison between the competing processes:

https://www.semiwiki.com/forum/content/7602-semico...

sgeocla - Thursday, August 9, 2018

Intel initially revealed that its own 10nm process was "within" 17% of the best 7nm process of its competitors. Within means they are not ahead but behind. AMD has access to 7nm production from both GF and TSMC so it's likely they have their pick from at least 4 variants of 7nm, one for HP (high frequency) and one for LP from each fab. They are going to use the best variant for the best chip use case.

And according to Semiaccurate, who claims access to internal documents from Intel (and stated for some time that 10nm Ice Lake was postponed again for late 2020), the next 10nm chips that are going to be released are going to be more like "12nm" (14nm++ with some 10nm features) because the initial 10nm process was never going to yield big enough dies for desktops or servers.

LurkingSince97 - Friday, August 10, 2018

Intel's 10nm is about the same size as the others' 7nm. Its performance (power and/or frequency) may be higher, as is typical of Intel's processes as they often build in some extra features that are complicated and hard to design for but improve performance.

The foundries can't really do this, as this would make their product harder to use and more expensive.

The reason why the numbers are off is that when the foundries went from 20nm to 16/14 they _DID NOT SHRINK_ they just added fin-fets (mostly, there were other minor changes). So now they were "14nm" but really barely smaller than intel's 22nm. Intel's 14nm was quite a bit smaller than those. For a variety of reasons, the measurements between foundries and various fabs can no longer really be described well with one number like '7nm'.

Really, just look at how much die space 256K of cache takes up, and how much space some logic takes up and compare that. What is important to compare is density. Performance / Power are also very important but aren't things that the 'nm' number can indicate anymore. Remember when TSMC went from 28nm planar to 20nm planar? Remember how Nvidia and AMD did not bother to use it because it only provided density increases and no performance increase? Yeah, the 'nm' of a process is now the least important thing.

wumpus - Thursday, August 9, 2018

"Historically, Intel's process has been better at the same node, and it's only now that GF are really competitive (after years of shafting AMD)."

I'm not so sure of that, but largely because Intel has been a node ahead of *everybody*, not just GF/AMD. Also AMD largely screwed itself with the Phenom, Bulldozer, Vega and other lousy designs.

IBM traditionally has been able to produce at nodes similar to Intel, but only for extremely low volume (and they were presumably indifferent to yields, as these go into ultra-high margin machines).

Having TSMC (and hopefully GF) catch up to Intel while AMD has the design side of things working again (rumors that Vega was sacrificed to the Sony-financed Navi imply that Navi might be pretty good, and Zen is amazingly strong) might bring back the strongest competition since Intel tried to use Itanium and Pentium4 to compete with Athlon64 (no process advantage in the world could fix that).

Wilco1 - Thursday, August 9, 2018

Not sure what you mean with "Transistor sizes are essentially frozen at 16nm" - transistors are scaling extremely well, much better than metal. For example Samsung's 7nm uses a fin pitch of 27nm which is almost perfect 2x scaling from their 14nm. The fin widths are already much smaller than 16nm. The foundries are very confident about 5nm and even 3nm, so we're not near the end of scaling.

Kvaern1 - Wednesday, August 8, 2018

Imagine if, everything else being equal, Intel had said yes we'll fab to Apple all those years ago.

BillBear - Wednesday, August 8, 2018

Passing up Apple's business is widely thought to be Intel's biggest mistake under Otellini.

They left a hell of a lot of revenue on the table for Samsung and TSMC who reinvested their profits into quickly improving their process technology.