The Intel SSD 660p SSD Review: QLC NAND Arrives For Consumer SSDs

by Billy Tallis on August 7, 2018 11:00 AM EST

Power Management Features

Real-world client storage workloads leave SSDs idle most of the time, so the active power measurements presented earlier in this review only account for a small part of what determines a drive's suitability for battery-powered use. Especially under light use, the power efficiency of an SSD is determined mostly by how well it can save power when idle.

For many NVMe SSDs, the closely related matter of thermal management can also be important. M.2 SSDs can concentrate a lot of power in a very small space. They may also be used in locations with high ambient temperatures and poor cooling, such as tucked under a GPU on a desktop motherboard, or in a poorly-ventilated notebook.

| Intel SSD 660p 1TB NVMe Power and Thermal Management Features | | |
| --- | --- | --- |
| Controller | Silicon Motion SM2263 | |
| Firmware | NHF034C | |
| NVMe Version | Feature | Status |
| 1.0 | Number of operational (active) power states | 3 |
| 1.1 | Number of non-operational (idle) power states | 2 |
| 1.1 | Autonomous Power State Transition (APST) | Supported |
| 1.2 | Warning Temperature | 77°C |
| 1.2 | Critical Temperature | 80°C |
| 1.3 | Host Controlled Thermal Management | Supported |
| 1.3 | Non-Operational Power State Permissive Mode | Not Supported |

The Intel SSD 660p's power and thermal management feature set is typical for current-generation NVMe SSDs. The rated exit latency from the deepest idle power state is quite a bit faster than what we have measured in practice from this generation of Silicon Motion controllers, but otherwise the drive's claims about its idle states seem realistic.

| Intel SSD 660p 1TB NVMe Power States | | | | |
| --- | --- | --- | --- | --- |
| Controller | Silicon Motion SM2263 | | | |
| Firmware | NHF034C | | | |
| Power State | Maximum Power | Active/Idle | Entry Latency | Exit Latency |
| PS 0 | 4.0 W | Active | - | - |
| PS 1 | 3.0 W | Active | - | - |
| PS 2 | 2.2 W | Active | - | - |
| PS 3 | 30 mW | Idle | 5 ms | 5 ms |
| PS 4 | 4 mW | Idle | 5 ms | 9 ms |

Note that the above tables reflect only the information provided by the drive to the OS. The power and latency numbers are often very conservative estimates, but they are what the OS uses to determine which idle states to use and how long to wait before dropping to a deeper idle state.

Idle Power Measurement

SATA SSDs are tested with SATA link power management disabled to measure their active idle power draw, and with it enabled for the deeper idle power consumption score and the idle wake-up latency test. Our testbed, like any ordinary desktop system, cannot trigger the deepest DevSleep idle state.

Idle power management for NVMe SSDs is far more complicated than for SATA SSDs. NVMe SSDs can support several different idle power states, and through the Autonomous Power State Transition (APST) feature the operating system can set a drive's policy for when to drop down to a lower power state. There is typically a tradeoff in that lower-power states take longer to enter and wake up from, so the choice about what power states to use may differ for desktops and notebooks.
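To make the tradeoff concrete, here is a minimal sketch of how a host might turn the drive's reported power states into an APST-style policy. The power and latency figures are the ones the 660p reports to the OS. The latency budgets and the timeout multiplier are hypothetical values chosen for illustration, not the policy of any particular operating system.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class PowerState:
    name: str
    max_power_mw: float
    operational: bool
    entry_latency_ms: float = 0.0
    exit_latency_ms: float = 0.0

    @property
    def round_trip_ms(self) -> float:
        # Cost of entering the state and waking up from it again.
        return self.entry_latency_ms + self.exit_latency_ms


# Values reported by the Intel SSD 660p 1TB (firmware NHF034C).
STATES = [
    PowerState("PS 0", 4000, True),
    PowerState("PS 1", 3000, True),
    PowerState("PS 2", 2200, True),
    PowerState("PS 3", 30, False, 5, 5),
    PowerState("PS 4", 4, False, 5, 9),
]


def build_idle_policy(
    states: List[PowerState],
    latency_budget_ms: float,
    timeout_multiplier: float = 50,  # assumption: wait 50x the round trip
) -> List[Tuple[float, str]]:
    """Return (idle_time_before_transition_ms, state_name), shallowest first.

    Idle states whose entry + exit latency exceeds the budget are skipped.
    """
    policy = []
    for state in states:
        if state.operational:
            continue
        if state.round_trip_ms > latency_budget_ms:
            continue
        policy.append((state.round_trip_ms * timeout_multiplier, state.name))
    return sorted(policy)


# Hypothetical budgets: a desktop that tolerates 10 ms, a notebook 25 ms.
desktop_policy = build_idle_policy(STATES, latency_budget_ms=10)
notebook_policy = build_idle_policy(STATES, latency_budget_ms=25)
print("desktop: ", desktop_policy)
print("notebook:", notebook_policy)
```

With these assumed budgets the desktop profile stops at PS 3, while the notebook profile also uses PS 4 after a longer idle period. A tighter budget or a larger multiplier trades power savings for responsiveness.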

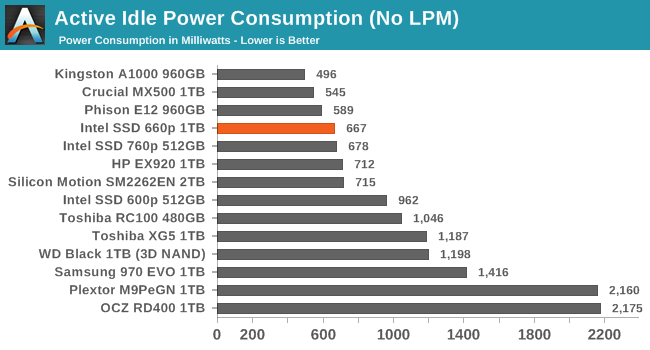

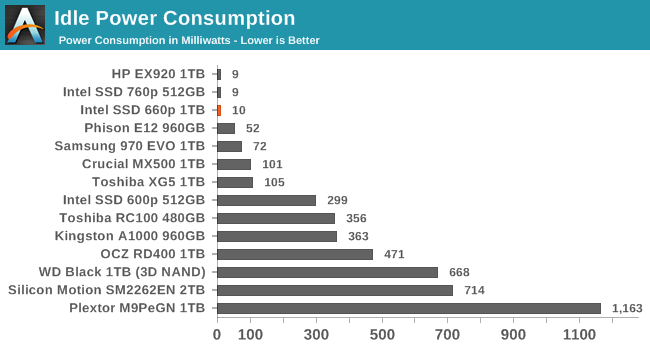

We report two idle power measurements. Active idle is representative of a typical desktop, where none of the advanced PCIe link or NVMe power saving features are enabled and the drive is immediately ready to process new commands. The idle power consumption metric is measured with PCIe Active State Power Management L1.2 state enabled and NVMe APST enabled if supported.

The Intel 660p has a slightly lower active idle power draw than the SM2262-based drives we've tested, thanks to the smaller controller and reduced DRAM capacity. It isn't the lowest active idle power we've measured from an NVMe SSD, but it is definitely better than most high-end NVMe drives. In the deepest idle state our desktop testbed can use, we measure an excellent 10mW draw.
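A rough back-of-the-envelope calculation shows why idle power dominates for lightly used drives. This sketch uses the 10mW deep idle figure measured above and the 4.0 W maximum the drive reports for PS 0 as an upper bound on active power. The 1% active duty cycle and the 0.5 W active idle figure are assumptions for illustration only, not measurements from this review.

```python
def average_power_w(active_fraction: float,
                    active_power_w: float,
                    idle_power_w: float) -> float:
    """Time-weighted average power for a drive that is either busy or idle."""
    if not 0.0 <= active_fraction <= 1.0:
        raise ValueError("active_fraction must be between 0 and 1")
    return (active_fraction * active_power_w
            + (1.0 - active_fraction) * idle_power_w)


ACTIVE_W = 4.0                  # PS 0 maximum power reported by the drive
DEEP_IDLE_W = 0.010             # deep idle draw measured on our testbed
ASSUMED_ACTIVE_IDLE_W = 0.5     # assumption: no power saving features enabled
ASSUMED_ACTIVE_FRACTION = 0.01  # assumption: drive is busy 1% of the time

with_deep_idle = average_power_w(ASSUMED_ACTIVE_FRACTION, ACTIVE_W, DEEP_IDLE_W)
without_deep_idle = average_power_w(ASSUMED_ACTIVE_FRACTION, ACTIVE_W,
                                    ASSUMED_ACTIVE_IDLE_W)

print(f"average with deep idle:    {with_deep_idle * 1000:.1f} mW")
print(f"average without deep idle: {without_deep_idle * 1000:.1f} mW")
```

Under these assumptions the average draw is roughly 0.05 W with deep idle and roughly 0.54 W without it, so the idle state accounts for about a tenfold difference even though the active power is identical in both cases.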

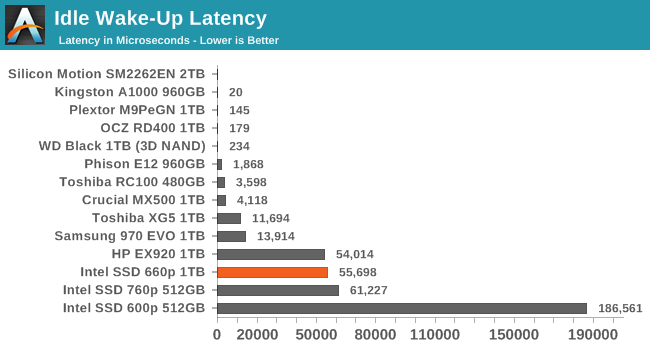

The Intel 660p's idle wake-up time of about 55ms is typical for Silicon Motion's current generation of controllers and much better than their first-generation NVMe controller as used in the Intel SSD 600p. The Phison E12 can wake up in under 2ms from a sleep state of about 52mW, but otherwise the NVMe SSDs that wake up quickly were saving far less power than the 660p's deep idle.

86 Comments


DanNeely - Tuesday, August 7, 2018 - link

Over 18 months between 2013 and 2015 Tech Report tortured a set of early generation SSDs to death via continuous writing until they failed. I'm not aware of anyone else doing the same more recently. Power off retention testing is probably beyond anyone without major OEM sponsorship because each time you power a drive on to see if it's still good you've given its firmware a chance to start running a refresh cycle if needed. As a result to look beyond really short time spans, you'd need an entire stack of each model of drive tested.

https://techreport.com/review/27909/the-ssd-endura...

Oxford Guy - Tuesday, August 7, 2018 - link

Torture tests don't test voltage fading from disuse, though.

StrangerGuy - Tuesday, August 7, 2018 - link

And audiophiles always claim no tests are ever enough to disprove their supernatural hearing claims, so...

Oxford Guy - Tuesday, August 7, 2018 - link

SSD defects have been found in a variety of models, such as the 840 and the OCZ Vertex 2.

mapesdhs - Wednesday, August 8, 2018 - link

Please explain the Vertex2, because I have a lot of them and so far none have failed. Or do you mean the original Vertex2 rather than the Vertex2E which very quickly replaced it? Most of mine are V2Es, it was actually quite rare to come across a normal V2, they were replaced in the channel very quickly. The V2E is an excellent SSD, especially for any OS that doesn't support TRIM, such as WinXP or IRIX. Also, most of the talk about the 840 line was of the 840 EVO, not the standard 840; it's hard to find equivalent coverage of the 840, most sites focused on the EVO instead.

Valantar - Wednesday, August 8, 2018 - link

If the Vertex2 was the one that caused BSODs and was recalled, then at least I had one. Didn't find out that the drive was the defective part or that it had been recalled until quite a lot later, but at least I got my money back (which then paid for a very nice 840 Pro, so it turned out well in the end XD).

Oxford Guy - Friday, August 10, 2018 - link

Not recalled. There was a program where people could ask OCZ for replacements. But, OCZ also "ran out" of stock for that replacement program and never even covered the drive that was most severely affected: the 240 GB 64-bit NAND unit.

BurntMyBacon - Wednesday, August 8, 2018 - link

I believe the problems that plagued the 840 EVO were relevant to the 840 based on two facts. Both SSDs used the same flash. Samsung eventually released a (partial) fix for the 840 similar to the 840 EVO. The fix was apparently incompatible with Linux/BSD, though.

Spunjji - Wednesday, August 8, 2018 - link

You'd also be providing useless data by doing so. The drives will have been superseded at least twice before you even have anything to show from the (very expensive) testing.

JoeyJoJo123 - Tuesday, August 7, 2018 - link

>muh ssd endurance boogeyman

Like clockwork.