The Intel Core i7-8086K Review

by Ian Cutress on June 11, 2018 8:00 AM EST

Posted in: CPUs, Intel, Core i7, Anniversary, Coffee Lake, i7-8086K, 5 GHz, 8086K, 5.0 GHz

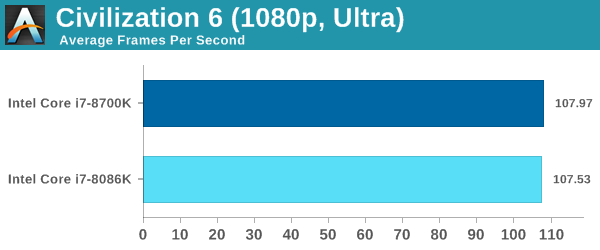

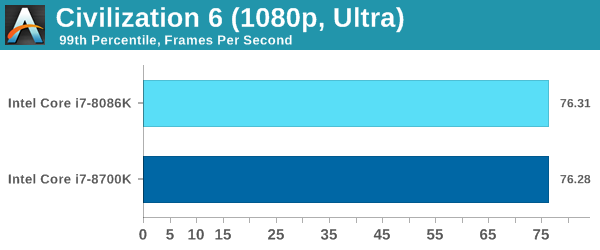

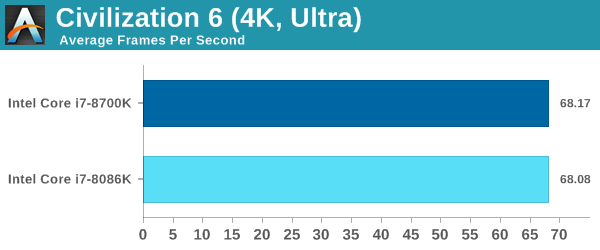

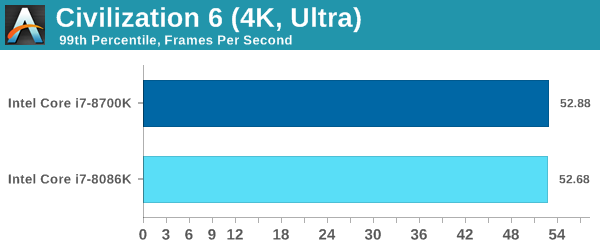

Civilization 6

First up in our CPU gaming tests is Civilization 6. Originally penned by Sid Meier and his team, the Civ series of turn-based strategy games is a cult classic, and many an excuse for an all-nighter trying to get Gandhi to declare war on you due to an integer overflow. Truth be told, I never actually played the first version, but I have played every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy, and it is a game that is easy to pick up but hard to master.

Benchmarking Civilization has always been somewhat of an oxymoron: for a turn-based strategy game, the frame rate is not necessarily the important thing, and in the right mood something as low as 5 frames per second can be enough. With Civilization 6, however, Firaxis went hardcore on visual fidelity, trying to pull you into the game. As a result, Civilization can be taxing on graphics and CPUs as we crank up the details, especially in DirectX 12.

Perhaps a more pertinent benchmark would be during the late game, when in the older versions of Civilization it could take 20 minutes to cycle around the AI players before the human regained control. The new version of Civilization has an integrated ‘AI Benchmark’, although it is not yet part of our benchmark portfolio due to technical reasons which we are trying to solve. Instead, we run the graphics test, which provides an example of a mid-game setup at our settings.

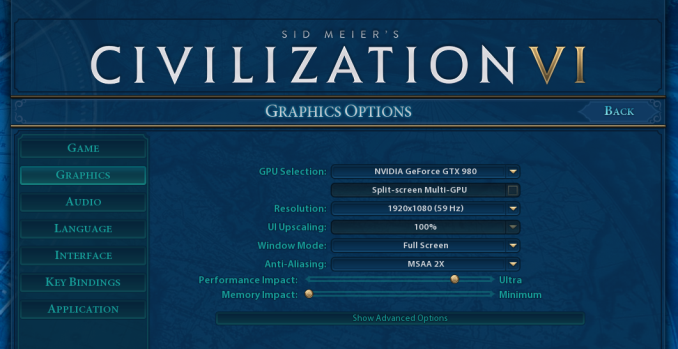

At both 1920x1080 and 4K resolutions, we run the same settings. Civilization 6 has sliders for MSAA, Performance Impact and Memory Impact. The latter two refer to detail and texture size respectively, and are rated from 0 (lowest) to 5 (extreme). We run our Civ6 benchmark in position four for performance (ultra) and 0 on memory, with MSAA set to 2x.

For reviews where we include 8K and 16K benchmarks (Civ6 allows us to benchmark extreme resolutions on any monitor) on our GTX 1080, we run the 8K tests similar to the 4K tests, but the 16K tests are set to the lowest option for Performance.

As a reminder, ASRock were not able to loan us the exact GPU that I normally use for our gaming testing. Instead we were able to source an RX 580, so this means that our gaming testing data will only have two data points: a Core i7-8700K and a Core i7-8086K. We will get some more data next week when we are back in the office.

All of our benchmark results can also be found in our benchmark engine, Bench.

ASRock RX 580 Performance

Almost zero difference for Civilization between the two. The 8086K is never in a situation to fire up to 5.0 GHz.

111 Comments


bug77 - Monday, June 11, 2018 - link

So what happened here? It looks like Intel's play with frequencies made this throttle more often. At least that the only explanation I can find for 8700k ending up better in so many tests.

Tkan215215 - Monday, June 11, 2018 - link

As always its called milking and wallet ripper they know people still Buy them anyway

bug77 - Monday, June 11, 2018 - link

I wasn't expecting this to be a cost-effective part, but rather a collector-oriented one. But mostly worse than a standard part is surely unexpected.

AutomaticTaco - Monday, June 11, 2018 - link

I don't think it's worse as much as the silicon lottery exists regardless of it. In other words, even among speed binned parts some OC better than others. And that's true for both the 8086K, the 8700K or any others.

just4U - Wednesday, June 13, 2018 - link

I agree bug, I'd be very interested in this processor if it brought something to the table to justify it's cost. The 4790K did with a better thermal design. They could have added a kick ass cooler, or a factory delid and redo for better thermals. Something .. anything besides a small bump in clocks.

Drumsticks - Monday, June 11, 2018 - link

It might be milking, but I kind of have a hard time believing that. They're only making 50,000 of them, and only at about a 21% markup over the 8700k. But they're flat out giving away 16% of the chips. I doubt Intel is going to milk much money beyond their regular business from this. It's the companies 50th anniversary year, so I'm going to guess it's just positive fanfare and a collector's item related to that and it happening to be an anniversary for a well known processor at the same time.

Old_Fogie_Late_Bloomer - Monday, June 11, 2018 - link

I enjoy hating Intel as much as the next guy but this is a good point.

Revenue from 41,914 8086Ks: $17,813,450

Revenue from 50,000 8700Ks: $17,500,000 (at $350 apiece)

The remaining $313,450 doesn't really feel like a lot of money when you factor in binning the chips and dealing with all the other overhead of the promotion, especially since Intel isn't getting all of that money anyway.
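The arithmetic in this thread can be checked with a short sketch. It uses only the figures quoted in the comments above (50,000 units, 41,914 sold, $17,813,450 in revenue, $350 per 8700K); the per-chip 8086K price and the giveaway count are not stated directly, so they are derived here rather than assumed, and all variable names are illustrative.

```python
# Figures quoted in the comments above
total_units = 50_000          # 8086Ks being made
sold_8086k = 41_914           # units counted as sold in the comment
revenue_8086k = 17_813_450    # quoted revenue from those units, in dollars
price_8700k = 350             # quoted 8700K price, in dollars

# Derived values (not stated directly in the comments)
given_away = total_units - sold_8086k              # chips not sold
implied_price_8086k = revenue_8086k / sold_8086k   # per-chip price implied by the revenue
revenue_8700k = total_units * price_8700k          # selling 50,000 standard parts instead
difference = revenue_8086k - revenue_8700k         # extra revenue from the special edition
giveaway_share = given_away / total_units          # fraction of chips given away
markup = implied_price_8086k / price_8700k - 1     # premium over the 8700K

print(f"Given away: {given_away} chips ({giveaway_share:.1%})")
print(f"Implied 8086K price: ${implied_price_8086k:.2f} ({markup:.1%} markup)")
print(f"Revenue difference: ${difference:,}")
```

The derived share and markup round to the 16% and 21% mentioned earlier in the thread, and the difference matches the $313,450 quoted above. As the later replies note, this is a revenue comparison only; it says nothing about production cost or the retailer's cut.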

SanX - Monday, June 11, 2018 - link

This was actually not the revenue but the PROFIT you blind people with easily effed brains. The production cost for this chip was probably less then 20 bucks. The processor in your phone is probably more hi-tech, has more transistors, more cores, and was made on more advances factories with 10nm litho being all sold below $25.

mkaibear - Tuesday, June 12, 2018 - link

What are you smoking?

His maths is bang on, although he neglects the cut the retailer will be taking off the top for that. They aren't making that much profit off each chip.

SanX - Tuesday, June 12, 2018 - link

They aren't making that much profit off each chip? If they aren't making huge profits then all mobile chip factories lose money by selling the same transistor count processors like the one in Apple or Samsung phones for just $25