Assessing Cavium's ThunderX2: The Arm Server Dream Realized At Last

by Johan De Gelas on May 23, 2018 9:00 AM EST- Posted in

- CPUs

- Arm

- Enterprise

- SoCs

- Enterprise CPUs

- ARMv8

- Cavium

- ThunderX

- ThunderX2

ThunderX: From Small & Simple to Wide & Complex

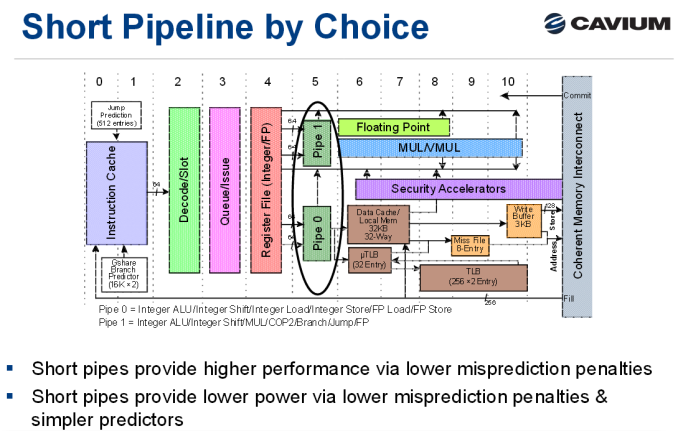

As a brief recap, the original ThunderX was an improved version of the Octeon III: a dual-issue in-order CPU core with two short pipelines.

The advantage of the original ThunderX design is that such a simple core can be very energy efficient, especially for "low ILP" (instruction level parallelism) workloads such as web servers and most database servers. Of course, such a short pipeline limits the clockspeed, and such a simple in-order design offers low single threaded performance in medium and high ILP workloads, whereas more advanced out-of-order processors can extract significant parallelism.

Cavium's "New" Core: Vulcan

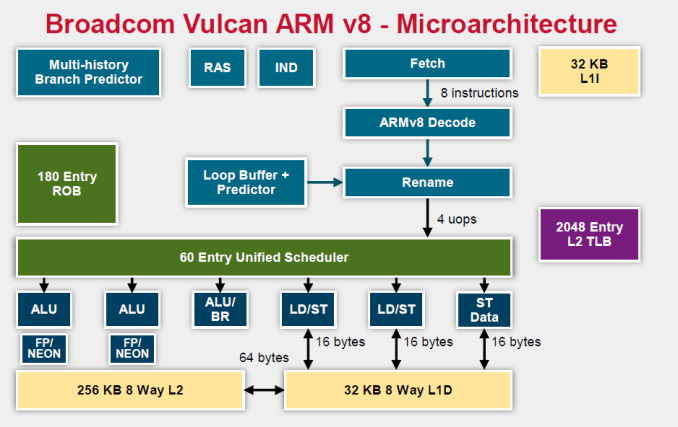

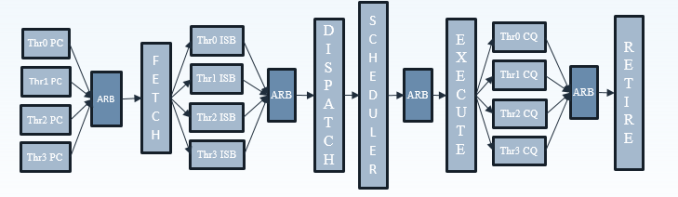

Relative to the original ThunderX, the Vulcan core of the ThunderX2 is an entirely different beast. Announced in 2014 by Broadcom, it is a relatively wide core that runs 4 simultaneous threads (SMT4). As a result, the wide back end should be quite busy even when running low-ILP server workloads.

To make sure that all 4 SMT threads can be sustained, the ThunderX2 front-end can fetch up to 64 bytes from the 8-way set associative 32 KB instruction cache, which is outfitted with a simple next line prefetcher. However, fetching 8 instructions is only possible if there is no taken branch inside those 64 bytes. In that case, the fetch breaks off at the taken branch.

That means that in branch intensive code (databases, AI...) the fetcher will get +/- 5 instructions per clock cycle on average, as one out of 5 instructions is a branch. The fetched instructions are then sent to a smoothing buffer – a buffer where the fetched instructions are held for decoding.

The decoder will then work on a bundle of 4 instructions. Between the decoder and the rename phase each thread has "skid buffer" which consists of 8 bundles. So between the 4 threads up to 32 bundles (128 instructions) can be skid buffered at any one time..

Those 4 instructions – a bundle – travel together through the pipeline until they reach the unified issue queue of the scheduler. Just like Intel has implemented in Nehalem, there is also a loop buffer and predictor, which Intel used to call a "Loop Stream Detector". This loop buffer avoids branch mispredictions and contains decoded µops, which "shortens" the pipeline and reduces the amount of power spent on decoding.

Overall, up to 6 instructions can be executed at the same time. This is divided into 2 ALU/FP/NEON slots, 1 ALU/branch slot, 2 load/store slots (16 bytes), and 1 pure store slot that sends 16 bytes to the D-cache. There is a small (Cavium would not disclose how small) L1 TLB for zero latency translation from Virtual to physical addresses. There is no hardware prefetcher for the L1 D-cache, but the L2 cache has a rather complex hardware prefetcher which is able to recognize patterns (besides being able to stride or fetching the next line).

This is enough to feed the back-end, which can sustain 4 instructions per cycle from 4 different threads.

Micro Architecture Differences

Ultimately Cavium has only published a limited amount of information on the ThunderX2 cores, so there are some limits to our knowledge. But we've gone ahead and summarized some of the key specifications of the different CPU architectures below.

| Feature | Cavium ThunderX2 |

Qualcomm Centriq "Falkor" |

Intel "Skylake"-SP |

AMD EPYC "Zen" |

| L1-I cache Associativity |

32 KB 8-way |

64KB 8-way (+ 24 KB L0) |

32 KB 8-way |

64KB 4-way |

| L1-D cache Associativity |

32 KB 8-way |

32 KB 8-way* |

32 KB 8-way |

32 KB 8-way |

| Load Bandwith | 2x 16B | 2x 16B | 2x 32B | 2x 16B |

| L2-cache | 256 KB 8-way |

256 KB 8-way |

1 MB 16-way |

512 KB 8-way |

| Fetch Width | 8 instructions | 4 instructions | 16 bytes (+/- 4-5 x86) | 32 bytes (+/- 6-8 x86) |

| Issue Queue | 60 | 76 | 97 unified | 6x14 |

| Sustainable Instructions/cycle | 4 | 4 | 5-6 | 4-5 |

| Instructions in Flight | 180 (ROB) | 128 | 224 (ROB) | 192 |

| Int. Pipeline Length |

? |

15 stages | 19 stages 14 stage from µop cache |

19 stages? |

| TLB Instructions TLB Data |

"Small L1" + 2048 unified L2 | ? 64+512 |

128 64 +1536 Unified |

8+64+512 64+1532 |

A detailed analysis is out of the scope of this article. But you can read Ian's analyses of the Falkor, Skylake and Zen architectures here at AnandTech. We limit ourselves to the most obvious differences.

It is pretty clear that Intel's single-threaded performance remains unchallenged: the Skylake core is the widest core, keeps the most instructions in flight, and most importantly runs at the highest clockspeed. The ThunderX2 core is the one that fetches the most instructions per cycle, as it has to be able to keep 4 threads running. The fetch unit will grab 8 instructions from one thread, than grab 8 from the second thread and it will keep cycling between threads. A bad prediction could thus lower the performance of single thread significantly.

97 Comments

View All Comments

Wilco1 - Wednesday, May 23, 2018 - link

That's your uninformed opinion... Microsoft has different plans.ZolaIII - Thursday, May 24, 2018 - link

Windows is DOA anyway. M$ makes more money this day's on Linux then it does on Window's combined. Only thing making it still alive is MS Office but even that will change in couple of years.Wilco1 - Thursday, May 24, 2018 - link

Calling Windows dead when it ships on 95+% of PCs sold is eh... a little bit premature. Get back to me when 50+% of PCs ship with Linux instead of Windows.ZolaIII - Friday, May 25, 2018 - link

Get back to me when windows ships with 5% in; servers, embedded, router's, smartphones...jimbo2779 - Thursday, May 24, 2018 - link

In what way is it making more from Linux?ZolaIII - Friday, May 25, 2018 - link

https://www.computerworld.com/article/3271085/micr...Even your Windows PC, Office and everything else from Microsoft this day's is backed up by a cloud which is Linux based.

defaultluser - Wednesday, May 23, 2018 - link

Page 11 has "Apache Spark and Energy Consumption" in the title, but the page only containsApache Spark results. WHERE IS THE ENERGY CONSUMPTION?

We need power consumption tests during benchmarks to show if the architecture has better perf/watt than Intel. Otherwise, why did you publish this obviously incomplete article?

Ryan Smith - Wednesday, May 23, 2018 - link

Whoops. Sorry, that was a small section that was moved to page 5.ruthan - Wednesday, May 23, 2018 - link

Well, where is the most important chart performance per dollar comparison with x86 solution?That virtualization support, is some arm specific yes i we need feature and proprietary hell like Lpars.. or its finally support Vmware? - that means virtualization.

Where is could it run Crysis test?

HStewart - Wednesday, May 23, 2018 - link

VMWare is not currently support - and probably not for a long time - unless they ran in emulation mode and it would slower than Atomhttps://kb.vmware.com/s/article/1003882