The NVIDIA Titan V Deep Learning Deep Dive: It's All About The Tensor Cores

by Nate Oh on July 3, 2018 10:15 AM EST

DeepBench Training: Convolutions

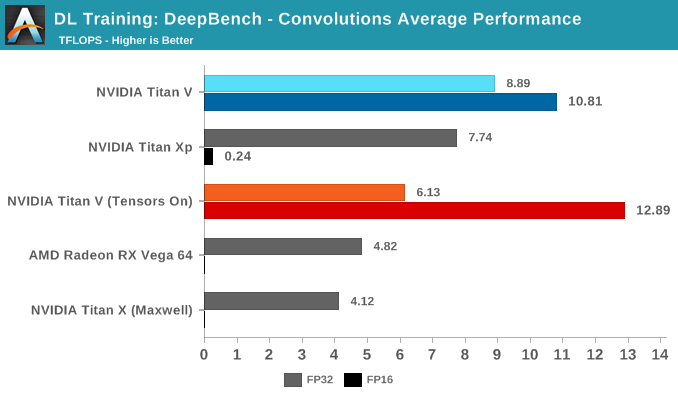

Moving on to DeepBench's convolutions training workloads, we should see tensor cores significantly accelerate performance once again. Given that convolutional layers are essentially standard for image recognition and classification, convolutions are one of the biggest potential beneficiaries of tensor core acceleration.

Taking the average of all tests, we again see Volta's mixed precision (FP16 with tensor cores enabled) taking the lead. Unlike with GEMM, enabling tensor cores on FP32 convolutions results in a tangible performance penalty.

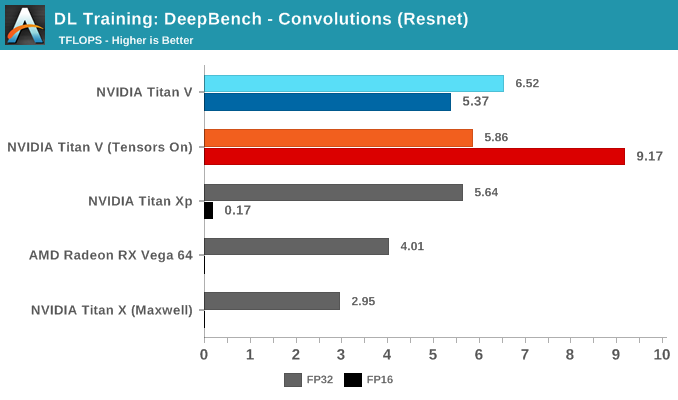

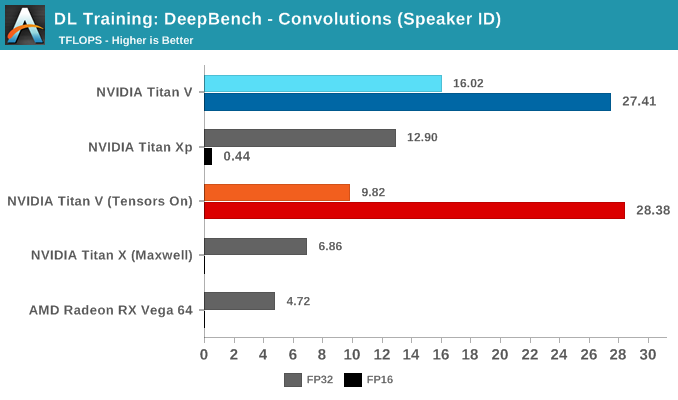

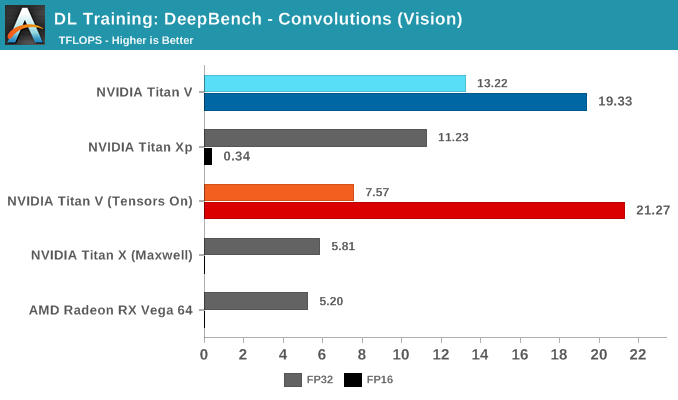

Breaking the tests out by application does not particularly clarify matters. It's only when we return to the DeepBench convolution kernels that we get a little more detail. Performance drops for both mixed precision modes when computations involve ill-matching tensor dimensions, and while standard precision modes follow a cuDNN-specified fastest forward algorithm, such as Winograd, the mixed precision modes are obliged to use implicit precomputed GEMM for all kernels.

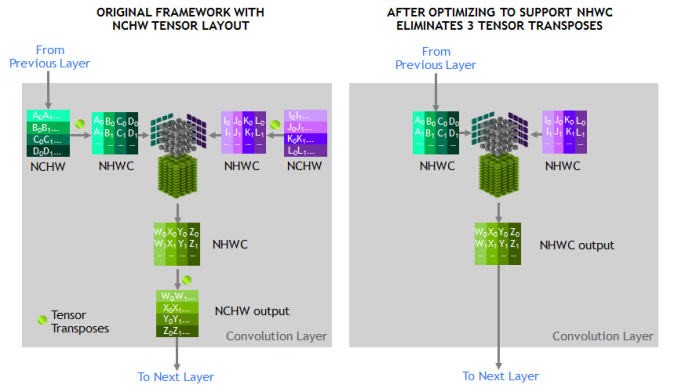

To qualify for tensor core acceleration, both input and output channel dimensions must be a multiple of eight, and the input, filter, and output data types must be half precision. Without going too deep into detail, the implementation of convolution acceleration with tensor cores requires tensors to be in an NHWC format (Number-Height-Width-Channel), but DeepBench, and most frameworks, expect NCHW-formatted tensors. In this case, the input channels are not multiples of eight, but DeepBench does automatic padding to account for this.
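The two conditions above (channel counts that are multiples of eight, and half-precision data types) can be sketched as a small check. This is only an illustration of the rule as stated in the text: `ConvSpec`, `qualifies_for_tensor_cores`, `pad_to_multiple`, and the `"fp16"`/`"fp32"` labels are hypothetical names, not part of cuDNN or DeepBench, and the real libraries make this decision internally.

```python
from dataclasses import dataclass

# From the text: channel dimensions must be a multiple of eight.
CHANNEL_MULTIPLE = 8


@dataclass
class ConvSpec:
    """Hypothetical description of one convolution (not a cuDNN/DeepBench type)."""
    in_channels: int
    out_channels: int
    dtype: str  # hypothetical labels: "fp16" or "fp32"


def pad_to_multiple(value: int, multiple: int = CHANNEL_MULTIPLE) -> int:
    """Round value up to the next multiple; aligned values are unchanged."""
    return ((value + multiple - 1) // multiple) * multiple


def qualifies_for_tensor_cores(spec: ConvSpec) -> bool:
    """Apply the rule as stated in the text: FP16 data, channels divisible by eight."""
    return (
        spec.dtype == "fp16"
        and spec.in_channels % CHANNEL_MULTIPLE == 0
        and spec.out_channels % CHANNEL_MULTIPLE == 0
    )


# A first-layer image convolution typically has 3 input channels (RGB).
rgb_layer = ConvSpec(in_channels=3, out_channels=64, dtype="fp16")
padded_layer = ConvSpec(pad_to_multiple(3), 64, "fp16")

print(qualifies_for_tensor_cores(rgb_layer))     # False
print(qualifies_for_tensor_cores(padded_layer))  # True
```

Padding the three RGB channels up to eight is the kind of adjustment the automatic padding mentioned above would have to make. The extra channels carry no information, so some of the accelerated work is spent on zeros.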

The other factor is that all these NCHW kernels would require transposition to NHWC, which NVIDIA has noted takes up appreciable runtime once convolutions are accelerated. This would affect both FP32 and FP16 mixed precision modes.
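To make the transposition cost concrete, here is a minimal pure-Python sketch of converting a flat NCHW buffer to NHWC. The function name `nchw_to_nhwc` is hypothetical, and this is not how cuDNN performs the conversion; it only shows the index arithmetic and that every element has to be read and rewritten.

```python
def nchw_to_nhwc(flat, n, c, h, w):
    """Reorder a flat, row-major NCHW buffer into NHWC order.

    Hypothetical helper for illustration; every one of the n*c*h*w
    elements is moved, which is where the runtime cost comes from.
    """
    if len(flat) != n * c * h * w:
        raise ValueError("buffer length does not match the given dimensions")
    out = [None] * len(flat)
    for ni in range(n):
        for ci in range(c):
            for hi in range(h):
                for wi in range(w):
                    src = ((ni * c + ci) * h + hi) * w + wi  # NCHW offset
                    dst = ((ni * h + hi) * w + wi) * c + ci  # NHWC offset
                    out[dst] = flat[src]
    return out


# One image, two channels, one row, two columns.
# NCHW order stores channel 0 as [0, 1], then channel 1 as [2, 3].
nchw = [0, 1, 2, 3]
print(nchw_to_nhwc(nchw, n=1, c=2, h=1, w=2))  # [0, 2, 1, 3]
```

In NHWC order, the channel values for each pixel sit next to each other, so the two-pixel example interleaves the channels. The work grows with the full tensor size and adds no arithmetic of its own, which is consistent with it becoming noticeable once the convolution itself is fast.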

Convolutions still have to be adjusted correctly to benefit from tensor core acceleration. As DeepBench uses the NVIDIA-supplied libraries and makefiles, it's interesting that the standard behavior here would be to force tensor core use at all times.

65 Comments

mode_13h - Wednesday, July 4, 2018 - link

It's not that hard, really. They're just saying Nvidia made a library (cuDNN), so that different deep learning frameworks don't each have to hand-optimize code for things like its exotic tensor cores.

For their part, AMD has a similar library they call MIOpen.

philehidiot - Wednesday, July 4, 2018 - link

Why thank you. That now does help it make a little more sense. The maths does make sense but the computer science is generally beyond me.

aelizo - Wednesday, July 4, 2018 - link

At that price point, I would have liked to see some comparison to 2x Titan Xp, or even some comparison to 3x 1080 Ti's.

Last year I saw some comparison between these sets on PyTorch:

https://medium.com/@u39kun/titan-v-vs-1080-ti-head...

mode_13h - Wednesday, July 4, 2018 - link

I'm suspicious that he's not actually using the tensor cores. The V100/GV100 also has double-rate fp16, like the P100/GP100 before it. So, a < 2x improvement from going to 16-bit suggests it might only be using the packed half-precision instructions, rather than the tensor cores.

Either that or he's not using batching and is completely limited by memory bottlenecks.

aelizo - Wednesday, July 4, 2018 - link

I suspect something similar, which is why Nate could have done a great job with a similar comparison.

Nate Oh - Monday, July 9, 2018 - link

Unfortunately, we only have 1 Titan Xp, which is actually on loan from TH. This class of device is (usually) not sampled by NVIDIA, so we could not have pursued what you suggest. We split custody of Titan V, and that alone was not an insignificant investment.

Additionally, mGPU DL analysis introduces a whole new can of worms. As some may have noticed, I have not mentioned NCCL/MPI, NVLink, Volta mGPU enhancements, All Reduce, etc. It's definitely a topic for further investigation if the demand and resources match.

mode_13h - Tuesday, July 10, 2018 - link

Multi-GPU scaling is getting somewhat esoteric, but perhaps a good topic for future articles.

Would be cool to see the effect of NVLink, if you can get access to such a system in the cloud. Maybe Nvidia will give you some sort of "press" access to their cloud?

ballsystemlord - Saturday, July 7, 2018 - link

Here are some spelling/grammar corrections. You write far fewer than most of the other authors at anandtech (If Ian had written this I would have needed 2 pages for all the corrections :) ). Good job!

"And Volta does has those separate INT32 units."

You mean "have".

And Volta does have those separate INT32 units.

"For our purposes, the tiny image dataset of CIFAR10 works fine as running a single-node on a dataset like ImageNet with non-professional hardware that could be old as Kepler"...

Missing "as".

For our purposes, the tiny image dataset of CIFAR10 works fine as running a single-node on a dataset like ImageNet with non-professional hardware that could be as old as Kepler...

"Moving forward, we're hoping that MLPerf and similar efforts make good headway, so that we can tease out a bit more secrets from GPUs."

Grammar error.

Moving forward, we're hoping that MLPerf and similar efforts make good headway, so that we can tease out a bit more of the secrets from GPUs.

mode_13h - Saturday, July 7, 2018 - link

Yeah, if that's the worst you found, no one would even *suspect* him for being a lolcat.

Vanguarde - Monday, July 9, 2018 - link

I purchased this card to get better frames in Witcher 3 at 4K everything maxed out, heavily modded. Never dips below 60fps and usually near 80-100fps