The AMD 2nd Gen Ryzen Deep Dive: The 2700X, 2700, 2600X, and 2600 Tested

by Ian Cutress on April 19, 2018 9:00 AM EST

Civilization 6

First up in our CPU gaming tests is Civilization 6. Originally penned by Sid Meier and his team, the Civ series of turn-based strategy games is a cult classic, and many an excuse for an all-nighter trying to get Gandhi to declare war on you due to an integer overflow. Truth be told I never actually played the first version, but I have played every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy, and it is a game that is easy to pick up, but hard to master.

Benchmarking Civilization has always been somewhat of an oxymoron – for a turn-based strategy game, the frame rate is not necessarily the important thing and, in the right mood, something as low as 5 frames per second can be enough. With Civilization 6 however, Firaxis went hardcore on visual fidelity, trying to pull you into the game. As a result, Civilization can be taxing on graphics and CPUs as we crank up the details, especially in DirectX 12.

Perhaps a more poignant benchmark would be during the late game, when in the older versions of Civilization it could take 20 minutes to cycle around the AI players before the human regained control. The new version of Civilization has an integrated ‘AI Benchmark’, although it is not yet part of our benchmark portfolio, due to technical reasons which we are trying to solve. Instead, we run the graphics test, which provides an example of a mid-game setup at our settings.

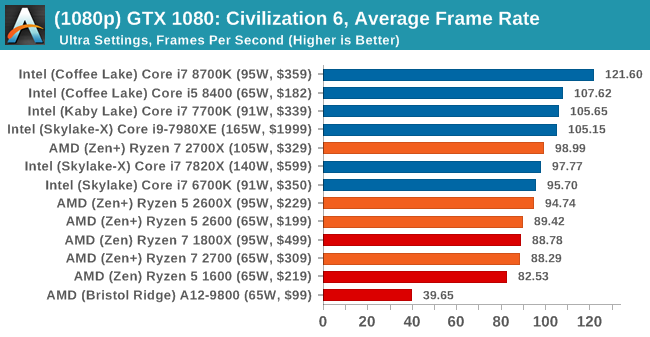

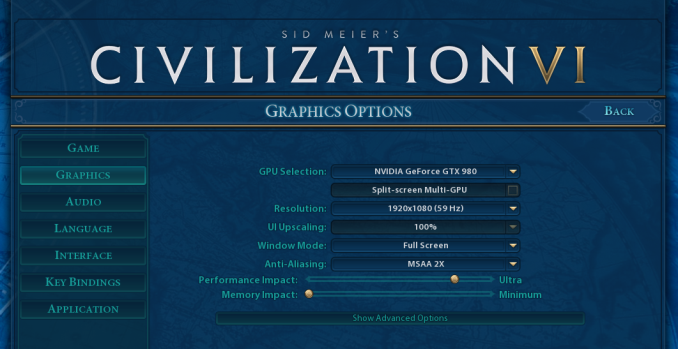

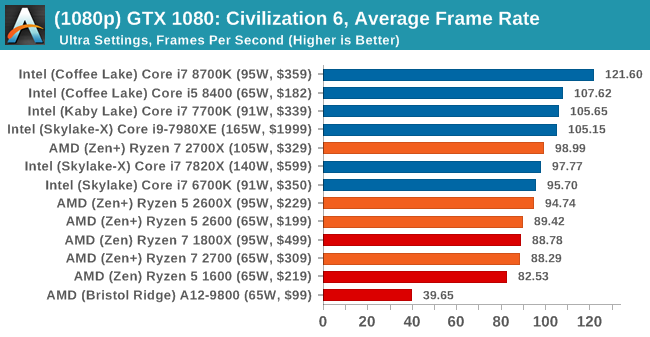

At both 1920x1080 and 4K resolutions, we run the same settings. Civilization 6 has sliders for MSAA, Performance Impact and Memory Impact. The latter two refer to detail and texture size respectively, and are rated from 0 (lowest) to 5 (extreme). We run our Civ6 benchmark in position four for performance (ultra) and 0 on memory, with MSAA set to 2x.

For reviews where we include 8K and 16K benchmarks (Civ6 allows us to benchmark extreme resolutions on any monitor) on our GTX 1080, we run the 8K tests with settings similar to the 4K tests, but the 16K tests are set to the lowest option for Performance.
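To give a sense of why the 16K run drops to the lowest Performance option, the short sketch below compares raw pixel counts per frame across the four test resolutions. The exact pixel dimensions for 4K, 8K and 16K are assumptions (the usual 16:9 figures); only 1920x1080 is stated in the text, and pixel count is only a rough proxy for GPU load.

```python
# Rough pixel-count comparison for the four test resolutions.
# ASSUMPTION: 4K, 8K and 16K use the common 16:9 dimensions below;
# the article itself only spells out 1920x1080.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "4K": (3840, 2160),
    "8K": (7680, 4320),
    "16K": (15360, 8640),
}


def pixel_count(width, height):
    """Return the number of pixels rendered per frame."""
    return width * height


def relative_load(base="1080p"):
    """Return each resolution's pixel count as a multiple of the base."""
    base_pixels = pixel_count(*RESOLUTIONS[base])
    return {
        name: pixel_count(w, h) / base_pixels
        for name, (w, h) in RESOLUTIONS.items()
    }


if __name__ == "__main__":
    for name, ratio in relative_load().items():
        w, h = RESOLUTIONS[name]
        print(f"{name:>5}: {w}x{h} = {pixel_count(w, h):,} pixels "
              f"({ratio:.0f}x 1080p)")
```

Each step up quadruples the pixels per frame under these assumed dimensions, which is consistent with relaxing the detail setting at the top resolution.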

All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance (charts at 1080p, 4K, 8K and 16K)

545 Comments

jjj - Thursday, April 19, 2018 - link

I was wondering about gaming, so there is no mistake there as Ryzen 2 seems to top Intel.

As of right now, I don't seem to find memory specs in the review yet. Safe to assume you did as always, highest non-OC, so Ryzen is using faster DRAM?

Also yet to spot memory latency, any chance you have some numbers at 3600MHz vs Intel? Thanks.

jjj - Thursday, April 19, 2018 - link

And just between us, would be nice to have some Vega gaming results under DX12.

aliquis - Thursday, April 19, 2018 - link

Would be nice if any reviewer actually benchmarked storage devices, maybe even virtualization, because then we'd see meltdown and spectre mitigation performance. Then again do AMD have any for spectre v2 yet? If not who knows what that will do.

HStewart - Thursday, April 19, 2018 - link

I notice that the systems had higher memory, but for me I believe single threaded performance is more important than more cores. But it would be biased if one platform is OC'd more than another. Personally I don't overclock - except for what is provided with the CPU like Turbo mode.

One thing that I foresee in the future is Intel coming out with 8 core Coffee Lake.

But at least it appears one thing that is over is this Meltdown/Spectre stuff.

Lolimaster - Thursday, April 19, 2018 - link

Intel 8 core CL won't stop the bleeding, lose more profits making them "cheap" vs a new Ryzen 7nm with at least 10% more clocks and 10% more IPC, RIP.

HStewart - Thursday, April 19, 2018 - link

I just have to agree to disagree on that statement - especially on the "cheap" statement.

ACE76 - Thursday, April 19, 2018 - link

CL can't scale to 8 cores...not without some serious changes to its architecture...Intel is in some trouble with this Ryzen refresh...also worth noting is that 7nm Ryzen 2 will likely bring a considerable performance jump while Intel isn't sitting on anything worthwhile at the moment.

Alphasoldier - Friday, April 20, 2018 - link

All Intel's 8cores in HEDT except SkylakeX are based on their year-older architecture with a bigger cache and the quad channel.

So if Intel have the need, they will simply make a CL 8core. 2700X is pretty hungry when OC'd, so Intel don't have to worry at all about its power consumption.

moozooh - Sunday, April 22, 2018 - link

> 2700X is pretty hungry when OC'd

And Intel chips aren't? If Zen+ is already on Intel's heels for both performance per watt and raw frequency, a 7nm chip with improved IPC and/or cache is very likely going to have them pull ahead by a significant margin. And even if it won't, it's still going to eat into Intel's profit as their next tech is 10nm vs. AMD's 7nm, meaning more optimal wafer estate utilization for the latter.

AMD has really climbed back at the top of their game; I've been in the Intel camp for the last 12 years or so, but the recent developments throw me way back to K7 and A64 days. Almost makes me sad that I won't have any reason to move to a different mobo in the next 6–8 years or so.

mapesdhs - Friday, March 29, 2019 - link

Amusing to look back given how things panned out. So yes, Intel released the 9900K, but it was 100% more expensive than the 2700X. :D A complete joke. And meanwhile tech reviewers raved about a measly 5 to 5.2 oc, on a chip that already has a 4.7 max turbo (major yawn fest), focusing on specific 1080p gaming tests that gave silly high fps numbers favoured by a market segment that is a tiny minority. Then what happens, RTX comes out and pushes the PR focus right back down to 60Hz. :D

I wish people would stop drinking the Intel/NVIDIA Kool-Aid. AMD does it as well sometimes, but it's bizarre how uncritical tech reviewers often are about these things. The 9900K dragged mainstream CPU pricing up to HEDT levels; epic fail. Some said oh but it's great for poorly optimised apps like Premiere, completely ignoring the "poorly optimised" part (ie. why the lack of pressure to make Adobe write better code? It's weird to justify an overpriced CPU on the back of a pro app that ought to run a lot better on far cheaper products).