The AMD 2nd Gen Ryzen Deep Dive: The 2700X, 2700, 2600X, and 2600 Tested

by Ian Cutress on April 19, 2018 9:00 AM EST

Translating to IPC: All This for 3%?

Contrary to popular belief, increasing IPC is difficult. Attempting to ensure that each execution port is fed every cycle requires wide decoders, large out-of-order queues, fast caches, and the right execution port configuration. It might sound easy to pile it all on, but both physics and economics get in the way: the chip still has to be thermally efficient, and it has to make money for the company. Every generational design update goes for what is called the ‘low-hanging fruit’: the identified changes that give the most gain for the smallest effort. Reducing cache latency is rarely the easiest task, and to non-semiconductor engineers (myself included), it sounds like a lot of work for a small gain.

For our IPC testing, we use the following rules. Each CPU is allocated four cores, without extra threading, and power modes are disabled so that the cores run at a single fixed frequency. The DRAM is set to what the processor officially supports: DDR4-2933 for the new CPUs and DDR4-2666 for the previous generation. I have recently seen threads disputing whether this is fair, but this is an IPC test, not an instruction efficiency test. Official DRAM support is part of the hardware specification, just as much as the size of the caches or the number of execution ports. Running the two CPUs at the same DRAM frequency gives an unfair advantage to one of them, because one ends up with a memory overclock or underclock, which deviates from the intended design.

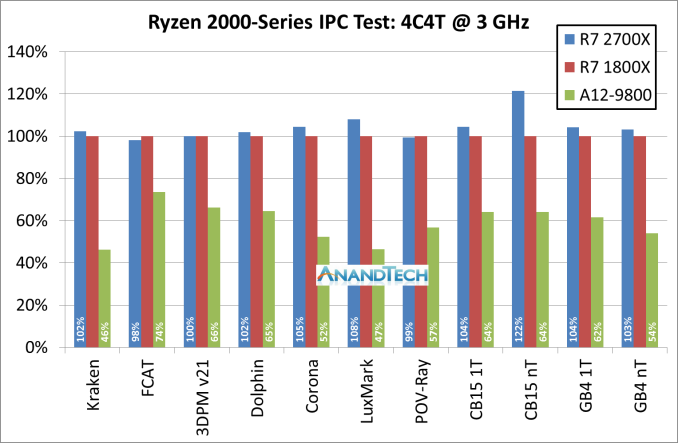

So in our test, we take the new Ryzen 7 2700X, the first generation Ryzen 7 1800X, and the pre-Zen Bristol Ridge based A12-9800, which sits on the AM4 platform and uses DDR4. We set each processor to four cores, no multi-threading, and 3.0 GHz, then ran through some of our tests.

For this graph we have set the first generation Ryzen 7 1800X as our 100% marker, with the blue columns showing the Ryzen 7 2700X. The problem with trying to identify a 3% IPC increase is that 3% could easily fall within the noise of a benchmark run: if the caches are not fully warmed before the run, for example, performance can differ. As shown above, a good number of tests fall within a +/- 2% range.

However, for compute-heavy tasks, there are 3-4% benefits: Corona, LuxMark, Cinebench and Geekbench are the ones here. We haven’t included the Geekbench sub-test results in the graph above, but most of those fall into the 2-5% category for gains.

If we take out the Cinebench R15 nT result and the Geekbench memory tests, the average across the remaining tests comes out to a +3.1% gain for the new Ryzen 7 2700X. That sounds bang on the money for what AMD stated it would do.
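The normalization and averaging described above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not the actual test harness: the function names and test names are hypothetical, the scores are made-up placeholders rather than measured results, and it assumes every benchmark is higher-is-better.

```python
from statistics import mean


def relative_scores(baseline, candidate):
    """Express each candidate score as a percentage of the baseline (baseline = 100%)."""
    return {
        test: 100.0 * candidate[test] / baseline[test]
        for test in baseline
        if test in candidate
    }


def average_gain(relative, exclude=()):
    """Arithmetic mean of the gains over 100%, skipping any excluded tests."""
    gains = [pct - 100.0 for test, pct in relative.items() if test not in exclude]
    return mean(gains)


# Hypothetical placeholder scores, NOT measured results.
baseline_scores = {"test_a": 200.0, "test_b": 50.0, "test_c": 1000.0, "outlier_nt": 400.0}
candidate_scores = {"test_a": 206.0, "test_b": 51.0, "test_c": 1040.0, "outlier_nt": 488.0}

rel = relative_scores(baseline_scores, candidate_scores)
avg_all = average_gain(rel)
avg_trimmed = average_gain(rel, exclude={"outlier_nt"})

print(f"average gain, all tests:        {avg_all:+.2f}%")
print(f"average gain, outlier excluded: {avg_trimmed:+.2f}%")
```

With these placeholder numbers, a single large outlier pulls the plain average well above the typical per-test gain, which is why the outlying results are set aside before quoting a headline figure.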

Cycling back to that Cinebench R15 nT result, which showed a 22% gain: we also ran some other IPC tests at 3.0 GHz with 8C/16T (which we couldn’t compare to Bristol Ridge), and a few of those also showed 20%+ gains. This is probably a sign that AMD has also adjusted how it manages simultaneous multi-threading, although this requires further testing.

AMD’s Overall 10% Increase

With some of the benefits of the 12LP manufacturing process, a few editors internally have questioned exactly why AMD hasn’t redesigned certain elements of the microarchitecture to take advantage. Ultimately it would appear that the ‘free’ frequency boost is worth just putting the same design in – as mentioned previously, the 12LP design is based on 14LPP with performance bump improvements. In the past it might not have been mentioned as a separate product line. So pushing through the same design is an easy win, allowing the teams to focus on the next major core redesign.

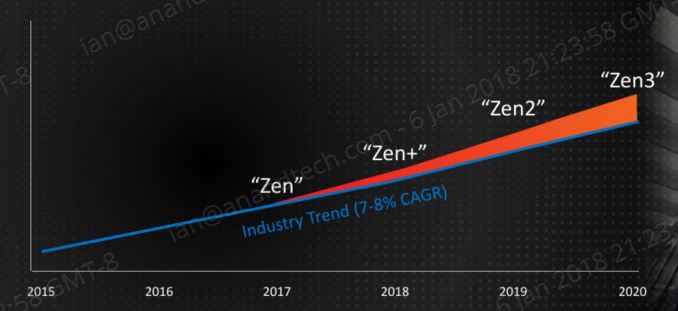

That all being said, AMD has already stated its intentions for the Zen+ core design. Going back to CES at the beginning of the year, AMD stated that it wanted Zen+ and future products to go above and beyond the ‘industry standard’ of a 7-8% performance gain each year.

Clearly 3% IPC is not enough, so AMD is combining that gain with the +250 MHz increase, which is about another 6% in peak frequency, along with better turbo performance from Precision Boost 2 / XFR 2. Together this comes to about 10%, on paper at least. Benchmarks to follow.
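As a rough check on that paper figure, the IPC and frequency gains compound multiplicatively rather than simply adding. The sketch below assumes performance scales linearly with clock speed, which real workloads only approximate; the function name is hypothetical, and the turbo behavior of Precision Boost 2 / XFR 2 is not modeled.

```python
def combined_gain(ipc_gain, freq_gain):
    """Compound two fractional gains, assuming performance = IPC x frequency."""
    return (1.0 + ipc_gain) * (1.0 + freq_gain) - 1.0


# 3% IPC and roughly 6% peak frequency, the figures quoted in the text.
total = combined_gain(0.03, 0.06)
print(f"combined gain: {total:.1%}")  # prints "combined gain: 9.2%"
```

Under that assumption the two quoted figures land a little over 9%, so any improvement in sustained turbo clocks would be what carries the total to the roughly 10% mark.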

545 Comments

YukaKun - Saturday, April 21, 2018 - link

Oh, I'm actually curious about your experience with all the systems.

I'm still running my i7 2700K at ~4.6Ghz. I do agree I haven't felt that it's a ~2012 CPU and it does everything pretty damn well still, but I'd like to know if you have noticed a difference between the new AMD and your Sandy Bridge. Same for when you assemble the 2700X.

I'm trying to find an excuse to get the 2700X, but I just can't find one, haha.

Cheers!

Luckz - Monday, April 23, 2018 - link

The once in a lifetime chance to largely keep your CPU name (2700K => 2700X) should be all the excuse you need.

YukaKun - Monday, April 23, 2018 - link

That is so incredibly superficial and dumb... I love it!

Cheers!

mapesdhs - Monday, April 23, 2018 - link

YukaKun, your 2700K is only at 4.6? Deary me, should be 5.0 and proud, doable with just a basic TRUE and one fan. 8) For reference btw, a 2700K at 5GHz gives the same threaded performance as a 6700K at stock.

And I made a typo in my earlier reply, mentioned the wrong XEON model, should have been the 2680 V2.

YukaKun - Tuesday, April 24, 2018 - link

For daily usage and stability, I found that 4.6Ghz worked best in terms of noise/heat/power ratios.

I also did not disable any power saving features, so it does not work unnecessarily when not under heavy load.

I'm using AS5 with a TT Frio (the original one) on top, so it's whisper quiet at 4.6Ghz and I like it like that. When I made it work at 5Ghz, I found I had to have the fans near 100%, so it wasn't something I'd like, TBH.

But, all of this to say: yes, I've done it, but settled with 4.6Ghz.

Cheers!

mapesdhs - Friday, March 29, 2019 - link

(an old thread, but in case someone comes across it...)

I use dynamic vcore so I still get the clock/voltage drops when idle. I'm using a Corsair H80 with 2x NDS 120mm PWM, so also quiet even at full load; no need for such OTT cooling to handle the load heat, but using an H80 means one can have low noise as well. An ironic advantage of the lower thermal density of the older process sizes. Modern CPUs with the same TDP dump it out in a smaller area, making it more difficult to keep cool.

Having said that, I've been recently pondering an upgrade to have much better general idle power draw and a decent bump for threaded performance. Considering a Ryzen 5 2600 or 7 2700, but might wait for Zen2, not sure yet.

moozooh - Sunday, April 22, 2018 - link

No, it might have to do with the fact that the 8350K has 1.5x the cache size and beastly per-thread performance that is also sustained at all times, so it doesn't have to switch from a lower-powered state (which the older CPUs were slower at), nor does it taper off as other cores get loaded, which is most noticeable on the things Samus mentioned, i.e. "boot times, app launches and gaming". Boot times and app launches are both essentially single-thread tasks with no prior context, and gaming is where a CPU upgrade like that will improve worst-case scenarios by at least an order of magnitude, which is really what's most noticeable.

For instance, if your monitor is 60Hz and your average framerate is 70, you won't notice the difference between 60 and 70; you will only notice the time spent under 60. Even a mildly overclocked 8350K is still one of the best gaming CPUs for this reason, easily rivaling or outperforming previous-gen Ryzens in most cases and often being on par with the much more expensive 8700K where thread count isn't as important as per-thread performance for responsiveness and eliminating stutters. When pushed to or above 5 GHz, I'm reasonably certain it will still give many of the newer, more expensive chips a run for their money.

spdragoo - Friday, April 20, 2018 - link

Memory prices? Memory prices are still pretty much the way they've always been:

-- faster memory costs (a little) more than slower memory

-- larger memory sticks/kits cost (a little) more than smaller sticks/kits

-- last-gen RAM (DDR3) is (very slightly) cheaper than current-gen RAM (DDR4)

I suppose you can wait 5 billion years for the Sun to fade out, at which point all RAM (or whatever has replaced it by then) will have the same cost ($0...since no one will be around to buy or sell it)...but I don't think you need to worry about that.

Ferrari_Freak - Friday, April 20, 2018 - link

You didn't write anything about price there... All you've said is that relative pricing for things is the same as it has always been, and that's no surprise.

The $$$ cost of any given stick is more than it was a year or two ago. 2x8GB DDR4-3200 G.Skill Ripjaws V is $180 on Newegg today. It was $80 two years ago. Clearly not the way they've always been...

James5mith - Friday, April 20, 2018 - link

2x16GB Crucial DDR4-2400 SO-DIMM kit.

https://www.amazon.com/gp/product/B019FRCV9G/

November 29th 2016 (when I purchased): $172

Current Amazon price for exact same kit: $329