AMD Ryzen 5 2400G and Ryzen 3 2200G Core Frequency Scaling: An Analysis

by Gavin Bonshor on June 20, 2018 10:05 AM EST

Posted in: CPUs, AMD, Zen, APU, Vega, Ryzen, Ryzen 5, Ryzen 3, Scaling, CPU Frequency, Ryzen 3 2200G, Ryzen 5 2400G

CPU Performance

As stated on the first page, here we take both APUs from 3.5 GHz to 4.0 GHz in 100 MHz increments and run our testing suite at each stage. This is a 14.3% increase in clock speed, and it is our CPU testing that is likely to show the best linearity in improvement.
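As a quick check on the arithmetic, the sketch below lists the clock steps described above and the percentage gain of each over the 3.5 GHz baseline. The names `BASE_MHZ`, `TOP_MHZ`, `STEP_MHZ`, and `pct_gain` are made up for this illustration and are not part of our test tooling.

```python
# Clock steps from the text: 3.5 GHz to 4.0 GHz in 100 MHz increments.
BASE_MHZ = 3500
TOP_MHZ = 4000
STEP_MHZ = 100

steps_mhz = list(range(BASE_MHZ, TOP_MHZ + 1, STEP_MHZ))


def pct_gain(value, baseline):
    """Percentage increase of value over baseline."""
    return (value - baseline) / baseline * 100.0


for mhz in steps_mhz:
    print(f"{mhz / 1000:.1f} GHz: +{pct_gain(mhz, BASE_MHZ):.1f}% over base")
```

The last step, 4.0 GHz against 3.5 GHz, gives the 14.3% figure that the results on this page are compared against.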

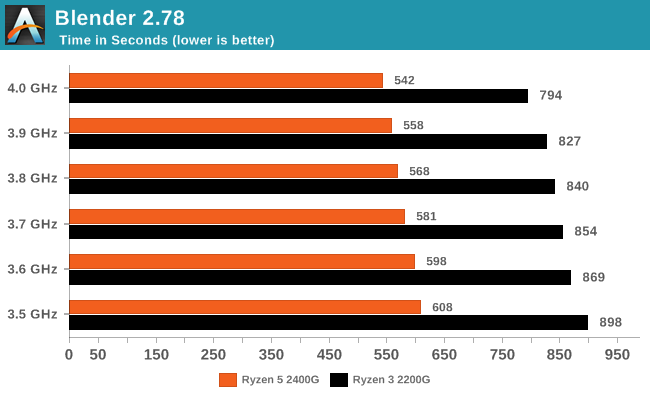

Rendering - Blender 2.78: link

For a renderer that has been around for what seems like ages, Blender is still a highly popular tool. We managed to wrap up a standard workload into the February 5 nightly build of Blender and measure the time it takes to render the first frame of the scene. Because it is one of the bigger open source tools out there, both AMD and Intel work actively to help improve the codebase, for better or for worse on their own or each other's microarchitecture.

The Ryzen 5 2400G scored a +12.1% increase in throughput, while the Ryzen 3 2200G did a bit better at +13.1%.

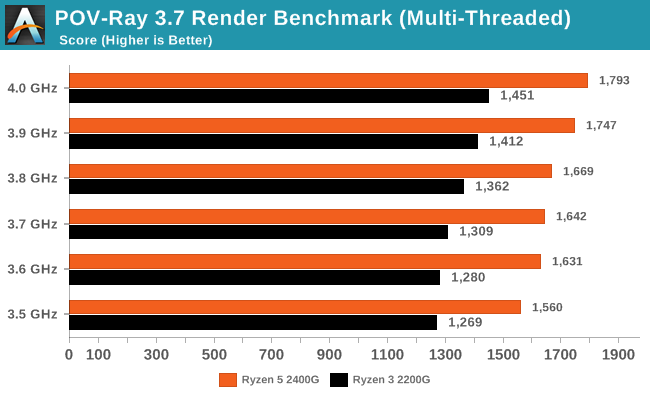

Rendering – POV-Ray 3.7: link

The Persistence of Vision Ray Tracer, or POV-Ray, is a freeware package for, as the name suggests, ray tracing. It is a pure renderer, rather than modeling software, but the latest beta version contains a handy benchmark for stressing all processing threads on a platform. We have been using this test in motherboard reviews to test memory stability at various CPU speeds to good effect – if it passes the test, the IMC in the CPU is stable for a given CPU speed. As a CPU test, it runs for approximately 1-2 minutes on high-end platforms.

The Ryzen 5 2400G gets a +14.9% bump in POV-Ray, compared to the +14.3% we get with the 2200G, which is spot on with the frequency gain.

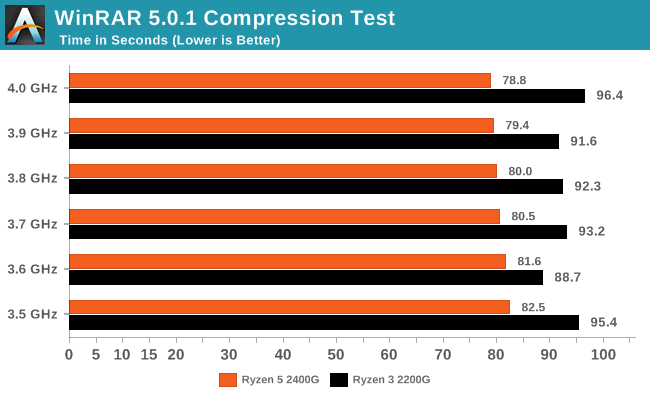

Compression – WinRAR 5.4: link

Our WinRAR test from 2013 is updated to the latest version of WinRAR at the start of 2014. We compress a set of 2867 files across 320 folders totaling 1.52 GB in size – 95% of these files are small typical website files, and the rest (90% of the size) are small 30-second 720p videos.
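To give a feel for the file mix, here is a rough back-of-the-envelope sketch built only from the figures quoted above. It assumes decimal units (1 GB = 10^9 bytes) and treats the 95% and 90% splits as exact, which they almost certainly are not, so the averages are ballpark values only.

```python
# Figures quoted in the text; the splits are treated as exact for illustration.
TOTAL_FILES = 2867
TOTAL_BYTES = 1_520_000_000      # 1.52 GB, assuming decimal gigabytes
SMALL_FILE_SHARE = 0.95          # share of the file count that is small web files
VIDEO_SIZE_SHARE = 0.90          # share of the total size taken by the videos

small_files = round(TOTAL_FILES * SMALL_FILE_SHARE)
video_files = TOTAL_FILES - small_files

video_bytes = TOTAL_BYTES * VIDEO_SIZE_SHARE
small_bytes = TOTAL_BYTES - video_bytes

avg_small_kb = small_bytes / small_files / 1e3
avg_video_mb = video_bytes / video_files / 1e6

print(f"{small_files} small files, roughly {avg_small_kb:.1f} KB each")
print(f"{video_files} video files, roughly {avg_video_mb:.1f} MB each")
```

Under those assumptions the workload is a large number of very small files plus a much smaller number of multi-megabyte videos that make up most of the data.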

For this test, the Ryzen 5 2400G scaled only partially with the frequency gain (+4.7%), while the Ryzen 3 2200G results jumped around a bit. WinRAR is highly memory sensitive, which is partly why the 2400G scored a smaller gain, but it would seem that other factors came into play with the 2200G.

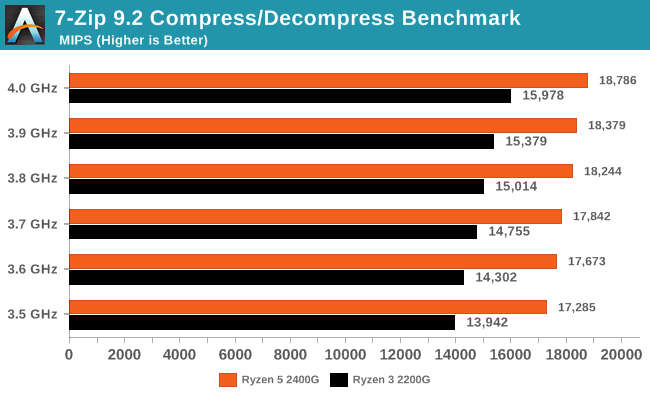

Synthetic – 7-Zip 9.2: link

As an open source compression tool, 7-Zip is a popular tool for making sets of files easier to handle and transfer. The software offers up its own benchmark, for which we report the result.

7-Zip is another benchmark that can have other bottlenecks, like memory, and as a result we see only a +8.7% gain on the 2400G. The 2200G, however, gets a full +14.6% gain in performance.

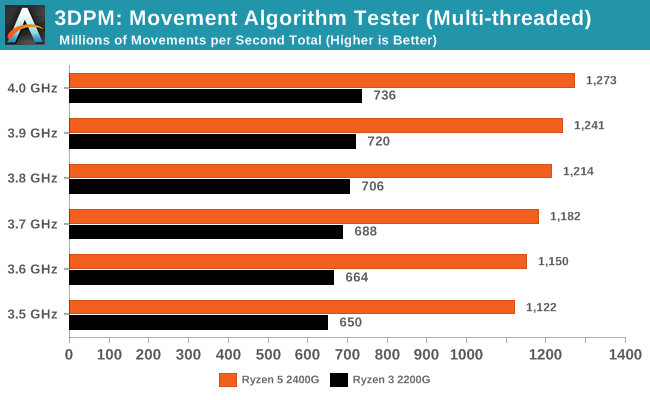

Point Calculations – 3D Movement Algorithm Test: link

3DPM is a self-penned benchmark, taking basic 3D movement algorithms used in Brownian Motion simulations and testing them for speed. High floating point performance, MHz, and IPC win in the single thread version, whereas the multithread version has to handle the threads and loves more cores. For a brief explanation of the platform agnostic coding behind this benchmark, see my forum post here.
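For readers unfamiliar with this kind of workload, the sketch below shows the general idea of a 3D random-movement loop: each particle takes unit-length steps in uniformly random directions, and the loop is timed. This is not the 3DPM source code. The function names, the sampling method (uniform z plus a uniform angle), and the particle and step counts are all assumptions chosen for illustration.

```python
import math
import random
import time


def random_unit_step(rng):
    """Return a direction drawn uniformly from the surface of the unit sphere."""
    z = rng.uniform(-1.0, 1.0)
    theta = rng.uniform(0.0, 2.0 * math.pi)
    r = math.sqrt(1.0 - z * z)
    return r * math.cos(theta), r * math.sin(theta), z


def move_particles(n_particles, n_steps, seed=0):
    """Move each particle n_steps unit steps from the origin; return final positions."""
    rng = random.Random(seed)
    positions = [[0.0, 0.0, 0.0] for _ in range(n_particles)]
    for _ in range(n_steps):
        for pos in positions:
            dx, dy, dz = random_unit_step(rng)
            pos[0] += dx
            pos[1] += dy
            pos[2] += dz
    return positions


# Time a small run; the sizes here are arbitrary and far smaller than a real benchmark.
start = time.perf_counter()
final_positions = move_particles(n_particles=100, n_steps=1000)
elapsed = time.perf_counter() - start
print(f"Moved {len(final_positions)} particles in {elapsed:.3f} s")
```

The inner loop is almost pure floating point math with little memory traffic, which is the kind of code that tends to track core frequency closely.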

3DPM scales very well over cores and threads, being more latency dependent than anything else. The 2400G nets a +13.4% gain in performance up to 4.0 GHz, and the 2200G gets a similar +13.2% gain as well.
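One way to compare the results so far is to divide each reported gain by the 14.3% clock increase, so that 1.0 means perfectly linear scaling. The sketch below does this for the percentages quoted in the text above. The `scaling_efficiency` helper and the table layout are illustrative only, and the 2200G WinRAR entry is left empty because no single figure is quoted for it.

```python
# Clock gain from 3.5 GHz to 4.0 GHz, in percent (about 14.3%).
FREQ_GAIN_PCT = (4.0 - 3.5) / 3.5 * 100.0

# Gains quoted in the text, in percent; None where no single figure is given.
reported_gains = {
    "Blender 2.78": {"2400G": 12.1, "2200G": 13.1},
    "POV-Ray 3.7": {"2400G": 14.9, "2200G": 14.3},
    "WinRAR 5.4": {"2400G": 4.7, "2200G": None},
    "7-Zip 9.2": {"2400G": 8.7, "2200G": 14.6},
    "3DPM": {"2400G": 13.4, "2200G": 13.2},
}


def scaling_efficiency(perf_gain_pct, freq_gain_pct=FREQ_GAIN_PCT):
    """Ratio of performance gain to clock gain; 1.0 means linear scaling."""
    return perf_gain_pct / freq_gain_pct


for test_name, gains in reported_gains.items():
    cells = []
    for chip, gain in gains.items():
        if gain is None:
            cells.append(f"{chip}: n/a")
        else:
            cells.append(f"{chip}: {scaling_efficiency(gain):.2f}")
    print(f"{test_name:<14} " + "  ".join(cells))
```

Values slightly above 1.0 are within what run-to-run variation could produce and should not be read as better-than-linear scaling.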

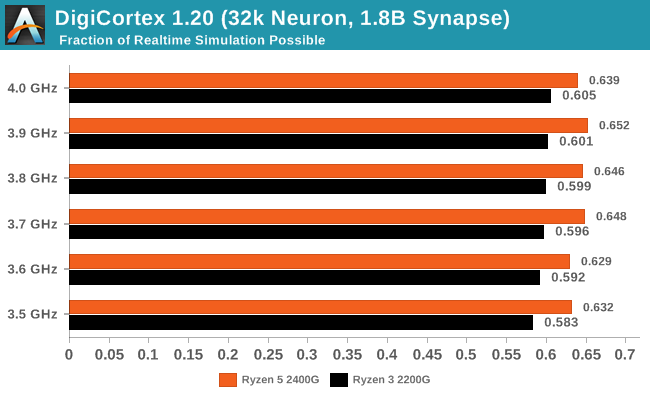

Neuron Simulation - DigiCortex v1.20: link

The newest benchmark in our suite is DigiCortex, a simulation of biologically plausible neural network circuits that models the activity of neurons and synapses. DigiCortex relies heavily on a mix of DRAM speed and computational throughput, indicating that systems which apply memory profiles properly should benefit and those that play fast and loose with overclocking settings might get some extra speed up. Results are taken during the steady state period in a 32k neuron simulation and represented as a function of the ability to simulate in real time (1.000x equals real-time).

DigiCortex is almost all about the memory performance, although it can sometimes be CPU bottlenecked. The 2400G ultimately hovers around 0.63x-0.65x simulation speed, whereas the 2200G does see a small gain of up to 4% from increasing the core frequency.
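To put the real-time factor in more familiar terms, the sketch below converts it into the wall-clock time needed per simulated second, using the definition that 1.000x equals real time. The function name is made up for this illustration.

```python
def wall_seconds_per_simulated_second(real_time_factor):
    """Wall-clock seconds needed to simulate one second at the given factor."""
    if real_time_factor <= 0:
        raise ValueError("real_time_factor must be positive")
    return 1.0 / real_time_factor


for factor in (0.63, 0.65, 1.00):
    seconds = wall_seconds_per_simulated_second(factor)
    print(f"{factor:.2f}x -> {seconds:.2f} s of wall time per simulated second")
```

At the 0.63x-0.65x range quoted for the 2400G, each simulated second takes a little over one and a half seconds to compute.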

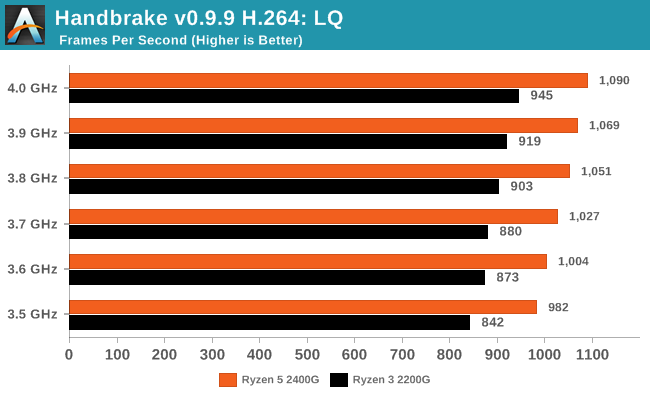

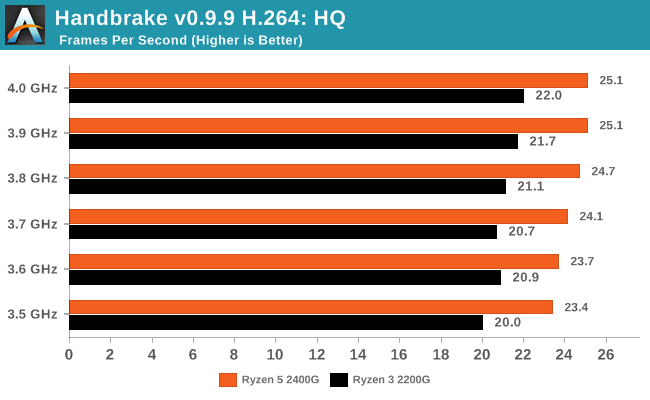

HandBrake v1.0.2 H264 and HEVC: link

As mentioned above, video transcoding (both encode and decode) is a hot topic in performance metrics as more and more content is being created. The first consideration is the standard in which the video is encoded, which can be lossless or lossy, and can trade performance for file-size, trade quality for file-size, or both. Alongside Google's favorite codec, VP9, there are two others that are taking hold: H264, the older codec, is practically everywhere and is designed to be optimized for 1080p video, and HEVC (or H265), which aims to provide the same quality as H264 at a lower file-size (or better quality for the same size). HEVC is important as 4K is streamed over the air, meaning fewer bits need to be transferred for the same quality content.

HandBrake is a favored tool for transcoding, and so our test regime takes care of three areas.

Low Quality/Resolution H264: Here we transcode a 640x266 H264 rip of a 2 hour film, and change the encoding from Main profile to High profile, using the very-fast preset.

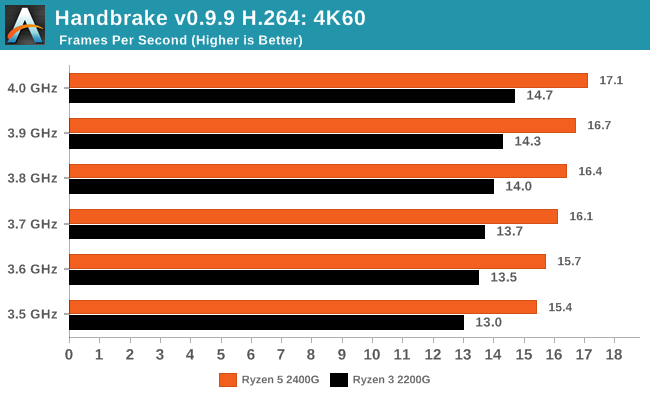

High Quality/Resolution H264: A similar test, but this time we take a ten-minute double 4K (3840x4320) file running at 60 Hz and transcode from Main to High, using the very-fast preset.

HEVC Test: Using the same video in HQ, we change the resolution and codec of the original video from 4K60 in H264 into 4K60 HEVC.

29 Comments

eastcoast_pete - Thursday, June 21, 2018 - link

I hear your point. What worries me about buying a second-hand GPU, especially nowadays, is that there is no way to know whether it was used to mine crypto 24/7 for the last 2-3 years or not. Semiconductors can wear out if used for thousands of hours both overvolted and at above normal temps; both can really affect not just the GPU, but especially also the memory.

The downside of a 980 or 970 (which wasn't as much at risk for cryptomining) is the now outdated HDMI standard. But yes, just for gaming, they can do.

Lolimaster - Friday, June 22, 2018 - link

A CM Hyper 212X is cheap and it's one of the best bang for buck coolers. 16GB of RAM is expensive if you want 2400 or 3000 CL15. 8GB is just too low, the iGPU needs some of it and many games (2015+) already need 6GB+ of system memory.

eastcoast_pete - Thursday, June 21, 2018 - link

Thanks for the link! Yes, those results are REALLY interesting. They used stock 2200G and 2400G, no delidding, no undervolting of the CPU, and on stock heatsinks, and got quite an increase, especially when they also used faster memory (and overclocked the memory speed as well). Downside was a notable increase in power draw and the stock cooler's fan running at full tilt.

So, Gavin's delidded APUs with their better heatsinks should do even better. The most notable thing in that German article was that the way to the overclock mountain (stable at 1600 MHz, stock cooler etc.) led through a valley of tears, i.e. the APUs crashed reliably when the iGPU was mildly overclocked, but then became stable again at higher iGPU clock speeds and voltage. They actually got some statement from AMD that AMD knows about that strange behavior, but apparently has no explanation for it. But then - running more stable if I run it even faster - bring it!

808Hilo - Friday, June 22, 2018 - link

A R3 is not really an amazing feat. It's a defective R7 with core, lane, fabric, pinout defects. The rest of the chip is run at low speed because the integrity is affected. Not sure anyone is getting their money's worth here.

Lolimaster - Friday, June 22, 2018 - link

I don't get these nonsense articles on an APU where the MAIN STAR IS THE IGPU. On some builds there are mixed results when the GPU frequency jumped around 200-1200 MHz (hence some funny low 0.1-1% lows in benchmarks).

It's all about OCing the iGPU, forgetting about the CPU part, and addressing/fixing the iGPU clock rubber band effect, sometimes disabling boost for the CPU, increasing SoC voltage, etc.

Galatian - Friday, June 22, 2018 - link

I'm going to question the results a little bit. To me it looks like the only "jump" in performance you get in games occurs whenever you hit an OC over the standard boost clock, e.g. 3700 MHz on the 2400G. I would suspect that you are simply preventing some core parking or some other aggressive power management feature while applying the OC. That would explain the odd numbers when you increase the OC.

That being said, I would say a CPU OC doesn't really make sense. An undervolting test to see where the sweet spot lies would be nice though.

melgross - Monday, June 25, 2018 - link

Frankly, the result of all these tests seems to be that overclocking isn’t doing much of anything useful, at least, not the small amounts we see here with AMD.

5% is never going to be noticed. Several studies done a number of years ago showed that you need at least an overall 10% improvement in speed for it to even be noticeable. 15% would be barely noticeable.

For heavy database workloads that take place over hours, or long rendering tasks, it will make a difference, but for gaming, which this article is overly interested in, nada!

Allan_Hundeboll - Monday, July 2, 2018 - link

Benchmarks @46W cTDP would be interesting

V900 - Friday, September 28, 2018 - link

The 2200G makes sense for an absolute budget system.

(Though if you're starting from rock bottom and also need to buy a cabinet, motherboard, RAM, etc. you'll probably be better off taking that money and buying a used computer. You can get some really good deals for less than $500.)

The 2400G however? Not so much. The price is too high and the performance too low to compete with an Intel Pentium/Nvidia 1030 solution.

Or if you want to spend a few dollars more and find a good deal: An Intel Pentium/Nvidia 1050.